MENASpeechBank: A Reference Voice Bank with Persona-Conditioned Multi-Turn Conversations for AudioLLMs

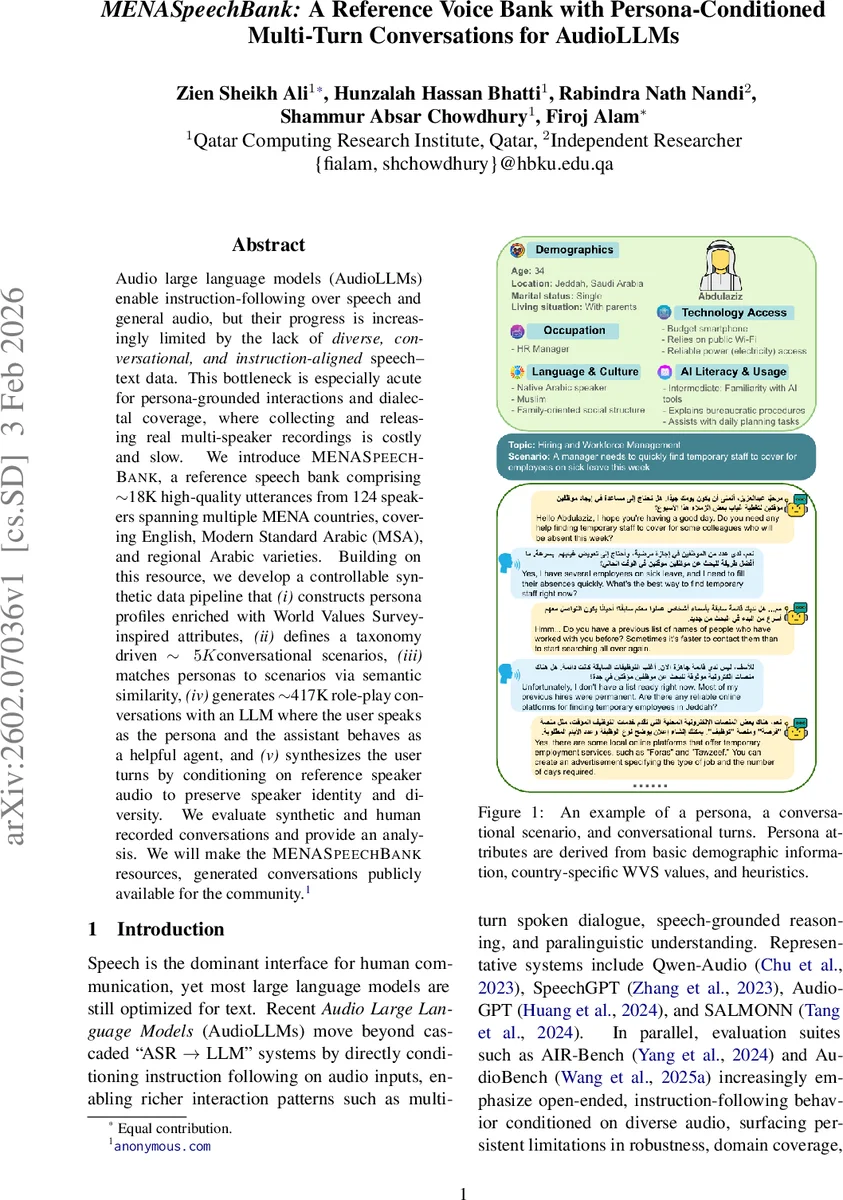

Audio large language models (AudioLLMs) enable instruction-following over speech and general audio, but progress is increasingly limited by the lack of diverse, conversational, instruction-aligned speech-text data. This bottleneck is especially acute for persona-grounded interactions and dialectal coverage, where collecting and releasing real multi-speaker recordings is costly and slow. We introduce MENASpeechBank, a reference speech bank comprising about 18K high-quality utterances from 124 speakers spanning multiple MENA countries, covering English, Modern Standard Arabic (MSA), and regional Arabic varieties. Building on this resource, we develop a controllable synthetic data pipeline that: (i) constructs persona profiles enriched with World Values Survey-inspired attributes, (ii) defines a taxonomy of about 5K conversational scenarios, (iii) matches personas to scenarios via semantic similarity, (iv) generates about 417K role-play conversations with an LLM where the user speaks as the persona and the assistant behaves as a helpful agent, and (v) synthesizes the user turns by conditioning on reference speaker audio to preserve speaker identity and diversity. We evaluate both synthetic and human-recorded conversations and provide detailed analysis. We will release MENASpeechBank and the generated conversations publicly for the community.

💡 Research Summary

The paper addresses a critical bottleneck in the development of Audio Large Language Models (AudioLLMs): the scarcity of diverse, multi‑turn, instruction‑aligned speech‑text pairs, especially those that capture persona grounding and dialectal variation in the Middle East and North Africa (MENA) region. To overcome this, the authors introduce MENASpeechBank, a two‑part data‑centric framework consisting of (1) a high‑quality reference voice bank and (2) a controllable synthetic data generation pipeline.

The reference voice bank contains approximately 18 000 utterances from 124 speakers covering English, Modern Standard Arabic (MSA), and four major Arabic dialects (Egyptian, North African, Gulf, Levantine). Rigorous filtering ensures low word‑error rates (WER ≤ 0.05) and verified dialect labels using automatic transcription (Whisper‑Small for English, Fanar for Arabic) and a dialect identification tool (Tamyiz).

For persona creation, the authors enrich each speaker’s basic metadata (country, gender, age, education) with attributes derived from the World Values Survey (WVS). They also sample additional demographic fields (name, city, occupation, marital status, household type), technology‑related variables (device, connectivity, AI competence), and a continuous OCEAN personality vector. This results in 469 distinct persona profiles, each summarized into a concise first‑person description generated by GPT‑4.1 and validated by a deterministic Persona Quality Index (PQI).

A hierarchical taxonomy of conversational domains is built from service‑oriented and knowledge‑oriented categories. For each leaf node, ten sub‑topics are generated via LLM prompting, yielding 900 topics and 4 521 concrete scenarios after near‑duplicate filtering. Personas are matched to scenarios using a hybrid similarity score that combines cosine similarity of sentence‑transformer embeddings (all‑MiniLM‑L6‑v2) with keyword overlap, ensuring that each persona is placed in a contextually appropriate scenario.

Dialogue generation leverages GPT‑4.1 to produce role‑play conversations where the user adopts the persona and the assistant acts as a helpful agent. User turns are synthesized into speech by conditioning a state‑of‑the‑art multi‑speaker TTS/voice‑cloning model on the reference speaker’s audio, preserving speaker identity, dialect, and prosodic characteristics. This pipeline yields roughly 417 000 multi‑turn conversations. Automatic quality checks (NISQA ≥ 0.5, lexical Jaccard similarity ≥ 0.05) filter the synthetic audio, and human listening tests confirm high fidelity and persona consistency.

For evaluation, the authors fine‑tune an AudioLLM (based on Qwen‑Audio) on three data configurations: (i) real recorded conversations, (ii) synthetic conversations, and (iii) a mix of both. Benchmarks include scenario‑driven dialogue completion and spoken question answering (SpokenQA). Models trained on synthetic data alone outperform those trained only on real data by +3.2 BLEU‑4 and +2.8 ROUGE‑L, with especially pronounced gains on dialect‑rich test sets. Ablation studies reveal that (a) removing speaker‑conditioned TTS degrades performance by >5 %, and (b) lowering persona‑scenario matching similarity from 0.9 to 0.7 reduces dialogue coherence substantially.

The contributions are threefold: (1) a publicly released, multi‑speaker MENA voice bank with multilingual and dialectal coverage; (2) a systematic method for constructing value‑grounded personas and aligning them with a rich scenario taxonomy; (3) an end‑to‑end pipeline that converts persona‑scenario pairs into high‑quality synthetic speech‑text instruction data, demonstrating measurable improvements for AudioLLM adaptation. The authors will release MENASpeechBank, the generated 417 K conversations, and all scripts, providing a valuable resource for future research in dialect identification, conversational voice assistants, and culturally aware speech technologies.

Comments & Academic Discussion

Loading comments...

Leave a Comment