SymPlex: A Structure-Aware Transformer for Symbolic PDE Solving

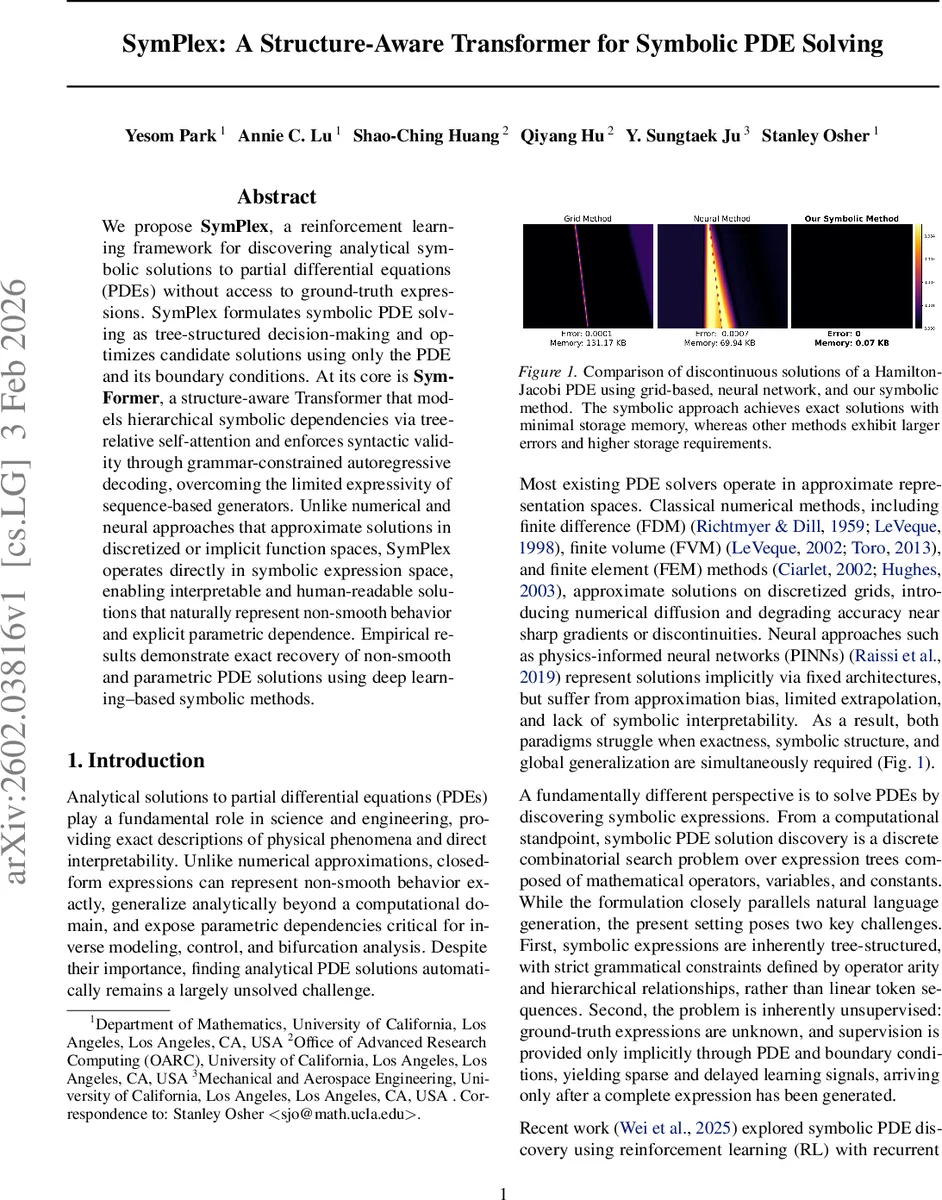

We propose SymPlex, a reinforcement learning framework for discovering analytical symbolic solutions to partial differential equations (PDEs) without access to ground-truth expressions. SymPlex formulates symbolic PDE solving as tree-structured decision-making and optimizes candidate solutions using only the PDE and its boundary conditions. At its core is SymFormer, a structure-aware Transformer that models hierarchical symbolic dependencies via tree-relative self-attention and enforces syntactic validity through grammar-constrained autoregressive decoding, overcoming the limited expressivity of sequence-based generators. Unlike numerical and neural approaches that approximate solutions in discretized or implicit function spaces, SymPlex operates directly in symbolic expression space, enabling interpretable and human-readable solutions that naturally represent non-smooth behavior and explicit parametric dependence. Empirical results demonstrate exact recovery of non-smooth and parametric PDE solutions using deep learning-based symbolic methods.

💡 Research Summary

SymPlex introduces a framework for automatically discovering analytical, symbolic solutions to partial differential equations (PDEs) without any ground‑truth expressions. The authors formulate symbolic PDE solving as a tree‑structured decision process: a candidate solution is represented by an abstract syntax tree (AST) whose nodes are operators, variables, parameters, or constants. The expression space is discrete and combinatorial, and no target expressions are available, so the only supervision comes from the PDE itself and its boundary/initial conditions.
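To make the AST representation concrete, here is a minimal sketch of how a prefix-order token sequence could be turned into a tree and printed back as a readable expression. The token vocabulary (`add`, `mul`, `sin`, `x`, `t`, `c`), the `ARITY` table, and the helper names are illustrative assumptions, not taken from the paper.

```python
from dataclasses import dataclass, field

# Hypothetical vocabulary: token -> number of operands (0 means leaf).
ARITY = {"add": 2, "mul": 2, "sin": 1, "x": 0, "t": 0, "c": 0}
INFIX = {"add": "+", "mul": "*"}


@dataclass
class Node:
    token: str
    children: list = field(default_factory=list)


def parse_prefix(tokens):
    """Build an AST from a complete prefix-order token sequence."""
    it = iter(tokens)

    def build():
        tok = next(it)
        # An operator consumes as many subtrees as its arity.
        return Node(tok, [build() for _ in range(ARITY[tok])])

    root = build()
    if next(it, None) is not None:
        raise ValueError("trailing tokens after a complete expression")
    return root


def to_infix(node):
    """Render the AST as a human-readable string."""
    if not node.children:
        return node.token
    if len(node.children) == 1:
        return f"{node.token}({to_infix(node.children[0])})"
    left, right = (to_infix(child) for child in node.children)
    return f"({left} {INFIX[node.token]} {right})"


tree = parse_prefix(["add", "mul", "c", "x", "sin", "t"])
print(to_infix(tree))
```

Here the six-token sequence encodes the candidate `c * x + sin(t)`, with `add` at the root and the leaves drawn from variables and a constant placeholder.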

The core of the system is SymFormer, a structure‑aware Transformer that departs from conventional sequence‑based models in three key ways. First, it employs tree‑relative self‑attention. For every pair of tokens (i, j), a discrete relation (self, parent, child, sibling, ancestor, other) is inferred from the partially built AST, and a learned embedding for that relation is added to the key vectors before the softmax. This lets operators attend directly to their operands, operands attend back to their parents, and siblings exchange information, capturing hierarchical dependencies that are invisible to standard attention, which sees only linear order.
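The relation lookup described above can be sketched as follows. This is only an illustration under stated assumptions: the `ARITY` table and function names are hypothetical, `relation(i, j, ...)` is read as "the role of token j as seen from token i", and non-child descendants are mapped to `other` because the summary lists only six relation types. The learned relation embeddings and the attention computation itself are omitted.

```python
# Hypothetical vocabulary: token -> number of operands (0 means leaf).
ARITY = {"add": 2, "mul": 2, "sin": 1, "x": 0, "t": 0, "c": 0}


def parents_from_prefix(tokens):
    """Return the parent index of each token in a (possibly partial) prefix sequence."""
    parents = []
    stack = []  # open operators as [token index, operands still missing]
    for i, tok in enumerate(tokens):
        parents.append(stack[-1][0] if stack else None)
        if stack:
            stack[-1][1] -= 1
        if ARITY[tok] > 0:
            stack.append([i, ARITY[tok]])
        # Close every operator whose operands are all started and finished.
        while stack and stack[-1][1] == 0:
            stack.pop()
    return parents


def relation(i, j, parents):
    """Classify token j relative to token i into one of six discrete relations."""
    if i == j:
        return "self"
    if parents[i] == j:
        return "parent"
    if parents[j] == i:
        return "child"
    if parents[i] is not None and parents[i] == parents[j]:
        return "sibling"
    # Walk upward from i's parent to look for j among the remaining ancestors.
    k = parents[i]
    while k is not None:
        if k == j:
            return "ancestor"
        k = parents[k]
    return "other"


tokens = ["add", "mul", "c", "x", "sin", "t"]
parents = parents_from_prefix(tokens)
matrix = [[relation(i, j, parents) for j in range(len(tokens))] for i in range(len(tokens))]
```

In a model, each entry of `matrix` would index a small embedding table whose vectors modify the keys for that (i, j) pair. Because `parents_from_prefix` works on incomplete sequences, the same lookup can be recomputed at every decoding step.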

Second, SymFormer integrates traversal‑aware positional encodings. While generating the expression in prefix order, sinusoidal encodings of the generation step are added to token embeddings, preserving depth and order information without introducing extra tree‑specific parameters. This helps the model differentiate nodes that occupy the same structural role but appear in different sub‑trees.
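As a rough illustration of this second component, the sketch below computes the standard sinusoidal encoding from the original Transformer, indexed by the generation step, and adds it to a token embedding. The base of 10000, the embedding width, and the function names are assumptions; the summary does not state the exact formula SymFormer uses.

```python
import math


def step_encoding(step, d_model, base=10000.0):
    """Sinusoidal encoding of a generation step (standard Transformer form, assumed here)."""
    enc = []
    for k in range(0, d_model, 2):
        angle = step / (base ** (k / d_model))
        enc.append(math.sin(angle))  # even dimension
        enc.append(math.cos(angle))  # odd dimension
    return enc[:d_model]  # trim in case d_model is odd


def add_step_encoding(embedding, step):
    """Add the step encoding to a token embedding, element by element."""
    enc = step_encoding(step, len(embedding))
    return [e + p for e, p in zip(embedding, enc)]


# Two occurrences of the same token at different steps get different inputs.
x_embedding = [0.1, -0.2, 0.3, 0.4]
first = add_step_encoding(x_embedding, step=2)
second = add_step_encoding(x_embedding, step=5)
```

Since the encoding is a fixed function of the step index, it adds no trainable parameters, which matches the summary's remark about avoiding extra tree-specific parameters.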

Third, the decoder is grammar‑constrained and depth‑aware. At each step the model consults the arity of the previously generated tokens and the remaining depth budget to construct a dynamic action space containing only syntactically valid next tokens. Degenerate sub‑expressions (e.g., x‑x, x/x) are filtered out, and leaf nodes are forced to be variables, parameters, or constants. This dramatically reduces the search space and guarantees that every generated prefix can be completed into a well‑formed expression.
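The dynamic action space can be sketched with a small stack that tracks open operators. Everything here is a simplified assumption for illustration: the vocabulary, the convention that the root sits at depth 0, and the degenerate-expression rule (only the second operand of `sub` or `div` is checked, and only against a leaf first operand). The paper's actual filters may be broader.

```python
# Hypothetical vocabulary: token -> number of operands (0 means leaf).
ARITY = {"add": 2, "sub": 2, "mul": 2, "div": 2, "sin": 1, "x": 0, "t": 0, "c": 0}


def valid_next_tokens(prefix, max_depth):
    """Return the set of tokens that may legally follow a prefix-order sequence."""
    stack = []  # open operators as [token, operands missing, head token of first operand]
    for k, tok in enumerate(prefix):
        if k > 0 and not stack:
            raise ValueError("prefix continues past a complete expression")
        if stack:
            top = stack[-1]
            if top[2] is None:
                top[2] = tok
            top[1] -= 1
        if ARITY[tok] > 0:
            stack.append([tok, ARITY[tok], None])
        while stack and stack[-1][1] == 0:
            stack.pop()

    if prefix and not stack:
        return set()  # expression is complete, nothing may follow

    depth = len(stack)  # depth of the node about to be generated
    # At the depth limit only leaves are allowed, so every prefix stays completable.
    allowed = {tok for tok, arity in ARITY.items() if arity == 0 or depth < max_depth}

    if stack:
        op, missing, first = stack[-1]
        # Block x - x and x / x style sub-expressions (simplified rule).
        if op in ("sub", "div") and missing == 1 and ARITY[first] == 0:
            allowed.discard(first)
    return allowed


print(sorted(valid_next_tokens(["sub", "x"], max_depth=2)))
print(sorted(valid_next_tokens(["add", "sin"], max_depth=2)))
```

In a decoder, the returned set would be turned into a mask that sets the logits of all other tokens to negative infinity before sampling, so invalid continuations receive zero probability.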

Because there is no explicit target expression, training is cast as reinforcement learning. The reward combines (i) the PDE residual ‖F
