Context Compression via Explicit Information Transmission

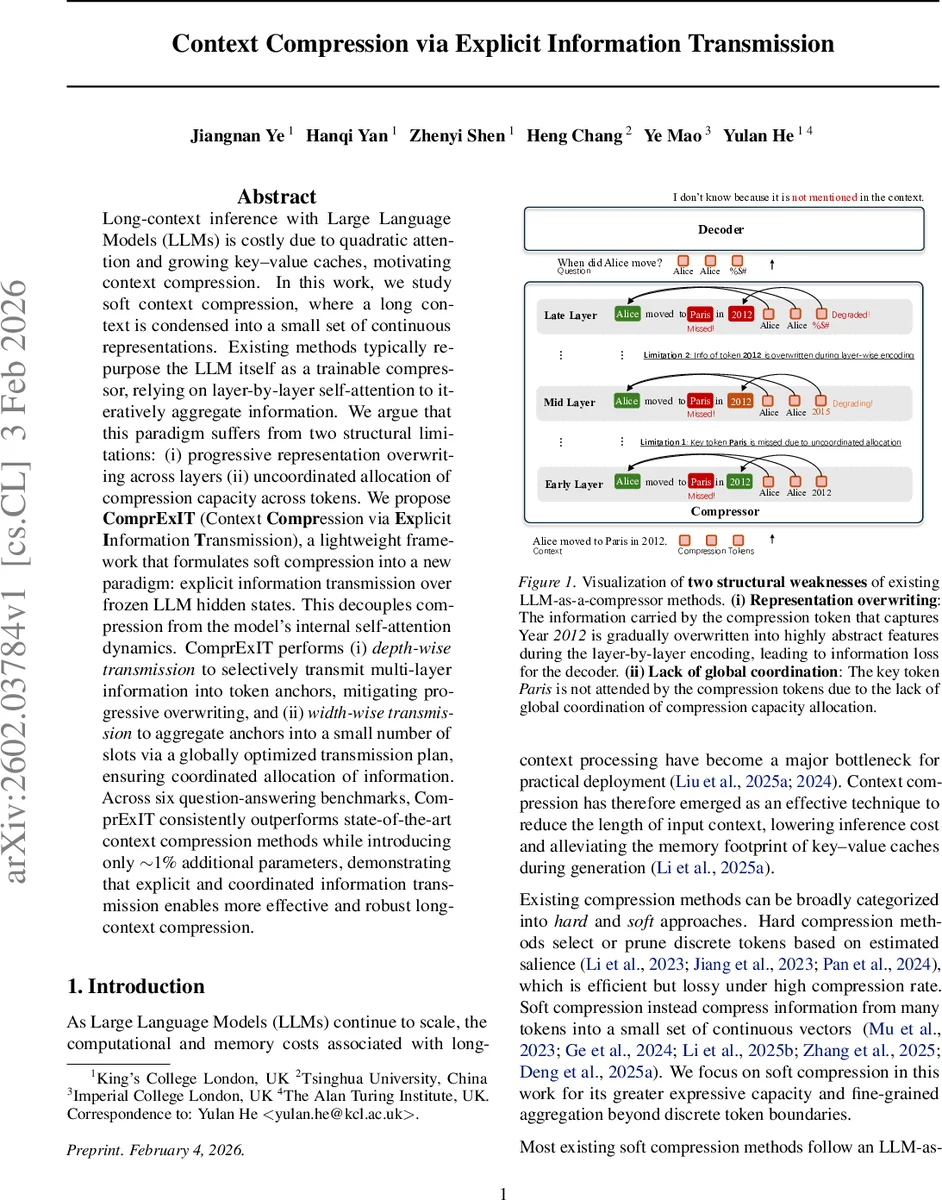

Long-context inference with Large Language Models (LLMs) is costly due to quadratic attention and growing key-value caches, motivating context compression. In this work, we study soft context compression, where a long context is condensed into a small set of continuous representations. Existing methods typically re-purpose the LLM itself as a trainable compressor, relying on layer-by-layer self-attention to iteratively aggregate information. We argue that this paradigm suffers from two structural limitations: (i) progressive representation overwriting across layers (ii) uncoordinated allocation of compression capacity across tokens. We propose ComprExIT (Context Compression via Explicit Information Transmission), a lightweight framework that formulates soft compression into a new paradigm: explicit information transmission over frozen LLM hidden states. This decouples compression from the model’s internal self-attention dynamics. ComprExIT performs (i) depth-wise transmission to selectively transmit multi-layer information into token anchors, mitigating progressive overwriting, and (ii) width-wise transmission to aggregate anchors into a small number of slots via a globally optimized transmission plan, ensuring coordinated allocation of information. Across six question-answering benchmarks, ComprExIT consistently outperforms state-of-the-art context compression methods while introducing only ~1% additional parameters, demonstrating that explicit and coordinated information transmission enables more effective and robust long-context compression.

💡 Research Summary

The paper tackles the growing computational burden of long‑context inference in large language models (LLMs), which stems from the quadratic cost of self‑attention and the expanding key‑value cache during generation. While hard token‑pruning methods reduce context length, they are inherently lossy and limited in compression ratio. Soft context compression, which condenses many tokens into a small set of continuous vectors, offers higher expressive capacity but most existing approaches treat the LLM itself as a trainable compressor. They introduce special “gist” or “memory” tokens and rely on layer‑by‑layer self‑attention updates to absorb information from the context.

The authors identify two fundamental structural weaknesses in this paradigm. First, the iterative layer‑wise updates cause a progressive overwriting of early‑layer information: representations that capture lexical or syntactic details are gradually transformed into highly abstract, generation‑oriented features, leading to a distribution mismatch between the final compression tokens and the decoder’s expected input space. Second, each compression token aggregates information independently, without any global coordination of the limited compression budget. Consequently, some context regions are redundantly covered while others are under‑represented, and the ordering of information can become non‑monotonic.

To overcome these issues, the authors propose a new compression paradigm that decouples compression from the LLM’s internal self‑attention. They keep the LLM frozen, extract its hidden states across all layers, and perform explicit information transmission over these cached representations. The transmission occurs along two orthogonal dimensions:

-

Depth‑wise transmission (inter‑layer). For each token position, a weighted mixture of its representations across all L layers is computed using learnable layer priors (w_\ell). A gating mechanism, conditioned on a shared linear projection and a learned layer embedding, produces soft gating scores (\alpha_{t,\ell}) that determine how much information from each layer flows into a token‑anchor vector (\tilde{h}_t). This explicit gating mitigates the progressive overwriting problem by allowing the compressor to selectively draw from low‑level lexical features or high‑level semantic abstractions as needed.

-

Width‑wise transmission (token‑to‑slot). The set of N token anchors is aggregated into K compression slots. The token sequence is uniformly partitioned into K local fields (F_k); each field’s mean forms a receiver representation (r_k), preserving locality and order. A utility matrix (U_{t,k}) is built from cosine similarity between transformed anchors and receivers. Each anchor also predicts an information capacity (\rho_t) (softmax over a linear layer), while each slot receives an equal share (\rho_k = 1/K). The final transmission plan (\Pi) is obtained by solving a constrained optimal‑transport problem that minimizes the total cost (C_{t,k}=1-U_{t,k}) subject to the capacity constraints. This global plan ensures that important tokens are distributed across slots without redundancy and that less important tokens can be discarded.

The resulting framework, named ComprExIT (Context Compression via Explicit Information Transmission), adds only about 1 % extra parameters to the base LLM (gating weights, projection matrices, and the transport solver).

Experimental evaluation is conducted on six question‑answering benchmarks (including NaturalQuestions, TriviaQA, HotpotQA, etc.) using a LLaMA‑2‑7B backbone. Across a range of compression ratios (K/N from 0.05 to 0.2), ComprExIT consistently outperforms prior soft‑compression methods such as Gist, ICAE, UniGist, Activation Beacon, and others, achieving absolute accuracy gains of 2–4 % on average. Ablation studies demonstrate that removing depth‑wise gating (replacing it with a simple average) dramatically degrades performance, confirming the importance of mitigating representation drift. Likewise, substituting the globally optimized transport plan with a random or greedy assignment eliminates the benefits of coordinated capacity allocation.

The authors also analyze the sensitivity to compression budget, showing that performance remains robust even at aggressive compression levels, and they provide visualizations of the transmission plan that reveal how tokens related to key entities (e.g., dates, locations) are preferentially routed to distinct slots.

Contributions and impact:

- Identification of representation overwriting and uncoordinated allocation as core limitations of existing LLM‑as‑compressor methods.

- Introduction of a novel, frozen‑LLM‑based compression paradigm that treats compression as explicit information transmission.

- Design of depth‑wise gated token anchors and a globally optimal width‑wise transport plan, together forming a lightweight yet powerful compressor.

- Empirical validation showing state‑of‑the‑art results with minimal overhead, and extensive analyses that deepen understanding of long‑context compression dynamics.

The paper suggests future directions such as extending the framework to other transformer variants (e.g., Longformer, Transformer‑XL), applying it to multimodal contexts, and learning more expressive transmission graphs. Overall, ComprExIT offers a compelling solution that separates compression from the model’s internal dynamics, enabling stable, coordinated, and efficient long‑context summarization for large language models.

Comments & Academic Discussion

Loading comments...

Leave a Comment