Test-Time Conditioning with Representation-Aligned Visual Features

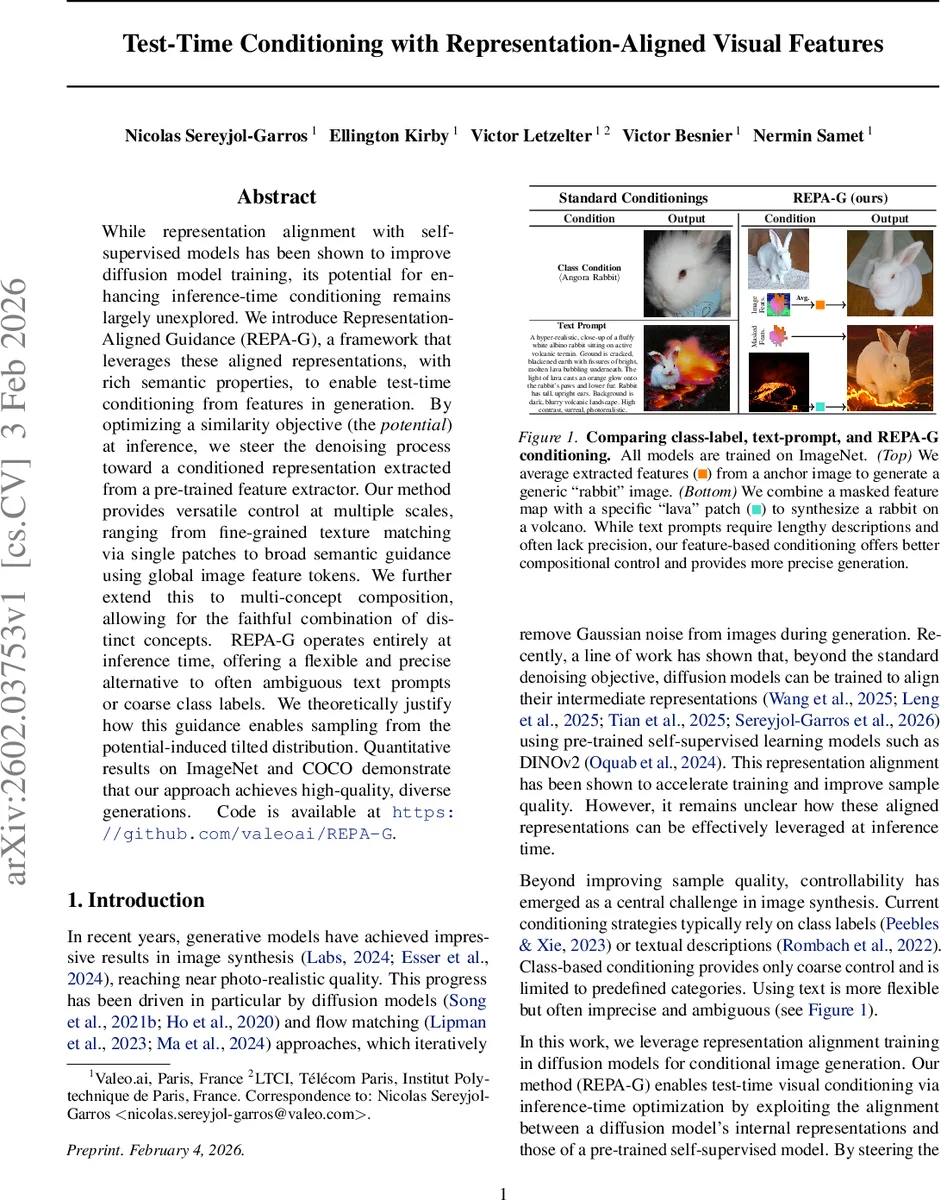

While representation alignment with self-supervised models has been shown to improve diffusion model training, its potential for enhancing inference-time conditioning remains largely unexplored. We introduce Representation-Aligned Guidance (REPA-G), a framework that leverages these aligned representations, with rich semantic properties, to enable test-time conditioning from features in generation. By optimizing a similarity objective (the potential) at inference, we steer the denoising process toward a conditioned representation extracted from a pre-trained feature extractor. Our method provides versatile control at multiple scales, ranging from fine-grained texture matching via single patches to broad semantic guidance using global image feature tokens. We further extend this to multi-concept composition, allowing for the faithful combination of distinct concepts. REPA-G operates entirely at inference time, offering a flexible and precise alternative to often ambiguous text prompts or coarse class labels. We theoretically justify how this guidance enables sampling from the potential-induced tilted distribution. Quantitative results on ImageNet and COCO demonstrate that our approach achieves high-quality, diverse generations. Code is available at https://github.com/valeoai/REPA-G.

💡 Research Summary

The paper introduces Representation‑Aligned Guidance (REPA‑G), a novel test‑time conditioning framework for diffusion‑based generative models. Traditional conditioning relies on class labels or textual prompts, which can be coarse or ambiguous. REPA‑G leverages the fact that many recent diffusion models are trained with representation alignment: an auxiliary loss forces intermediate latent features of the diffusion model to match those of a frozen self‑supervised vision backbone (e.g., DINOv2). This alignment creates a shared semantic feature space between the generator and the backbone.

During inference, REPA‑G extracts a target feature vector ϕ(x_c) from any reference image (or a masked region thereof) using the same backbone. A similarity potential V(h, h*) = ⟨h, h*⟩ is defined between the generator’s current latent representation h = (h_θ ∘ f_θ)(x_t, t) and the target ϕ(x_c). The gradient of this potential, scaled by a coefficient λ, is added to the score term in the stochastic differential equation (SDE) that drives the reverse diffusion process. Mathematically, the modified SDE becomes

dx_t = (v★(x_t, t) – t∇_x log p_t(x_t) – 2λt∇_x V((h_θ∘f_θ)(x_t, t), ϕ(x_c))) dt + √(2t) dW̄_t,

which the authors prove (under two assumptions: global optimality of the aligned model and vanishing Jensen gap) samples from a “tilted” distribution

\tilde p_0(x; x_c) ∝ p_0(x)·exp(λ·V(ϕ(x), ϕ(x_c))).

Thus, the generation is biased toward images whose backbone features are close to the conditioning features.

The framework supports three granularity levels: (1) global average tokens (the mean of all backbone features) for abstract concepts such as “rabbit” or “car”; (2) masked tokens that preserve spatial layout or pose; and (3) single‑patch tokens for fine‑grained texture or color control. By combining multiple tokens, REPA‑G can compose concepts (e.g., a rabbit on a lava background) without any additional training or architectural changes.

Empirical evaluation on ImageNet and COCO demonstrates that REPA‑G yields higher fidelity (lower FID) and better semantic alignment (higher CLIPScore) than standard text‑prompt or class‑label conditioning. Qualitative examples show precise texture matching and compositional control that text prompts struggle to achieve. A toy experiment validates the theoretical claim that the modified SDE indeed samples from the intended tilted distribution.

Ablation studies reveal that representation alignment is crucial: models trained without the alignment loss produce feature spaces that do not cluster semantically, making them unsuitable for conditioning. The authors also discuss the sensitivity to λ and the need for the model to be close to a global optimum; otherwise, the guidance may become unstable.

Limitations include reliance on the quality of the self‑supervised backbone and the assumption that the alignment loss has converged. Future work could explore adaptive λ schedules, multi‑backbone ensembles, and extensions to video or 3D generation.

In summary, REPA‑G provides a principled, training‑free method to condition diffusion models directly on visual features, offering fine‑grained, semantically meaningful control that overcomes the ambiguities of text‑based prompts while preserving generation quality.

Comments & Academic Discussion

Loading comments...

Leave a Comment