Instruction Anchors: Dissecting the Causal Dynamics of Modality Arbitration

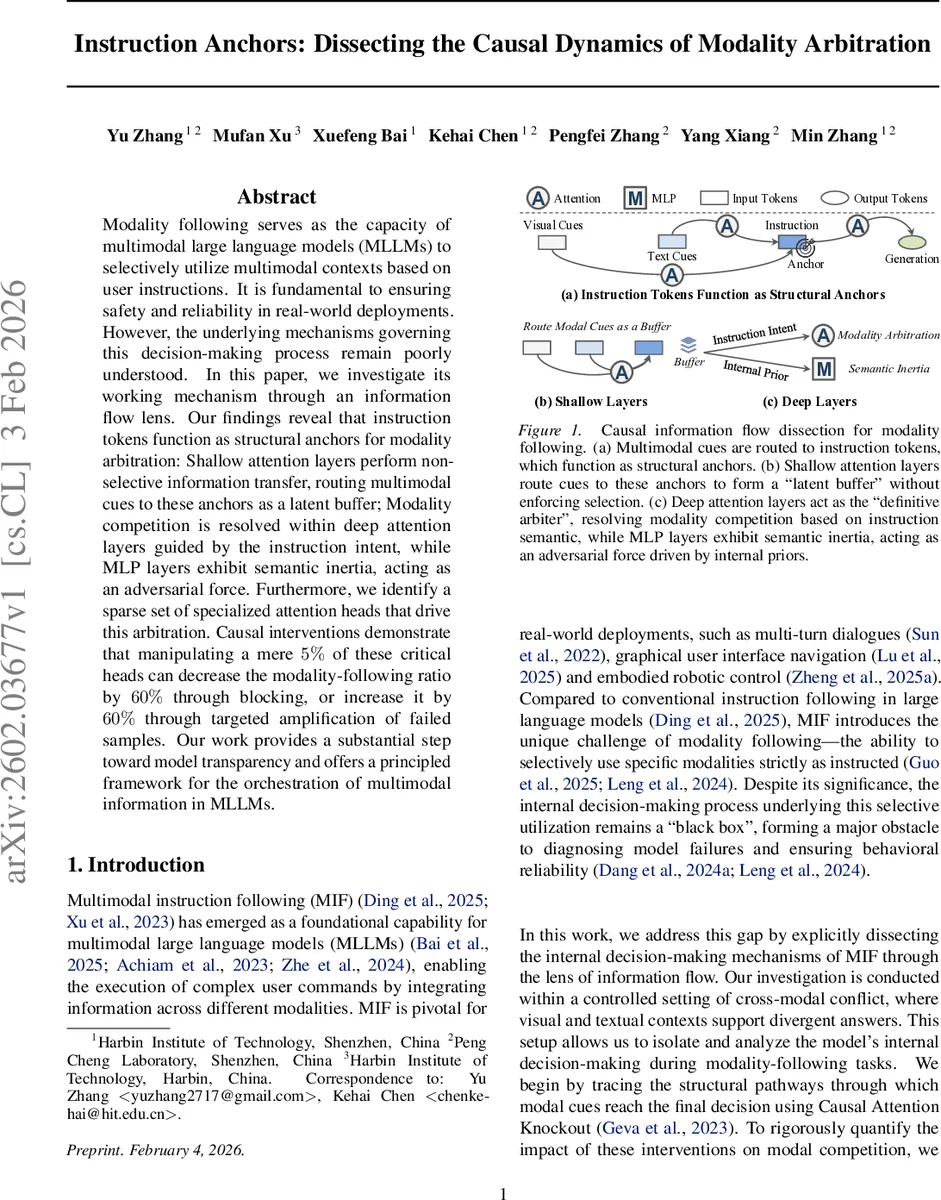

Modality following serves as the capacity of multimodal large language models (MLLMs) to selectively utilize multimodal contexts based on user instructions. It is fundamental to ensuring safety and reliability in real-world deployments. However, the underlying mechanisms governing this decision-making process remain poorly understood. In this paper, we investigate its working mechanism through an information flow lens. Our findings reveal that instruction tokens function as structural anchors for modality arbitration: Shallow attention layers perform non-selective information transfer, routing multimodal cues to these anchors as a latent buffer; Modality competition is resolved within deep attention layers guided by the instruction intent, while MLP layers exhibit semantic inertia, acting as an adversarial force. Furthermore, we identify a sparse set of specialized attention heads that drive this arbitration. Causal interventions demonstrate that manipulating a mere $5%$ of these critical heads can decrease the modality-following ratio by $60%$ through blocking, or increase it by $60%$ through targeted amplification of failed samples. Our work provides a substantial step toward model transparency and offers a principled framework for the orchestration of multimodal information in MLLMs.

💡 Research Summary

The paper investigates how multimodal large language models (MLLMs) decide which modality to follow when given explicit user instructions—a capability the authors term “modality following.” By constructing a controlled dataset where visual and textual contexts support conflicting answers, the authors isolate the decision‑making process. They introduce a causal‑analysis toolkit consisting of (1) Causal Attention Knockout, which selectively disables attention edges between token groups, and (2) a novel metric, Normalized Signed Structural Divergence (INSSD), which quantifies the impact of such interventions on a binary decision subspace (instruction‑compliant token vs. competing token).

Through systematic knockout experiments, they discover that instruction tokens act as structural anchors: multimodal cues from vision and text are first routed to these tokens rather than directly to the generation token. Shallow attention layers merely pass the cues into a “latent buffer” without making any selection, while deep attention layers perform the decisive arbitration based on the semantic content of the instruction. MLP sub‑layers exhibit “semantic inertia,” resisting the arbitration and preserving pre‑existing priors.

Layer‑wise probing with a Latent Decision Alignment Rate (LDAR) shows that alignment between the anchor’s internal representation and the final output is near chance in early layers (≈0.5) but jumps to >0.95 in deeper layers, confirming that arbitration is resolved inside the anchors before the decision is projected outward.

A striking finding is that only a tiny fraction (≈5 %) of attention heads are responsible for this arbitration. These “arbitration heads” include modality‑specific experts and shared hubs. Targeted interventions—blocking these heads—cause a 60 % drop in modality‑following accuracy, while amplifying them restores compliance in previously failed cases by roughly the same margin. This demonstrates both the necessity and sufficiency of the identified heads.

The authors also propose a method for extracting modality‑specific signal strength from hidden states using an answer‑entity dictionary, enabling robust tracking of competing modalities across layers.

Overall contributions: (1) Identification of instruction tokens as the central bottleneck for multimodal signal integration; (2) Clarification of distinct roles for shallow vs. deep attention and for MLP layers; (3) Discovery of a sparse set of critical attention heads and causal validation of their impact; (4) Introduction of INSSD and LDAR as general tools for probing multimodal decision dynamics.

The work advances model transparency, offering a principled framework for controlling multimodal information flow, which is crucial for safety, reliability, and interpretability of deployed multimodal AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment