ELIQ: A Label-Free Framework for Quality Assessment of Evolving AI-Generated Images

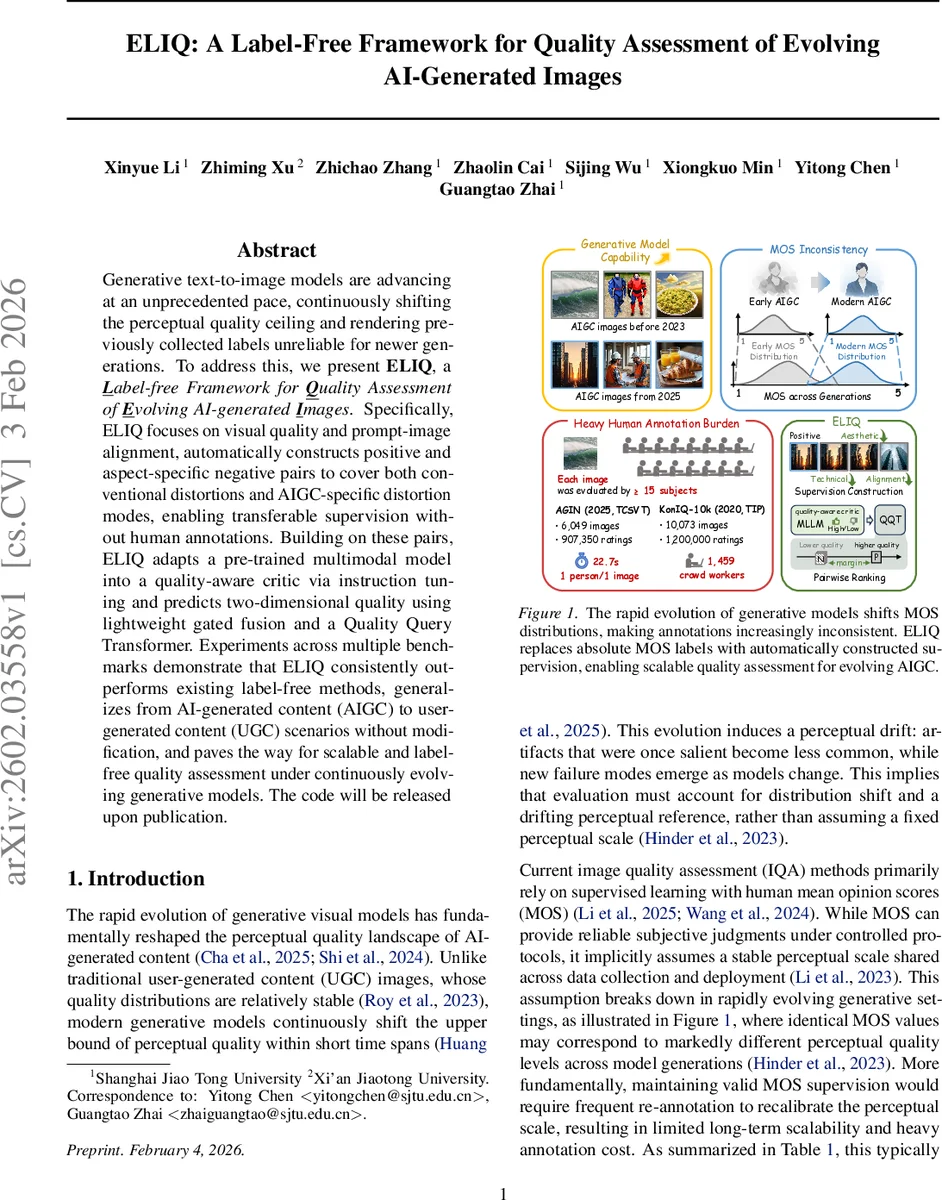

Generative text-to-image models are advancing at an unprecedented pace, continuously shifting the perceptual quality ceiling and rendering previously collected labels unreliable for newer generations. To address this, we present ELIQ, a Label-free Framework for Quality Assessment of Evolving AI-generated Images. Specifically, ELIQ focuses on visual quality and prompt-image alignment, automatically constructs positive and aspect-specific negative pairs to cover both conventional distortions and AIGC-specific distortion modes, enabling transferable supervision without human annotations. Building on these pairs, ELIQ adapts a pre-trained multimodal model into a quality-aware critic via instruction tuning and predicts two-dimensional quality using lightweight gated fusion and a Quality Query Transformer. Experiments across multiple benchmarks demonstrate that ELIQ consistently outperforms existing label-free methods, generalizes from AI-generated content (AIGC) to user-generated content (UGC) scenarios without modification, and paves the way for scalable and label-free quality assessment under continuously evolving generative models. The code will be released upon publication.

💡 Research Summary

The paper addresses a pressing problem in the evaluation of AI‑generated images: the rapid evolution of text‑to‑image (T2I) models continuously raises the perceptual quality ceiling, making previously collected human mean opinion scores (MOS) obsolete and costly to maintain. To overcome this, the authors propose ELIQ, a label‑free framework that predicts two orthogonal quality dimensions—visual fidelity and prompt‑image alignment—without relying on any absolute human ratings.

The core of ELIQ is an automatic supervision generation pipeline. First, a taxonomy of seven high‑level scene categories (indoor, urban, natural, people & activities, objects & artifacts, food, events) is defined. Using GPT‑5 with category‑specific rules, 400 diverse yet semantically clear text prompts are synthesized. Each prompt is fed to three state‑of‑the‑art T2I models (Qwen‑Image, FLUX.1‑dev, Stable Diffusion 3.5‑Large) to obtain high‑quality “positive” images, yielding a total of 1,200 reference samples.

Next, the framework constructs aspect‑specific negative samples along three quality axes (technical, aesthetic, alignment) and two distortion families (conventional and AIGC‑specific). Conventional negatives are created by repeated JPEG compression, Gaussian noise, and image‑to‑image editing (Qwen‑Edit) that degrade visual quality while preserving semantics. Alignment negatives are generated by shuffling image‑prompt pairs to induce semantic mismatch. AIGC‑specific negatives are produced by (i) using deliberately corrupted prompts, (ii) extracting intermediate diffusion latents (early‑step decoding), (iii) employing low‑quality T2I models, and (iv) mismatching high‑quality images with altered prompts. This yields tuples T = {I⁺, I⁻_tec, I⁻_aes, p, p⁻_ali} that encode pairwise ranking constraints: I⁺ > I⁻_tec, I⁺ > I⁻_aes, and (I⁺, p) > (I⁺, p⁻_ali).

These relative comparisons are used to adapt a pretrained multimodal large language model (MLLM) into a quality‑aware backbone via instruction tuning. Three separate instruction templates are designed for “evaluate technical quality”, “evaluate aesthetic quality”, and “evaluate text‑image alignment”. The model is fine‑tuned to output discrete labels (high/low) for each aspect, using the automatically generated positive/negative pairs as supervision—no human MOS needed.

After fine‑tuning, the MLLM’s parameters are frozen. A lightweight scoring head, the Quality Query Transformer (QQT), is trained on top of the frozen embeddings. Visual and textual embeddings are first fused through a gated fusion mechanism, then two learnable query tokens—one for visual quality and one for alignment—are fed into a small transformer that produces continuous scores ˆs_vis and ˆs_ali. Training uses a ranking loss derived from the pairwise constraints in T, ensuring that the model respects the relative ordering without any absolute ground truth.

Extensive experiments are conducted on both AI‑generated content (AIGC) benchmarks (e.g., AIGIQA‑20K, AGIN) and traditional user‑generated content (UGC) datasets (KonIQ‑10k, PaQ‑2‑PiQ). Compared with existing label‑free methods such as CLIP‑IQA and Quali‑CLIP, ELIQ achieves 5–7 percentage‑point gains in SRCC/PCC and narrows the gap to fully supervised IQA models like MANIQA. Notably, when the underlying T2I models are upgraded between training and testing, ELIQ’s performance remains stable, demonstrating its ability to refresh supervision automatically and adapt to perceptual drift.

A striking result is that the same ELIQ architecture, trained only on AIGC‑derived pairs, transfers directly to UGC scenarios without any architectural changes, achieving competitive results. This suggests that the two‑dimensional quality space (visual fidelity + alignment) captures the essential aspects of human perceived image quality across domains.

The authors plan to release code, prompts, and the generated positive/negative datasets, enabling reproducibility and further research. In summary, ELIQ introduces three key innovations: (1) automatic generation of high‑quality positive and aspect‑specific negative pairs, (2) multimodal instruction tuning to create a quality‑aware backbone, and (3) a lightweight query‑based scoring module. Together, these components provide a scalable, label‑free solution for continuous quality assessment in the rapidly evolving landscape of AI‑generated imagery.

Comments & Academic Discussion

Loading comments...

Leave a Comment