Failure is Feedback: History-Aware Backtracking for Agentic Traversal in Multimodal Graphs

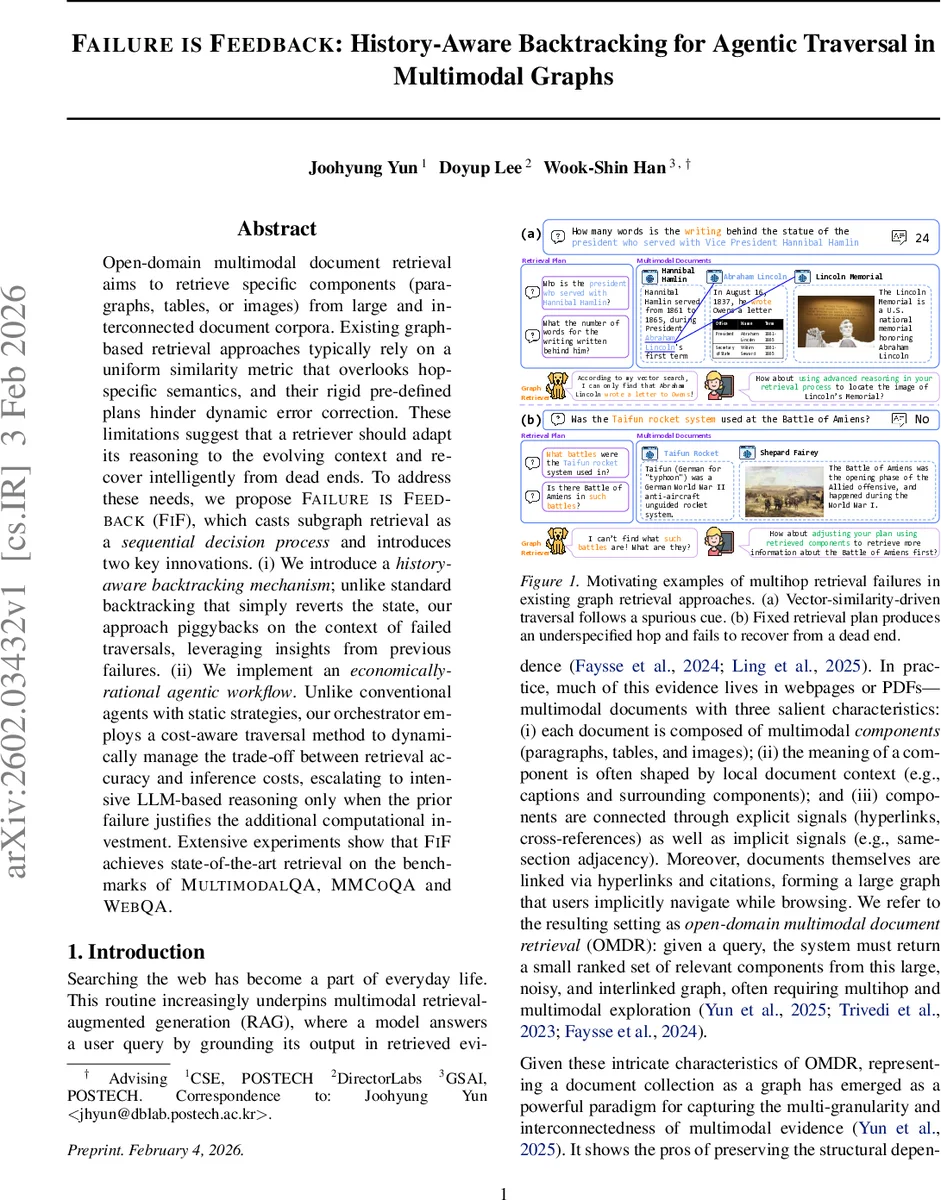

Open-domain multimodal document retrieval aims to retrieve specific components (paragraphs, tables, or images) from large and interconnected document corpora. Existing graph-based retrieval approaches typically rely on a uniform similarity metric that overlooks hop-specific semantics, and their rigid pre-defined plans hinder dynamic error correction. These limitations suggest that a retriever should adapt its reasoning to the evolving context and recover intelligently from dead ends. To address these needs, we propose Failure is Feedback (FiF), which casts subgraph retrieval as a sequential decision process and introduces two key innovations. (i) We introduce a history-aware backtracking mechanism; unlike standard backtracking that simply reverts the state, our approach piggybacks on the context of failed traversals, leveraging insights from previous failures. (ii) We implement an economically-rational agentic workflow. Unlike conventional agents with static strategies, our orchestrator employs a cost-aware traversal method to dynamically manage the trade-off between retrieval accuracy and inference costs, escalating to intensive LLM-based reasoning only when the prior failure justifies the additional computational investment. Extensive experiments show that FiF achieves state-of-the-art retrieval on the benchmarks of MultimodalQA, MMCoQA and WebQA.

💡 Research Summary

The paper tackles open‑domain multimodal document retrieval (OMDR), where the goal is to locate specific paragraphs, tables, or images across a large, interlinked corpus of web pages and PDFs. Existing graph‑based methods treat traversal as a static, similarity‑driven process: a single embedding‑based scoring function is applied uniformly across hops, and a pre‑specified plan dictates the path. This design ignores hop‑specific semantics and cannot recover from mistakes, causing errors to cascade in multi‑hop queries.

To overcome these limitations, the authors cast OMDR as a finite‑horizon information‑state Markov Decision Process (MDP). The state consists of a structured memory that records accumulated evidence, the history of sub‑queries, chosen retrieval strategies, and explicit success/failure outcomes. At each step the orchestrator (the agent) selects one of three actions: (i) TRAVERSE – move to neighboring nodes using a chosen strategy, (ii) PLAN – revise or expand the sub‑query as the information need evolves, or (iii) STOP – invoke a final reranker over all gathered candidates.

Two core innovations are introduced:

-

History‑aware backtracking – Traditional backtracking simply reverts to a previous node after a failure. FiF (Failure is Feedback) stores the full failure trace (failed sub‑query, strategy, and path) and uses it to “re‑anchor” the search. When a dead‑end is detected, the agent can (a) jump back to a more promising prior context, (b) generate a refined sub‑query that avoids the same mistake, and (c) optionally elevate the reasoning strategy. This turns failures into constructive feedback rather than wasted steps.

-

Economically‑rational agentic workflow – FiF maintains a portfolio of retrieval strategies spanning a cost‑accuracy spectrum: lightweight vector matching, intermediate rule‑based filtering, and expensive large‑language‑model (LLM) reasoning. For each hop the agent estimates uncertainty (e.g., low similarity gaps, ambiguous candidates) and consults the failure history. If the hop is ambiguous or a previous attempt failed, the agent escalates to a higher‑cost strategy; otherwise it stays with the cheapest effective option. The reward function in the MDP jointly rewards retrieval precision (e.g., top‑k accuracy, nDCG) and penalizes computational cost (time, GPU memory, LLM calls), encouraging a policy that balances accuracy and efficiency.

The underlying graph is a three‑layered component graph:

- Layer 0 (Documents) – each document node stores a short textual summary for early pruning.

- Layer 1 (Components) – multimodal components (paragraphs, tables, images).

- Layer 2 (Subcomponents) – fine‑grained elements such as sentences, table rows, or visual objects.

Hierarchical “contains” edges enable drilling down from document to component to subcomponent, while navigational edges (hyperlinks, cross‑references) connect components across documents. Adding the document‑level layer improves early pruning compared with prior two‑layer designs like LILAC.

Experiments were conducted on three benchmark suites: MultimodalQA, MMCoQA, and WebQA. FiF was compared against strong baselines including LILAC, VisRAG, and flat retrieval models. Results show consistent improvements: top‑1 accuracy gains of 4–7 percentage points, nDCG@10 gains of up to 6.8 pp, and especially large margins on queries requiring three or more hops. Cost analysis reveals that lightweight strategies are used for roughly 60 % of hops; LLM‑driven reasoning is invoked only on ambiguous or previously failed hops, keeping overall inference time around 1.8 seconds (≈30 % faster than baselines) and GPU memory under 2.2 GB. The proportion of expensive LLM calls stays below 22 % of total steps, yielding a ~30 % reduction in overall computational budget.

Ablation studies confirm the value of each component: removing history‑aware backtracking drops accuracy by ~3 pp and increases the number of LLM calls, while disabling cost‑aware escalation leads to a 20 % rise in inference time with negligible accuracy gain.

Limitations include the overhead of maintaining and querying the failure memory, and the reliance on explicit navigational edges; implicit semantic links (e.g., inferred from co‑occurrence or user click logs) are not yet exploited. Moreover, while the cost‑aware policy reduces average expense, worst‑case latency can still be high for particularly ambiguous queries that trigger multiple LLM escalations.

Conclusion – FiF demonstrates that treating multimodal graph traversal as an adaptive, economically rational decision process, and turning failures into actionable feedback, yields a system that is both more accurate and more efficient than existing static graph‑based retrievers. Future work will explore richer graph signals, dynamic learning of the cost‑accuracy trade‑off, and deployment in large‑scale real‑time retrieval services.

Comments & Academic Discussion

Loading comments...

Leave a Comment