FactNet: A Billion-Scale Knowledge Graph for Multilingual Factual Grounding

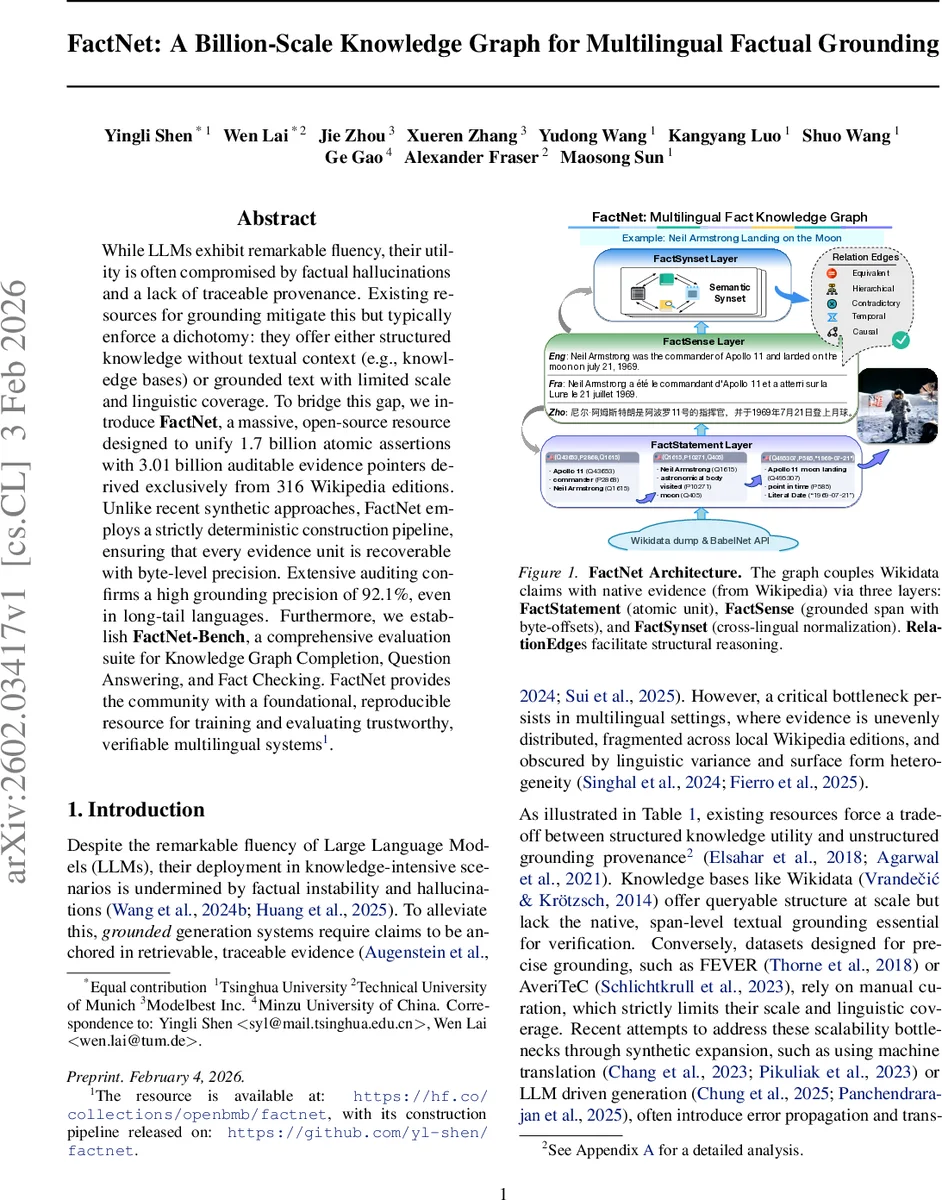

While LLMs exhibit remarkable fluency, their utility is often compromised by factual hallucinations and a lack of traceable provenance. Existing resources for grounding mitigate this but typically enforce a dichotomy: they offer either structured knowledge without textual context (e.g., knowledge bases) or grounded text with limited scale and linguistic coverage. To bridge this gap, we introduce FactNet, a massive, open-source resource designed to unify 1.7 billion atomic assertions with 3.01 billion auditable evidence pointers derived exclusively from 316 Wikipedia editions. Unlike recent synthetic approaches, FactNet employs a strictly deterministic construction pipeline, ensuring that every evidence unit is recoverable with byte-level precision. Extensive auditing confirms a high grounding precision of 92.1%, even in long-tail languages. Furthermore, we establish FactNet-Bench, a comprehensive evaluation suite for Knowledge Graph Completion, Question Answering, and Fact Checking. FactNet provides the community with a foundational, reproducible resource for training and evaluating trustworthy, verifiable multilingual systems.

💡 Research Summary

FactNet addresses the pervasive problem of factual hallucinations in large language models (LLMs) by providing a massive, open‑source multilingual knowledge graph that tightly couples structured assertions with human‑authored textual evidence. The resource unifies 1.7 billion atomic Wikidata statements (FactStatements) with 3.01 billion grounded spans (FactSenses) extracted from 316 Wikipedia language editions, and aggregates equivalent statements into 1.55 billion canonical equivalence classes (FactSynsets). A deterministic, three‑stage construction pipeline guarantees that every evidence pointer can be reproduced at byte‑level precision from the original Wikimedia dumps, ensuring full auditability and provenance.

The architecture consists of three tightly coupled layers:

- FactStatement – language‑neutral atomic unit containing subject (QID), property (PID), value (typed), qualifiers, references, and rank.

- FactSense – a concrete realization of a FactStatement within a specific Wikipedia page, identified by language, page‑id, revision‑id, view type (sentence, infobox, table), and deterministic character offsets.

- FactSynset – a canonical equivalence class generated by a versioned normalization policy π that normalizes values (date precision, unit conversion, coordinate rounding) and orders qualifiers deterministically. Merge reasons are stored as machine‑readable metadata, allowing users to trace or adjust the merging criteria.

RelationEdges provide additional structural connectivity. Three families of edges are released: Direct Joins (entity‑valued synsets linked to subject synsets), Schema‑Based Relations (derived from a property‑relation map with a hop limit of two), and Conflict Signals (potential contradictions identified via functional‑property violations or temporal incompatibilities). Each edge carries provenance information, and edges are tiered by reliability so downstream users can filter according to their tolerance for noise.

The pipeline avoids any stochastic components. Stage 1 parses raw dumps into three deterministic views: Sentence (plain text after template stripping), Template (AST‑based infobox extraction), and Table (structured cell parsing). Stage 2 applies the π policy to produce canonical keys and deterministic hashes for FactSynsets. Stage 3 aligns statements to evidence using a hierarchy of matchers: (i) structure‑based matching for literal values in infoboxes/tables, (ii) link‑based matching for entity‑valued objects via wikilinks or anchors, and (iii) lexical matching for literals within sentences. The highest‑confidence matcher is selected, but all alternative matches are retained as metadata. No machine‑learning models are employed, guaranteeing that the same input dumps and configuration always yield identical outputs.

Multilingual coverage is achieved by grounding a statement only when the subject entity has a sitelink to the target language edition. A conservative title‑match fallback is allowed only when a normalized title resolves to a single non‑disambiguation page, which preserves high precision even for long‑tail languages. Auditing on the 2025‑11‑01 snapshot shows a grounding precision of 92.1 % across all 316 languages.

FactNet‑Bench provides three standardized evaluation suites:

- FactNet‑KGC – knowledge‑graph completion (link prediction, entity prediction) using the full graph; it offers over ten times the number of triples and three hundred times the language diversity of prior benchmarks (e.g., OGB‑WikiKG2, T‑REx).

- FactNet‑MKQA – multilingual knowledge‑based question answering; models must retrieve and extract the correct FactSense span to answer a question, enabling direct assessment of retrieval‑augmented LLMs.

- FactNet‑MFC – multilingual fact‑checking; it evaluates claim‑evidence matching accuracy and the detection of Conflict Signals. Each suite includes fixed train/validation/test splits and baseline results to foster reproducibility.

Statistically, FactNet contains 1.7 B FactStatements spanning 12.1 K properties, 1.55 B FactSynsets, 3.01 B FactSenses, and 3.69 B RelationEdges. The dataset is distributed in sharded JSONL/Parquet files together with indexing scripts, and all schema definitions, normalization policies, language packs, and mapping resources are released under permissive licenses (Wikidata CC0, Wikipedia CC‑BY‑SA). Raw evidence text is provided in a separate CC‑BY‑SA package with mandatory attribution, complying with Wikimedia licensing terms.

In summary, FactNet is the first publicly available resource that simultaneously offers billion‑scale structured knowledge, precise human‑authored evidence, and deterministic provenance across hundreds of languages. By enabling fully auditable grounding, it paves the way for trustworthy, retrieval‑augmented LLMs that can cite, verify, and reason over multilingual facts with unprecedented scale and reliability.

Comments & Academic Discussion

Loading comments...

Leave a Comment