AesRec: A Dataset for Aesthetics-Aligned Clothing Outfit Recommendation

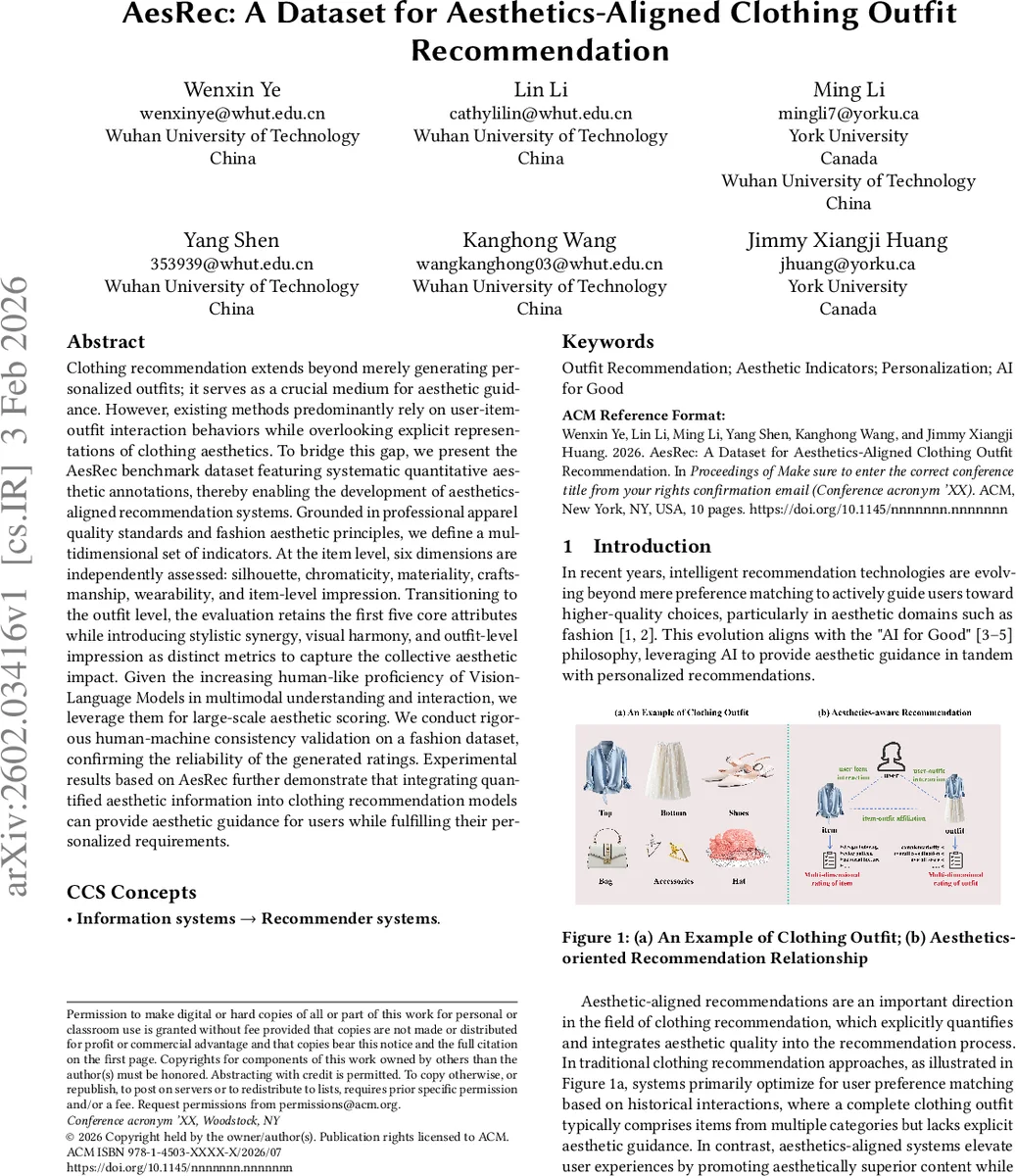

Clothing recommendation extends beyond merely generating personalized outfits; it serves as a crucial medium for aesthetic guidance. However, existing methods predominantly rely on user-item-outfit interaction behaviors while overlooking explicit representations of clothing aesthetics. To bridge this gap, we present the AesRec benchmark dataset featuring systematic quantitative aesthetic annotations, thereby enabling the development of aesthetics-aligned recommendation systems. Grounded in professional apparel quality standards and fashion aesthetic principles, we define a multidimensional set of indicators. At the item level, six dimensions are independently assessed: silhouette, chromaticity, materiality, craftsmanship, wearability, and item-level impression. Transitioning to the outfit level, the evaluation retains the first five core attributes while introducing stylistic synergy, visual harmony, and outfit-level impression as distinct metrics to capture the collective aesthetic impact. Given the increasing human-like proficiency of Vision-Language Models in multimodal understanding and interaction, we leverage them for large-scale aesthetic scoring. We conduct rigorous human-machine consistency validation on a fashion dataset, confirming the reliability of the generated ratings. Experimental results based on AesRec further demonstrate that integrating quantified aesthetic information into clothing recommendation models can provide aesthetic guidance for users while fulfilling their personalized requirements.

💡 Research Summary

The paper introduces AesRec, a large‑scale benchmark dataset designed to enable aesthetics‑aligned clothing outfit recommendation. Existing outfit recommendation datasets (e.g., DeepFashion, POG) contain rich visual, textual, and interaction data but lack explicit aesthetic annotations, which limits research on guiding users toward outfits that are not only personalized but also aesthetically superior. To fill this gap, the authors first define a multidimensional set of aesthetic indicators grounded in internationally recognized apparel quality standards (ISO, ASTM, AATCC) and contemporary fashion aesthetic research. At the item level, six independent dimensions are annotated: silhouette, chromaticity, materiality, craftsmanship, wearability, and item‑level impression. At the outfit level, the first five core dimensions are retained and three additional metrics (stylistic synergy, visual harmony, and outfit‑level impression) are introduced. This yields six scores per item and eight per outfit, covering nine distinct dimensions in total.
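The two dimension lists can be written down as a small schema, which makes the counts easy to check: six item-level dimensions, eight outfit-level dimensions, and nine distinct names across both. This is only an illustrative sketch; the identifier names and the `missing_dimensions` helper are assumptions for this example, not part of the released dataset format.

```python
# Hypothetical schema for the AesRec aesthetic dimensions.
# The dimension names follow the summary; identifiers are illustrative.

ITEM_DIMENSIONS = (
    "silhouette",
    "chromaticity",
    "materiality",
    "craftsmanship",
    "wearability",
    "item_impression",
)

# The first five item-level dimensions are shared with the outfit level.
CORE_DIMENSIONS = ITEM_DIMENSIONS[:5]

OUTFIT_DIMENSIONS = CORE_DIMENSIONS + (
    "stylistic_synergy",
    "visual_harmony",
    "outfit_impression",
)

# Distinct dimensions across both levels, in first-seen order.
ALL_DIMENSIONS = tuple(dict.fromkeys(ITEM_DIMENSIONS + OUTFIT_DIMENSIONS))


def missing_dimensions(scores, dimensions):
    """Return the dimensions that have no entry in a score record."""
    return [name for name in dimensions if name not in scores]


if __name__ == "__main__":
    print(len(ITEM_DIMENSIONS), len(OUTFIT_DIMENSIONS), len(ALL_DIMENSIONS))
    print(missing_dimensions({"silhouette": 7.0}, ITEM_DIMENSIONS))
```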

For large‑scale labeling, the authors leverage a Vision‑Language Model (VLM) with hundreds of millions of parameters to automatically score the items and outfits in the POG dataset (1.01 M outfits, 583 K items). They validate the VLM’s reliability by having three fashion experts manually rate 100 randomly selected outfits; the Pearson correlation between the experts' averaged ratings and the VLM scores exceeds 0.78, indicating strong human‑machine consistency.
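The consistency check described here amounts to averaging the expert ratings for each outfit and correlating those averages with the model's scores. A minimal sketch using only the standard library follows; the function names and the toy ratings are made up for illustration and are not data from the paper.

```python
from math import sqrt
from statistics import mean


def expert_average(ratings_per_outfit):
    """Average the ratings given by several experts to each outfit."""
    return [mean(ratings) for ratings in ratings_per_outfit]


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


if __name__ == "__main__":
    # Toy data: three expert ratings per outfit, one VLM score per outfit.
    expert_ratings = [(6, 7, 8), (3, 4, 5), (8, 9, 9), (5, 5, 6)]
    vlm_scores = [7.2, 4.5, 8.4, 5.9]
    r = pearson(expert_average(expert_ratings), vlm_scores)
    print(f"Pearson r = {r:.3f}")
```

In practice the same calculation would be run per dimension as well as on an overall score, since agreement can differ between, say, chromaticity and craftsmanship.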

The dataset construction pipeline includes: (1) selecting POG as the source because it provides extensive user‑outfit and user‑item interaction logs (280 M clicks from 3.57 M users) and detailed item metadata; (2) cleaning invalid image URLs; (3) consolidating 80 fine‑grained categories into 17 major categories for interpretability; and (4) applying the VLM to generate the aesthetic scores for every item and outfit (six item‑level and eight outfit‑level dimensions).
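Steps (2) and (3) of the pipeline can be sketched as a single filtering pass. The code below is an assumption-heavy illustration: the record fields (`image_url`, `category`), the example category names, and the purely syntactic URL check are all hypothetical, and the summary does not say how the authors actually detect invalid URLs or which categories were merged.

```python
from urllib.parse import urlparse

# Hypothetical excerpt of a fine-to-major category mapping.
FINE_TO_MAJOR = {
    "t-shirt": "tops",
    "blouse": "tops",
    "jeans": "bottoms",
    "sneakers": "shoes",
}


def has_wellformed_url(url):
    """Syntactic check only: an http(s) scheme and a host are present."""
    if not url:
        return False
    parts = urlparse(url)
    return parts.scheme in ("http", "https") and bool(parts.netloc)


def clean_and_consolidate(items, fine_to_major):
    """Drop items without a usable image URL and attach a major category."""
    cleaned = []
    for item in items:
        if not has_wellformed_url(item.get("image_url")):
            continue
        major = fine_to_major.get(item.get("category"))
        if major is None:
            continue  # unmapped categories are skipped in this sketch
        cleaned.append({**item, "major_category": major})
    return cleaned


if __name__ == "__main__":
    raw = [
        {"id": 1, "category": "blouse", "image_url": "https://example.com/1.jpg"},
        {"id": 2, "category": "jeans", "image_url": ""},
    ]
    print(clean_and_consolidate(raw, FINE_TO_MAJOR))
```

A production pipeline would presumably also confirm that each image actually downloads and decodes, which a syntactic check cannot establish.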

To assess the practical impact of aesthetic information, the authors integrate the AesRec scores into seven state‑of‑the‑art bundle recommendation models (BPR‑MF, BGCN, MultiCBR, HyperMBR, CrossCBR, MIDGN, and a recent graph‑based model). Integration is performed by adding an aesthetic regularization term to the loss function or by treating aesthetic scores as auxiliary supervision. Two evaluation metrics are used: average aesthetic score of recommended outfits and exposure rank (the ranking of high‑aesthetic outfits in the recommendation list). Across all models, incorporating aesthetic guidance yields a 12 %–23 % increase in average aesthetic score and a noticeable improvement in exposure rank, indicating that users are presented with more aesthetically appealing outfits without sacrificing personalization. The most pronounced gains appear in graph‑based models, suggesting that structural user‑item‑outfit relationships benefit strongly from explicit aesthetic signals.
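One way to picture the "aesthetic regularization term" is a pairwise ranking loss with an extra penalty that pulls the predicted preference for an outfit toward its aesthetic score. The sketch below is not the authors' formulation: the squared-error penalty, the weight `lam`, and the assumption that aesthetic scores are normalized to [0, 1] are all illustrative choices. It also shows the average-aesthetic-score metric over a top-k list.

```python
from math import exp, log1p


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + log1p(exp(-abs(x)))


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + exp(-x))
    z = exp(x)
    return z / (1.0 + z)


def bpr_loss(pos_score, neg_score):
    """BPR pairwise loss: -log(sigmoid(pos - neg))."""
    return softplus(-(pos_score - neg_score))


def combined_loss(pos_score, neg_score, pos_aesthetic, lam=0.1):
    """BPR loss plus an assumed aesthetic penalty on the positive outfit.

    pos_aesthetic is assumed to be normalized to [0, 1].
    """
    penalty = (sigmoid(pos_score) - pos_aesthetic) ** 2
    return bpr_loss(pos_score, neg_score) + lam * penalty


def mean_aesthetic_at_k(ranked_ids, aesthetic_by_id, k):
    """Average aesthetic score of the top-k recommended outfits."""
    top = ranked_ids[:k]
    if k <= 0 or not top:
        raise ValueError("k must be positive and the ranking non-empty")
    return sum(aesthetic_by_id[i] for i in top) / len(top)


if __name__ == "__main__":
    print(combined_loss(pos_score=1.2, neg_score=0.3, pos_aesthetic=0.8))
    scores = {"a": 0.9, "b": 0.5, "c": 0.1}
    print(mean_aesthetic_at_k(["a", "b", "c"], scores, k=2))
```

Setting `lam=0` recovers plain BPR, so the weight gives a direct handle on the trade-off between personalization and aesthetic guidance.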

Beyond the experimental results, the authors release the full AesRec dataset, the VLM scoring pipeline, and the evaluation protocol, thereby providing a reproducible foundation for future work. Potential extensions include personalized aesthetic profiling to account for individual taste variance, continual refinement of VLM scoring as newer multimodal models emerge, and coupling aesthetic‑aware recommendation with generative fashion models to produce new outfits that satisfy both user preferences and aesthetic standards.

In summary, AesRec bridges a critical data gap by delivering a million‑scale, interaction‑rich fashion dataset annotated with nine rigorously defined aesthetic dimensions. The study demonstrates that such quantitative aesthetic information can be seamlessly integrated into existing recommendation architectures, leading to measurable improvements in the aesthetic quality of recommended outfits. This work paves the way for a new generation of fashion AI systems that balance personalization with aesthetic excellence.
