SEW: Strengthening Robustness of Black-box DNN Watermarking via Specificity Enhancement

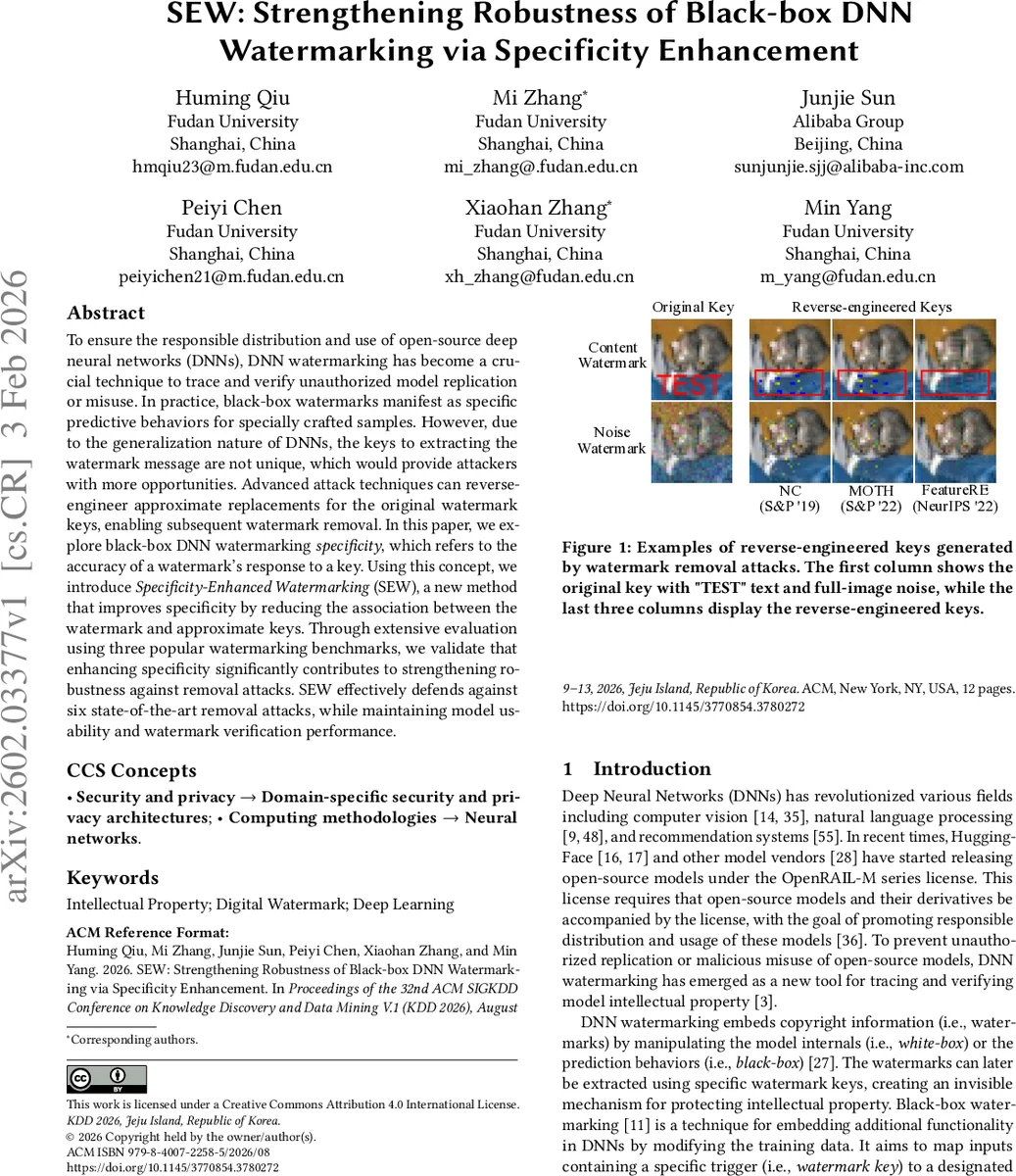

To ensure the responsible distribution and use of open-source deep neural networks (DNNs), DNN watermarking has become a crucial technique to trace and verify unauthorized model replication or misuse. In practice, black-box watermarks manifest as specific predictive behaviors for specially crafted samples. However, due to the generalization nature of DNNs, the keys to extracting the watermark message are not unique, which would provide attackers with more opportunities. Advanced attack techniques can reverse-engineer approximate replacements for the original watermark keys, enabling subsequent watermark removal. In this paper, we explore black-box DNN watermarking specificity, which refers to the accuracy of a watermark’s response to a key. Using this concept, we introduce Specificity-Enhanced Watermarking (SEW), a new method that improves specificity by reducing the association between the watermark and approximate keys. Through extensive evaluation using three popular watermarking benchmarks, we validate that enhancing specificity significantly contributes to strengthening robustness against removal attacks. SEW effectively defends against six state-of-the-art removal attacks, while maintaining model usability and watermark verification performance.

💡 Research Summary

The paper tackles a critical weakness in current black‑box deep neural network (DNN) watermarking: the lack of “specificity.” Because DNNs generalize, many inputs that are slight variations of the original watermark key (so‑called approximate keys) can still trigger the watermark. Attackers exploit this by reverse‑engineering such approximate keys and then applying removal techniques (pruning, fine‑tuning, unlearning, or the specialized Dehydra attack) to erase the watermark while preserving model performance.

To address this, the authors first formalize watermark specificity as the difference between the model’s response accuracy on the genuine key and on perturbed inputs that remain within a “noise boundary.” The noise boundary is defined as the maximal perturbation magnitude that still yields the target watermark label. Since exact analytical computation is infeasible for high‑dimensional, non‑linear networks, they propose a sampling‑based algorithm to estimate the boundary and thereby quantify specificity.

Armed with this metric, they evaluate several existing black‑box watermark schemes and find that most exhibit low specificity, explaining their vulnerability. The core contribution, Specificity‑Enhanced Watermarking (SEW), modifies the embedding process by jointly training on two sets of trigger samples: (1) the original key samples labeled with the watermark target, and (2) “approximate‑key” samples generated by adding controlled noise or transformations to the original key but retaining their true (non‑watermark) labels. The loss function simultaneously enforces the watermark on the genuine key while suppressing any association on the approximate keys. This forces the decision boundary to be steep only around the exact key, dramatically raising the specificity score.

Experiments are conducted on three popular black‑box watermark benchmarks (NC, MOTH, FeatureRE) and against six state‑of‑the‑art removal attacks, including pruning, fine‑tuning, unlearning, and Dehydra. SEW consistently maintains watermark detection rates above 80 % under all attacks, while incurring less than 1 % drop in top‑1 accuracy. Notably, under the Dehydra unlearning attack, traditional methods see detection collapse to below 30 %, whereas SEW retains about 85 % detection, confirming that higher specificity directly translates into stronger robustness.

The authors also discuss practical considerations: generating realistic approximate‑key samples can be domain‑specific, and excessive emphasis on approximate‑key training could marginally affect generalization, though empirical results show negligible impact. They propose the specificity metric as a new standard for evaluating black‑box watermarks and suggest future work on automated approximate‑key generation, specificity‑driven watermark optimization, and defenses against broader attack families such as model steering.

In summary, by introducing a principled definition and measurement of watermark specificity and by designing SEW to explicitly suppress the effectiveness of approximate keys, the paper delivers a significant advance in making black‑box DNN watermarks more resilient to modern removal attacks while preserving model utility.

Comments & Academic Discussion

Loading comments...

Leave a Comment