PACE: Pretrained Audio Continual Learning

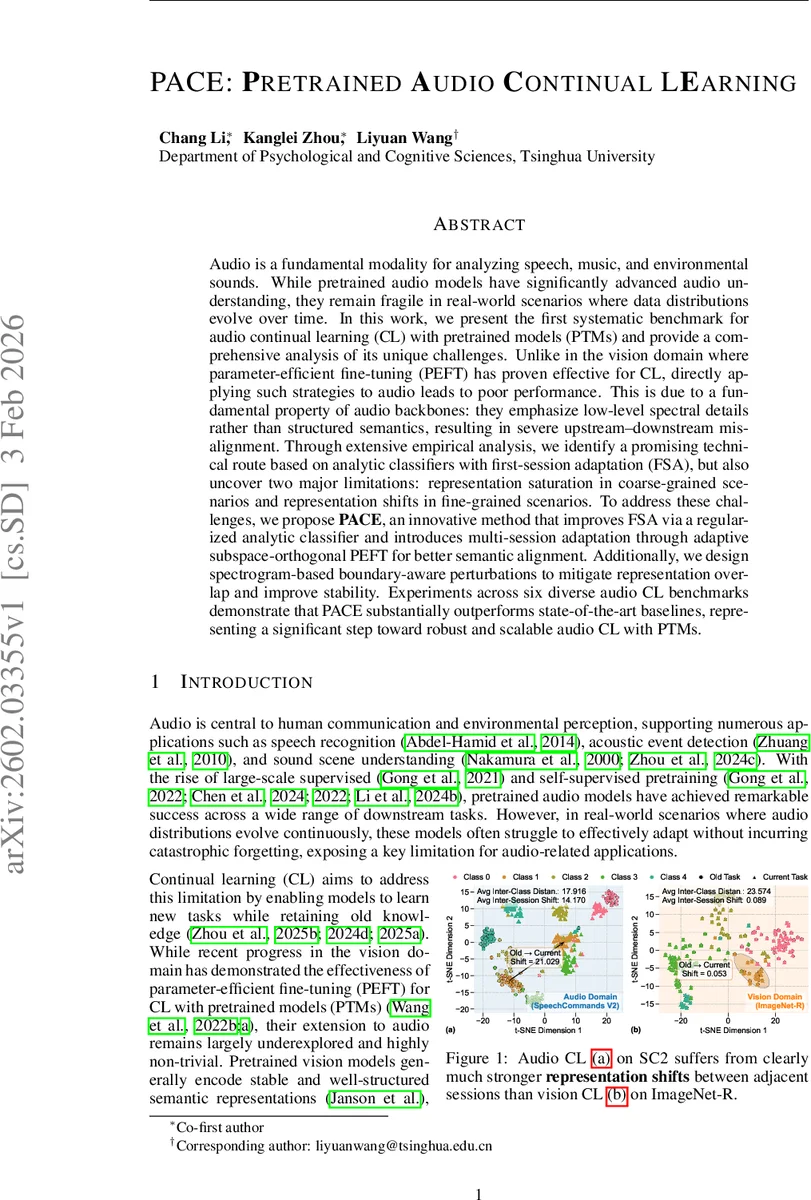

Audio is a fundamental modality for analyzing speech, music, and environmental sounds. Although pretrained audio models have significantly advanced audio understanding, they remain fragile in real-world settings where data distributions shift over time. In this work, we present the first systematic benchmark for audio continual learning (CL) with pretrained models (PTMs), together with a comprehensive analysis of its unique challenges. Unlike in vision, where parameter-efficient fine-tuning (PEFT) has proven effective for CL, directly transferring such strategies to audio leads to poor performance. This stems from a fundamental property of audio backbones: they focus on low-level spectral details rather than structured semantics, causing severe upstream-downstream misalignment. Through extensive empirical study, we identify analytic classifiers with first-session adaptation (FSA) as a promising direction, but also reveal two major limitations: representation saturation in coarse-grained scenarios and representation drift in fine-grained scenarios. To address these challenges, we propose PACE, a novel method that enhances FSA via a regularized analytic classifier and enables multi-session adaptation through adaptive subspace-orthogonal PEFT for improved semantic alignment. In addition, we introduce spectrogram-based boundary-aware perturbations to mitigate representation overlap and improve stability. Experiments on six diverse audio CL benchmarks demonstrate that PACE substantially outperforms state-of-the-art baselines, marking an important step toward robust and scalable audio continual learning with PTMs.

💡 Research Summary

The paper introduces the first systematic benchmark for continual learning (CL) with pretrained audio models (PTMs) and proposes a novel method, PACE, to address the unique challenges of this setting. While pretrained vision models have benefited from parameter‑efficient fine‑tuning (PEFT) techniques such as prompts, directly transferring these methods to audio results in severe performance degradation. The authors attribute this gap to a fundamental property of audio backbones: they prioritize low‑level time‑frequency details over high‑level semantic structure, leading to large representation shifts between successive CL sessions.

To quantify these issues, the authors construct six audio CL benchmarks—three coarse‑grained (ESC‑50, UrbanSound8K, SpeechCommands V2) and three fine‑grained (TIMIT‑2, TIMIT‑3, VocalSet). Experiments show that vision‑based PEFT methods (L2P, DualPrompt, S‑Prompt++) suffer three‑fold larger forgetting in audio, while statistics‑based analytic classifiers (RanPAC, ACL) are more stable but still limited. Two key findings emerge: (1) representation saturation on coarse tasks—pretrained backbones already capture most discriminative information in the first session, limiting further gains; and (2) a large performance gap on fine‑grained tasks, where the upstream‑downstream semantic mismatch prevents a single‑session adaptation from succeeding.
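To make the idea of a statistics-based analytic classifier concrete, here is a minimal sketch. It is not the RanPAC or ACL implementation: it is a plain ridge-regression head whose weights are solved in closed form from accumulated feature statistics. The class name `AnalyticClassifier`, the `ridge` parameter, and the use of raw backbone features (with no random projection) are assumptions made for illustration.

```python
import numpy as np


class AnalyticClassifier:
    """Hypothetical ridge-regression head updated from running statistics."""

    def __init__(self, feat_dim, ridge=1.0):
        self.ridge = ridge
        self.gram = np.zeros((feat_dim, feat_dim))  # running sum of X^T X
        self.cross = np.zeros((feat_dim, 0))        # running sum of X^T Y
        self.weights = self.cross.copy()

    def fit_session(self, feats, labels):
        """Absorb one session of (frozen-backbone) features and integer labels."""
        num_classes = max(self.cross.shape[1], int(labels.max()) + 1)
        # Add zero columns when the session introduces unseen classes.
        pad = num_classes - self.cross.shape[1]
        self.cross = np.pad(self.cross, ((0, 0), (0, pad)))
        onehot = np.eye(num_classes)[labels]
        self.gram += feats.T @ feats
        self.cross += feats.T @ onehot
        # Closed-form ridge solution: (G + ridge * I)^{-1} C
        reg = self.ridge * np.eye(self.gram.shape[0])
        self.weights = np.linalg.solve(self.gram + reg, self.cross)

    def predict(self, feats):
        return np.argmax(feats @ self.weights, axis=1)
```

Because only the two sums are stored, the weights after several sessions equal those of a single ridge fit on all data seen so far, provided the features come from a fixed backbone. This is one reason such heads do not forget at the classifier level, and also why drift in the backbone's representations undermines them.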

Building on these observations, PACE consists of three stages. First, Improved First‑Session Adaptation (FSA) freezes the shallow layers, adapts only the deeper layers with LoRA modules, and replaces the parametric head with a second‑order analytic classifier, preserving pretrained semantics while adding modest plasticity. Second, Multi‑Session Adaptation (MSA) introduces a subspace‑orthogonal PEFT mechanism: for each new session, gradients are projected onto a subspace orthogonal to previously learned directions (implemented via LoRA subtraction and gradient projection), which controls drift and enables progressive alignment without replay. Third, Boundary‑Aware Regularization applies spectrogram‑based perturbations near class boundaries to encourage intra‑class compactness and inter‑class separability, reducing representation overlap.
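The core operation behind a subspace-orthogonal update can be illustrated with a generic projection step. The sketch below is an assumption-laden illustration, not the authors' MSA procedure: the summary does not specify how previous directions are collected or how LoRA subtraction interacts with the projection. The helper names `orthonormal_basis` and `project_out` are hypothetical.

```python
import numpy as np


def orthonormal_basis(directions, tol=1e-10):
    """Return orthonormal columns spanning the given column vectors."""
    u, s, _ = np.linalg.svd(directions, full_matrices=False)
    # Keep only left singular vectors with non-negligible singular values.
    return u[:, s > tol]


def project_out(grad, basis):
    """Remove the component of grad that lies in span(basis)."""
    return grad - basis @ (basis.T @ grad)


# Example: directions stored from earlier sessions (random stand-ins here).
rng = np.random.default_rng(0)
previous_directions = rng.normal(size=(6, 2))
basis = orthonormal_basis(previous_directions)

raw_grad = rng.normal(size=6)
safe_grad = project_out(raw_grad, basis)  # orthogonal to earlier directions
```

An update taken along `safe_grad` leaves the model's response along the stored directions unchanged to first order, which is the intuition behind limiting drift without replaying old data.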

Extensive experiments demonstrate that PACE consistently outperforms state‑of‑the‑art CL baselines across all six benchmarks. On coarse datasets, the gap to joint‑training upper bounds shrinks to under 1%, while on fine‑grained datasets PACE yields absolute improvements of +5.3% (TIMIT‑2), +4.1% (TIMIT‑3), and +6.3% (VocalSet). Moreover, the method reduces catastrophic forgetting dramatically, as visualized by t‑SNE plots and forgetting curves.
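For readers unfamiliar with how forgetting is usually quantified, the following sketch computes a commonly used average-forgetting score from a matrix of per-session accuracies. Whether the paper uses exactly this definition is an assumption; the function name `average_forgetting` and the example numbers are purely illustrative and are not results from the paper.

```python
import numpy as np


def average_forgetting(acc):
    """acc[i, j] = accuracy on session j after training through session i.

    For each earlier session j, forgetting is the best accuracy reached
    before the final session minus the accuracy after the final session.
    """
    acc = np.asarray(acc, dtype=float)
    num_sessions = acc.shape[0]
    if num_sessions < 2:
        return 0.0
    drops = [
        acc[j:num_sessions - 1, j].max() - acc[num_sessions - 1, j]
        for j in range(num_sessions - 1)
    ]
    return float(np.mean(drops))


# Illustrative accuracies only (entries above the diagonal are unused).
example = [
    [0.90, 0.00, 0.00],
    [0.80, 0.85, 0.00],
    [0.70, 0.80, 0.90],
]
score = average_forgetting(example)  # mean of 0.20 and 0.05
```

A lower score means earlier sessions are better retained; a method with no forgetting scores zero or below.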

The authors also release the constructed benchmarks, reproduced baselines, and code, providing a solid foundation for future research. In summary, the paper identifies why PEFT fails for audio CL, uncovers representation saturation and drift issues, and proposes a principled combination of analytic classification, subspace‑orthogonal adaptation, and boundary‑aware perturbations. PACE represents a significant step toward robust, scalable continual learning with pretrained audio models, bridging the gap between large‑scale pretraining and real‑world, evolving audio streams.
