GuardReasoner-Omni: A Reasoning-based Multi-modal Guardrail for Text, Image, and Video

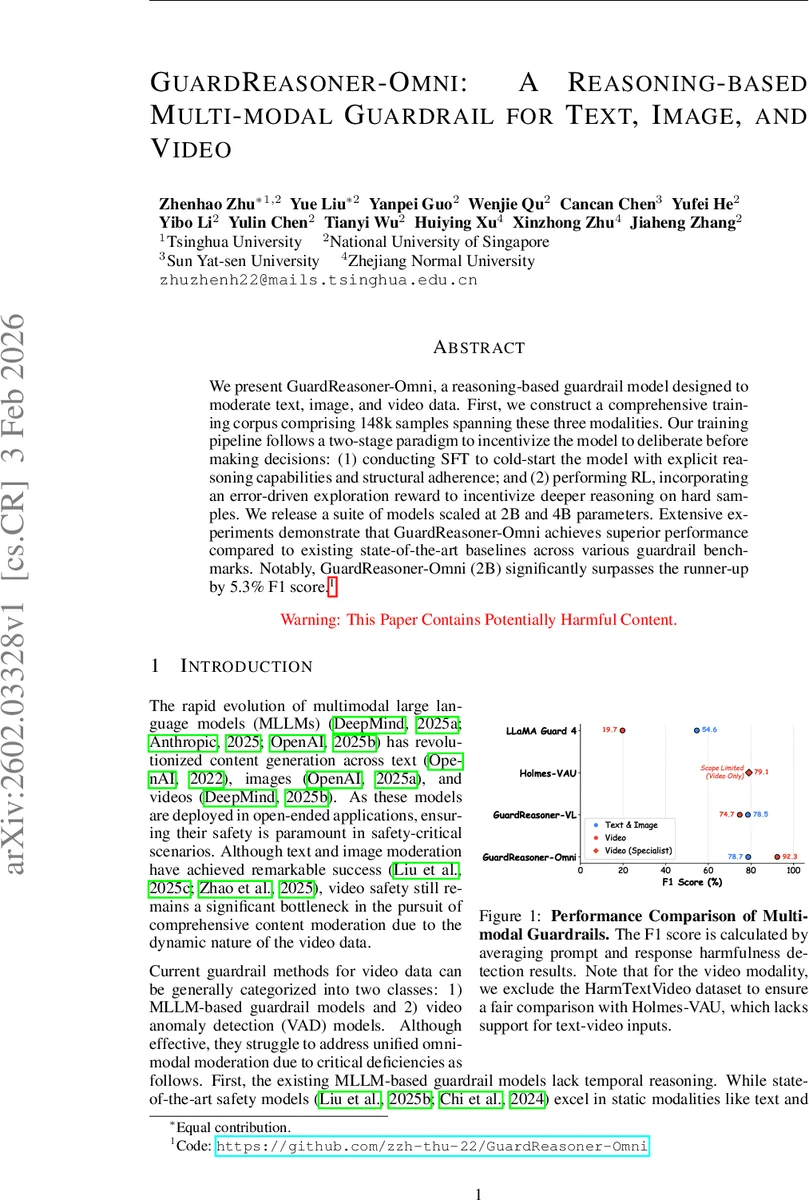

We present GuardReasoner-Omni, a reasoning-based guardrail model designed to moderate text, image, and video data. First, we construct a comprehensive training corpus comprising 148k samples spanning these three modalities. Our training pipeline follows a two-stage paradigm to incentivize the model to deliberate before making decisions: (1) conducting SFT to cold-start the model with explicit reasoning capabilities and structural adherence; and (2) performing RL, incorporating an error-driven exploration reward to incentivize deeper reasoning on hard samples. We release a suite of models scaled at 2B and 4B parameters. Extensive experiments demonstrate that GuardReasoner-Omni achieves superior performance compared to existing state-of-the-art baselines across various guardrail benchmarks. Notably, GuardReasoner-Omni (2B) significantly surpasses the runner-up by 5.3% F1 score.

💡 Research Summary

GuardReasoner‑Omni introduces a novel reasoning‑driven guardrail architecture capable of moderating text, image, and video inputs within a single unified model. Recognizing that existing safety solutions either excel in static modalities (text, image) or are limited to narrow video anomaly detection, the authors set out to create an “omni‑modal” guard that can reason about dynamic content and provide transparent explanations.

To this end, they first assemble a large‑scale training corpus, GuardReasoner‑OmniTrain‑148K, comprising 148 000 multimodal samples. Text and image data are drawn from previously curated safety datasets, while video samples are aggregated from five public benchmarks (UCF‑Crime, XD‑Violence, SafeWatch‑Bench, VHD, Video‑ChatGPT). Each sample includes a user prompt, the response of a “victim” model, and a binary safety label. Crucially, the authors generate chain‑of‑thought (CoT) annotations for every entry by prompting a state‑of‑the‑art multimodal LLM (Qwen3‑VL‑235B‑A22B) and then filtering the outputs with rule‑based quality checks. The resulting CoT is formatted with explicit

Training proceeds in two stages. The first stage, Cold‑Start Supervised Fine‑Tuning (SFT), teaches the guard to reproduce the teacher‑generated reasoning and to output the final safety verdict in the prescribed format. This stage optimizes a standard log‑likelihood loss over the reasoning chain and label, ensuring the model learns to deliberate before deciding.

The second stage, Reasoning Enhancement via Group Relative Policy Optimization (GRPO), addresses the model’s remaining weaknesses on hard, boundary cases. Hard samples are identified by running the SFT model on the training set and selecting inputs where predictions are inconsistent (neither all correct nor all incorrect). For these samples, a reinforcement learning objective is applied. The reward function combines three components: (1) a format compliance indicator, (2) an accuracy term that equally weights correct detection of harmfulness in the user request and in the model’s response, and (3) an error‑driven exploration bonus. The exploration bonus is proportional to the response length (α·tanh(L/σ)) but only awarded when the accuracy term is less than perfect, encouraging deeper reasoning only when the model makes mistakes while preventing unnecessary verbosity on already correct outputs.

GRPO optimizes a PPO‑style objective where the advantage is computed relative to the mean reward of a sampled group, and a KL‑penalty keeps the updated policy close to a reference policy. This group‑relative formulation focuses learning on the most informative samples and stabilizes training.

Evaluation spans 20 benchmarks covering both prompt‑harmfulness detection (13 benchmarks across text, image, text‑image, video, and text‑video) and response‑harmfulness detection (7 benchmarks). GuardReasoner‑Omni is released in two sizes: 2 B and 4 B parameters. Across all tasks, the 2 B model achieves an average F1 of 83.84 %, surpassing the best prior multimodal guard (LLaMA Guard) and specialized video anomaly detectors (Holmes‑VAD, Holmes‑VAU) by up to 5.3 percentage points. Notably, while existing multimodal guards suffer a dramatic drop on video benchmarks (≈19.7 pp), GuardReasoner‑Omni maintains high scores (90.24 %–99.47 %). The 4 B model shows comparable performance, indicating that the reasoning‑driven approach scales well without requiring massive parameter counts.

Key technical contributions include: (i) a unified CoT‑based output format that yields interpretable, text‑based rationales for every decision, (ii) a hard‑sample‑centric RL phase that sharpens the model’s boundary‑case performance, and (iii) an error‑driven exploration reward that incentivizes deeper reasoning only when needed, avoiding the pitfalls of simple length penalties.

Limitations are acknowledged: the video component still relies on frame‑level aggregation and may miss subtle temporal dependencies; dependence on a teacher LLM for CoT generation could inherit its biases; and the RL hyperparameters (α, σ, KL‑clip) may need task‑specific tuning. Future work could explore more sophisticated temporal reasoning modules and teacher‑independent CoT creation.

In summary, GuardReasoner‑Omni demonstrates that a reasoning‑first, format‑enforced, two‑stage training pipeline can produce a single, trustworthy guardrail model that excels across text, image, and video safety tasks, setting a new benchmark for multimodal AI safety.

Comments & Academic Discussion

Loading comments...

Leave a Comment