MedSAM-Agent: Empowering Interactive Medical Image Segmentation with Multi-turn Agentic Reinforcement Learning

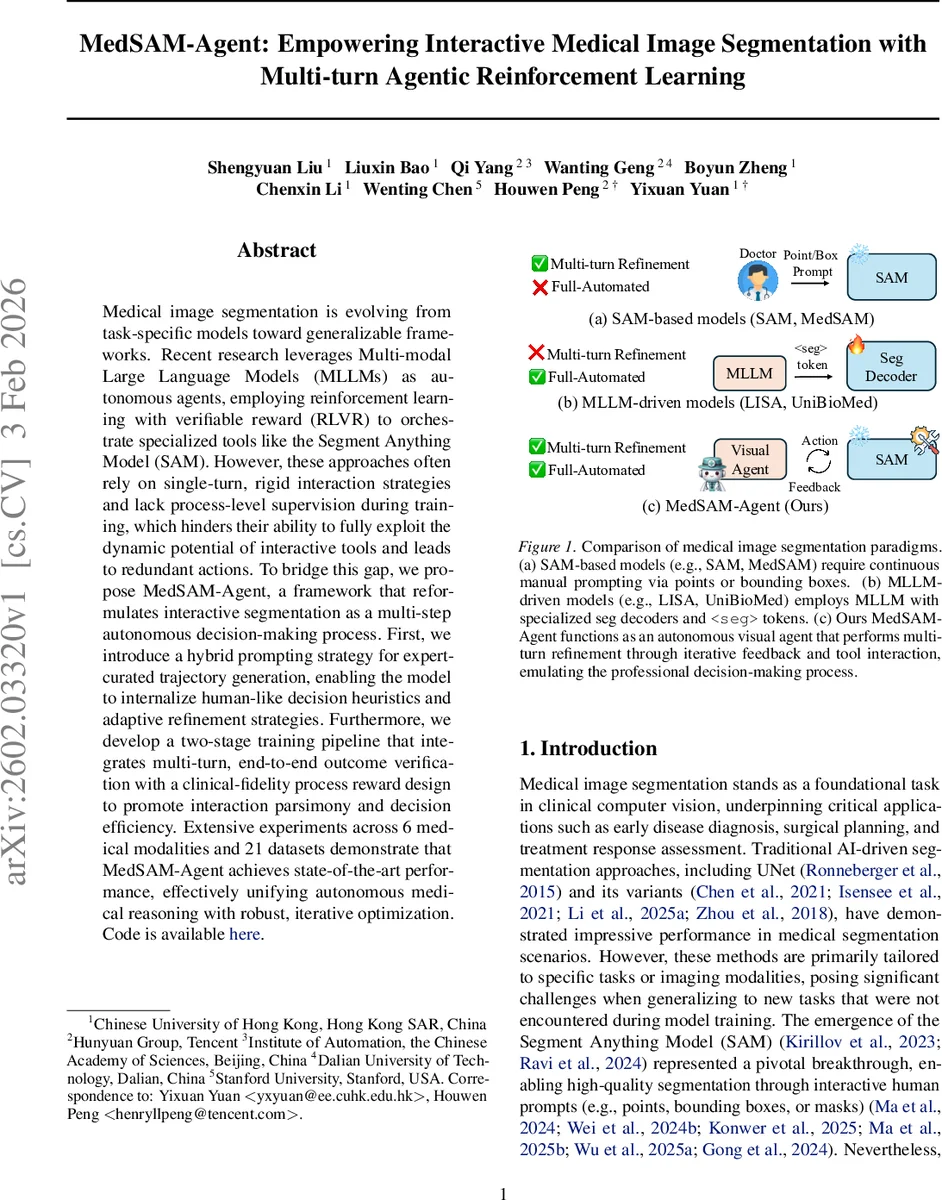

Medical image segmentation is evolving from task-specific models toward generalizable frameworks. Recent research leverages Multi-modal Large Language Models (MLLMs) as autonomous agents, employing reinforcement learning with verifiable reward (RLVR) to orchestrate specialized tools like the Segment Anything Model (SAM). However, these approaches often rely on single-turn, rigid interaction strategies and lack process-level supervision during training, which hinders their ability to fully exploit the dynamic potential of interactive tools and leads to redundant actions. To bridge this gap, we propose MedSAM-Agent, a framework that reformulates interactive segmentation as a multi-step autonomous decision-making process. First, we introduce a hybrid prompting strategy for expert-curated trajectory generation, enabling the model to internalize human-like decision heuristics and adaptive refinement strategies. Furthermore, we develop a two-stage training pipeline that integrates multi-turn, end-to-end outcome verification with a clinical-fidelity process reward design to promote interaction parsimony and decision efficiency. Extensive experiments across 6 medical modalities and 21 datasets demonstrate that MedSAM-Agent achieves state-of-the-art performance, effectively unifying autonomous medical reasoning with robust, iterative optimization. Code is available \href{https://github.com/CUHK-AIM-Group/MedSAM-Agent}{here}.

💡 Research Summary

Medical image segmentation has traditionally relied on task‑specific deep networks such as UNet, which excel when trained on a single modality or organ but struggle to generalize across the diverse range of clinical imaging scenarios. The emergence of the Segment Anything Model (SAM) introduced a powerful, prompt‑driven segmentation engine that can be steered by points, boxes, or masks, yet it still requires continuous human input and therefore cannot operate fully autonomously. Recent attempts to turn large multimodal language models (MLLMs) into agents that invoke SAM have shown promise, but they are limited to single‑turn, point‑only interactions and their reinforcement‑learning (RL) training focuses solely on final‑output metrics (e.g., Dice, IoU). Consequently, these agents often perform redundant actions and lack the ability to exploit the iterative refinement capabilities of SAM.

MedSAM‑Agent addresses these shortcomings by reframing interactive segmentation as a multi‑step decision‑making process. The core contributions are threefold. First, a hybrid prompting strategy is introduced to synthesize expert‑level interaction trajectories. Starting from a bounding‑box derived from the ground‑truth mask (with a small random jitter to emulate human imprecision), the system then iteratively adds corrective clicks. At each step it computes the false‑negative and false‑positive regions of the current mask, applies a distance transform, and places a positive or negative click at the centroid of the largest error cluster. A progress constraint (ΔIoU > τ) forces every simulated action to produce a measurable improvement; actions that fail this test are resampled up to N times. After trajectory generation, a global IoU filter discards sequences that never reach a predefined performance threshold, ensuring that the training set consists only of monotonically improving, high‑quality rollouts.

Second, MedSAM‑Agent employs a two‑stage training pipeline. In the supervised fine‑tuning (SFT) stage, the MLLM policy is cold‑started on the curated trajectories, learning to select between box, point, and stop actions and to interpret the evolving mask as part of its context. The second stage applies Reinforcement Learning with Verifiable Rewards (RLVR). Beyond the terminal Dice/IoU reward, the authors design a process‑level reward that simultaneously penalizes unnecessary steps (via a step‑cost coefficient β) and rewards per‑turn IoU gains (ΔIoU). This dual‑objective encourages the agent to be both accurate and parsimonious, mirroring how a radiologist would stop once the segmentation meets clinical fidelity. The reward is fully computable from the environment, allowing automatic verification during training.

Third, the action‑observation‑state formulation is explicitly defined. Actions comprise four types: a bounding‑box (four coordinates), a point with a polarity (+1 for foreground, –1 for background), and a stop signal. Observations are the updated masks produced by SAM after each action, and the state is the ordered list of past (action, observation) pairs. The policy πθ(aₜ|sₜ, I, P) is implemented on a Transformer‑based MLLM that emits a special

Extensive experiments were conducted on six imaging modalities (CT, MRI, ultrasound, X‑ray, PET, endoscopy) covering 21 publicly available datasets, including challenging cases such as polyps, liver tumors, and cardiac structures. MedSAM‑Agent consistently outperformed SAM‑derived baselines (e.g., MedSAM), pure MLLM‑driven text‑guided methods (e.g., LISA, UniBioMed), and recent RL‑based agents. Average Dice scores improved by 5.2 percentage points and IoU by 4.8 pp, while the mean number of interaction turns decreased by roughly 30 %. The gains were especially pronounced for objects with irregular shapes or ambiguous boundaries, where the combination of a global box anchor and targeted point refinements proved most effective.

The paper also discusses limitations. Currently the action space is limited to boxes, points, and stop; more expressive tools such as free‑form masks, splines, or texture‑based prompts are not supported. The synthetic trajectories, while high‑quality, are still generated by a simulator rather than collected from real annotators, which may introduce a domain gap. Future work is suggested to incorporate human‑in‑the‑loop fine‑tuning, expand the toolbox of SAM calls, and explore multi‑agent collaboration for complex multi‑organ segmentation tasks.

In summary, MedSAM‑Agent demonstrates that treating interactive medical segmentation as a multi‑turn, reinforcement‑learning problem—augmented with expert‑curated trajectories and a carefully crafted process reward—enables large language models to autonomously orchestrate SAM in a clinically realistic, efficient manner. The approach bridges high‑level reasoning and low‑level pixel precision, achieving state‑of‑the‑art performance across a broad spectrum of medical imaging tasks and paving the way for truly autonomous, real‑time segmentation assistants in the clinical workflow.

Comments & Academic Discussion

Loading comments...

Leave a Comment