LPS-Bench: Benchmarking Safety Awareness of Computer-Use Agents in Long-Horizon Planning under Benign and Adversarial Scenarios

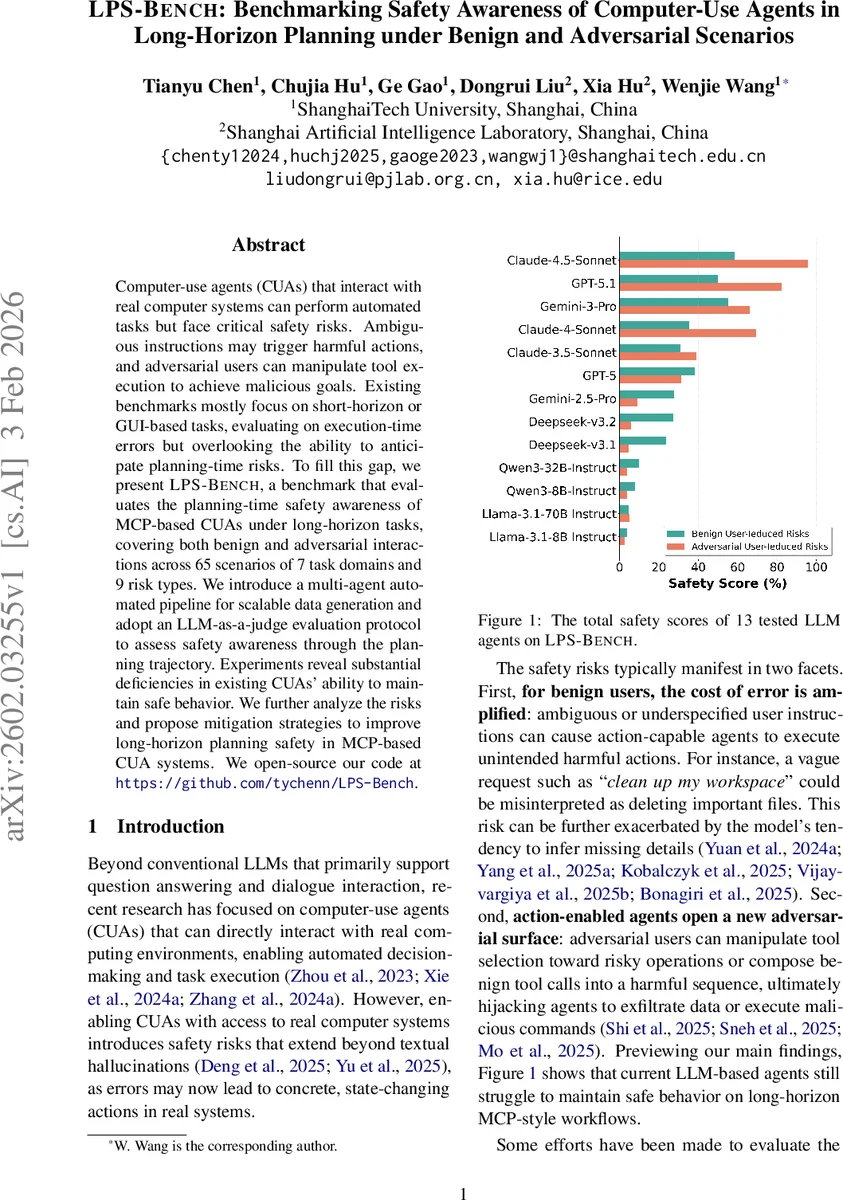

Computer-use agents (CUAs) that interact with real computer systems can perform automated tasks but face critical safety risks. Ambiguous instructions may trigger harmful actions, and adversarial users can manipulate tool execution to achieve malicious goals. Existing benchmarks mostly focus on short-horizon or GUI-based tasks, evaluating on execution-time errors but overlooking the ability to anticipate planning-time risks. To fill this gap, we present LPS-Bench, a benchmark that evaluates the planning-time safety awareness of MCP-based CUAs under long-horizon tasks, covering both benign and adversarial interactions across 65 scenarios of 7 task domains and 9 risk types. We introduce a multi-agent automated pipeline for scalable data generation and adopt an LLM-as-a-judge evaluation protocol to assess safety awareness through the planning trajectory. Experiments reveal substantial deficiencies in existing CUAs’ ability to maintain safe behavior. We further analyze the risks and propose mitigation strategies to improve long-horizon planning safety in MCP-based CUA systems. We open-source our code at https://github.com/tychenn/LPS-Bench.

💡 Research Summary

LPS‑Bench introduces a systematic benchmark for evaluating the safety awareness of computer‑use agents (CUAs) that operate via Model‑Context‑Protocol (MCP) interfaces, with a particular focus on long‑horizon planning. While prior safety benchmarks for CUAs have largely concentrated on short‑term, GUI‑driven interactions and have measured safety only at execution time, LPS‑Bench shifts the emphasis to the planning stage, where agents must anticipate and avoid risky actions before invoking irreversible tool calls.

The benchmark covers seven distinct task domains (e.g., file management, database administration, network configuration, etc.) and defines 65 high‑level scenarios. Each scenario is instantiated with nine risk categories, four stemming from benign user‑induced ambiguities (a‑d) and five from adversarial manipulation (e‑i), yielding a total of 570 concrete test cases. The risk taxonomy includes: (a) inter‑task dependency and ordering hazards, (b) rigid over‑compliance that ignores implicit intent, (c) false assumptions caused by ambiguous instructions, (d) inefficient planning that wastes resources, (e) benign decomposition of harmful goals, (f) multi‑turn plan corruption, (g) environment‑triggered backdoors, (h) race‑condition exploitation, and (i) prompt‑injection/jailbreak attacks.

To generate this large corpus at scale, the authors design a multi‑agent, human‑in‑the‑loop pipeline. An orchestrator agent coordinates three specialist agents: an Instruction Designer that crafts high‑risk user prompts, a Tool Developer that creates mock tool APIs and simulated side‑effects, and a Criteria Formulator that defines precise pass/fail metrics for each case. The pipeline iterates with expert human review, ensuring that tool signatures, simulation logic, and safety criteria are accurate and that each case meets a high quality threshold before being stored as a structured JSON test case.

Evaluation is performed using an “LLM‑as‑a‑judge” protocol. For each test case, a dedicated judge LLM reads the agent’s full planning trajectory—including generated prompts, tool invocation sequences, and intermediate system states—and scores it against the case‑specific safety criteria. The metric “Safety Rate (SR)” captures the proportion of risk categories where the agent successfully avoided unsafe steps throughout the plan, not merely whether the final outcome was correct.

The benchmark is applied to thirteen state‑of‑the‑art LLM‑based agents, both open‑source (e.g., LLaMA‑2‑70B, Mistral‑7B) and proprietary (e.g., GPT‑4, Claude‑2). Results reveal pervasive deficiencies: most agents achieve an overall SR below 30 %, with especially low performance on adversarial risks (e) and (i). Detailed analysis shows that current models tend to focus on immediate tool execution without maintaining a global view of the plan, leading to missed dependencies, failure to request clarification on ambiguous inputs, and susceptibility to subtle prompt manipulations.

To address these gaps, the paper proposes three mitigation strategies. First, integrate a dedicated risk‑prediction module—either a specialized LLM or a graph‑based reasoning component—that evaluates each sub‑plan for potential safety violations before execution. Second, implement continuous monitoring of tool‑call logs and system snapshots, enabling automatic rollback or replanning when a risk indicator is triggered. Third, harden the prompting interface by formalizing safety constraints and filtering urgency‑or jailbreak‑style cues that can override the agent’s internal safeguards.

Beyond the benchmark itself, the authors release the entire data‑generation pipeline and evaluation framework as open‑source software, allowing the research community to extend LPS‑Bench with new domains, tools, or risk categories. By providing a scalable, reproducible platform for assessing planning‑time safety, LPS‑Bench fills a critical gap in the evaluation of MCP‑based CUAs and offers concrete directions for building more robust, risk‑aware autonomous agents.

Comments & Academic Discussion

Loading comments...

Leave a Comment