Spiral RoPE: Rotate Your Rotary Positional Embeddings in the 2D Plane

Rotary Position Embedding (RoPE) is the de facto positional encoding in large language models due to its ability to encode relative positions and support length extrapolation. When adapted to vision transformers, the standard axial formulation decomposes two-dimensional spatial positions into horizontal and vertical components, implicitly restricting positional encoding to axis-aligned directions. We identify this directional constraint as a fundamental limitation of the standard axial 2D RoPE, which hinders the modeling of oblique spatial relationships that are common in natural images. To overcome this limitation, we propose Spiral RoPE, a simple yet effective extension that enables multi-directional positional encoding by partitioning embedding channels into multiple groups associated with uniformly distributed directions. Each group is rotated according to the projection of the patch position onto its corresponding direction, allowing spatial relationships to be encoded beyond the horizontal and vertical axes. Across a wide range of vision tasks, including classification, segmentation, and generation, Spiral RoPE consistently improves performance. Qualitative analysis of attention maps further shows that Spiral RoPE exhibits more concentrated activations on semantically relevant objects and better respects local object boundaries, highlighting the importance of multi-directional positional encoding in vision transformers.

💡 Research Summary

Paper Overview

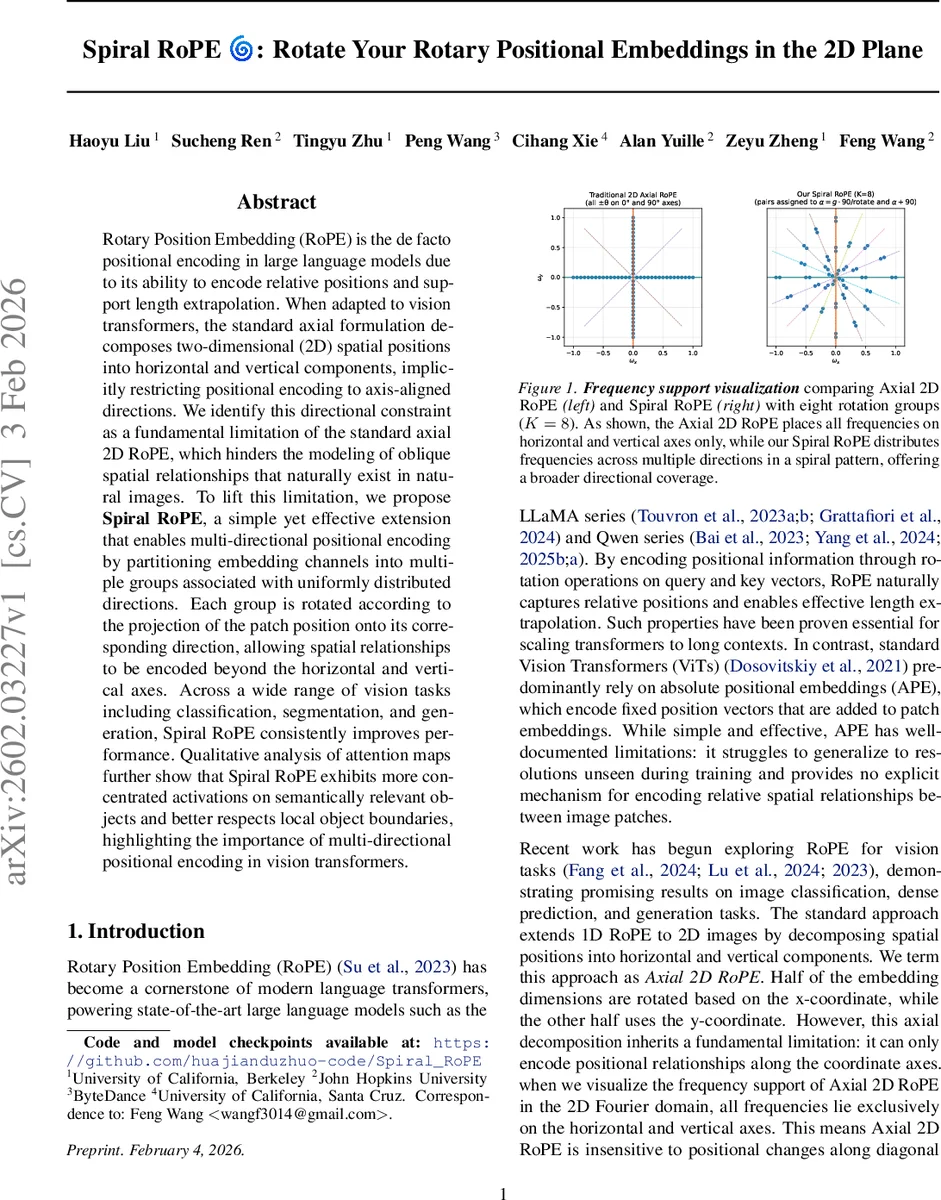

Rotary Position Embedding (RoPE) has become the de facto positional encoding for large language models because it encodes relative positions and supports length extrapolation. When RoPE is transferred to vision transformers, the common practice is to split the 2-D spatial coordinate into an x-component and a y-component and apply 1-D RoPE independently to each half of the embedding channels. This “axial” formulation places every rotary frequency on either the horizontal or the vertical axis of the Fourier domain, so each rotated channel pair responds to displacement along only one axis. This limits the encoding's ability to represent the oblique and diagonal relationships that are abundant in natural images.
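To make the axial layout concrete, here is a minimal Python sketch under stated assumptions: channel pairs are treated as complex numbers, the first half of the pairs is rotated by θᵢ·x and the second half by θᵢ·y, and the frequency ladder θᵢ = base^(-i/n) with base = 100 is an illustrative choice, not a value taken from the paper. The names `axial_rope` and `score` are hypothetical. The usage at the end checks the relative-position property: two query/key pairs with the same spatial offset receive the same attention logit.

```python
import cmath
import random


def axial_rope(vec, x, y, base=100.0):
    """Hypothetical axial 2-D RoPE for one token at patch position (x, y).

    `vec` is a real vector whose length is divisible by 4. Its channel pairs
    are viewed as complex numbers; the first half of the pairs is rotated by
    theta_i * x and the second half by theta_i * y.
    """
    pairs = [complex(vec[2 * j], vec[2 * j + 1]) for j in range(len(vec) // 2)]
    half = len(pairs) // 2
    # Geometric frequency ladder as in 1-D RoPE; base=100 is an assumption.
    thetas = [base ** (-i / half) for i in range(half)]
    x_part = [pairs[i] * cmath.exp(1j * thetas[i] * x) for i in range(half)]
    y_part = [pairs[half + i] * cmath.exp(1j * thetas[i] * y) for i in range(half)]
    return x_part + y_part


def score(q_rot, k_rot):
    # Real dot product of the rotated real vectors, written in complex form:
    # u0*v0 + u1*v1 == Re((u0 + i*u1) * conj(v0 + i*v1)).
    return sum((a * b.conjugate()).real for a, b in zip(q_rot, k_rot))


random.seed(0)
q = [random.gauss(0, 1) for _ in range(16)]  # 8 channel pairs: 4 for x, 4 for y
k = [random.gauss(0, 1) for _ in range(16)]
s1 = score(axial_rope(q, 3, 5), axial_rope(k, 1, 2))
s2 = score(axial_rope(q, 12, 9), axial_rope(k, 10, 6))
print(abs(s1 - s2) < 1e-9)  # True: both query/key pairs are offset by (2, 3)
```

Because the x-half never sees y and the y-half never sees x, every rotation in this layout is tied to a single axis, which is exactly the restriction described above.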

Key Insight

The authors identify this directional constraint as a fundamental limitation: each frequency component of axial RoPE responds only to positional changes along a single coordinate axis. Visualizing the frequency support shows that only vectors of the form (±θ, 0) and (0, ±θ) are used, and a Fourier reconstruction experiment shows severe artifacts when these axis-aligned frequencies are used to recover circular patterns.
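The axis-only frequency support has a simple algebraic consequence that can be checked numerically. This is an illustration added here, not the paper's Fourier reconstruction experiment. With frequency vectors of the form (θ, 0) and (0, θ), the positional part of an axial RoPE logit splits into f(Δx) + g(Δy), and any such additively separable function satisfies h(a, c) + h(b, d) = h(a, d) + h(b, c). A frequency vector pointing at 45°, which axial RoPE never uses, depends on Δx and Δy jointly and breaks this identity. The helper names `positional_score` and `rectangle_gap` are hypothetical.

```python
import cmath
import math
import random


def positional_score(q, k, freqs, dx, dy):
    """Attention logit between complex channel pairs q and k at relative offset
    (dx, dy), where pair j carries the 2-D frequency vector freqs[j] = (wx, wy)
    and is rotated by the angle wx * dx + wy * dy."""
    return sum(
        (qj * kj.conjugate() * cmath.exp(1j * (wx * dx + wy * dy))).real
        for qj, kj, (wx, wy) in zip(q, k, freqs)
    )


def rectangle_gap(h, a, b, c, d):
    # h(u, v) = f(u) + g(v) for some f, g exactly when this is zero for all a, b, c, d.
    return h(a, c) + h(b, d) - h(a, d) - h(b, c)


thetas = [1.0, 0.3, 0.1]
# Axial support: only (theta, 0) and (0, theta).
axial = [(t, 0.0) for t in thetas] + [(0.0, t) for t in thetas]
# Same magnitudes, but every frequency vector points at 45 degrees.
c45 = math.cos(math.pi / 4)
diagonal = [(t * c45, t * c45) for t in thetas] * 2

random.seed(1)
q = [complex(random.gauss(0, 1), random.gauss(0, 1)) for _ in range(6)]
k = [complex(random.gauss(0, 1), random.gauss(0, 1)) for _ in range(6)]


def axial_h(u, v):
    return positional_score(q, k, axial, u, v)


def diagonal_h(u, v):
    return positional_score(q, k, diagonal, u, v)


print(abs(rectangle_gap(axial_h, 0, 3, 0, 4)))     # zero up to floating-point rounding
print(abs(rectangle_gap(diagonal_h, 0, 3, 0, 4)))  # not separable, so in general nonzero
```

For fixed query and key content, no choice of axial frequencies can make the gap nonzero, which is one way to read the claim that axial RoPE only represents positional changes along the coordinate axes.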

Spiral RoPE Proposal

Spiral RoPE removes the axis-only restriction by partitioning the embedding dimension into K equal groups, each associated with a uniformly sampled direction ϕₖ ∈
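The grouping described above can be sketched in Python as follows. The sentence defining the range of ϕₖ is cut off in this summary, so the sketch assumes K directions spaced uniformly over [0, π), that is ϕₖ = kπ/K (a direction and its opposite would only flip the sign of the rotation angle), and it assumes every group reuses the same frequency ladder with base = 100. Both choices are assumptions, not details confirmed by the source, and `spiral_rope` and `score` are hypothetical names. Each group rotates its channel pairs by θᵢ·(x cos ϕₖ + y sin ϕₖ), the projection of the patch position onto its direction, as the abstract describes.

```python
import cmath
import math
import random


def spiral_rope(vec, x, y, num_dirs, base=100.0):
    """Hypothetical Spiral RoPE sketch for one token at patch position (x, y).

    The channel pairs of `vec` are split into `num_dirs` equal groups; group g
    is tied to direction phi_g and rotated by theta_i * (x cos phi_g + y sin phi_g).
    """
    pairs = [complex(vec[2 * j], vec[2 * j + 1]) for j in range(len(vec) // 2)]
    if len(pairs) % num_dirs:
        raise ValueError("channel pairs must divide evenly into num_dirs groups")
    per_group = len(pairs) // num_dirs
    # ASSUMPTION: every group reuses the same geometric frequency ladder.
    thetas = [base ** (-i / per_group) for i in range(per_group)]
    rotated = []
    for group in range(num_dirs):
        # ASSUMPTION: directions spaced uniformly over [0, pi).
        phi = math.pi * group / num_dirs
        proj = x * math.cos(phi) + y * math.sin(phi)  # projection onto direction phi
        for i, theta in enumerate(thetas):
            rotated.append(pairs[group * per_group + i] * cmath.exp(1j * theta * proj))
    return rotated


def score(q_rot, k_rot):
    # Real dot product of the rotated real vectors, written in complex form.
    return sum((a * b.conjugate()).real for a, b in zip(q_rot, k_rot))


random.seed(0)
q = [random.gauss(0, 1) for _ in range(32)]  # 16 channel pairs -> 4 groups of 4
k = [random.gauss(0, 1) for _ in range(32)]
# Same relative offset (2, 3) at two different absolute locations.
s1 = score(spiral_rope(q, 3, 5, 4), spiral_rope(k, 1, 2, 4))
s2 = score(spiral_rope(q, 12, 9, 4), spiral_rope(k, 10, 6, 4))
print(abs(s1 - s2) < 1e-9)  # True
```

Under these assumptions, K = 2 gives the directions 0 and π/2, so the projections reduce to x and y and the sketch collapses to the axial layout, while K = 4 adds the diagonals π/4 and 3π/4. Because each group's rotation angle is linear in the position, the logit still depends only on the relative offset.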
