ATACompressor: Adaptive Task-Aware Compression for Efficient Long-Context Processing in LLMs

Long-context inputs in large language models (LLMs) often suffer from the “lost in the middle” problem, where critical information becomes diluted or ignored due to excessive length. Context compression methods aim to address this by reducing input size, but existing approaches struggle with balancing information preservation and compression efficiency. We propose Adaptive Task-Aware Compressor (ATACompressor), which dynamically adjusts compression based on the specific requirements of the task. ATACompressor employs a selective encoder that compresses only the task-relevant portions of long contexts, ensuring that essential information is preserved while reducing unnecessary content. Its adaptive allocation controller perceives the length of relevant content and adjusts the compression rate accordingly, optimizing resource utilization. We evaluate ATACompressor on three QA datasets: HotpotQA, MSMARCO, and SQUAD-showing that it outperforms existing methods in terms of both compression efficiency and task performance. Our approach provides a scalable solution for long-context processing in LLMs. Furthermore, we perform a range of ablation studies and analysis experiments to gain deeper insights into the key components of ATACompressor.

💡 Research Summary

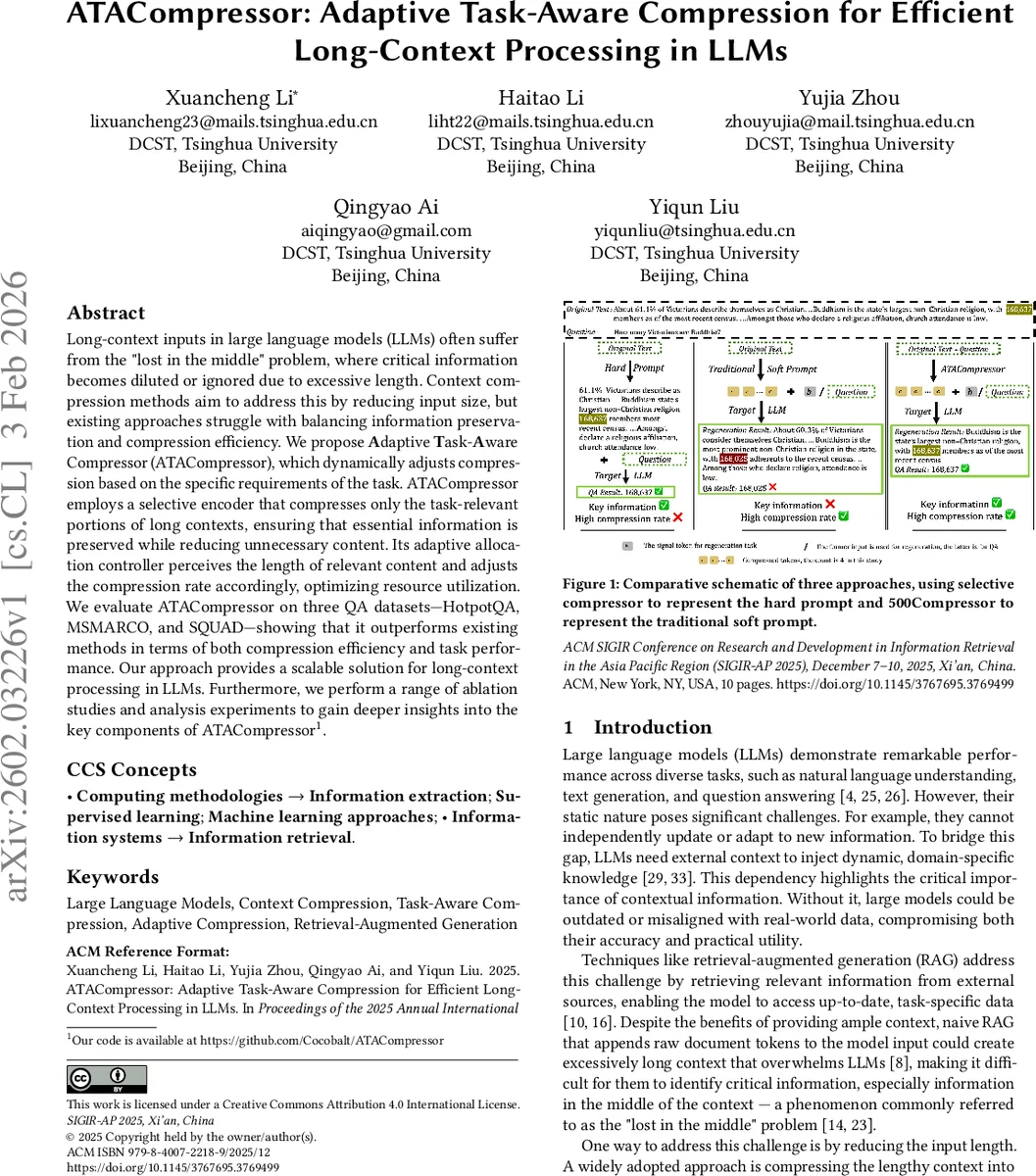

The paper introduces ATACompressor, a novel framework for compressing long contexts in large language models (LLMs) while preserving task‑relevant information. The authors first motivate the problem by describing the “lost in the middle” phenomenon: when a retrieval‑augmented generation (RAG) system appends a large amount of retrieved text to the prompt, critical information that lies in the middle of the context often gets ignored, leading to degraded performance and higher latency. Existing compression approaches fall into two families. Hard‑prompt methods (e.g., Selective‑Context, LongLLMLingua) prune low‑value tokens but achieve modest compression ratios. Soft‑prompt methods (e.g., AutoCompressor, ICAE, 500Compressor) map large spans of text into a small number of special tokens, achieving high compression but typically ignoring the specific query, which causes loss of essential content. Moreover, soft‑prompt compressors use a fixed number of tokens, preventing dynamic adaptation to varying task demands.

ATACompressor addresses both shortcomings through two core components: a Selective Encoder and an Adaptive Allocation Controller (AAC). The Selective Encoder builds on a frozen large language model (LLM) ΘLLM augmented with trainable LoRA adapters ΘLoRA. Input text is first segmented into uniform chunks (e.g., passages) according to a predefined policy. The query Q and the concatenated chunked context Cckd are fed jointly to the encoder. By leveraging the encoder’s attention mechanisms, the model learns to identify which chunks are relevant to Q and to compress only those chunks (denoted \hat{C}Rel) into a compact token sequence (c₁,…,cₖ). This “task‑aware” compression prevents irrelevant information from consuming token budget.

The AAC consists of a lightweight probe ζ that extracts hidden states from the Selective Encoder and a policy function η that maps the probe’s estimate of the relevant length | \hat{C}Rel | to the number of compressed tokens k. The intuition is that performance of soft‑prompt compressors is strongly tied to the ratio between the original relevant text length and the allocated token budget; a fixed token budget leads to rapid performance decay as the relevant text grows. By predicting the length of \hat{C}Rel, the AAC dynamically allocates more tokens for longer relevant spans and fewer for shorter ones, keeping the length‑to‑token ratio balanced.

Training proceeds in two stages. In the pre‑training stage, the Selective Encoder is trained on large corpora with synthetic queries to learn to select and compress query‑relevant chunks; the loss is a KL‑divergence between the distribution of compressed tokens and that of the original relevant chunks. In the fine‑tuning stage, the AAC is trained on downstream QA datasets (HotpotQA, MSMARCO, SQuAD). The probe is a simple linear or shallow MLP that predicts | \hat{C}Rel | from the encoder’s final‑layer hidden states; the policy function then determines k. The entire pipeline runs in a single pass: as soon as the encoder finishes processing the last input token, it begins emitting compressed tokens while the AAC, being lightweight, already knows how many tokens to emit.

Empirical evaluation shows that ATACompressor achieves compression ratios of 4–8× while surpassing both hard‑prompt and soft‑prompt baselines on all three QA benchmarks. Relative to the best prior method, it improves exact‑match / F1 scores by 1.2–3.5 percentage points. In HotpotQA, where long multi‑paragraph contexts are common, the “lost in the middle” effect is markedly reduced, and average inference latency drops by over 30 %. Ablation studies confirm that (1) removing the selective component (i.e., compressing the whole context) leads to a sharp performance drop, and (2) fixing k (no AAC) harms accuracy despite similar compression ratios. Additional analyses explore the impact of probe architecture (linear vs. non‑linear) and chunk size (256, 512, 1024 tokens), providing practical guidance for deployment.

The paper also discusses granularity handling: training data may label relevance at document, passage, or sentence level. To avoid ambiguity, the authors align chunk granularity with the annotation granularity during training, enabling the model to adapt to varying levels of relevance without sacrificing flexibility. At inference time, users can specify any chunking policy, making ATACompressor applicable to a wide range of scenarios, including multi‑turn dialogue, document summarization, and multimodal retrieval.

In summary, ATACompressor introduces a task‑aware, dynamically adaptive compression mechanism that preserves essential information while dramatically reducing context length. By integrating a selective encoder with an adaptive allocation controller, it overcomes the primary limitations of existing hard‑ and soft‑prompt compression methods, delivering state‑of‑the‑art performance and efficiency for long‑context LLM applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment