DynSplit-KV: Dynamic Semantic Splitting for KVCache Compression in Efficient Long-Context LLM Inference

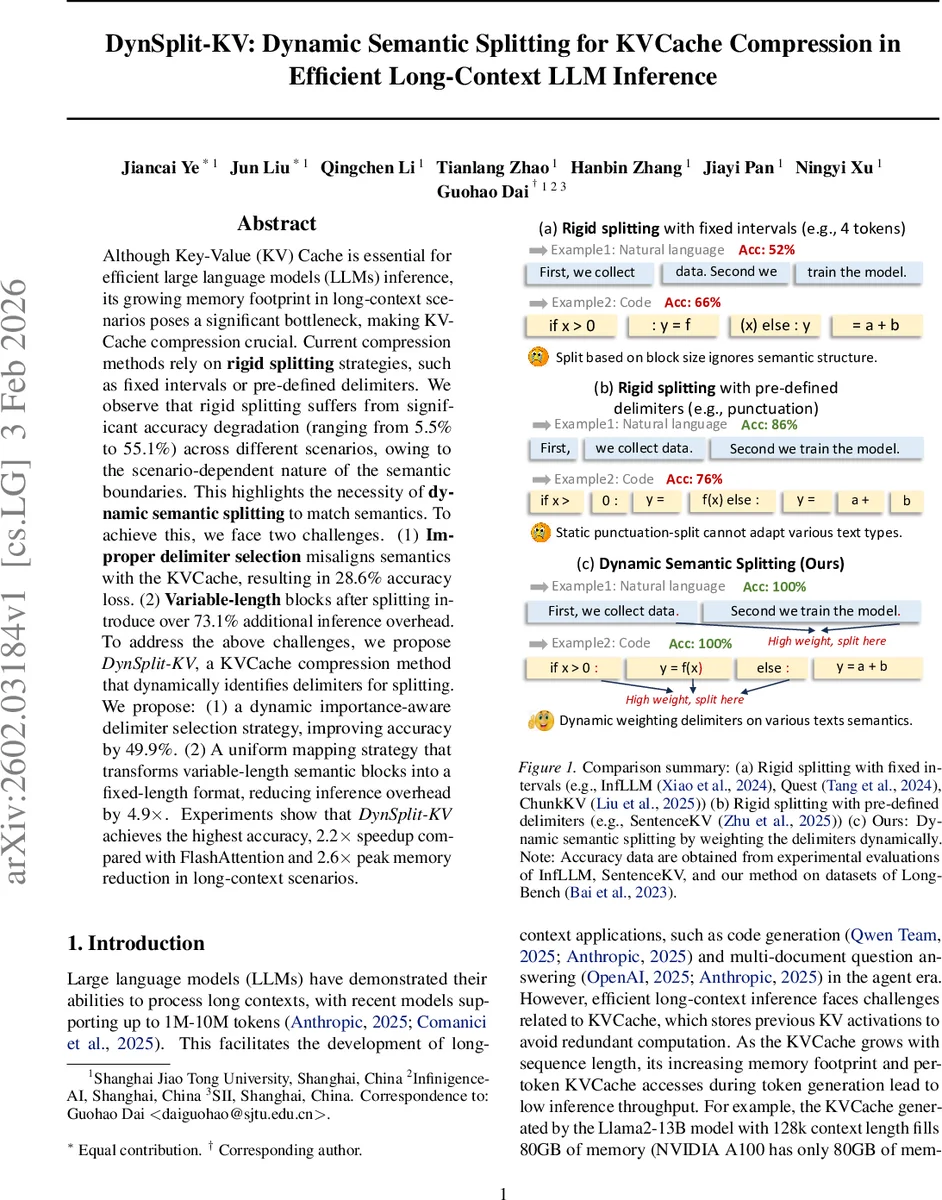

Although Key-Value (KV) Cache is essential for efficient large language models (LLMs) inference, its growing memory footprint in long-context scenarios poses a significant bottleneck, making KVCache compression crucial. Current compression methods rely on rigid splitting strategies, such as fixed intervals or pre-defined delimiters. We observe that rigid splitting suffers from significant accuracy degradation (ranging from 5.5% to 55.1%) across different scenarios, owing to the scenario-dependent nature of the semantic boundaries. This highlights the necessity of dynamic semantic splitting to match semantics. To achieve this, we face two challenges. (1) Improper delimiter selection misaligns semantics with the KVCache, resulting in 28.6% accuracy loss. (2) Variable-length blocks after splitting introduce over 73.1% additional inference overhead. To address the above challenges, we propose DynSplit-KV, a KVCache compression method that dynamically identifies delimiters for splitting. We propose: (1) a dynamic importance-aware delimiter selection strategy, improving accuracy by 49.9%. (2) A uniform mapping strategy that transforms variable-length semantic blocks into a fixed-length format, reducing inference overhead by 4.9x. Experiments show that DynSplit-KV achieves the highest accuracy, 2.2x speedup compared with FlashAttention and 2.6x peak memory reduction in long-context scenarios.

💡 Research Summary

Large language models (LLMs) have pushed the limits of context length to millions of tokens, but the KV‑Cache that stores past key‑value pairs grows linearly with sequence length, becoming the dominant memory consumer and latency source during inference. For example, a 13‑billion‑parameter Llama‑2 model with a 128 k token context fills the full 80 GB of an A100 GPU. Existing KV‑Cache compression techniques fall into two categories: (1) fixed‑interval splitting, which chops the cache into equal‑size blocks regardless of semantics, and (2) pre‑defined delimiter splitting, which uses punctuation or line breaks to approximate sentence boundaries. Both approaches ignore the scenario‑dependent nature of semantic boundaries, leading to severe accuracy drops ranging from 5.5 % to 55.1 % across tasks such as natural‑language QA, code generation, and multi‑document retrieval.

The paper identifies two concrete challenges. First, an ill‑chosen delimiter misaligns semantic units with the KV‑Cache, causing up to a 28.6 % loss in downstream accuracy. Second, variable‑length blocks produced by semantic splitting introduce irregular memory layouts that prevent efficient block‑wise scoring; the KV‑selection stage then incurs more than 73 % extra compute overhead. To overcome these issues, the authors propose DynSplit‑KV, which consists of two novel components: (i) DD‑Select, a dynamic importance‑aware delimiter selection strategy, and (ii) V2F, a uniform mapping that converts variable‑length blocks into fixed‑length representations.

Delimiter importance scoring. For each candidate delimiter position i, the method defines three regions: (a) a retained region Oᵢ consisting of the R tokens immediately before i, (b) a discarded region Dᵢ containing all earlier tokens, and (c) a future window Fᵢ covering the next W tokens after i. Using the multi‑head, multi‑layer attention matrices A(l,h) from the transformer, the importance score is computed as

sᵢ = Σₗ,ₕ Σ_{q∈Fᵢ} Σ_{k∈Oᵢ} A(l,h){q,k} − α·Σₗ,ₕ Σ{q∈Fᵢ} Σ_{k∈Dᵢ} A(l,h)_{q,k}.

The first term measures how much the future tokens attend to the recent context (a sign of a good semantic boundary), while the second term penalizes long‑range dependencies. Empirically, the same delimiter can have a 45 %–55 % score variance between code and prose, and up to 49 % variance across different model families, confirming that static delimiters are insufficient.

DD‑Select. Given a target chunk size C and a tolerance Δ, DD‑Select slides a window around the ideal end sₑ = s_c + C (where s_c is the current start). For each delimiter e inside

Comments & Academic Discussion

Loading comments...

Leave a Comment