MUSE: A Multi-agent Framework for Unconstrained Story Envisioning via Closed-Loop Cognitive Orchestration

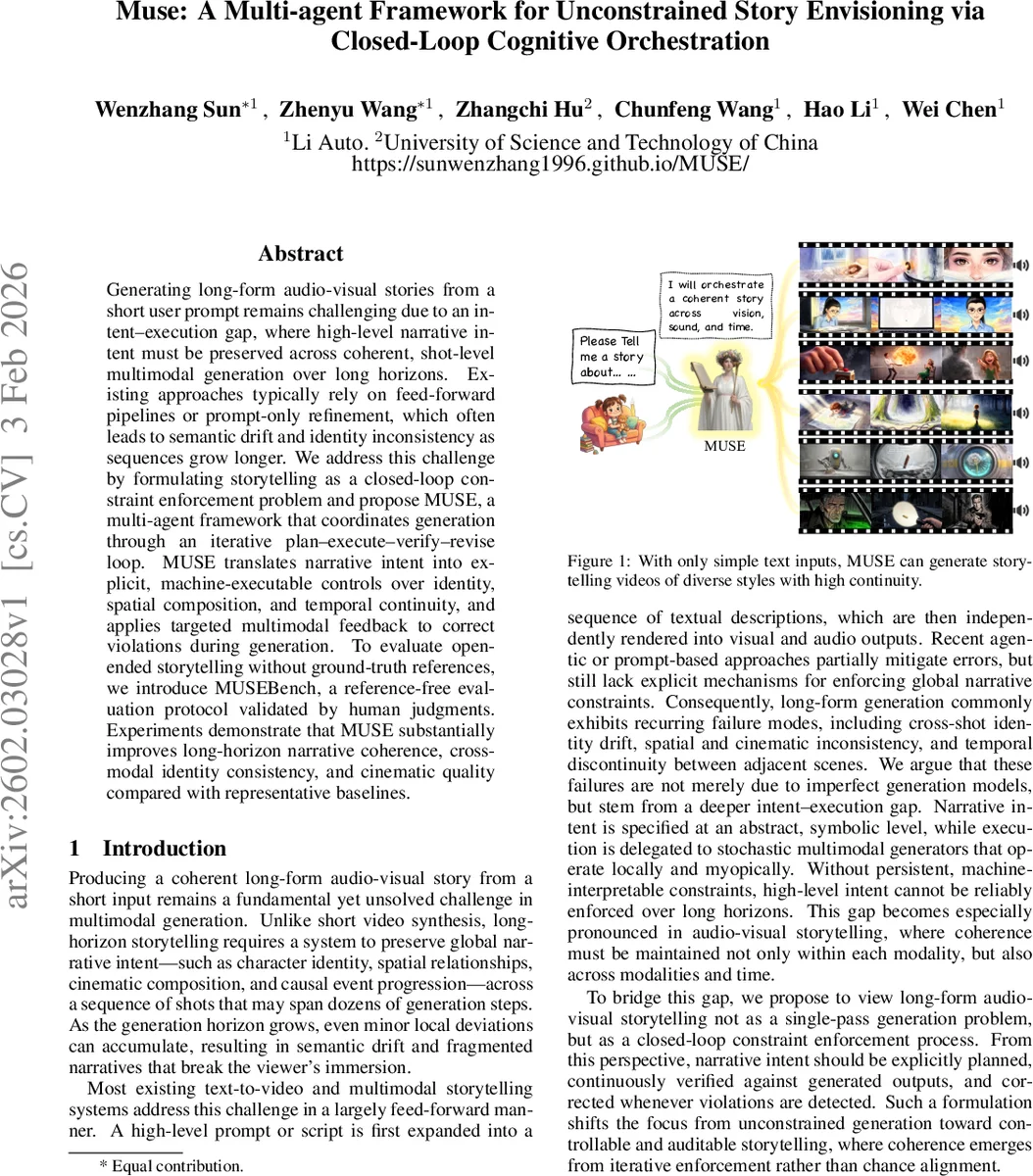

Generating long-form audio-visual stories from a short user prompt remains challenging due to an intent-execution gap, where high-level narrative intent must be preserved across coherent, shot-level multimodal generation over long horizons. Existing approaches typically rely on feed-forward pipelines or prompt-only refinement, which often leads to semantic drift and identity inconsistency as sequences grow longer. We address this challenge by formulating storytelling as a closed-loop constraint enforcement problem and propose MUSE, a multi-agent framework that coordinates generation through an iterative plan-execute-verify-revise loop. MUSE translates narrative intent into explicit, machine-executable controls over identity, spatial composition, and temporal continuity, and applies targeted multimodal feedback to correct violations during generation. To evaluate open-ended storytelling without ground-truth references, we introduce MUSEBench, a reference-free evaluation protocol validated by human judgments. Experiments demonstrate that MUSE substantially improves long-horizon narrative coherence, cross-modal identity consistency, and cinematic quality compared with representative baselines.

💡 Research Summary

The paper tackles the long‑standing problem of generating coherent, long‑form audio‑visual stories from a brief textual prompt. Existing text‑to‑video and multimodal storytelling pipelines typically follow a feed‑forward approach: a high‑level prompt is expanded into a sequence of shot‑level textual descriptions, each of which is rendered independently into visual and audio outputs. Because no step checks the outputs against the original intent, errors accumulate over dozens of shots and show up as character identity drift, spatial and cinematic inconsistencies, and temporal discontinuities that break narrative immersion.

To bridge this “intent‑execution gap”, the authors propose MUSE (Multi‑agent Framework for Unconstrained Story Envisioning), a modular system that treats storytelling as a closed‑loop constraint‑enforcement process. The core idea is a four‑stage iterative loop: plan → execute → verify → revise. First, the user’s short prompt is transformed into a structured script S = {s₁,…,s_N}, where each segment s_i contains explicit visual intent (characters, scene, camera) and audio intent (narration or dialogue).
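To make the script representation concrete, here is a minimal sketch of S as plain Python data classes. The field names (`characters`, `scene`, `camera`, `audio_kind`, `speaker`) and the rule that a dialogue speaker must be on screen are hypothetical choices for illustration; the summary only states that each segment carries visual intent (characters, scene, camera) and audio intent (narration or dialogue).

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Segment:
    """One script segment s_i with explicit visual and audio intent (hypothetical schema)."""
    characters: List[str]   # who is on screen
    scene: str              # where the shot takes place
    camera: str             # framing / camera motion
    audio_kind: str         # "narration" or "dialogue"
    text: str               # line to be spoken
    speaker: str = ""       # empty for narration

    def __post_init__(self) -> None:
        # Reject intents that a downstream agent could not execute unambiguously
        # (an assumed validation rule, not one stated in the paper).
        if self.audio_kind not in ("narration", "dialogue"):
            raise ValueError(f"unknown audio_kind: {self.audio_kind!r}")
        if self.audio_kind == "dialogue" and self.speaker not in self.characters:
            raise ValueError("a dialogue speaker must be one of the shot's characters")


@dataclass
class Script:
    """S = {s_1, ..., s_N}."""
    segments: List[Segment] = field(default_factory=list)

    def cast(self) -> List[str]:
        """Every character, in order of first appearance."""
        seen: List[str] = []
        for seg in self.segments:
            for name in seg.characters:
                if name not in seen:
                    seen.append(name)
        return seen


script = Script([
    Segment(["Mira"], "lighthouse at dusk", "wide establishing shot",
            "narration", "The storm was coming."),
    Segment(["Mira", "Tomas"], "lighthouse stairs", "medium two-shot",
            "dialogue", "Did you hear that?", speaker="Tomas"),
])
print(script.cast())  # ['Mira', 'Tomas']
```

Validating segments at construction time is one way to keep the controls "machine-executable": a malformed intent fails immediately instead of surfacing later as a generation error.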

Pre‑production (identity anchoring). A script planner extracts the full set of characters and creates persistent multimodal identity anchors: a visual anchor z_c^vis generated from textual descriptors (age, attire, style) and a vocal anchor z_c^voc produced by a Vocal Trait Synthesis (VTS) module that maps semantic voice attributes (gender, timbre, speaking style) to acoustic parameters. These anchors are stored in a global memory H and reused throughout the story, eliminating the need for reference images or audio clips and preventing early identity errors from propagating.
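The anchoring step can be pictured as a write-once store. In this sketch the visual anchor is reduced to a text prompt and the vocal anchor to a (pitch, speaking rate) pair. `IdentityMemory`, `synthesize_vocal_traits`, and the lookup values are hypothetical stand-ins, since the summary does not describe the actual representations of z_c^vis, z_c^voc, or the VTS module.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

# Hypothetical VTS lookup tables: semantic attribute -> acoustic parameter.
# The numbers are illustrative placeholders, not values from the paper.
PITCH_HZ = {"female": 210.0, "male": 120.0}
RATE_WORDS_PER_SEC = {"calm": 2.2, "energetic": 3.0}


@dataclass(frozen=True)
class IdentityAnchor:
    visual_prompt: str                 # stand-in for the visual anchor z_c^vis
    vocal_params: Tuple[float, float]  # stand-in for z_c^voc: (pitch_hz, words_per_sec)


def synthesize_vocal_traits(gender: str, style: str) -> Tuple[float, float]:
    """Toy vocal trait synthesis: map semantic voice attributes to acoustic parameters."""
    return (PITCH_HZ[gender], RATE_WORDS_PER_SEC[style])


class IdentityMemory:
    """Global memory H: each character's anchor is written once, then only read."""

    def __init__(self) -> None:
        self._anchors: Dict[str, IdentityAnchor] = {}

    def register(self, name: str, anchor: IdentityAnchor) -> None:
        if name in self._anchors:
            raise ValueError(f"anchor for {name!r} is already frozen")
        self._anchors[name] = anchor

    def get(self, name: str) -> IdentityAnchor:
        return self._anchors[name]


H = IdentityMemory()
H.register("Mira", IdentityAnchor(
    visual_prompt="young woman, yellow raincoat, watercolor style",
    vocal_params=synthesize_vocal_traits("female", "calm"),
))
print(H.get("Mira").vocal_params)  # (210.0, 2.2)
```

Making both the anchor (`frozen=True`) and its registration immutable mirrors the idea that later shots read identity from H rather than re-deriving it, which is what stops early drift from compounding.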

Production (layout‑aware asset synthesis). For each shot, a layout planner builds a coarse bounding‑box layout L_i that encodes the desired spatial arrangement of characters and camera motion. This layout is injected as a hard prior into a diffusion‑based image generator, constraining entities to the intended positions and scales. A dedicated verification module evaluates “composition_integrity” by checking expected object counts, duplicate detections, and semantic coherence. Violations trigger targeted re‑generation of the layout or a copy‑paste style correction rather than a full resample of the shot. In parallel, the audio agent synthesizes speech conditioned on the frozen vocal anchors, keeping each character’s voice consistent across all dialogue.
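A rough sketch of the count and duplicate parts of a `composition_integrity` check, assuming a detector that returns `(label, box)` pairs. The function name echoes the summary, but its signature, the IoU-based duplicate test, and the 0.7 threshold are assumptions; the semantic-coherence part of the check is not modeled here.

```python
from collections import Counter
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x0, y0, x1, y1) in normalized image coordinates


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix0, iy0 = max(a[0], b[0]), max(a[1], b[1])
    ix1, iy1 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix1 - ix0) * max(0.0, iy1 - iy0)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def composition_integrity(expected: Dict[str, int],
                          detections: List[Tuple[str, Box]],
                          dup_iou: float = 0.7) -> List[str]:
    """Return violation strings; an empty list means the shot passed."""
    violations: List[str] = []
    counts = Counter(label for label, _ in detections)
    # Count check: each planned entity appears exactly as often as planned,
    # and no unplanned entity appears at all.
    for label in sorted(set(expected) | set(counts)):
        want, got = expected.get(label, 0), counts[label]
        if got != want:
            violations.append(f"count_mismatch:{label}:{got}!={want}")
    # Duplicate check: two same-label boxes that overlap heavily.
    for i, (label_a, box_a) in enumerate(detections):
        for label_b, box_b in detections[i + 1:]:
            if label_a == label_b and iou(box_a, box_b) >= dup_iou:
                violations.append(f"duplicate:{label_a}")
    return violations


expected = {"Mira": 1, "Tomas": 1}
detections = [
    ("Mira", (0.10, 0.2, 0.40, 0.9)),
    ("Mira", (0.12, 0.2, 0.42, 0.9)),  # near-copy of the first box
    ("Tomas", (0.60, 0.2, 0.90, 0.9)),
]
print(composition_integrity(expected, detections))
# ['count_mismatch:Mira:2!=1', 'duplicate:Mira']
```

Returning named violations rather than a single pass/fail bit is what allows the targeted repairs described above: a duplicate points at one entity, not at the whole shot.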

Verification‑revision loop. After execution, multimodal verification functions Ψ_k compare each generated visual or audio output x_{i,k} with the intended script segment s_i and the current memory H(t). Detected violations are encoded as typed signals (e.g., identity_mismatch, layout_violation, temporal_leak). The revision module Ω_k then performs localized corrective actions, such as adjusting a single character’s pose, re‑running the layout generator, or refining temporal boundaries, before committing the revised outputs back to H. This structured feedback differs fundamentally from prompt‑only retries: it operates on explicit control tokens and enables bounded, goal‑directed fixes.
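The control flow of the verify-revise loop can be sketched as follows. Verifiers stand in for Ψ_k and return typed `Violation`s; revisers stand in for Ω_k and patch only the flagged element. The shot-as-dictionary representation, the round budget `max_rounds`, and the toy outfit check are hypothetical, and committing results to H is omitted.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Violation:
    kind: str    # typed signal, e.g. "identity_mismatch", "layout_violation", "temporal_leak"
    target: str  # the element to repair, e.g. a character name


Verifier = Callable[[dict], List[Violation]]  # stand-in for a verification function Psi_k
Reviser = Callable[[dict, Violation], dict]   # stand-in for a revision function Omega_k


def run_checks(shot: dict, verifiers: List[Verifier]) -> List[Violation]:
    return [v for check in verifiers for v in check(shot)]


def verify_and_revise(shot: dict, verifiers: List[Verifier],
                      revisers: Dict[str, Reviser], max_rounds: int = 3) -> dict:
    """Apply one targeted fix per violation until the shot passes or the budget runs out."""
    for _ in range(max_rounds):
        violations = run_checks(shot, verifiers)
        if not violations:
            return shot
        for v in violations:
            shot = revisers[v.kind](shot, v)  # localized fix, not a full resample
    remaining = run_checks(shot, verifiers)
    if remaining:
        raise RuntimeError(f"unresolved after {max_rounds} rounds: {remaining}")
    return shot


# Toy example: a character's outfit must match its (hypothetical) identity anchor.
ANCHOR_OUTFIT = {"Mira": "yellow raincoat"}


def identity_check(shot: dict) -> List[Violation]:
    return [Violation("identity_mismatch", name)
            for name, outfit in shot["outfits"].items()
            if outfit != ANCHOR_OUTFIT[name]]


def fix_identity(shot: dict, v: Violation) -> dict:
    outfits = {**shot["outfits"], v.target: ANCHOR_OUTFIT[v.target]}
    return {**shot, "outfits": outfits}  # every other field of the shot is left untouched


shot = {"outfits": {"Mira": "green coat"}, "camera": "close-up"}
fixed = verify_and_revise(shot, [identity_check], {"identity_mismatch": fix_identity})
print(fixed)  # {'outfits': {'Mira': 'yellow raincoat'}, 'camera': 'close-up'}
```

Keeping the round budget explicit is one way to make the fixes "bounded": a shot either converges or is surfaced as unresolved instead of being retried indefinitely. In the toy, "revision" simply restores the anchored attribute; a real system would re-invoke a generator on the affected region.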

Post‑production (temporal continuity and quality checks). At shot boundaries, MUSE runs “first‑last frame”, “boundary”, and overall quality checks to ensure smooth visual and auditory transitions. If needed, transition effects or color grading are applied to maintain cinematic flow.
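One simple way to approximate a boundary check is to compare the last frame of each shot with the first frame of the next. This sketch uses mean absolute pixel difference on grayscale frames given as nested lists; the metric and the 0.25 threshold are assumptions rather than the paper's procedure, and audio boundaries are not covered.

```python
from typing import List, Sequence

Frame = Sequence[Sequence[float]]  # grayscale frame as rows of pixel values in [0, 1]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average per-pixel absolute difference between two equally sized frames."""
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("frames must have the same shape")
    total = sum(abs(pa - pb) for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))
    count = sum(len(row) for row in a)
    return total / count


def boundary_flags(shots: List[List[Frame]], threshold: float = 0.25) -> List[int]:
    """Indices i where the jump from shot i's last frame to shot i+1's first frame exceeds threshold."""
    return [i for i in range(len(shots) - 1)
            if mean_abs_diff(shots[i][-1], shots[i + 1][0]) > threshold]


dark = [[0.1, 0.1], [0.1, 0.1]]
bright = [[0.9, 0.9], [0.9, 0.9]]
shots = [[dark, dark], [dark, bright], [dark, dark]]
print(boundary_flags(shots))  # [1]
```

A flagged index is a candidate for a transition effect or color grading. A hard cut that the script actually asks for would need to be exempted, which this sketch does not do.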

Reference‑free evaluation (MUSEBench). Because long‑form stories lack ground‑truth references, the authors introduce MUSEBench, a protocol that scores generated stories on multiple dimensions—narrative coherence, cross‑modal identity consistency, and cinematic quality—using large pretrained multimodal models (e.g., CLIP for visual semantics, Whisper for audio). Human judgments are collected for a subset of stories, and the correlation between automatic scores and human ratings is reported to validate the reliability of the metric suite.
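Validating an automatic metric against human judgments usually comes down to a rank correlation. The summary does not name the statistic MUSEBench reports, so this sketch assumes Spearman's ρ (the Pearson correlation of tie-averaged ranks); the scores in the example are made-up numbers, not results from the paper.

```python
from typing import List, Sequence


def average_ranks(xs: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(xs)), key=lambda i: xs[i])
    ranks = [0.0] * len(xs)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and xs[order[j + 1]] == xs[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (vx * vy) ** 0.5


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho: Pearson correlation computed on tie-averaged ranks."""
    return pearson(average_ranks(x), average_ranks(y))


auto_scores = [0.62, 0.71, 0.55, 0.80]  # made-up metric outputs, one per story
human_scores = [6.0, 5.0, 7.5, 8.0]     # made-up mean human ratings for the same stories
print(spearman(auto_scores, human_scores))  # 0.2
```

In practice `scipy.stats.spearmanr` computes the same statistic (with tie-averaged ranks) and also returns a p-value.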

Experimental findings. MUSE is benchmarked against several strong baselines, including feed‑forward diffusion video models, StoryAgent, and MovieAgent. Across automatic metrics, MUSE improves narrative coherence by roughly 12%, identity consistency by 18%, and cinematic quality by 15% relative to the best baseline. Human evaluation mirrors these gains, with average preference scores rising by more than one point on a ten‑point scale, especially for sequences longer than 30 seconds, where baseline systems typically suffer severe identity drift.

Contributions.

- Reframing long‑form storytelling as a constraint‑enforcement problem and introducing explicit, machine‑executable control tokens to bridge the intent‑execution gap.

- Designing a closed‑loop, multi‑agent orchestration that separates planning, execution, verification, and revision, enabling targeted, bounded corrections.

- Providing a reference‑free evaluation framework (MUSEBench) that aligns well with human judgments, facilitating systematic comparison of open‑ended storytelling systems.

The work demonstrates that structured, feedback‑driven orchestration can substantially improve the fidelity of AI‑generated narratives while preserving the creative diversity of the underlying generative models. Future directions include extending the framework to interactive storytelling, richer physical simulation, and real‑time integration of user feedback.
