Zero2Text: Zero-Training Cross-Domain Inversion Attacks on Textual Embeddings

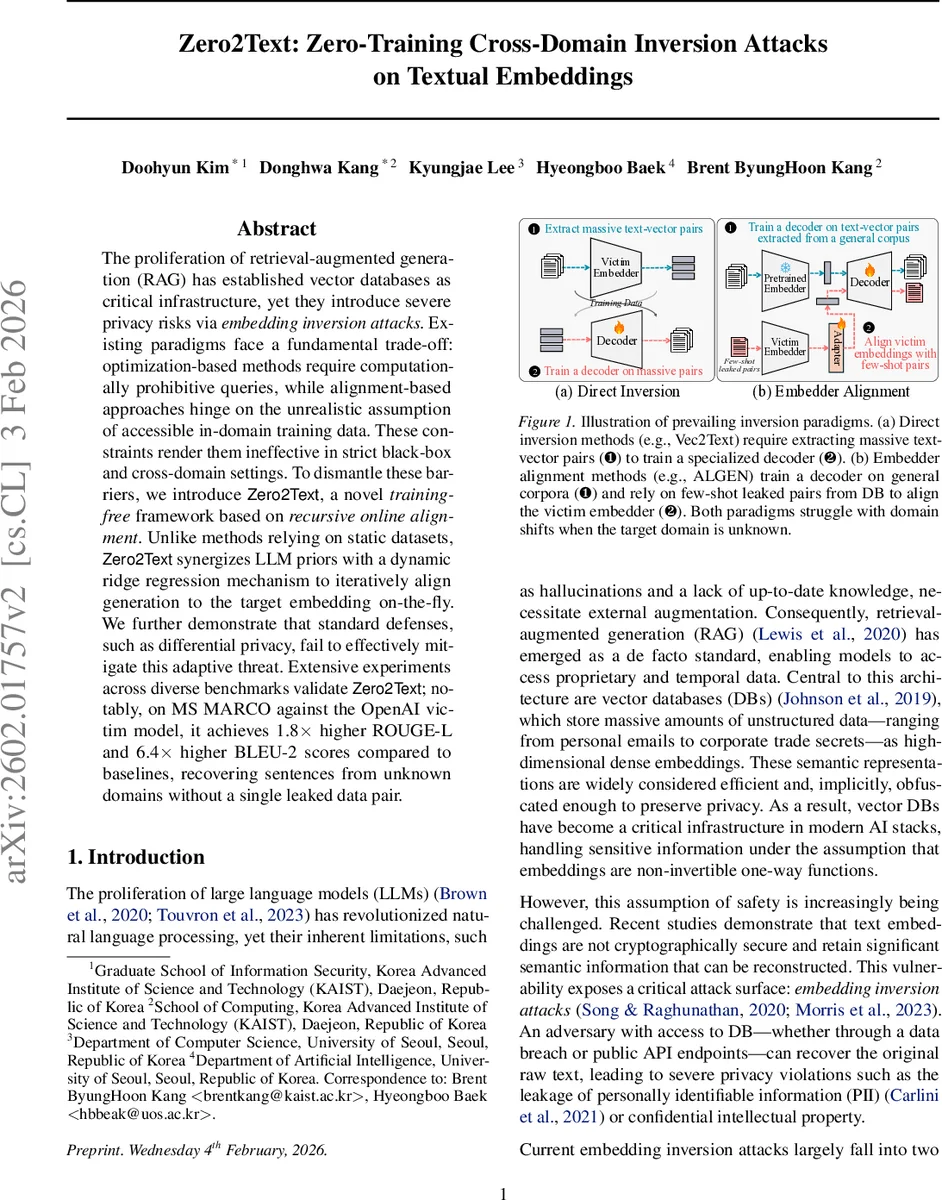

The proliferation of retrieval-augmented generation (RAG) has established vector databases as critical infrastructure, yet they introduce severe privacy risks via embedding inversion attacks. Existing paradigms face a fundamental trade-off: optimization-based methods require computationally prohibitive queries, while alignment-based approaches hinge on the unrealistic assumption of accessible in-domain training data. These constraints render them ineffective in strict black-box and cross-domain settings. To dismantle these barriers, we introduce Zero2Text, a novel training-free framework based on recursive online alignment. Unlike methods relying on static datasets, Zero2Text synergizes LLM priors with a dynamic ridge regression mechanism to iteratively align generation to the target embedding on-the-fly. We further demonstrate that standard defenses, such as differential privacy, fail to effectively mitigate this adaptive threat. Extensive experiments across diverse benchmarks validate Zero2Text; notably, on MS MARCO against the OpenAI victim model, it achieves 1.8x higher ROUGE-L and 6.4x higher BLEU-2 scores compared to baselines, recovering sentences from unknown domains without a single leaked data pair.

💡 Research Summary

Zero2Text addresses a critical privacy vulnerability in modern retrieval‑augmented generation (RAG) pipelines, where dense text embeddings stored in vector databases are assumed to be non‑invertible. Existing inversion attacks fall into two categories. Direct inversion methods (e.g., Vec2Text, GEIA) train a dedicated decoder on massive collections of embedding‑text pairs, often requiring millions of samples that are unrealistic to obtain in practice. Embedder‑alignment methods (e.g., ALGEN, TEIA) alleviate data requirements by pre‑training a decoder on a general corpus and then aligning the victim embedder with a small leaked set (typically 1–8 K pairs). Both approaches share a hidden assumption: the domain of the target embeddings matches the domain of the training or alignment data. In real‑world deployments, embeddings originate from heterogeneous, often highly specialized domains, causing severe performance degradation for these methods.

Zero2Text proposes a fundamentally different, training‑free paradigm that eliminates any reliance on auxiliary datasets. The core idea is to combine the linguistic priors of a large language model (LLM) with an online ridge‑regression based projection that is updated iteratively for each target embedding. The attack proceeds as follows:

-

LLM‑guided candidate generation – Starting from a BOS token, a pretrained LLM (e.g., Qwen‑3‑0.6B or Llama) predicts a probability distribution over the vocabulary. Instead of taking the top‑K tokens, the algorithm selects a diverse set of Kₛ tokens whose pairwise cosine similarity (computed on token embeddings) stays below a predefined threshold Tₕw. This diversity step prevents mode collapse. Beam search retains the best K_B sequences for the next iteration.

-

Online projection optimization – For each generated candidate sentence, the attacker obtains a local embedding eᵢ using a public embedding model. A subset of candidates (Kₐ·γₜ, where γ < 1 decays over iterations) is sent to the victim API to retrieve the true embedding \tilde{e}ᵢ. Using all accumulated pairs (Eₜ, \tilde{E}ₜ), the attacker solves a ridge‑regression problem

\

Comments & Academic Discussion

Loading comments...

Leave a Comment