Aggregation Queries over Unstructured Text: Benchmark and Agentic Method

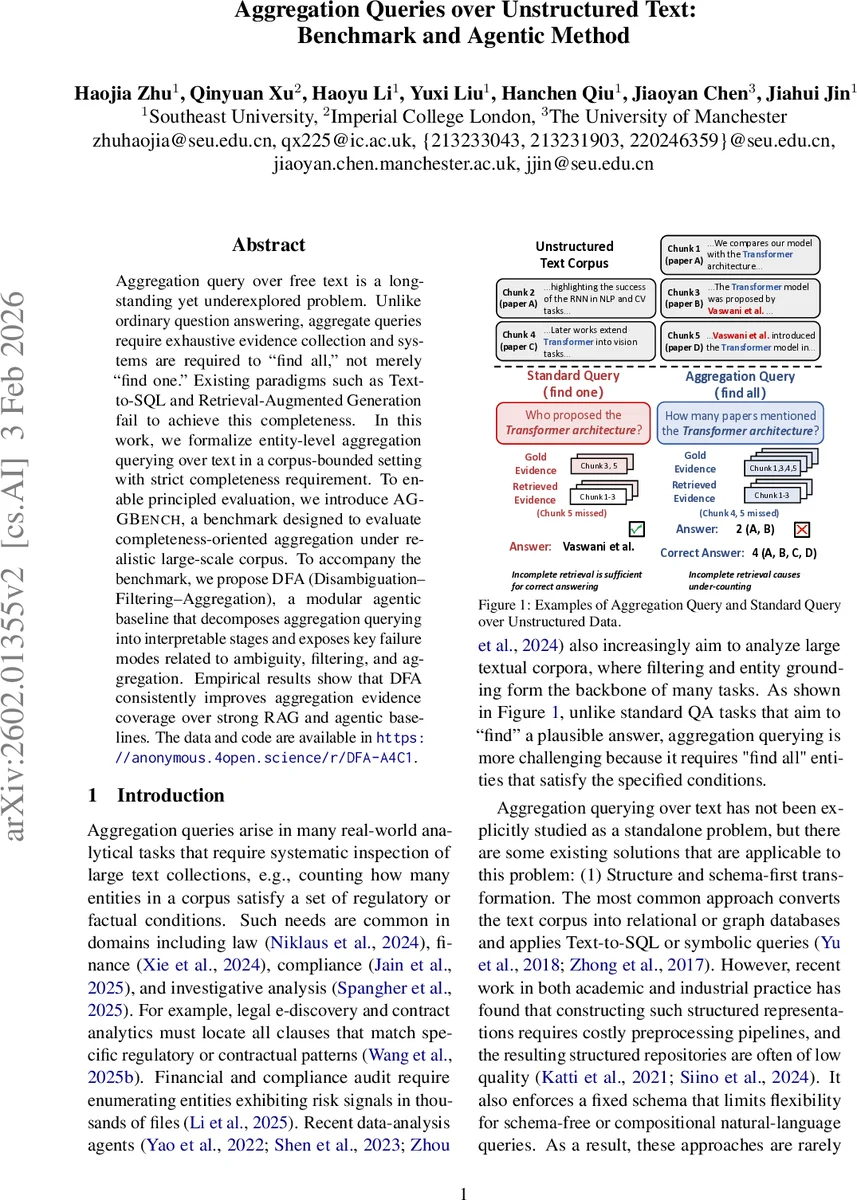

Aggregation query over free text is a long-standing yet underexplored problem. Unlike ordinary question answering, aggregate queries require exhaustive evidence collection and systems are required to “find all,” not merely “find one.” Existing paradigms such as Text-to-SQL and Retrieval-Augmented Generation fail to achieve this completeness. In this work, we formalize entity-level aggregation querying over text in a corpus-bounded setting with strict completeness requirement. To enable principled evaluation, we introduce AGGBench, a benchmark designed to evaluate completeness-oriented aggregation under realistic large-scale corpus. To accompany the benchmark, we propose DFA (Disambiguation–Filtering–Aggregation), a modular agentic baseline that decomposes aggregation querying into interpretable stages and exposes key failure modes related to ambiguity, filtering, and aggregation. Empirical results show that DFA consistently improves aggregation evidence coverage over strong RAG and agentic baselines. The data and code are available in \href{https://anonymous.4open.science/r/DFA-A4C1}.

💡 Research Summary

This paper tackles a largely overlooked problem in natural‑language processing: executing aggregation queries over unstructured text with a strict “find‑all” requirement. Unlike conventional question answering (QA) that seeks a single plausible answer, aggregation queries must retrieve the complete set of entities that satisfy a set of compositional conditions. The authors formalize this task at the entity level, defining a query q as a pair (T, Φ) where T is the target entity type and Φ = {ϕ₁,…,ϕₘ} is a collection of Boolean predicates expressed in natural language. An entity e satisfies q if it belongs to type T and every predicate ϕᵢ evaluates to true based on explicit textual evidence in the corpus C. The corpus is partitioned into chunks, and the exact answer is the union of per‑chunk results, after deduplication.

To evaluate methods under realistic conditions, the authors introduce AGGBench, a new benchmark designed specifically for completeness‑oriented aggregation. The benchmark construction follows a three‑stage pipeline: (1) a domain‑focused “core” subset of documents is curated; frequent entity types are automatically extracted and manually filtered, and for each type high‑frequency descriptive phrases are mined to create candidate conditions. Human curators then write natural‑language aggregation queries (e.g., “How many datasets are used for multi‑hop question answering?”). (2) Evidence‑grounded annotation is performed on the core subset using a two‑step process: an LLM first predicts whether each chunk contains evidence that a candidate entity satisfies the query, dramatically pruning the search space; human annotators then verify and correct the remaining predictions, ensuring that every entity‑condition pair is linked to concrete textual evidence. (3) The corpus is expanded with a large collection of unrelated documents to simulate a sparse‑evidence retrieval environment. The added documents are filtered via BM25 similarity to the core set to avoid introducing new satisfying entities, preserving the validity of the ground‑truth annotations while drastically lowering the overall evidence density (down to 0.85 %). AGGBench comprises 362 aggregation queries with fully grounded entity annotations.

Building on this benchmark, the paper proposes DFA (Disambiguation‑Filtering‑Aggregation), a modular agentic baseline that explicitly addresses the three core challenges of aggregation: ambiguity, incomplete retrieval, and final aggregation. The Disambiguation module parses the natural‑language query, resolves ambiguous terminology, and produces a canonical representation (entity type + structured conditions). The Filtering module performs iterative retrieval: it starts with a top‑k retrieval window, checks whether the retrieved evidence covers all currently known entities, and if not, expands the search window and repeats, while storing already discovered entities in a history memory to avoid duplication. This “completeness‑aware” loop is designed to overcome the fixed‑k limitation of standard RAG pipelines. Finally, the Aggregation module consolidates evidence across chunks, de‑duplicates entities, and applies the logical operators (AND/OR) to produce the final answer set. The design makes each failure mode (ambiguity, filtering error, aggregation error) observable and thus amenable to targeted improvement.

The authors evaluate DFA across several large language model backbones (e.g., GPT‑3.5, Llama‑2, Claude) and compare it against strong baselines: a vanilla Retrieval‑Augmented Generation system, a standard Rank‑Then‑Read pipeline, and other recent agentic frameworks such as ReAct and Self‑Ask. Two primary metrics are reported: Evidence Recall (the proportion of true answer entities for which at least one supporting passage is retrieved) and Answer Accuracy (whether the returned set exactly matches the ground truth). DFA consistently outperforms all baselines, achieving up to a 5× increase in evidence recall and improving answer accuracy by 15–30 % absolute points, especially in the expanded, evidence‑sparse setting. Error analysis reveals that remaining failures stem mainly from (1) semantic ambiguity in the conditions, (2) lexical variation causing missed matches during filtering, and (3) difficulties in deduplicating entities that appear across many chunks. Because DFA’s architecture isolates these stages, the authors argue that future work can target each component individually.

In addition to the empirical contributions, the paper provides a theoretical analysis (Appendix A.1) showing why existing approximations—such as fixed‑k retrieval or schema‑first pipelines that first convert text to a relational model—are inherently incomplete for aggregation tasks. The authors also discuss the trade‑off between annotation cost and realistic evaluation, justifying their hybrid LLM‑human annotation approach.

Overall, the work makes three substantive contributions: (1) a formal definition of entity‑level aggregation querying over text with a strict completeness requirement, (2) the AGGBench benchmark that enables systematic, large‑scale evaluation of completeness‑oriented methods, and (3) the DFA agentic framework that sets a strong baseline and offers a clear, modular roadmap for future research. By highlighting the “find‑all” problem and providing both a dataset and a solution, the paper opens a new research direction at the intersection of information retrieval, large language models, and automated data analysis, with immediate relevance to domains such as legal e‑discovery, financial compliance, and scientific literature mining.

Comments & Academic Discussion

Loading comments...

Leave a Comment