AIR-VLA: Vision-Language-Action Systems for Aerial Manipulation

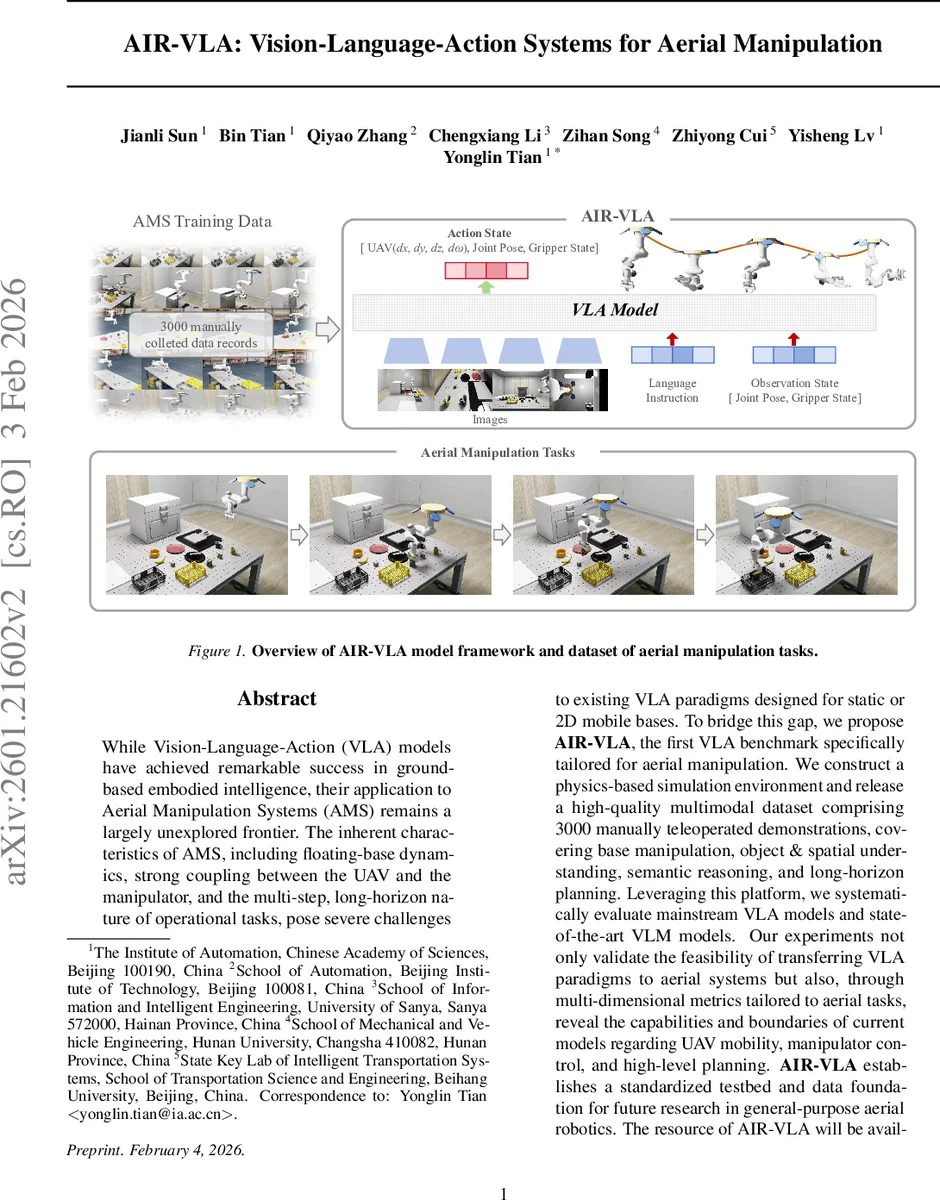

While Vision-Language-Action (VLA) models have achieved remarkable success in ground-based embodied intelligence, their application to Aerial Manipulation Systems (AMS) remains a largely unexplored frontier. The inherent characteristics of AMS, including floating-base dynamics, strong coupling between the UAV and the manipulator, and the multi-step, long-horizon nature of operational tasks, pose severe challenges to existing VLA paradigms designed for static or 2D mobile bases. To bridge this gap, we propose \textbf{AIR-VLA}, the first VLA benchmark specifically tailored for aerial manipulation. We construct a physics-based simulation environment and release a high-quality multimodal dataset comprising 3000 manually teleoperated demonstrations, covering base manipulation, object & spatial understanding, semantic reasoning, and long-horizon planning. Leveraging this platform, we systematically evaluate mainstream VLA models and state-of-the-art VLM models. Our experiments not only validate the feasibility of transferring VLA paradigms to aerial systems but also, through multi-dimensional metrics tailored to aerial tasks, reveal the capabilities and boundaries of current models regarding UAV mobility, manipulator control, and high-level planning. \textbf{AIR-VLA} establishes a standardized testbed and data foundation for future research in general-purpose aerial robotics. The resource of AIR-VLA will be available at https://github.com/SpencerSon2001/AIR-VLA.

💡 Research Summary

The paper introduces AIR‑VLA, the first Vision‑Language‑Action benchmark specifically designed for Aerial Manipulation Systems (AMS), which combine a multi‑rotor UAV with a 7‑DoF robotic arm. Recognizing that existing VLA research is confined to ground‑based platforms with planar navigation, the authors address three core challenges unique to aerial manipulation: (1) the floating‑base dynamics that couple UAV motion with arm movements, (2) the need for 3‑D spatial reasoning across a volumetric workspace, and (3) the long‑horizon, multi‑step nature of realistic aerial tasks.

To tackle these challenges, the authors build a high‑fidelity simulation environment on NVIDIA Isaac Sim, leveraging the PhysX 5 engine and Omniverse USD pipeline to model aerodynamic disturbances, contact dynamics, and realistic lighting. Within this environment they collect 3000 manually tele‑operated demonstrations using a gamepad interface. Each episode averages 475 timesteps (~10 seconds), far longer than typical tabletop or ground‑mobile benchmarks, thereby capturing temporal dependencies essential for long‑horizon planning.

The dataset is multimodal: it includes front‑down RGB‑D images for global perception, wrist‑mounted RGB‑D images for fine‑grained arm‑side observation, a third‑person camera, and full proprioceptive data (UAV 4‑D pose, velocity, angular velocity, arm joint angles, and gripper state). Natural‑language instructions vary in style, length, and ambiguity, covering four task suites:

- Base Manipulation – tests low‑level UAV‑Arm coordination, precise hovering, and contact handling.

- Object & Spatial – evaluates fine‑grained visual‑spatial grounding (color, shape, material, relative positions).

- Semantic Understanding – probes robustness to unstructured, implicit language.

- Long‑Horizon – requires multi‑step reasoning, alternating between wide‑range navigation and precise manipulation; failure of any sub‑step leads to overall task failure.

A two‑layer evaluation framework is proposed. For VLA models, closed‑loop simulations measure UAV positioning accuracy, manipulator efficacy, safety (collision and overspeed), and task progression. For VLM‑based high‑level planners, a multi‑dimensional metric set assesses sub‑task sequencing, 3‑D perception, object grounding, and atomic skill selection. This moves beyond simple success‑rate metrics to capture both low‑level control fidelity and high‑level logical consistency.

Benchmarking results on mainstream VLA models (RT‑1, OpenVLA, π‑0 series) reveal that while they can handle basic flight and grasping (≈70‑85 % success), performance collapses on complex spatial reasoning and long‑horizon tasks (≤30 % success). Notably, UAV positional errors exceeding 5 cm cause a steep drop in manipulation success, highlighting the sensitivity of aerial systems to precise control. VLM planners manage sub‑task ordering reasonably but struggle with accurate 3‑D object grounding and fine‑grained skill selection.

The authors conclude that current VLA architectures lack the integrated dynamics‑aware control and long‑term logical planning needed for AMS. They suggest three research directions: (i) pre‑training on large‑scale, high‑dimensional state‑action datasets; (ii) domain adaptation techniques to bridge simulation‑to‑real gaps; and (iii) incorporation of additional proprioceptive modalities such as force/torque sensing for tighter closed‑loop control.

In summary, AIR‑VLA provides a standardized testbed, a rich multimodal dataset, and a comprehensive evaluation suite that together open a new research frontier for general‑purpose aerial robotics. By exposing the limitations of existing VLA models in the aerial domain, the benchmark sets a clear agenda for future work aiming to achieve truly embodied intelligence that can perceive, understand language, and act robustly in three‑dimensional, dynamic environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment