DeepUrban: Interaction-Aware Trajectory Prediction and Planning for Automated Driving by Aerial Imagery

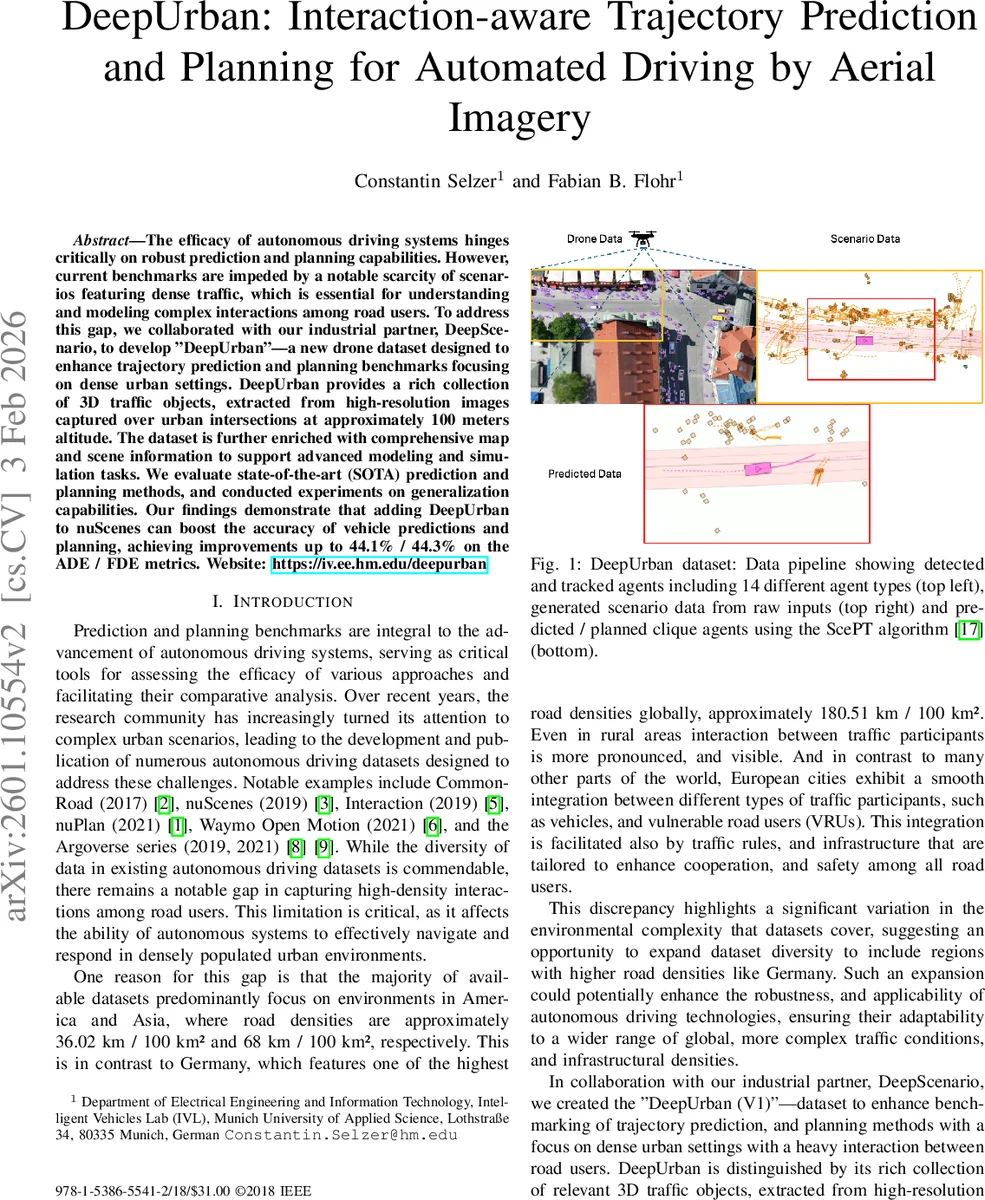

The efficacy of autonomous driving systems hinges critically on robust prediction and planning capabilities. However, current benchmarks are impeded by a notable scarcity of scenarios featuring dense traffic, which is essential for understanding and modeling complex interactions among road users. To address this gap, we collaborated with our industrial partner, DeepScenario, to develop DeepUrban, a new drone dataset designed to enhance trajectory prediction and planning benchmarks focusing on dense urban settings. DeepUrban provides a rich collection of 3D traffic objects, extracted from high-resolution images captured over urban intersections at approximately 100 meters altitude. The dataset is further enriched with comprehensive map and scene information to support advanced modeling and simulation tasks. We evaluate state-of-the-art (SOTA) prediction and planning methods and conduct experiments on generalization capabilities. Our findings demonstrate that adding DeepUrban to nuScenes can boost the accuracy of vehicle predictions and planning, achieving improvements of up to 44.1% / 44.3% on the ADE / FDE metrics. Website: https://iv.ee.hm.edu/deepurban

💡 Research Summary

The paper addresses a critical gap in autonomous‑driving research: the lack of high‑density traffic scenarios in publicly available prediction and planning benchmarks. Existing datasets such as nuScenes, Waymo Open Motion, or Argoverse are largely collected in the United States or Asia, where road user density is considerably lower than in many European cities. To fill this void, the authors collaborate with the industrial partner DeepScenario and introduce DeepUrban, a drone‑captured dataset focused on dense urban intersections in Germany and one site in the United States.

DeepUrban consists of high‑resolution aerial imagery recorded from approximately 100 m altitude, covering roughly 150 m × 150 m per scene. A proprietary computer‑vision pipeline reconstructs the static environment in 3D, detects and tracks all dynamic agents, and produces a full 3D annotation set. Four locations are included: three German sites (Munich Tal, Sindelfingen Breuninger, Stuttgart University) and one US site (San Francisco). The final release contains 5 604 filtered scenarios, each 20 seconds long, sampled at 12.5 Hz (resampled to 10 Hz for compatibility). Over 208 k trajectories are provided, spanning 14 agent types (cars, trucks, buses, bicycles, e‑scooters, pedestrians, etc.). Each scenario also ships with OpenDRIVE, Lanelet2, and VectorMap files, enabling seamless integration into simulation and map‑based planning pipelines.
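The resampling step from 12.5 Hz to 10 Hz can be illustrated with plain linear interpolation. This is only a minimal sketch: the summary does not say which interpolation scheme the dataset tooling uses, and the function name `resample_track` and its arguments are hypothetical.

```python
from bisect import bisect_right

def resample_track(times, xs, ys, target_hz=10.0):
    """Linearly interpolate a 2D track onto a uniform time grid.

    Assumption: linear interpolation stands in for whatever scheme
    the actual DeepUrban tooling applies.
    """
    if len(times) < 2:
        return list(times), list(xs), list(ys)
    step = 1.0 / target_hz
    # Number of target samples that fit inside the recorded time span.
    n = int((times[-1] - times[0]) / step + 1e-9) + 1
    out_t, out_x, out_y = [], [], []
    for k in range(n):
        t = times[0] + k * step
        # Index of the source segment [i, i + 1] that contains t.
        i = min(max(bisect_right(times, t) - 1, 0), len(times) - 2)
        w = (t - times[i]) / (times[i + 1] - times[i])
        out_t.append(t)
        out_x.append(xs[i] + w * (xs[i + 1] - xs[i]))
        out_y.append(ys[i] + w * (ys[i + 1] - ys[i]))
    return out_t, out_x, out_y

# Toy track: 12.5 Hz samples (0.08 s apart) of a point moving at 10 m/s along x.
src_t = [k * 0.08 for k in range(26)]  # 0.0 s to 2.0 s
src_x = [10.0 * t for t in src_t]
src_y = [0.0] * len(src_t)
t10, x10, y10 = resample_track(src_t, src_x, src_y)
```

For a 2-second toy track this yields 21 samples spaced 0.1 s apart; a 20-second scenario would yield 201 under the same convention.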

A key design choice is the “multi‑ego” formulation: any vehicle that moves at least 5 m within the 20‑second window is treated as an ego agent. Consequently, a single scene can generate dozens of ego‑centric prediction tasks, eliminating the occlusion problems inherent to ego‑vehicle‑mounted sensors and allowing researchers to evaluate interaction‑aware models under realistic, densely populated conditions. Scenarios are split 80/10/10 for training, validation, and testing, and are integrated into the TrajData dataloader, which already supports nuScenes, nuPlan, Waymo, etc., providing a unified API for cross‑dataset experiments.
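The multi-ego rule and the 80/10/10 split can be sketched as follows. Several details are assumptions rather than facts from the paper: "moves at least 5 m" is read here as travelled path length (net displacement would be another plausible reading), the set of vehicle type names is illustrative, and the seeded random split is a placeholder for whatever procedure the authors used.

```python
import math
import random

MIN_TRAVEL_M = 5.0  # threshold stated in the summary
VEHICLE_TYPES = {"car", "truck", "bus"}  # assumption: illustrative type names

def path_length(points):
    """Sum of straight-line distances between consecutive (x, y) samples."""
    return sum(math.dist(p, q) for p, q in zip(points, points[1:]))

def select_egos(tracks, agent_types, min_travel=MIN_TRAVEL_M):
    """Return ids of vehicles that travel at least min_travel metres."""
    return [
        agent_id
        for agent_id, points in tracks.items()
        if agent_types[agent_id] in VEHICLE_TYPES
        and path_length(points) >= min_travel
    ]

def split_scenarios(scenario_ids, seed=0):
    """Shuffle deterministically and split 80/10/10 (train/val/test)."""
    ids = sorted(scenario_ids)
    random.Random(seed).shuffle(ids)
    n_train = len(ids) * 8 // 10
    n_val = len(ids) // 10
    return ids[:n_train], ids[n_train:n_train + n_val], ids[n_train + n_val:]

# Toy scene: one moving car, one parked car, one walking pedestrian.
tracks = {
    "car_1": [(0.0, 0.0), (4.0, 0.0), (8.0, 0.0)],
    "car_2": [(20.0, 5.0), (20.0, 5.0), (20.1, 5.0)],
    "ped_1": [(0.0, 10.0), (3.0, 10.0), (6.0, 10.0)],
}
agent_types = {"car_1": "car", "car_2": "car", "ped_1": "pedestrian"}
egos = select_egos(tracks, agent_types)
train, val, test = split_scenarios(range(100))
```

In the toy scene only `car_1` qualifies: the parked car moves 0.1 m, and the pedestrian is not a vehicle. Each selected ego then defines its own ego-centric prediction task within the same scene.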

For benchmarking, the authors adopt ScePT (Scene‑consistent, Policy‑based Trajectory Predictions for Planning), a state‑of‑the‑art interaction‑aware prediction and planning framework. ScePT builds a spatio‑temporal scene graph where nodes are agents and edges are defined by a Euclidean distance threshold (computed with a constant‑velocity model). The graph is partitioned into cliques using the Louvain clustering algorithm, allowing a Conditional Variational Auto‑Encoder (CVAE) to generate multi‑modal predictions per clique. A Model Predictive Controller (MPC) then consumes these predictions to produce feasible ego trajectories.
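The edge rule of the scene graph can be illustrated with a small sketch. It is not ScePT's implementation: the 10 m threshold, the 3 s horizon, the 0.5 s step, and the function names are all hypothetical, and the subsequent partitioning into cliques is omitted.

```python
import math

def min_cv_distance(a, b, horizon_s=3.0, dt=0.5):
    """Minimum distance between two agents under constant-velocity rollout.

    Each agent state is (x, y, vx, vy). Horizon and step are assumed values.
    """
    steps = int(round(horizon_s / dt))
    best = math.inf
    for k in range(steps + 1):
        t = k * dt
        ax, ay = a[0] + a[2] * t, a[1] + a[3] * t
        bx, by = b[0] + b[2] * t, b[1] + b[3] * t
        best = min(best, math.hypot(ax - bx, ay - by))
    return best

def build_scene_graph(states, threshold_m=10.0):
    """Return undirected edges between agents predicted to come close."""
    ids = sorted(states)
    edges = set()
    for i, u in enumerate(ids):
        for v in ids[i + 1:]:
            if min_cv_distance(states[u], states[v]) <= threshold_m:
                edges.add((u, v))
    return edges

# Toy scene: "a" drives towards a stationary "b"; "c" is far away.
states = {
    "a": (0.0, 0.0, 10.0, 0.0),
    "b": (30.0, 0.0, 0.0, 0.0),
    "c": (0.0, 100.0, 0.0, 0.0),
}
edges = build_scene_graph(states)
```

Agents `a` and `b` start 30 m apart but are linked because the constant-velocity rollout brings them together within the horizon, which is the point of projecting motion forward rather than thresholding the current distance only.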

Experimental results demonstrate two important findings. First, training ScePT solely on DeepUrban already yields substantial gains over training on nuScenes alone, with reductions of more than 20 % in Average Displacement Error (ADE) and Final Displacement Error (FDE). Second, when the DeepUrban scenarios are added to the nuScenes training set, vehicle prediction performance improves dramatically: ADE and FDE decrease by 44.1 % and 44.3 % respectively, and the collision‑rate metric improves by 49.6 %. These improvements confirm that dense, interaction‑rich data substantially boost model generalization and safety in complex urban environments.
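For reference, ADE is the mean Euclidean distance between predicted and ground-truth positions over the prediction horizon, and FDE is that distance at the final timestep. The sketch below computes both for a single predicted trajectory, together with the relative reduction used to express improvements in percent. The function names and toy numbers are illustrative and are not taken from the paper's evaluation code or results.

```python
import math

def ade_fde(pred, gt):
    """Return (ADE, FDE) for one predicted trajectory of (x, y) points."""
    if not pred or len(pred) != len(gt):
        raise ValueError("pred and gt must be non-empty and equally long")
    errors = [math.dist(p, g) for p, g in zip(pred, gt)]
    return sum(errors) / len(errors), errors[-1]

def relative_reduction(baseline, improved):
    """Percentage by which an error metric drops relative to a baseline."""
    return 100.0 * (baseline - improved) / baseline

# Toy example: the prediction drifts sideways by 0 m, 1 m, then 2 m.
gt = [(1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
pred = [(1.0, 0.0), (2.0, 1.0), (3.0, 2.0)]
ade, fde = ade_fde(pred, gt)
drop = relative_reduction(2.0, 1.0)  # toy values, not results from the paper
```

For multi-modal predictors, benchmark variants often report the minimum over several sampled trajectories; the summary does not state which variant applies here.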

The authors acknowledge limitations: the current dataset covers only about 12 hours of recording, lacks adverse weather conditions, and relies on a proprietary labeling pipeline that is not fully open‑source. They propose future work to expand recording duration, diversify weather and lighting conditions, and release the annotation tools to the community.

In summary, DeepUrban provides a novel, drone‑derived 3D traffic dataset with rich map information and unprecedented density of vulnerable road user interactions. By integrating it into existing data loaders and demonstrating sizable performance gains for a leading interaction‑aware model, the paper makes a compelling case that high‑density urban data are essential for advancing reliable autonomous‑driving prediction and planning systems.
