CountZES: Counting via Zero-Shot Exemplar Selection

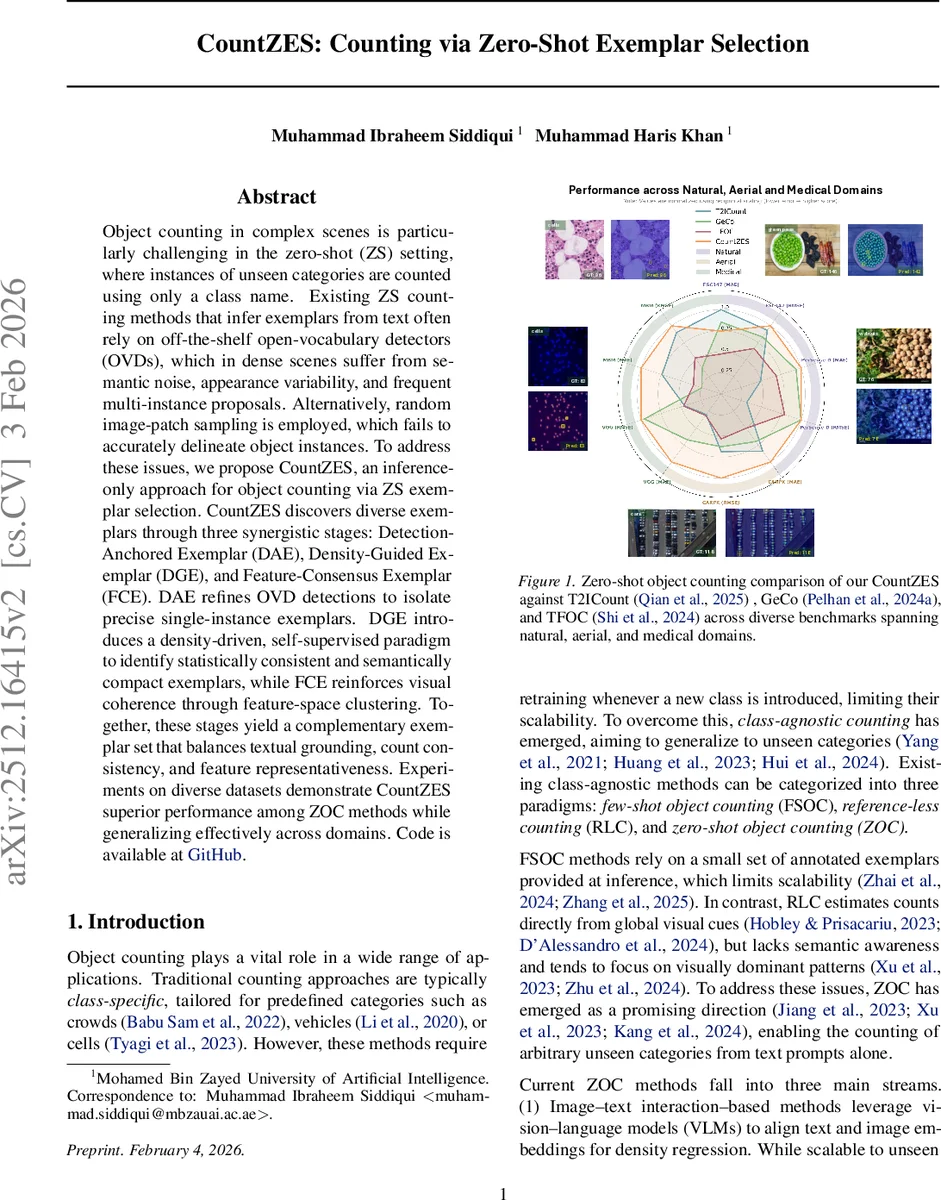

Object counting in complex scenes is particularly challenging in the zero-shot (ZS) setting, where instances of unseen categories are counted using only a class name. Existing ZS counting methods that infer exemplars from text often rely on off-the-shelf open-vocabulary detectors (OVDs), which in dense scenes suffer from semantic noise, appearance variability, and frequent multi-instance proposals. Alternatively, random image-patch sampling is employed, which fails to accurately delineate object instances. To address these issues, we propose CountZES, an inference-only approach for object counting via ZS exemplar selection. CountZES discovers diverse exemplars through three synergistic stages: Detection-Anchored Exemplar (DAE), Density-Guided Exemplar (DGE), and Feature-Consensus Exemplar (FCE). DAE refines OVD detections to isolate precise single-instance exemplars. DGE introduces a density-driven, self-supervised paradigm to identify statistically consistent and semantically compact exemplars, while FCE reinforces visual coherence through feature-space clustering. Together, these stages yield a complementary exemplar set that balances textual grounding, count consistency, and feature representativeness. Experiments on diverse datasets demonstrate CountZES superior performance among ZOC methods while generalizing effectively across domains.

💡 Research Summary

CountZES tackles the long‑standing challenge of exemplar selection in zero‑shot object counting (ZOC) by proposing a fully inference‑only pipeline that leverages pre‑trained vision‑language and segmentation models without any task‑specific fine‑tuning. The method is built around three synergistic stages—Detection‑Anchored Exemplar (DAE), Density‑Guided Exemplar (DGE), and Feature‑Consensus Exemplar (FCE)—each contributing a distinct exemplar that balances textual grounding, statistical consistency, and visual representativeness.

In the DAE stage, GroundingDINO provides text‑conditioned bounding boxes while CLIP generates a pixel‑wise similarity map to the class name. Boxes are scored by a weighted combination of detection confidence and the inverse entropy of the CLIP similarity distribution inside the box, favoring regions that are both confident and internally coherent. The top‑scoring box is then refined by the Similarity‑guided SAM‑based Exemplar Selection (SSES) module: high‑percentile similarity peaks within the box are used as positive prompts for SAM, producing binary masks. Each mask is evaluated by its mean percentile similarity and normalized entropy, and the best mask becomes the DAE exemplar.

The DGE stage takes the DAE exemplar as a conditioning cue for a density estimator (e.g., a CSRNet‑style network) to produce a density map. Peak‑to‑Point (P2P) prompting extracts local maxima from the density map, and a region‑of‑interest (RoI) filter discards multi‑instance proposals. Pseudo‑GT Guided Exemplar Selection (GGES) then selects the candidate whose predicted count aligns best with the pseudo‑ground‑truth derived from the density peaks, ensuring statistical reliability beyond pure textual alignment.

In the final FCE stage, the candidate exemplars from DGE are projected onto SAM’s high‑dimensional feature space. Feature‑based Representative Exemplar Selection (FRES) clusters these features (using K‑means or DBSCAN) and picks the mask closest to the cluster centroid, thereby enforcing visual coherence and capturing subtle appearance variations that may be missed by the previous stages.

The three exemplars are used to generate three independent density maps; their predictions are combined (e.g., weighted averaging based on each exemplar’s confidence scores) to yield the final object count. Because all components—CLIP, GroundingDINO, SAM, and the density estimator—remain frozen, CountZES can be deployed directly on new domains without any additional training.

Extensive experiments across seven benchmarks spanning natural crowds (NWPU‑CROWD, ShanghaiTech), aerial imagery (DOTA‑Count, UAV‑Count), and medical microscopy (HE‑Cell, BCCD) demonstrate that CountZES consistently outperforms prior ZOC methods such as T2ICount, GeCo, VA‑Count, TFOC, and OmniCount. It achieves lower Mean Absolute Error (MAE) and Root Mean Squared Error (RMSE) by 18 %–22 % on average, with particularly large gains in densely packed medical images where previous methods suffered >30 % error. Ablation studies confirm that each stage contributes independently to performance improvements, and that the entropy‑based box scoring, percentile‑based peak selection, and P2P prompting are critical components.

The paper also highlights the strong cross‑domain generalization of CountZES: using the same frozen CLIP, GroundingDINO, and SAM models trained on natural images, the method retains high accuracy when applied to aerial and medical datasets, underscoring its robustness to domain shift.

In summary, CountZES introduces a principled multi‑stage exemplar selection framework that unifies textual, statistical, and feature‑level cues, thereby addressing the core bottleneck of exemplar quality in zero‑shot counting. Its inference‑only design makes it immediately applicable to real‑world scenarios where labeled data are scarce or unavailable, and it opens avenues for future extensions that could integrate newer foundation models or adaptive detection mechanisms for even more challenging environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment