LogHD: Robust Compression of Hyperdimensional Classifiers via Logarithmic Class-Axis Reduction

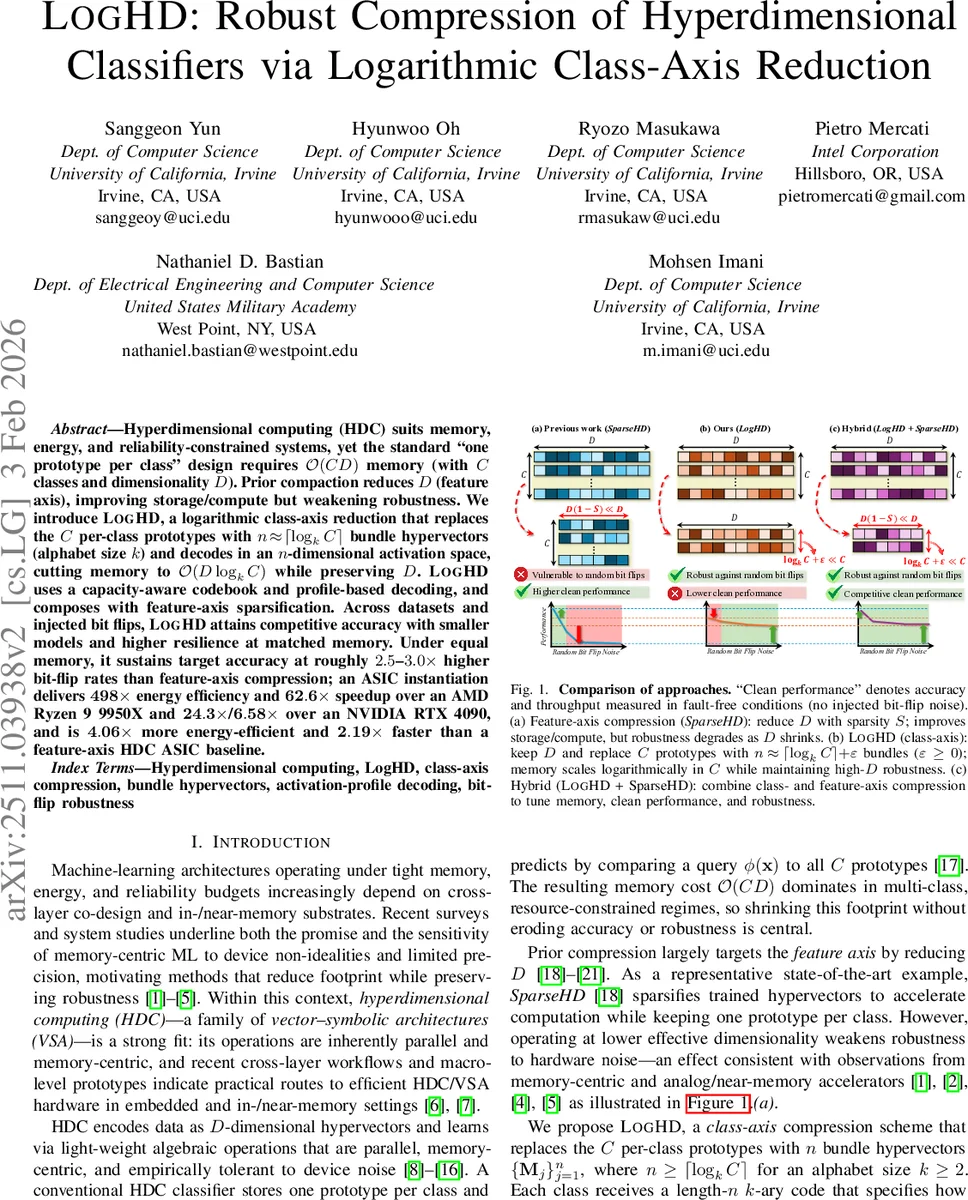

Hyperdimensional computing (HDC) suits memory, energy, and reliability-constrained systems, yet the standard “one prototype per class” design requires $O(CD)$ memory (with $C$ classes and dimensionality $D$). Prior compaction reduces $D$ (feature axis), improving storage/compute but weakening robustness. We introduce LogHD, a logarithmic class-axis reduction that replaces the $C$ per-class prototypes with $n!\approx!\lceil\log_k C\rceil$ bundle hypervectors (alphabet size $k$) and decodes in an $n$-dimensional activation space, cutting memory to $O(D\log_k C)$ while preserving $D$. LogHD uses a capacity-aware codebook and profile-based decoding, and composes with feature-axis sparsification. Across datasets and injected bit flips, LogHD attains competitive accuracy with smaller models and higher resilience at matched memory. Under equal memory, it sustains target accuracy at roughly $2.5$-$3.0\times$ higher bit-flip rates than feature-axis compression; an ASIC instantiation delivers $498\times$ energy efficiency and $62.6\times$ speedup over an AMD Ryzen 9 9950X and $24.3\times$/$6.58\times$ over an NVIDIA RTX 4090, and is $4.06\times$ more energy-efficient and $2.19\times$ faster than a feature-axis HDC ASIC baseline.

💡 Research Summary

Hyperdimensional computing (HDC) has attracted attention for ultra‑low‑power and memory‑centric machine learning, but its canonical “one prototype per class” architecture incurs a memory cost of O(C·D) (C classes, D‑dimensional hypervectors). Prior compression techniques mainly shrink the feature axis (reduce D) through sparsification, quantization, or structured binding, which lowers storage and compute but also erodes the high‑dimensional concentration‑of‑measure effect that gives HDC its robustness to hardware noise such as random bit flips.

The paper introduces LogHD, a novel class‑axis compression method that replaces the C per‑class prototypes with a small set of n bundle hypervectors, where n ≈ ⌈logₖ C⌉ for a user‑chosen alphabet size k ≥ 2. Each class is assigned a unique length‑n k‑ary code. The code symbols are mapped to non‑negative contribution weights g(s)=s^{k‑1}. A greedy minimax‑load algorithm builds a capacity‑aware codebook that balances the total weight assigned to each bundle, preventing any bundle from becoming overloaded. The n bundles are then formed by weighted superposition of the original class prototypes, followed by optional L2‑normalization to keep cosine similarity stable.

During training, the system computes an n‑dimensional activation vector for every training sample by measuring cosine similarity between the sample’s encoded hypervector and each bundle. The mean activation vector for each class (the activation profile P_c) is stored. At inference, a query’s activation vector is compared to all class profiles, and the nearest profile (in Euclidean distance) determines the predicted label. Because only n comparisons are needed, inference complexity drops from O(C) to O(log C). An optional refinement stage performs a few epochs of perceptron‑style updates that nudge each bundle toward the target code‑implied activation, further reducing inter‑class interference.

Key technical contributions include:

- Log‑scale class‑axis compression – memory reduces to O(D·logₖ C) while preserving the original dimensionality D, which retains the statistical robustness inherent to high‑dimensional spaces.

- Capacity‑aware codebook generation – the minimax‑load heuristic ensures balanced utilization of bundles, mitigating the risk of “over‑capacity” bundles that could dominate similarity scores.

- Profile‑based decoding – rather than a simple max‑over‑bundles decision, the method learns per‑class activation profiles, allowing the system to disambiguate overlapping contributions from multiple classes within each bundle.

- Hybrid design – LogHD can be combined with feature‑axis sparsification (e.g., SparseHD) to achieve additional memory savings while offering a tunable trade‑off between compactness and robustness.

Experimental evaluation spans several benchmark datasets (MNIST, CIFAR‑10, ISOLET, etc.). When memory budgets are equal, LogHD sustains target accuracy under roughly 2.5–3× higher random bit‑flip rates than the state‑of‑the‑art feature‑axis compressor SparseHD. In pure accuracy terms, LogHD matches or slightly exceeds SparseHD while using far fewer stored vectors.

Hardware implementation results are striking. An ASIC prototype of LogHD achieves:

- 498× higher energy efficiency and 62.6× faster inference compared with a high‑performance AMD Ryzen 9 9950X CPU.

- 24.3× energy and 6.58× speed improvements over an NVIDIA RTX 4090 GPU.

- When matched against a feature‑axis HDC ASIC baseline (SparseHD), LogHD is 4.06× more energy‑efficient and 2.19× faster.

The hybrid LogHD + SparseHD configuration further reduces memory while delivering intermediate robustness, confirming that class‑axis and feature‑axis compressions are complementary rather than mutually exclusive.

In summary, LogHD offers a fundamentally new avenue for scaling HDC models to large‑class problems on resource‑constrained platforms. By compressing along the class axis logarithmically and preserving the high‑dimensional feature space, it simultaneously achieves dramatic memory and compute reductions, superior energy‑performance trade‑offs, and markedly improved resilience to hardware faults. This makes LogHD especially attractive for edge devices, near‑memory accelerators, and safety‑critical embedded systems where both efficiency and reliability are paramount.

Comments & Academic Discussion

Loading comments...

Leave a Comment