DecoHD: Decomposed Hyperdimensional Classification under Extreme Memory Budgets

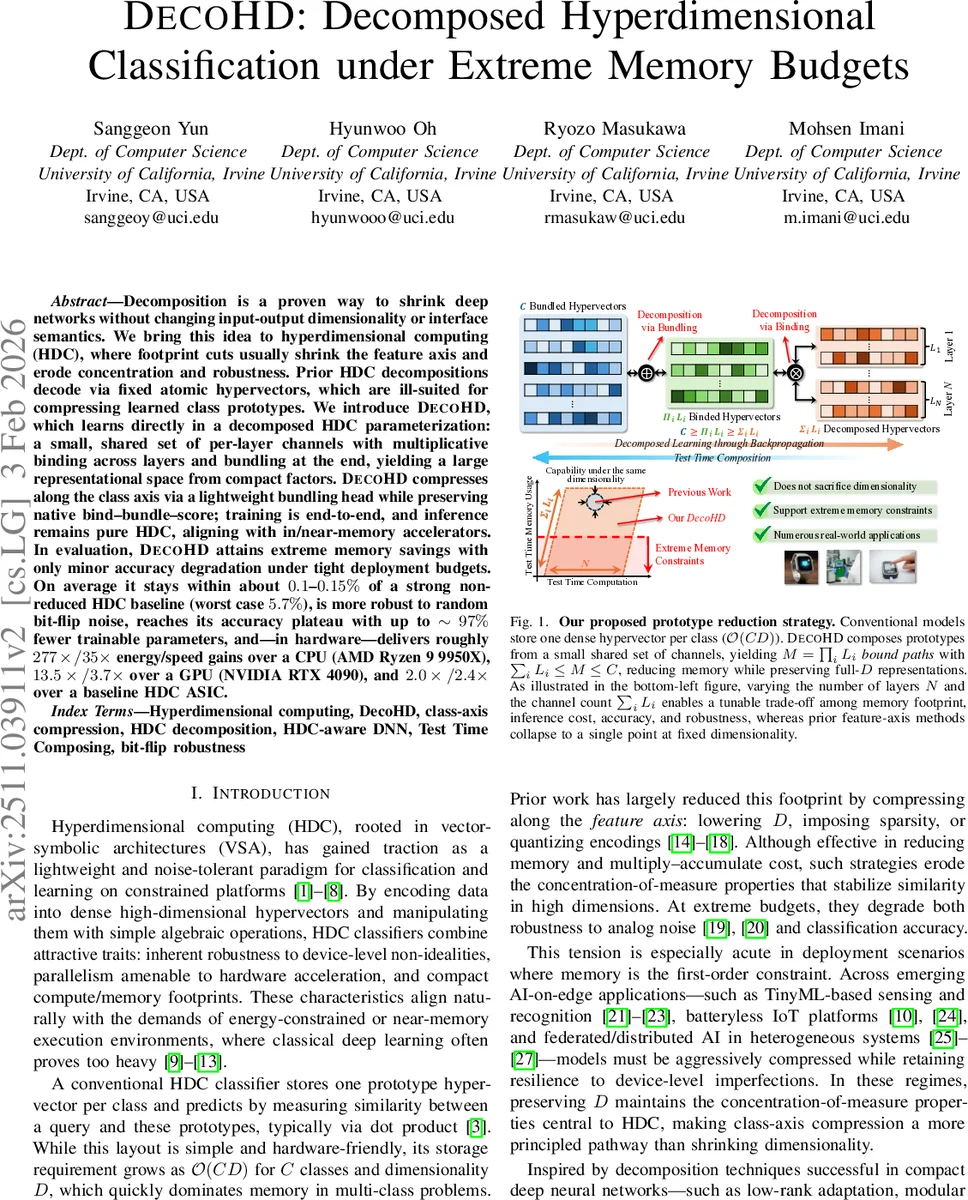

Decomposition is a proven way to shrink deep networks without changing input-output dimensionality or interface semantics. We bring this idea to hyperdimensional computing (HDC), where footprint cuts usually shrink the feature axis and erode concentration and robustness. Prior HDC decompositions decode via fixed atomic hypervectors, which are ill-suited for compressing learned class prototypes. We introduce DecoHD, which learns directly in a decomposed HDC parameterization: a small, shared set of per-layer channels with multiplicative binding across layers and bundling at the end, yielding a large representational space from compact factors. DecoHD compresses along the class axis via a lightweight bundling head while preserving native bind-bundle-score; training is end-to-end, and inference remains pure HDC, aligning with in/near-memory accelerators. In evaluation, DecoHD attains extreme memory savings with only minor accuracy degradation under tight deployment budgets. On average it stays within about 0.1-0.15% of a strong non-reduced HDC baseline (worst case 5.7%), is more robust to random bit-flip noise, reaches its accuracy plateau with up to ~97% fewer trainable parameters, and–in hardware–delivers roughly 277x/35x energy/speed gains over a CPU (AMD Ryzen 9 9950X), 13.5x/3.7x over a GPU (NVIDIA RTX 4090), and 2.0x/2.4x over a baseline HDC ASIC.

💡 Research Summary

DecoHD introduces a novel way to compress hyperdimensional computing (HDC) classifiers by factorizing the class‑axis rather than the feature‑axis. Traditional HDC stores a dense D‑dimensional prototype for each of the C classes, leading to O(C·D) memory consumption. When memory is the primary constraint—e.g., on battery‑less IoT nodes, TinyML devices, or near‑memory accelerators—this approach quickly becomes infeasible. Prior compression techniques shrink the hypervector dimensionality D, sparsify representations, or quantize encodings, but these methods erode the concentration‑of‑measure properties that give HDC its robustness and accuracy.

DecoHD’s key insight is to replace the C prototypes with a small, shared set of per‑layer “channels”. The model consists of N layers; layer i contains L_i learnable channels. Each channel is generated from a low‑dimensional latent vector a^{(i)}_ℓ∈ℝ^d (d≪D) via a frozen random projection R^{(i)}∈ℝ^{d×D}, yielding a full‑D hypervector A^{(i)}ℓ = a^{(i)}ℓ R^{(i)}. For a given input x, a fixed encoder ϕ maps it to a D‑dimensional hypervector h = ϕ(x). A “path” is formed by selecting one channel from each layer, and the path hypervector is obtained by successive element‑wise multiplication (binding) Z_m(h) = h ⊗ A^{(1)}{m1} ⊗ … ⊗ A^{(N)}{mN}. Because each layer offers L_i choices, the total number of distinct paths is M = Π_i L_i, which can be orders of magnitude larger than the number of channels.

Class‑specific prototypes are then constructed by a weighted sum (bundling) of all path hypervectors: Y_c(h) = Σ_{m=1}^M W_{c,m} Z_m(h), where W∈ℝ^{C×M} is a lightweight trainable matrix. The final logits are computed by the native HDC dot product s_c = ⟨Y_c(h), h⟩, and the model is trained end‑to‑end with cross‑entropy loss. Crucially, only the low‑dimensional latents {a^{(i)}_ℓ} and the bundling weights W are updated; the encoder and all random projectors remain fixed. This keeps the learnable parameter count tiny (up to 97 % fewer than a conventional HDC model) while preserving the holographic properties of the high‑dimensional space.

At inference time the channels are materialized once, and the M paths are streamed sequentially. Each path is bound on‑the‑fly, its contribution is immediately accumulated into class scores, and the intermediate hypervector is discarded. This streaming strategy keeps peak memory at O(D) (a single hypervector) and can even avoid storing the class bundles by updating scores directly: s_c ← s_c + W_{c,m} ⟨Z_m(h), h⟩. Thus DecoHD can operate under extreme memory budgets without sacrificing the pure HDC primitive set (binding, bundling, dot product), making it a drop‑in replacement for existing HDC accelerators.

Empirical evaluation on four public datasets (ISOLET, UCIHAR, PAMAP2, PAGE) demonstrates that DecoHD achieves accuracy within 0.1–0.15 % of a strong, non‑reduced HDC baseline when memory is limited to ≤ 0.7× of the original prototype table; the worst‑case degradation is 5.7 %. The method also exhibits superior robustness to random bit‑flip noise, outperforming baseline HDC by 2–3× under the same noise levels. Parameter reduction reaches up to 97 %, and the model reaches its accuracy plateau with far fewer trainable weights.

Hardware‑level benchmarks show dramatic efficiency gains: compared to an AMD Ryzen 9 9950X CPU, DecoHD delivers 277× lower energy consumption and 35× faster inference; versus an NVIDIA RTX 4090 GPU, it achieves 13.5× energy savings and 3.7× speedup; and relative to a conventional HDC ASIC, it offers 2.0× energy reduction and 2.4× speed improvement. Memory usage drops to 0.38× of the original prototype table. By adjusting N and the L_i values, designers can trade off memory footprint against compute cost, tailoring the model to the specific constraints of the target platform.

In summary, DecoHD pioneers “class‑axis compression” for hyperdimensional computing. It retains the full dimensionality D—preserving concentration‑of‑measure and noise tolerance—while dramatically shrinking the storage needed for class prototypes. The approach is fully compatible with existing HDC hardware, requires only native HDC operations, and is well‑suited for TinyML, battery‑less IoT, federated learning, and other edge‑AI scenarios where memory and power are at a premium. DecoHD thus offers a compelling new paradigm for memory‑efficient, robust, and hardware‑friendly AI at the edge.

Comments & Academic Discussion

Loading comments...

Leave a Comment