VioPTT: Violin Technique-Aware Transcription from Synthetic Data Augmentation

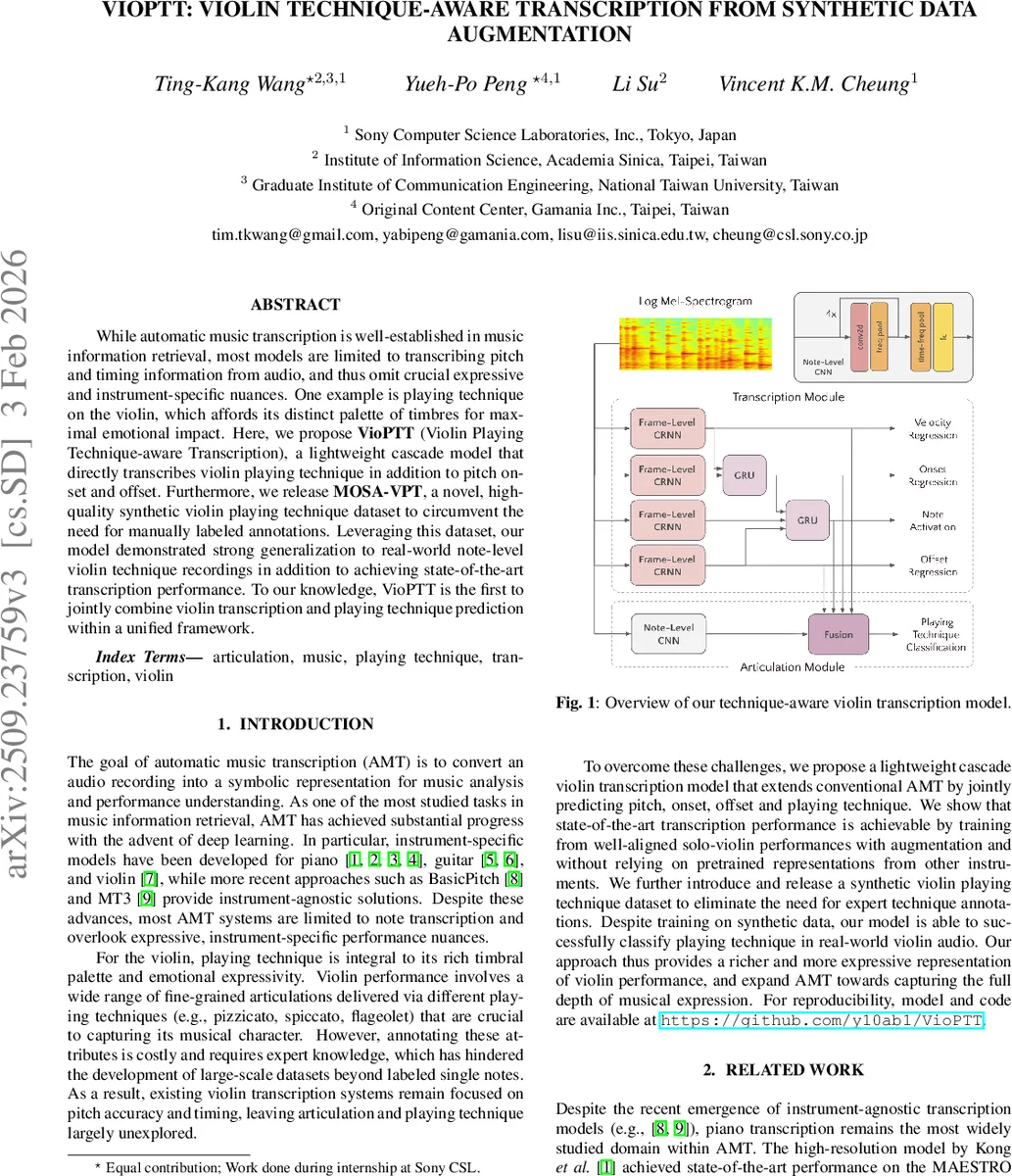

While automatic music transcription is well-established in music information retrieval, most models are limited to transcribing pitch and timing information from audio, and thus omit crucial expressive and instrument-specific nuances. One example is playing technique on the violin, which affords its distinct palette of timbres for maximal emotional impact. Here, we propose VioPTT (Violin Playing Technique-aware Transcription), a lightweight cascade model that directly transcribes violin playing technique in addition to pitch onset and offset. Furthermore, we release MOSA-VPT, a novel, high-quality synthetic violin playing technique dataset to circumvent the need for manually labeled annotations. Leveraging this dataset, our model demonstrated strong generalization to real-world note-level violin technique recordings in addition to achieving state-of-the-art transcription performance. To our knowledge, VioPTT is the first to jointly combine violin transcription and playing technique prediction within a unified framework.

💡 Research Summary

The paper introduces VioPTT (Violin Playing Technique‑aware Transcription), a novel cascade architecture that simultaneously performs note‑level transcription (pitch, onset, offset, velocity) and predicts the playing technique for each note. While most automatic music transcription (AMT) systems focus solely on pitch and timing, they ignore expressive nuances such as bowing or plucking styles that are essential to the violin’s timbral palette. To address the lack of large‑scale annotated data, the authors create MOSA‑VPT, a synthetic dataset of 76 hours of solo‑violin audio rendered from MIDI scores with four distinct techniques: détaché, flageolet, spiccato, and pizzicato. The synthesis pipeline uses DAW‑Dreamer and a high‑quality VST instrument (Synchron Solo Violin), automatically controlling key‑switches and continuous controllers to embed technique information, while disabling room effects to reduce domain shift.

The VioPTT model consists of two modules. The transcription module adopts the high‑resolution CRNN architecture originally proposed for piano (Kong et al.), consisting of four convolutional blocks followed by two bidirectional GRU layers, and predicts frame‑wise onset, offset, velocity (regression) and a binary pitch activation vector covering the 88‑note violin range. The articulation module receives two 128‑dimensional embeddings: one derived from the raw log‑mel spectrogram (four convolutional blocks with pooling and dropout) and another from the transcription features (projected onset, offset, frame, velocity). After concatenation, a fully‑connected layer outputs logits for five classes (four techniques plus “no‑technique”). Training minimizes a weighted sum of binary cross‑entropy (frame, onset, offset), mean‑squared error (velocity), and categorical cross‑entropy (technique).

Data augmentation is applied at two levels. First, pitch‑and‑timing augmentation perturbs audio by ±0.1 semitones, adds +5 dB gain, inserts random band‑pass filters, and applies moderate reverb. Second, the synthetic technique generation itself provides a form of augmentation by exposing the model to a wide variety of articulations.

Experiments evaluate (1) pitch and timing transcription on the URMP and Bach10 violin subsets, comparing models trained from scratch, fine‑tuned from piano‑pretrained checkpoints, and with/without augmentation; and (2) technique classification on the real‑world RWC instrument subset. Results show that augmentation improves transcription metrics, achieving F1‑onset scores of 93.1 % on URMP and matching or surpassing the state‑of‑the‑art MUSC system despite using roughly 30 % less real data. Piano pretraining yields only modest gains, suggesting limited transferability due to timbral differences.

For technique classification, the full VioPTT (using all transcription features) attains a macro accuracy of 77.22 % on RWC, outperforming the multi‑label baseline MERTech (53.36 %). Ablation studies reveal that removing offset information causes the largest drop (to 59.71 %), while excluding frame or onset features also degrades performance but to a lesser extent. Per‑class analysis shows that flageolet benefits from harmonic cues, détaché improves when binary frame information is omitted, and pizzicato relies heavily on the full feature set.

Training is efficient: the transcription module converges after 10 000 steps (batch size 5, 10‑second inputs) and the articulation module after 1 000 steps (batch size 128, 2‑second inputs) on a single RTX 4090 GPU, using a cosine‑annealing schedule with an initial learning rate of 5 × 10⁻⁴.

The authors’ contributions are threefold: (1) releasing MOSA‑VPT, a large, annotation‑free synthetic dataset that democratizes technique‑aware transcription research; (2) designing a lightweight cascade model that unifies note transcription and technique prediction without relying on external pretrained representations; and (3) providing a thorough analysis of how transcription cues affect technique classification. The work demonstrates that synthetic, perfectly aligned data can bridge the annotation gap and that expressive, technique‑aware transcription is feasible for real‑world violin recordings. Future extensions could apply the same pipeline to other string or wind instruments, paving the way toward comprehensive, expressive music transcription systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment