DSKC: Domain Style Modeling with Adaptive Knowledge Consolidation for Exemplar-free Lifelong Person Re-Identification

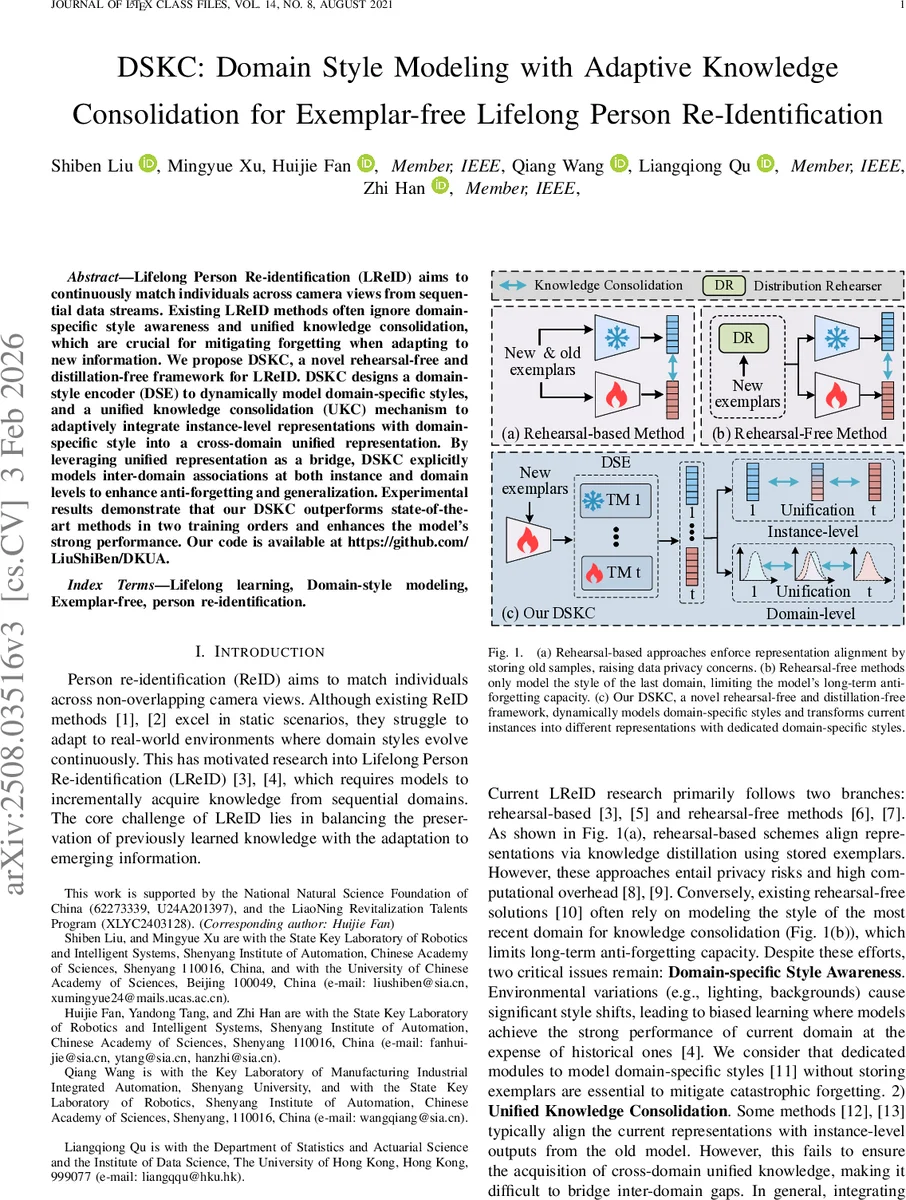

Lifelong Person Re-identification (LReID) aims to continuously match individuals across camera views from sequential data streams. Existing LReID methods often ignore domain-specific style awareness and unified knowledge consolidation, which are crucial for mitigating forgetting when adapting to new information. We propose DSKC, a novel rehearsal-free and distillation-free framework for LReID. DSKC designs a domain-style encoder (DSE) to dynamically model domain-specific styles, and a unified knowledge consolidation (UKC) mechanism to adaptively integrate instance-level representations with domain-specific style into a cross-domain unified representation. By leveraging unified representation as a bridge, DSKC explicitly models inter-domain associations at both instance and domain levels to enhance anti-forgetting and generalization. Experimental results demonstrate that our DSKC outperforms state-of-the-art methods in two training orders and enhances the model’s strong performance. Our code is available at https://github.com/LiuShiBen/DKUA.

💡 Research Summary

The paper introduces DSKC, a novel framework for Lifelong Person Re‑Identification (LReID) that operates without storing exemplars or using knowledge distillation. Existing LReID approaches fall into two categories: rehearsal‑based methods that keep past images and align representations via distillation, which raise privacy concerns and incur high computational overhead; and rehearsal‑free methods that typically preserve only the most recent domain’s style, limiting long‑term anti‑forgetting. DSKC addresses both shortcomings by jointly modeling domain‑specific styles and consolidating a unified cross‑domain representation.

The architecture consists of four main components. First, a Domain‑Style Encoder (DSE) transforms the backbone feature of each input image into a set of domain‑specific representations. This is achieved through a series of Transfer Modules (TMs), each comprising multi‑head self‑attention, a feed‑forward network, and a linear layer. For the current training domain t, a new TM f_t^M is learned while all previous TMs {f_i^M}_{i<t} are frozen, ensuring that past domain styles are retained without storing any data.

Second, Unified Knowledge Learning (UKL) integrates the domain‑specific representations {θ_i} into a single unified representation θ. Weighting coefficients ω are dynamically generated from the backbone feature via a mean‑linear‑softmax pipeline (ω = softmax(Linear(Mean(f_B(x))))). The unified representation is then a weighted sum θ = Σ_i ω_i θ_i. UKL is trained with the standard ReID losses – cross‑entropy and triplet loss – to preserve discriminative power. To prevent the unified vector from drifting due to domain bias, a Knowledge Alignment (KA) loss is added: the cosine similarity between the current domain’s θ_t and each previous θ_i is converted to a distance (1‑cos) and averaged, encouraging θ_t to stay close to the historical center.

Third, Unified Knowledge Association (UKA) uses the unified representation as a bridge to explicitly align heterogeneous domain‑specific vectors. For each previous domain, a softmax‑normalized similarity distribution A_{u,i} = softmax(Cos(θ_t,θ)/λ) (λ=0.1) is computed. The KL divergence between the current distribution A_{u,t} and each historical distribution A_{u,i} is minimized, pulling all domain‑specific vectors toward a common manifold and strengthening domain‑invariant learning.

Fourth, Domain‑Based Style Transfer (DST) further regularizes the global distribution of the current domain, preventing it from deviating excessively from the overall style space. DST recombines existing domain‑specific vectors or applies style‑transfer operations to map the current inputs into multiple style spaces, thereby reducing domain shift during incremental learning.

The authors evaluate DSKC on four large‑scale ReID benchmarks—Market‑1501, CUHK‑SYSU, DukeMTMC, and MSMT17—using two different training orders (forward and reverse). Performance is measured on both “Seen” domains (those encountered during training) and “Unseen” domains (VIPeR, GRID, etc.) to assess generalization. Across all datasets, DSKC outperforms state‑of‑the‑art rehearsal‑based and rehearsal‑free methods by 2–4 % mAP and 3–5 % Rank‑1, with especially notable gains on unseen domains, demonstrating superior anti‑forgetting and cross‑domain generalization. Because no exemplars are stored, memory consumption is an order of magnitude lower than rehearsal‑based baselines, and the computational cost is modest since only one new TM is added per domain.

In summary, DSKC contributes: (1) a dynamic domain‑style encoder that captures per‑domain visual styles without data storage; (2) an adaptive unified knowledge learning module that fuses these styles into a cross‑domain representation while aligning them via cosine‑based regularization; (3) a unified knowledge association mechanism that enforces consistency among domain‑specific vectors through KL‑based bridging; and (4) a style‑transfer component that stabilizes the global distribution. The code is publicly released, facilitating reproducibility. The work opens a new direction for lifelong visual recognition by showing that explicit style modeling combined with adaptive knowledge consolidation can achieve exemplar‑free continual learning with strong performance and efficiency. Future extensions may explore multimodal sensors, fully unsupervised settings, or online label‑free adaptation.

Comments & Academic Discussion

Loading comments...

Leave a Comment