An Overview of Low-Rank Structures in the Training and Adaptation of Large Models

The substantial computational demands of modern large-scale deep learning present significant challenges for efficient training and deployment. Recent research has revealed a widespread phenomenon wherein deep networks inherently learn low-rank structures in their weights and representations during training. This tutorial paper provides a comprehensive review of advances in identifying and exploiting these low-rank structures, bridging mathematical foundations with practical applications. We present two complementary theoretical perspectives on the emergence of low-rankness: viewing it through the optimization dynamics of gradient descent throughout training, and understanding it as a result of implicit regularization effects at convergence. Practically, these theoretical perspectives provide a foundation for understanding the success of techniques such as Low-Rank Adaptation (LoRA) in fine-tuning, inspire new parameter-efficient low-rank training strategies, and explain the effectiveness of masked training approaches like dropout and masked self-supervised learning.

💡 Research Summary

This tutorial paper surveys a rapidly emerging line of research that reveals a pervasive low‑rank phenomenon in modern deep neural networks. Across a wide variety of architectures—deep linear models, multilayer perceptrons, convolutional networks such as VGG, and vision transformers—the singular values of weight updates, gradients, and activations concentrate on a small subset of directions while the remaining spectrum stays near zero. The authors organize the discussion around two complementary theoretical lenses.

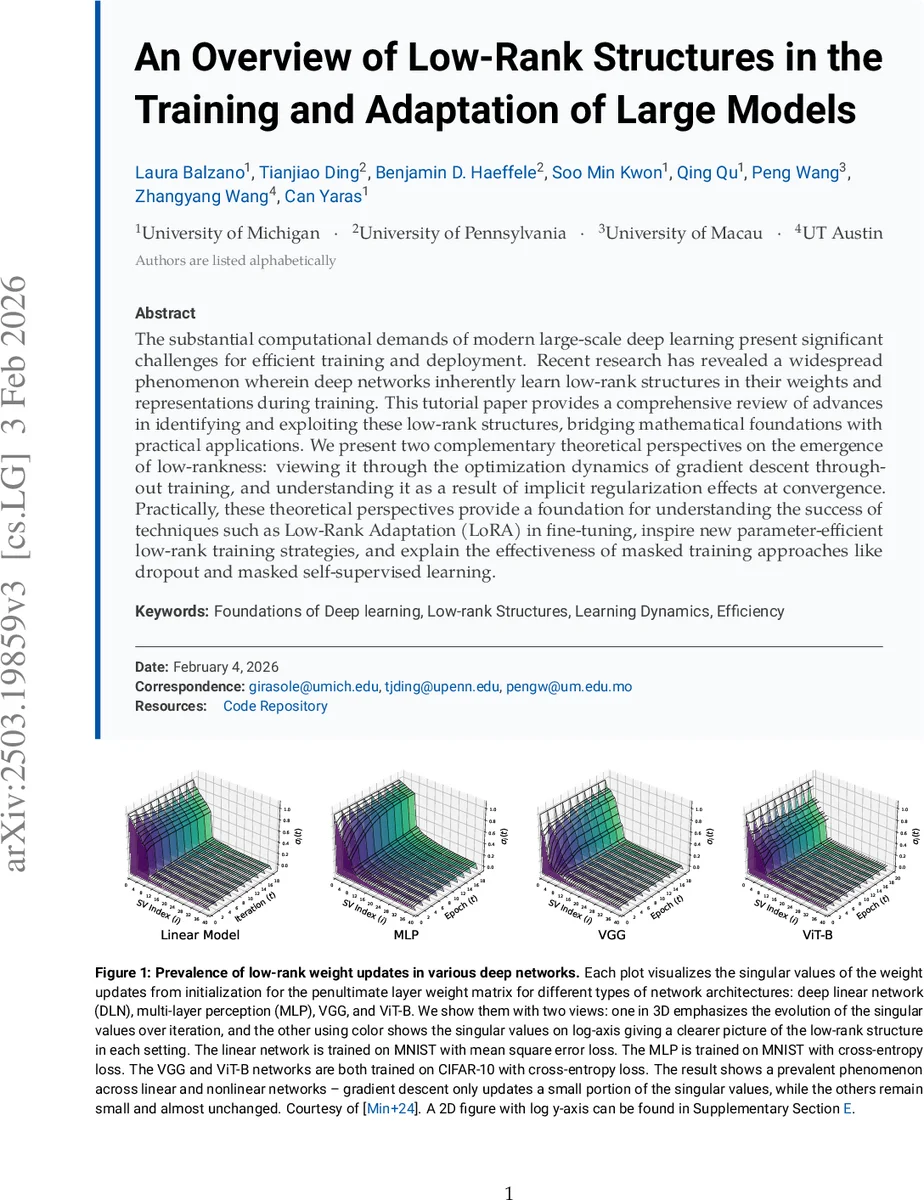

1. Low‑rank structure in the dynamics of gradient‑based optimization. By analyzing the continuous‑time limit of gradient descent on deep linear networks, they derive differential equations governing singular‑value evolution. The analysis shows that, under typical learning‑rate choices and random initialization, only a few singular values grow appreciably; the rest decay exponentially. Empirical plots (Figure 1) confirm that the same pattern appears in nonlinear networks trained on MNIST and CIFAR‑10. The paper further explains how learning‑rate scheduling and rank‑allocation decisions can deliberately shape the effective rank of the update at each iteration.

2. Low‑rank structure as an implicit regularizer at convergence. Even when the objective function contains no explicit low‑rank penalty (λ = 0), the optimization process implicitly biases the solution toward low‑rank matrices. Two mechanisms are highlighted: (a) L2 weight decay, which shrinks all singular values but leaves a few dominant ones, and (b) masked training (dropout, masked self‑supervised learning), which randomly disables neurons or patches during training. The authors prove that the resulting fixed point is equivalent to solving a variational problem with an implicit low‑rank regularizer, and they demonstrate that activations become increasingly low‑rank as dropout probability rises (Figure 3).

Building on these insights, the paper reviews practical techniques that explicitly exploit low‑rankness. The most prominent example is Low‑Rank Adaptation (LoRA), which augments a pretrained weight matrix W with a rank‑r update ΔW = BA (A∈ℝ^{d×r}, B∈ℝ^{r×d}). LoRA dramatically reduces the number of trainable parameters and memory traffic while preserving performance on large language models, vision‑language models, and generative vision models. The tutorial details how to choose the learning rate for the adapter (Section 3.2.1) and how to allocate rank across layers (Section 3.2.2), providing empirical guidelines (r ≈ 4–8 for most transformer layers).

Beyond adapters, the authors propose low‑rank gradient training: after computing the full gradient, they perform a cheap low‑rank approximation (e.g., truncated SVD or random projection) and back‑propagate only this compact representation. This reduces both forward‑ and backward‑pass memory footprints by up to 70 % and enables training of models that would otherwise exceed GPU capacity.

The paper also discusses downstream implications: low‑rank weight matrices can be post‑trained compressed, low‑rank representations facilitate implicit neural representations (INRs) and physics‑informed neural networks (PINNs), and low‑rank factorization can be directly incorporated into the model architecture during pretraining (as demonstrated by DeepSeek‑V3).

Finally, the authors acknowledge that most rigorous theory currently applies to deep linear networks. Extending the spectral dynamics and implicit regularization results to deep nonlinear networks with ReLU, residual connections, layer‑norm, and attention remains an open challenge. They outline several research directions: (i) characterizing the interaction between low‑rank bias and other implicit biases such as margin maximization or spectral bias; (ii) developing provable low‑rank guarantees for networks with non‑elementwise nonlinearities; (iii) designing hardware‑aware low‑rank kernels that exploit tensor‑core acceleration; and (iv) exploring adaptive rank schedules that evolve during training.

In summary, the tutorial bridges rigorous mathematical foundations with concrete algorithmic practices, showing that low‑rank structures are not a curiosity but a fundamental property of over‑parameterized deep learning. By understanding how these structures emerge—through optimization dynamics and implicit regularization—researchers can design more efficient training, fine‑tuning, and compression pipelines, ultimately reducing the computational and energy costs of today’s large‑scale AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment