Problem Solved? Information Extraction Design Space for Layout-Rich Documents using LLMs

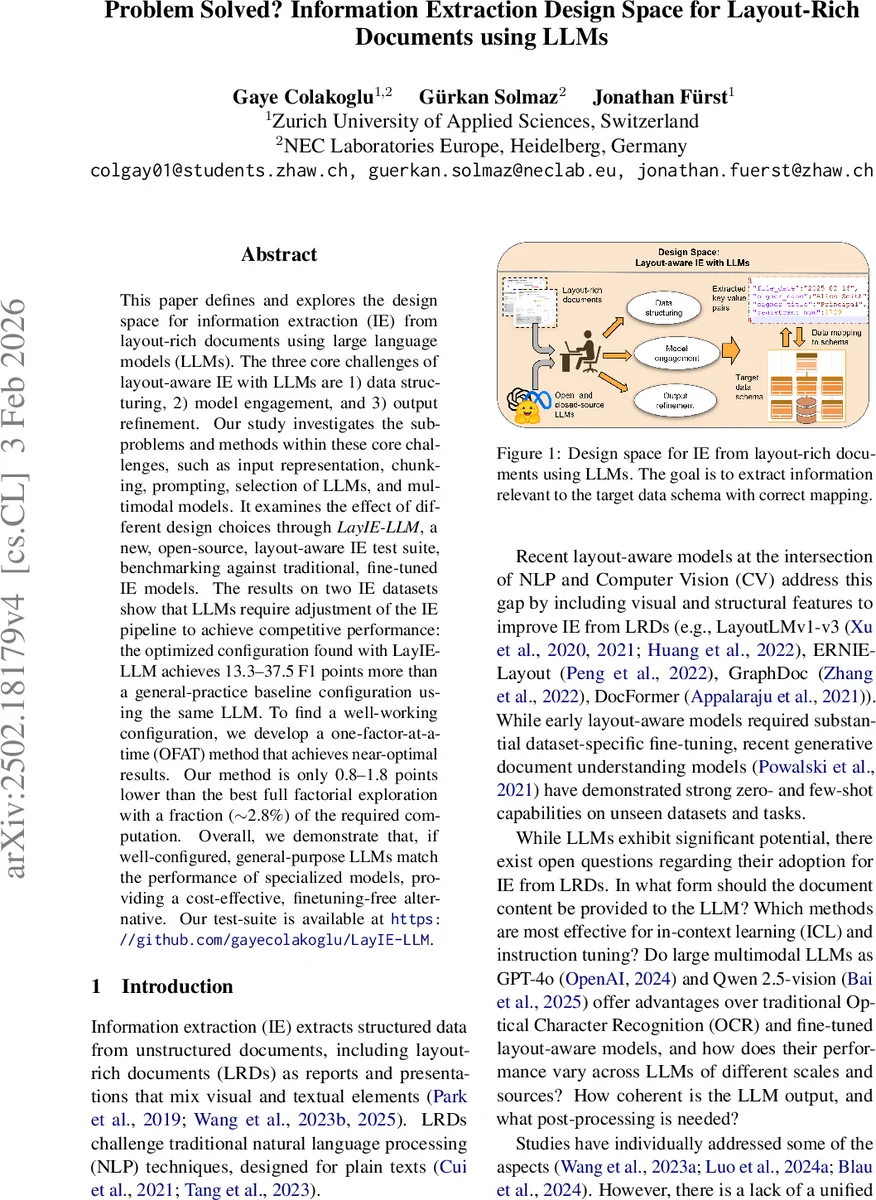

This paper defines and explores the design space for information extraction (IE) from layout-rich documents using large language models (LLMs). The three core challenges of layout-aware IE with LLMs are 1) data structuring, 2) model engagement, and 3) output refinement. Our study investigates the sub-problems and methods within these core challenges, such as input representation, chunking, prompting, selection of LLMs, and multimodal models. It examines the effect of different design choices through LayIE-LLM, a new, open-source, layout-aware IE test suite, benchmarking against traditional, fine-tuned IE models. The results on two IE datasets show that LLMs require adjustment of the IE pipeline to achieve competitive performance: the optimized configuration found with LayIE-LLM achieves 13.3–37.5 F1 points more than a general-practice baseline configuration using the same LLM. To find a well-working configuration, we develop a one-factor-at-a-time (OFAT) method that achieves near-optimal results. Our method is only 0.8–1.8 points lower than the best full factorial exploration with a fraction (2.8%) of the required computation. Overall, we demonstrate that, if well-configured, general-purpose LLMs match the performance of specialized models, providing a cost-effective, finetuning-free alternative. Our test-suite is available at https://github.com/gayecolakoglu/LayIE-LLM.

💡 Research Summary

This paper systematically defines and explores the design space for information extraction (IE) from layout‑rich documents (LRDs) using large language models (LLMs). The authors identify three core challenges—Data Structuring, Model Engagement, and Output Refinement—and investigate a comprehensive set of variables within each. To enable reproducible experimentation, they introduce LayIE‑LLM, an open‑source test suite that covers OCR extraction, Markdown conversion, chunking (1024, 2048, 4096 tokens), prompt engineering (few‑shot with 0, 1, 3, 5 examples, Chain‑of‑Thought), model selection (text‑only GPT‑3.5, GPT‑4o, LLaMA‑3‑70B; multimodal GPT‑4o, Qwen‑2.5‑vision), decoding, schema mapping, and data cleaning.

The experimental protocol evaluates each factor individually using a One‑Factor‑At‑Time (OFAT) approach and compares it against an exhaustive factorial search (432 configurations). OFAT achieves near‑optimal performance—only 0.8–1.8 F1 points below the best brute‑force result—while requiring merely 2.8 % of the computational budget. Two benchmark datasets are used: the visually rich VRDU corpus (with three template types and three learning scenarios) and FUNSD (question‑answer style key‑value pairs).

Key findings include: (1) Properly tuned LLM pipelines can match or exceed the performance of specialized fine‑tuned layout models such as LayoutLMv3 and ERNIE‑Layout. On VRDU, the optimized LLM configuration improves F1 by 13.3 points over a generic baseline; on FUNSD the gain is 37.5 points. (2) Multimodal LLMs (GPT‑4o, Qwen‑2.5‑vision) deliver the highest absolute scores but incur higher token usage, API costs, and reduced transparency. Text‑only LLMs, when combined with optimal chunking, prompting, and post‑processing, achieve comparable results at lower cost. (3) The three post‑processing stages—JSON decoding, schema mapping (handling key name variations), and regex‑based data cleaning (standardizing dates, names, etc.)—are crucial for bridging the gap between raw model output and the target schema, especially when multiple chunks generate overlapping predictions. (4) The OFAT methodology proves to be a practical, cost‑effective strategy for navigating the high‑dimensional design space without exhaustive search.

The authors contribute: (i) a formal definition of the IE design space for LRDs, (ii) the LayIE‑LLM benchmark suite with all code and results publicly released, (iii) empirical evidence that a lightweight factor‑wise search can locate near‑optimal configurations, and (iv) a demonstration that general‑purpose LLMs can serve as a finetuning‑free, cost‑effective alternative to domain‑specific models when the pipeline is carefully engineered.

In summary, the paper shows that successful IE from layout‑rich documents with LLMs hinges on thoughtful data structuring, targeted prompting, and rigorous output refinement. While multimodal LLMs offer a performance edge, text‑only models remain competitive when paired with the right pipeline. The work provides a valuable roadmap for researchers and practitioners aiming to deploy LLM‑based document understanding systems in real‑world settings.

Comments & Academic Discussion

Loading comments...

Leave a Comment