DiffVax: Optimization-Free Image Immunization Against Diffusion-Based Editing

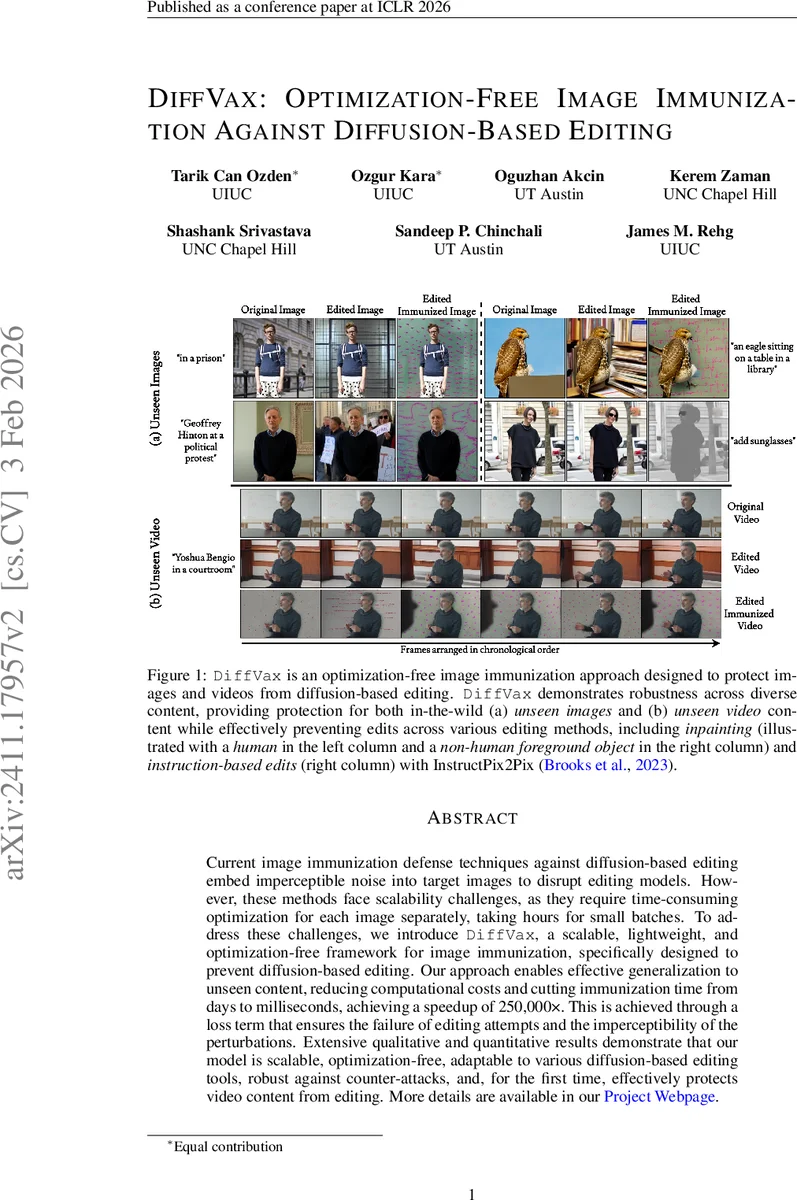

Current image immunization defense techniques against diffusion-based editing embed imperceptible noise into target images to disrupt editing models. However, these methods face scalability challenges, as they require time-consuming optimization for each image separately, taking hours for small batches. To address these challenges, we introduce DiffVax, a scalable, lightweight, and optimization-free framework for image immunization, specifically designed to prevent diffusion-based editing. Our approach enables effective generalization to unseen content, reducing computational costs and cutting immunization time from days to milliseconds, achieving a speedup of 250,000x. This is achieved through a loss term that ensures the failure of editing attempts and the imperceptibility of the perturbations. Extensive qualitative and quantitative results demonstrate that our model is scalable, optimization-free, adaptable to various diffusion-based editing tools, robust against counter-attacks, and, for the first time, effectively protects video content from editing. More details are available in https://diffvax.github.io/ .

💡 Research Summary

DiffVax introduces a fundamentally new approach to image (and video) immunization against diffusion‑based editing. Existing defenses such as PhotoGuard and DAYN rely on per‑image optimization, requiring minutes of GPU time and large memory footprints for each image, which makes them unsuitable for large‑scale deployment. DiffVax replaces this costly step with a learned “immunizer” network that can generate imperceptible perturbations in a single forward pass, reducing inference time to roughly 70 ms per image—a speed‑up of about 250,000×—and cutting memory usage to under 2 GB.

The core of the method is a two‑stage training pipeline. In stage 1, a UNet++‑style model f(·;θ) receives an input image I and a binary mask M (defining the region to protect) and outputs an immunization noise ε_im. The noise is masked (ε_im ⊙ M) and added to the original image, producing the immunized image I_im = clamp(I + ε_im ⊙ M). An L_noise term (L1 norm over the masked region) forces the perturbation to stay below a perceptual threshold, ensuring visual invisibility. In stage 2, the immunized image is fed to a pre‑trained diffusion editing model (primarily Stable Diffusion inpainting, but also InstructPix2Pix and text‑guided style transfer) together with a malicious prompt P and the same mask M. The resulting edited output I_im,edit is compared to the intended edit using a combination of CLIP‑based semantic loss and pixel‑level structural loss, forming L_edit. The total loss L = λ₁·L_noise + λ₂·L_edit drives the immunizer to produce noise that both looks benign and reliably sabotages any subsequent editing attempt.

Training is performed on a large, diverse dataset (a subset of LAION‑5B) with randomly sampled masks and prompts, allowing the immunizer to learn a generic mapping from any image to a protective perturbation. Once trained, the model can be deployed as a lightweight service: given any new image, it instantly outputs the required noise without any gradient computation.

Extensive experiments demonstrate that DiffVax achieves near‑perfect protection across multiple editing tools. Editing success rates drop below 5 % while preserving high visual fidelity (average PSNR ≈ 31 dB, SSIM ≈ 0.96). The method is robust to common counter‑measures such as JPEG compression (quality 70‑90) and Gaussian denoising (σ = 1‑5); the protective effect degrades only marginally under these transformations. Importantly, the approach generalizes across models: the same immunizer trained on Stable Diffusion inpainting successfully thwarts InstructPix2Pix and other text‑guided edits without retraining.

A major contribution is the extension to video. By applying the same mask and generated noise consistently across frames, DiffVax immunizes entire video sequences in under 0.1 s of preprocessing for a 30 fps clip. Temporal consistency is maintained, and any attempted diffusion‑based edit fails uniformly across frames, effectively neutralizing video manipulation—a capability not previously demonstrated due to the prohibitive cost of per‑frame optimization.

Overall, DiffVax satisfies the four essential criteria for a practical defense: (1) scalability via optimization‑free inference, (2) low memory and computational overhead, (3) robustness to standard post‑processing attacks, and (4) generalization to unseen content and editing models, including video. These properties make it a viable candidate for integration into large‑scale image hosting platforms, social‑media pipelines, and digital rights management systems, offering proactive protection against malicious diffusion‑based deepfakes and edits.

Comments & Academic Discussion

Loading comments...

Leave a Comment