Simulating Human Audiovisual Search Behavior

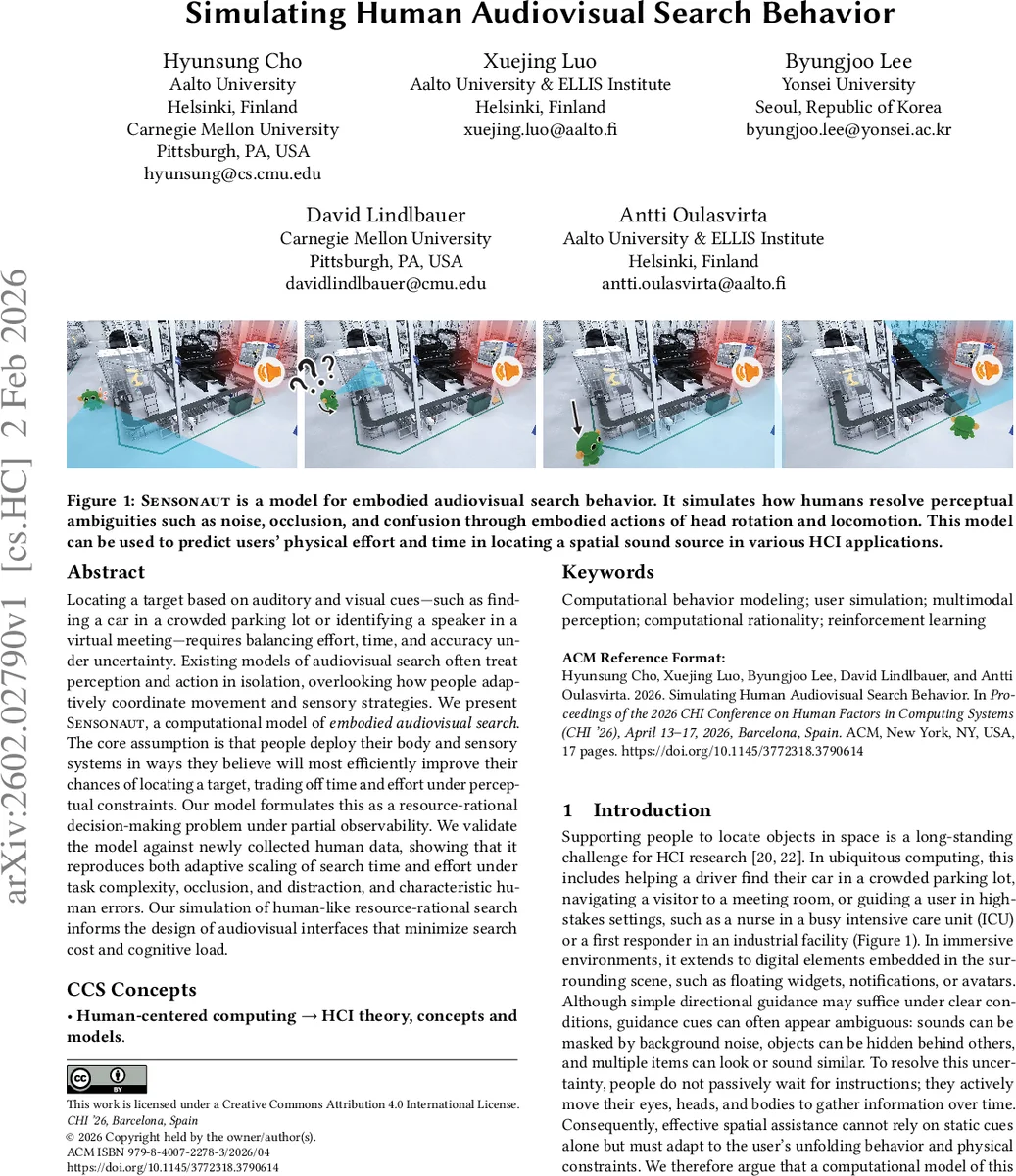

Locating a target based on auditory and visual cues$\unicode{x2013}$such as finding a car in a crowded parking lot or identifying a speaker in a virtual meeting$\unicode{x2013}$requires balancing effort, time, and accuracy under uncertainty. Existing models of audiovisual search often treat perception and action in isolation, overlooking how people adaptively coordinate movement and sensory strategies. We present Sensonaut, a computational model of embodied audiovisual search. The core assumption is that people deploy their body and sensory systems in ways they believe will most efficiently improve their chances of locating a target, trading off time and effort under perceptual constraints. Our model formulates this as a resource-rational decision-making problem under partial observability. We validate the model against newly collected human data, showing that it reproduces both adaptive scaling of search time and effort under task complexity, occlusion, and distraction, and characteristic human errors. Our simulation of human-like resource-rational search informs the design of audiovisual interfaces that minimize search cost and cognitive load.

💡 Research Summary

The paper introduces Sensonaut, a computational model that captures how humans search for spatial targets using both auditory and visual cues while accounting for embodied actions and resource constraints. The authors argue that existing models either focus on low‑level perception or abstract cognitive processes, neglecting the interplay between sensory integration and physical movement that characterizes real‑world search tasks such as finding a car in a crowded lot or locating a speaker in a virtual meeting.

Sensonaut formalizes audiovisual search as a Partially Observable Markov Decision Process (POMDP). The state is represented by a belief distribution over a polar grid (range × azimuth) indicating the probability that the target resides at each location. At each timestep the agent receives noisy auditory observations (interaural time differences and level differences) that are available even when the target is out of view, and visual observations that provide precise information only when the target is within the field of view. These observations are combined via Bayesian updating to produce a posterior belief.

Action selection is cast as a resource‑rational decision problem: the agent maximizes expected net utility, defined as the reward for correctly committing to a target location minus the costs associated with actions (head turns, forward steps, waiting, collisions). A discount factor γ controls the trade‑off between immediate and future costs. The cost model assigns small penalties to head rotations, larger penalties to locomotion, severe penalties to collisions, and a time cost to passive waiting, reflecting both physiological effort and risk.

The optimal policy π* is approximated using reinforcement learning (proximal policy optimization). Training occurs in a simulated environment that systematically varies task difficulty through numbers of occluding objects, distractor sounds, and initial target direction. The learned policy exhibits human‑like strategies: it preferentially uses low‑cost head turns to gather information, resorts to forward movement only when belief uncertainty remains high, and commits once confidence surpasses a cost‑adjusted threshold.

To validate the model, the authors conducted a controlled VR experiment with 48 participants. Participants searched for a sound‑emitting target under three manipulated factors: visual occlusion (presence of obstacles), auditory distraction (number of competing sound sources), and target initial azimuth (front, side, rear). Measured outcomes included search time, total head rotation, total forward distance, and error types (front‑back confusions, cross‑modal misattributions). Sensonaut accurately reproduced the main trends: search time increased with task complexity, head rotation dominated early exploration, forward steps were used sparingly, and error patterns matched human data.

The contributions are threefold: (1) a resource‑rational POMDP model that unifies multisensory cue integration with embodied action costs, (2) an RL‑based implementation that generates human‑like search trajectories and error profiles, and (3) a publicly released dataset of human audiovisual search behavior. The authors acknowledge limitations, notably the current 2‑D polar representation that omits vertical movement and the simplified cost functions that do not capture fatigue or nuanced risk aversion. Future work is suggested to extend the model to full 3‑D environments, personalize cost parameters, and integrate Sensonaut into adaptive interface designs that dynamically guide users based on predicted search effort. Overall, the study demonstrates that a resource‑rational, embodied approach can bridge perception and action, offering a powerful tool for designing more efficient audiovisual human‑computer interfaces.

Comments & Academic Discussion

Loading comments...

Leave a Comment