LEMON: Local Explanations via Modality-aware OptimizatioN

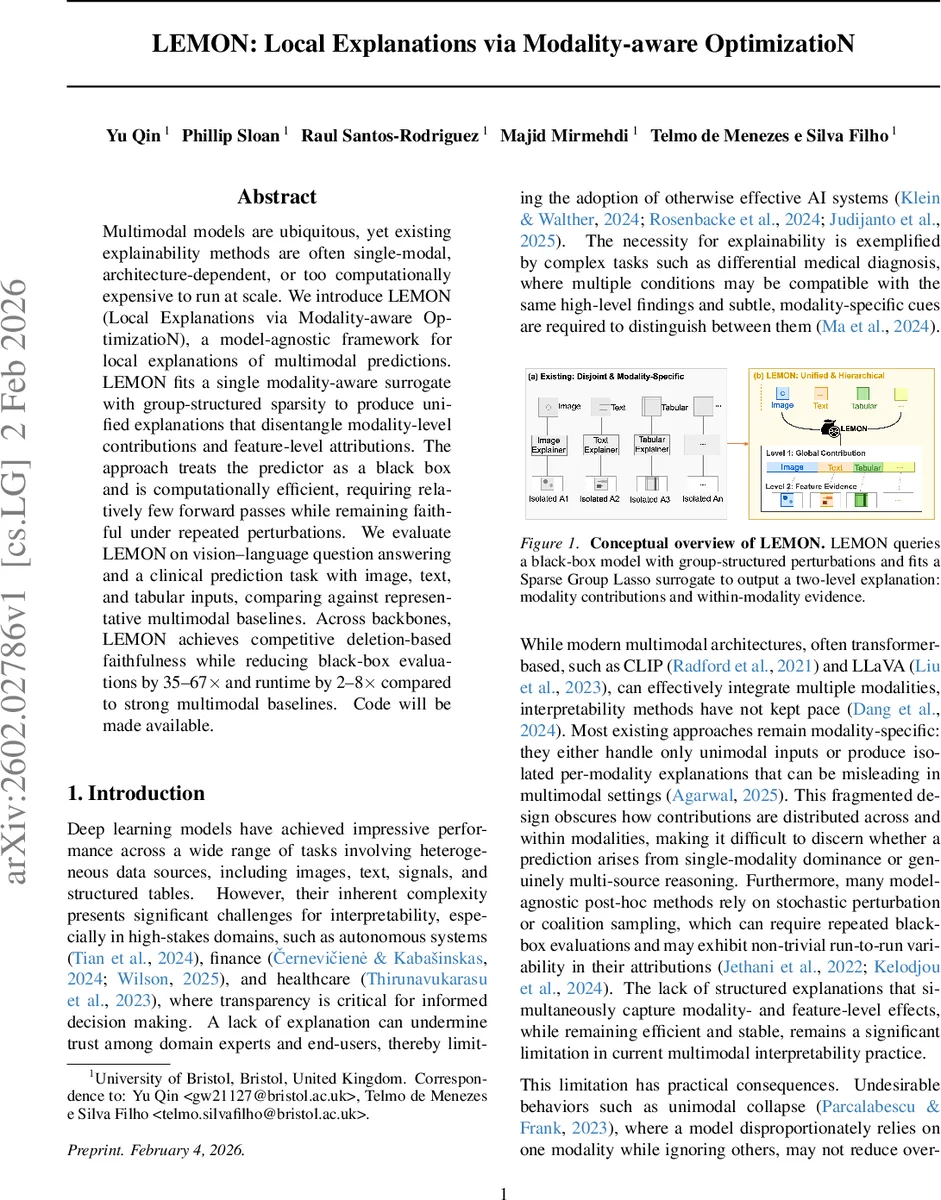

Multimodal models are ubiquitous, yet existing explainability methods are often unimodal, architecture-dependent, or too computationally expensive to run at scale. We introduce LEMON (Local Explanations via Modality-aware OptimizatioN), a model-agnostic framework for local explanations of multimodal predictions. LEMON fits a single modality-aware surrogate with group-structured sparsity to produce unified explanations that disentangle modality-level contributions and feature-level attributions. The approach treats the predictor as a black box and is computationally efficient: it requires relatively few forward passes while remaining faithful under repeated perturbations. We evaluate LEMON on vision-language question answering and on a clinical prediction task with image, text, and tabular inputs, comparing against representative multimodal baselines. Across backbones, LEMON achieves competitive deletion-based faithfulness while requiring 35-67 times fewer black-box evaluations and running 2-8 times faster than strong multimodal baselines.
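For reference, a LIME-style surrogate with group-structured sparsity is commonly written with the standard sparse group lasso penalty. The objective below is a sketch under stated assumptions and is not necessarily LEMON's exact formulation. The symbols are defined as follows:

- $z_i \in \{0,1\}^d$ is the $i$-th binary mask over the $d$ interpretable units.
- $f(z_i)$ is the black-box output on the correspondingly masked input.
- $\pi_x(z_i)$ is a locality weight.
- $\beta^{(m)}$ collects the coefficients of the $p_m$ units that belong to modality $m$ (out of $M$ modalities).
- $\lambda > 0$ sets the overall penalty strength.
- $\alpha \in [0,1]$ balances the two penalty terms.

```latex
\hat{\beta} = \arg\min_{\beta_0,\,\beta}\;
  \frac{1}{2}\sum_{i=1}^{n} \pi_x(z_i)\,\bigl(f(z_i) - \beta_0 - z_i^{\top}\beta\bigr)^2
  + \lambda\Bigl[(1-\alpha)\sum_{m=1}^{M}\sqrt{p_m}\,\bigl\lVert\beta^{(m)}\bigr\rVert_2
  + \alpha\,\lVert\beta\rVert_1\Bigr]
```

The two penalty terms play different roles:

- The group term can set an entire modality's coefficients to zero. This is what makes a modality-level summary such as $\lVert\beta^{(m)}\rVert_2$ meaningful.
- The $\ell_1$ term sparsifies individual units inside the modalities that survive, which yields feature-level attributions.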

💡 Research Summary

LEMON (Local Explanations via Modality‑aware OptimizatioN) is a model‑agnostic framework designed to generate local, hierarchical explanations for multimodal black‑box predictors. The authors identify three major shortcomings in existing XAI methods for multimodal systems: (1) most approaches are unimodal or architecture‑dependent, (2) they either lack a unified view of modality‑level contributions or require costly post‑hoc aggregation, and (3) perturbation‑based methods such as Shapley‑value estimators demand a large number of model queries, making them impractical at scale.

To address these issues, LEMON extends the classic LIME paradigm by fitting a single surrogate model that incorporates group‑structured sparsity via a Sparse Group Lasso (SGL) regularizer. The pipeline consists of four steps. First, an interpretation interface partitions each raw modality into interpretable units (e.g., super‑pixels for images, tokens or phrases for text, columns or time windows for tabular/temporal data). These units are indexed globally and grouped by modality, providing the structure required for the SGL penalty. Second, a local neighbourhood is constructed by repeatedly sampling binary masks, applying modality‑specific masking (replacing masked units with a predefined baseline such as a mean image or a
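The neighbourhood construction and surrogate fit described above can be sketched in a few lines of Python. This is a minimal, hypothetical sketch, not the authors' implementation. The following choices are all illustrative assumptions:

- the function names `build_groups`, `apply_mask`, `fit_sgl` and `explain`;
- the exponential locality kernel on the fraction of removed units;
- the proximal-gradient solver;
- reporting each modality's coefficient norm as its contribution.

Each input is represented as one value per interpretable unit, so modality-specific masking reduces to swapping a unit for its baseline value.

```python
import math
import random

# Hypothetical sketch of a LEMON-style pipeline; names and defaults are assumptions.


def build_groups(units_per_modality):
    """Index interpretable units globally and record each modality's index group."""
    groups, names, start = {}, [], 0
    for modality, units in units_per_modality.items():
        groups[modality] = list(range(start, start + len(units)))
        names.extend(f"{modality}:{u}" for u in units)
        start += len(units)
    return groups, names


def apply_mask(values, baseline, mask):
    """Replace masked-out units (mask entry 0) with their baseline value."""
    return [v if m else b for v, b, m in zip(values, baseline, mask)]


def soft_threshold(v, t):
    return math.copysign(max(abs(v) - t, 0.0), v)


def fit_sgl(Z, y, w, groups, lam=0.01, alpha=0.5, iters=1000):
    """Weighted least squares plus a sparse group lasso penalty, via proximal gradient."""
    n, p = len(Z), len(Z[0])
    W = sum(w)
    # Step 1/L, with L = trace of the (PSD) Hessian, an upper bound on its top eigenvalue.
    L = 1.0 + sum(w[i] * Z[i][j] ** 2 for i in range(n) for j in range(p)) / W
    step = 1.0 / L
    beta, b0 = [0.0] * p, 0.0
    for _ in range(iters):
        r = [y[i] - b0 - sum(Z[i][j] * beta[j] for j in range(p)) for i in range(n)]
        grad = [-sum(w[i] * r[i] * Z[i][j] for i in range(n)) / W for j in range(p)]
        b0 += step * sum(w[i] * r[i] for i in range(n)) / W  # intercept is unpenalized
        # SGL proximal step: elementwise soft-threshold, then group-wise shrinkage.
        v = [soft_threshold(beta[j] - step * grad[j], step * lam * alpha) for j in range(p)]
        for idx in groups.values():
            norm = math.sqrt(sum(v[j] ** 2 for j in idx))
            t = step * lam * (1 - alpha) * math.sqrt(len(idx))
            scale = max(1.0 - t / norm, 0.0) if norm > 0 else 0.0
            for j in idx:
                v[j] *= scale
        beta = v
    return b0, beta


def explain(black_box, values, baseline, units_per_modality,
            n_samples=200, keep_prob=0.5, kernel_width=0.5, seed=0, **sgl_kwargs):
    groups, names = build_groups(units_per_modality)
    p = len(names)
    rng = random.Random(seed)
    # Binary masks: the unperturbed instance plus random keep/drop patterns.
    masks = [[1] * p] + [[int(rng.random() < keep_prob) for _ in range(p)]
                         for _ in range(n_samples - 1)]
    y = [black_box(apply_mask(values, baseline, m)) for m in masks]
    # Exponential locality kernel on the fraction of removed units (an assumption).
    w = [math.exp(-((1 - sum(m) / p) ** 2) / kernel_width ** 2) for m in masks]
    _, beta = fit_sgl(masks, y, w, groups, **sgl_kwargs)
    features = dict(zip(names, beta))
    modalities = {mod: math.sqrt(sum(beta[j] ** 2 for j in idx))
                  for mod, idx in groups.items()}
    return modalities, features


# Usage on a toy black box that uses two superpixels and one column but ignores text.
units = {"image": ["sp0", "sp1", "sp2"], "text": ["tok0", "tok1"], "tabular": ["age", "bp"]}


def toy_model(x):
    return 2.0 * x[0] + 1.5 * x[1] + 0.5 * x[6]


modalities, features = explain(toy_model, [1.0] * 7, [0.0] * 7, units)
print(modalities)
```

The proximal operator of the sparse group lasso penalty factorises into an elementwise soft-threshold followed by group-wise shrinkage, so each solver step is cheap. For a real model, the cost is dominated by the `n_samples` black-box queries.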
