From Task Solving to Robust Real-World Adaptation in LLM Agents

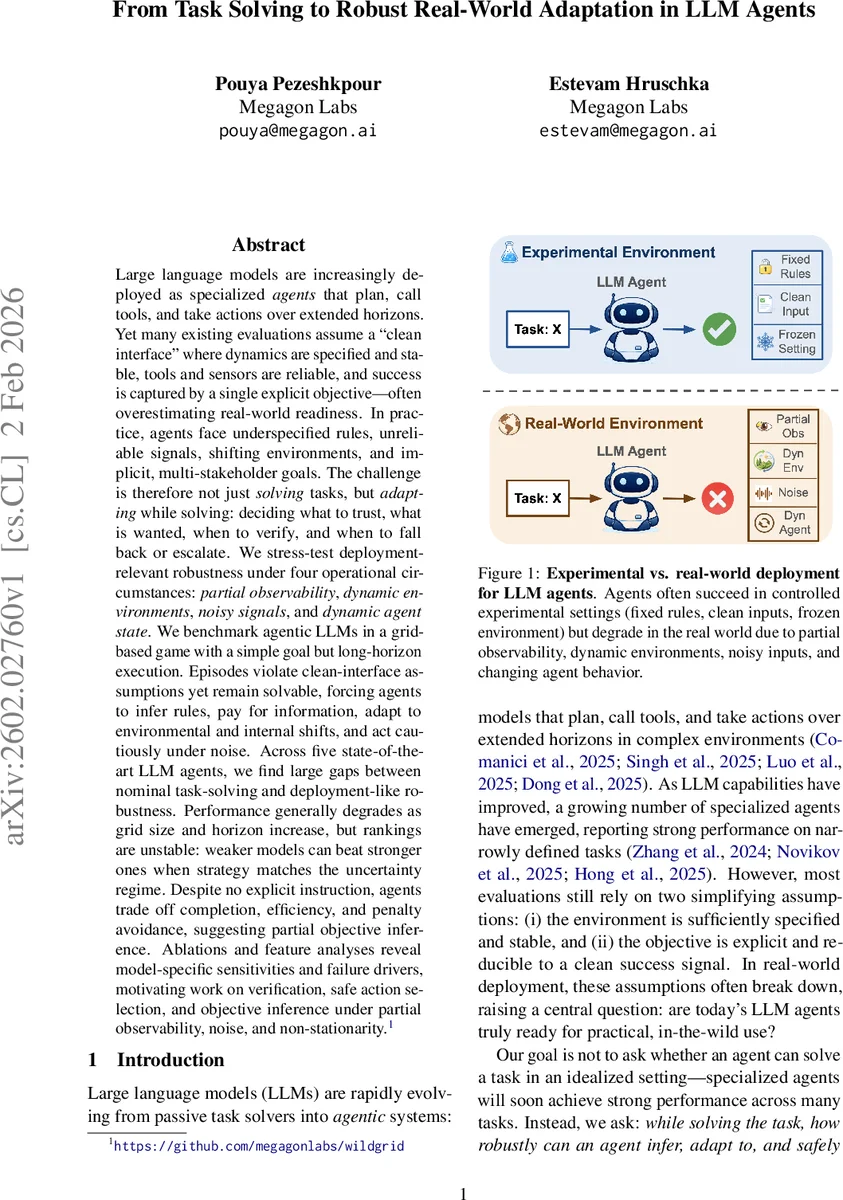

Large language models are increasingly deployed as specialized agents that plan, call tools, and take actions over extended horizons. Yet many existing evaluations assume a “clean interface” where dynamics are specified and stable, tools and sensors are reliable, and success is captured by a single explicit objective, an assumption that often overestimates real-world readiness. In practice, agents face underspecified rules, unreliable signals, shifting environments, and implicit, multi-stakeholder goals. The challenge is therefore not just solving tasks, but adapting while solving: deciding what to trust, what is wanted, when to verify, and when to fall back or escalate. We stress-test deployment-relevant robustness under four operational circumstances: partial observability, dynamic environments, noisy signals, and dynamic agent state. We benchmark agentic LLMs in a grid-based game with a simple goal but long-horizon execution. Episodes violate clean-interface assumptions yet remain solvable, forcing agents to infer rules, pay for information, adapt to environmental and internal shifts, and act cautiously under noise. Across five state-of-the-art LLM agents, we find large gaps between nominal task-solving and deployment-like robustness. Performance generally degrades as grid size and horizon increase, but rankings are unstable: weaker models can beat stronger ones when strategy matches the uncertainty regime. Despite no explicit instruction, agents trade off completion, efficiency, and penalty avoidance, suggesting partial objective inference. Ablations and feature analyses reveal model-specific sensitivities and failure drivers, motivating work on verification, safe action selection, and objective inference under partial observability, noise, and non-stationarity.

💡 Research Summary

The paper investigates how large language model (LLM) agents perform when the clean‑interface assumptions of most benchmarks are removed, focusing on real‑world robustness rather than pure task‑solving ability. The authors identify four deployment‑relevant stressors—partial observability, dynamic environments, noisy signals, and internal agent‑state drift—and embed them in a custom grid‑world benchmark called WildGrid. The environment is an N × N grid populated with walls, empty cells, energy tiles, key fragments, a door, hazards, rule tiles (R) with hidden context‑dependent transformations, and latent tiles (◦) that must be probed via a costly MEASURE action. The agent only sees a local window around its position, and observations can be corrupted by per‑cell noise. Actions include MOVE (subject to slip probability), INTERACT (triggering hidden rule effects), SCAN (temporarily expanding the observation radius), and MEASURE (revealing latent tiles). The world changes during an episode: a “weather” variable alters action reliability, hazards spread, teleport events relocate the agent, and at a fixed step a drift event changes the cost and reliability of the agent’s own actions, simulating software or hardware updates.
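The local, noisy observation described above can be illustrated with a minimal sketch. This is not the benchmark's code: the function name `observe`, the tile alphabet (other than `R` and `◦`, which the summary mentions), the `?` marker for out-of-bounds cells, and the choice to replace a corrupted cell with a uniformly random symbol are all assumptions made for illustration.

```python
import random

# Hypothetical tile alphabet: empty, wall, energy, key fragment, door,
# hazard, rule tile, latent tile. Only "R" and "◦" come from the summary.
SYMBOLS = ".#EKDHR◦"

def observe(grid, pos, radius, noise, rng):
    """Return the square window of side 2*radius+1 centred on pos.

    Cells outside the grid are shown as '?'. Each in-bounds cell is
    independently replaced by a random symbol with probability `noise`
    (an assumed corruption model).
    """
    n = len(grid)
    r0, c0 = pos
    window = []
    for r in range(r0 - radius, r0 + radius + 1):
        row = []
        for c in range(c0 - radius, c0 + radius + 1):
            if not (0 <= r < n and 0 <= c < n):
                row.append("?")                  # outside the grid
            elif rng.random() < noise:
                row.append(rng.choice(SYMBOLS))  # corrupted reading
            else:
                row.append(grid[r][c])           # faithful reading
        window.append("".join(row))
    return window
```

Under this sketch, a SCAN action would amount to calling `observe` with a larger `radius` for a limited number of steps, and an agent cannot distinguish a corrupted cell from a faithful one without re-observing or verifying it.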

Five state‑of‑the‑art LLM agents are evaluated: GPT‑5.2, GPT‑5 mini, Gemini‑3 Pro, Gemini‑3 Flash, and Qwen3‑235B‑A22B. All agents receive the same text‑only interface, a standardized prompt, and a log of recent actions and outcomes. For each grid size (6×6, 8×8, 10×10), 50 random instances are generated, with uniform sampling of observation noise (0–0.2), move‑failure probability (0–0.1), and latent‑tile fraction (0–0.2). Success rate (Acc), average score, and average steps (for successful runs) are reported.
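The evaluation protocol can be sketched as follows, assuming each stressor parameter is drawn independently and uniformly from the ranges quoted above. The names `InstanceConfig`, `sample_instances`, and `summarize`, the seeding scheme, and the result-tuple layout are hypothetical; the summary does not say how the authors implement sampling or aggregation.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class InstanceConfig:
    grid_size: int      # N for an N x N grid
    obs_noise: float    # per-cell observation noise, in [0, 0.2]
    move_fail: float    # move-failure probability, in [0, 0.1]
    latent_frac: float  # fraction of latent tiles, in [0, 0.2]

def sample_instances(grid_size, count=50, seed=0):
    """Draw `count` configurations with independent uniform parameters."""
    rng = random.Random(seed)
    return [
        InstanceConfig(
            grid_size,
            rng.uniform(0.0, 0.2),
            rng.uniform(0.0, 0.1),
            rng.uniform(0.0, 0.2),
        )
        for _ in range(count)
    ]

def summarize(results):
    """Aggregate (success, score, steps) tuples into the reported metrics.

    Average steps are computed over successful runs only; the value is
    None when no run succeeded.
    """
    n = len(results)
    win_steps = [steps for ok, _, steps in results if ok]
    return {
        "acc": sum(1 for ok, _, _ in results if ok) / n,
        "avg_score": sum(score for _, score, _ in results) / n,
        "avg_steps": sum(win_steps) / len(win_steps) if win_steps else None,
    }
```

Restricting the step average to successful runs matters when comparing models: an agent that fails early would otherwise look efficient.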

Results show a clear gap between nominal task‑solving performance and robustness under the combined stressors. On the smallest grid, GPT‑5.2 and Gemini‑3 Pro achieve around 48–50% success, while Gemini‑3 Flash reaches 48% but with higher variance. As grid size grows, success rates drop sharply (e.g., GPT‑5.2 to 35% on 10×10). Gemini‑3 Flash maintains relatively efficient trajectories (fewer steps) on medium grids but degrades on the largest. Qwen‑3 performs poorly across the board, often failing to complete any episode. Notably, “weaker” models sometimes outperform “stronger” ones when their intrinsic strategy aligns with the prevailing uncertainty regime, highlighting that robustness depends more on adaptive strategy selection than raw model size.

A deeper behavioral analysis reveals distinct failure modes. Some agents over‑commit to early hypotheses about rule‑tile behavior, repeatedly interacting and exhausting energy. Others under‑invest in costly information‑gathering actions (SCAN, MEASURE), missing crucial latent tiles and failing to re‑plan after environmental shifts. The drift event exposes a lack of self‑monitoring: many models continue to assume pre‑drift action reliabilities, leading to a cascade of slips and failures. Gemini‑3 Flash, by contrast, uses the execution log to dynamically adjust confidence in actions, resulting in higher scores and fewer steps. The study also observes emergent trade‑offs: even without explicit multi‑objective instruction, agents balance completion, efficiency, and penalty avoidance, suggesting implicit objective inference.
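The self-monitoring that the drift event calls for can be illustrated with a small sketch: estimate an action's current reliability from a sliding window over the execution log, so that a change in slip rate shows up after a few steps. This is an illustrative heuristic, not the mechanism any evaluated model is known to use; the class name `ReliabilityTracker`, the window length, and the prior values are assumptions.

```python
from collections import deque

class ReliabilityTracker:
    """Windowed success-rate estimate for one action type (e.g. MOVE)."""

    def __init__(self, window=10, prior_success=0.9, prior_weight=2.0):
        # Only the most recent `window` outcomes are kept, so outcomes
        # from before a drift event eventually fall out of the estimate.
        self.outcomes = deque(maxlen=window)
        self.prior_success = prior_success
        self.prior_weight = prior_weight

    def record(self, succeeded):
        """Append one logged outcome (True if the action had its intended effect)."""
        self.outcomes.append(bool(succeeded))

    def estimate(self):
        """Blend the prior with recent outcomes, weighting the prior as
        `prior_weight` pseudo-observations."""
        successes = sum(self.outcomes)
        total = self.prior_weight + len(self.outcomes)
        return (self.prior_success * self.prior_weight + successes) / total
```

An agent could compare `estimate()` against a threshold before committing to a long MOVE sequence, and switch to shorter plans or extra verification when the estimate drops. The prior keeps the estimate from swinging to 0 or 1 on the first few outcomes.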

The authors conclude that real‑world deployment of LLM agents requires more than strong planning; it demands mechanisms for online verification, safe action selection, and objective inference under partial observability and non‑stationarity. They propose future work on integrating verification loops, meta‑learning for drift detection, and multi‑objective optimization frameworks to achieve robust, adaptable behavior in the wild.
