Dynamic Mix Precision Routing for Efficient Multi-step LLM Interaction

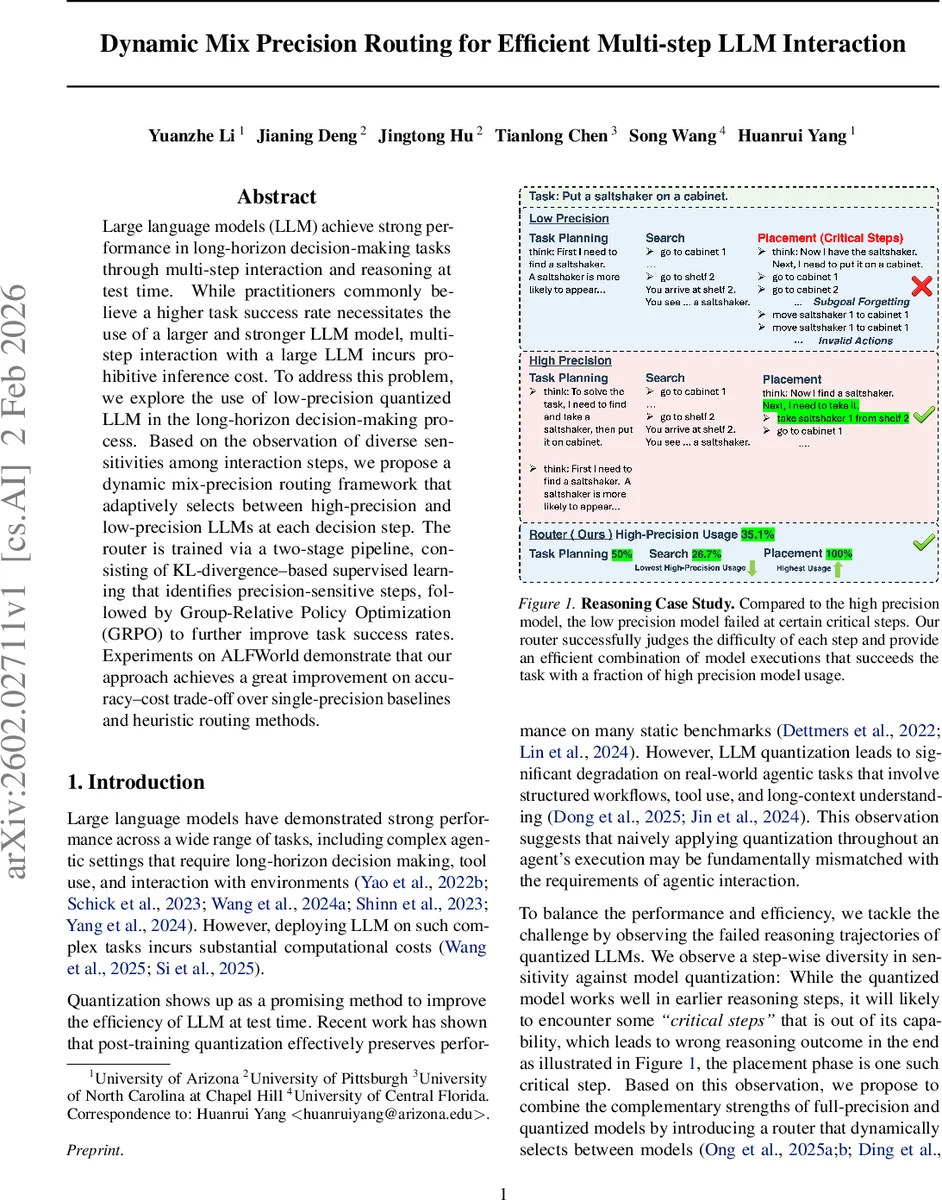

Large language models (LLM) achieve strong performance in long-horizon decision-making tasks through multi-step interaction and reasoning at test time. While practitioners commonly believe a higher task success rate necessitates the use of a larger and stronger LLM model, multi-step interaction with a large LLM incurs prohibitive inference cost. To address this problem, we explore the use of low-precision quantized LLM in the long-horizon decision-making process. Based on the observation of diverse sensitivities among interaction steps, we propose a dynamic mix-precision routing framework that adaptively selects between high-precision and low-precision LLMs at each decision step. The router is trained via a two-stage pipeline, consisting of KL-divergence-based supervised learning that identifies precision-sensitive steps, followed by Group-Relative Policy Optimization (GRPO) to further improve task success rates. Experiments on ALFWorld demonstrate that our approach achieves a great improvement on accuracy-cost trade-off over single-precision baselines and heuristic routing methods.

💡 Research Summary

**

The paper tackles the high inference cost of using large language models (LLMs) for long‑horizon, interactive decision‑making tasks such as those found in embodied text environments (e.g., ALFWorld). While a single high‑precision model (e.g., bf16) yields strong performance, it is computationally expensive; conversely, a low‑precision quantized model (e.g., 3‑bit) is cheap but suffers catastrophic failures on a few critical reasoning steps. The authors observe that the divergence between high‑ and low‑precision models is highly skewed: most steps have negligible KL‑divergence, but a small tail of steps exhibits large divergence that aligns with “critical” decisions where a low‑precision model would otherwise derail the entire episode.

To exploit this observation, they propose a Dynamic Mix‑Precision Routing framework that decides, at each interaction step, whether to invoke the high‑precision or low‑precision version of the same base model. The routing policy (R_\theta(s_t)) maps the current textual observation (task description, environment state, and history) to a model index. The policy is a lightweight two‑layer Transformer encoder that processes a sequence of step‑level embeddings (pre‑computed by a frozen text encoder) and outputs a categorical distribution over precision levels via a softmax on the hidden state of the most recent valid token.

Training proceeds in two stages:

-

KL‑Supervised Training (KL‑ST) – High‑precision rollouts are collected on successful episodes. For each step, the KL‑divergence between the action distributions of the low‑precision and high‑precision models is computed. The divergence values are transformed into binary labels (high‑precision needed vs. low‑precision sufficient) by thresholding the empirical cumulative distribution. The router is then trained with a weighted cross‑entropy loss to predict these labels, providing a stable initialization that captures step‑wise precision sensitivity.

-

Group‑Relative Policy Optimization (GRPO) – After KL‑ST, the router is fine‑tuned using a reinforcement‑learning objective that directly maximizes task success while penalizing inference cost. GRPO operates on groups of trajectories, using relative returns within each group as advantage estimates, thus avoiding the need for a value function—a crucial benefit in sparse‑reward, long‑horizon settings. The overall objective is (\max_\theta \mathbb{E}

Comments & Academic Discussion

Loading comments...

Leave a Comment