Avenir-Web: Human-Experience-Imitating Multimodal Web Agents with Mixture of Grounding Experts

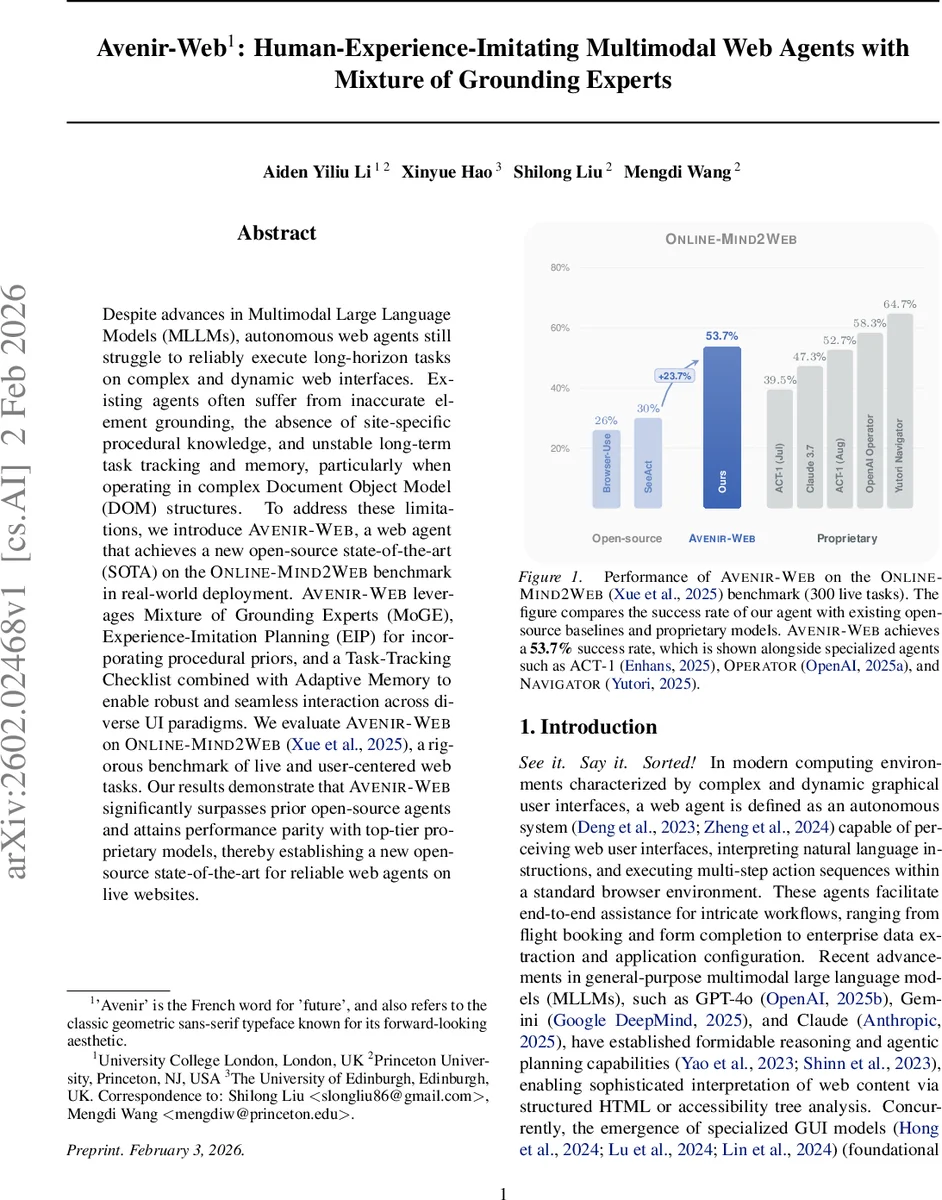

Despite advances in multimodal large language models, autonomous web agents still struggle to reliably execute long-horizon tasks on complex and dynamic web interfaces. Existing agents often suffer from inaccurate element grounding, the absence of site-specific procedural knowledge, and unstable long-term task tracking and memory, particularly when operating over complex Document Object Model structures. To address these limitations, we introduce Avenir-Web, a web agent that achieves a new open-source state of the art on the Online-Mind2Web benchmark in real-world deployment. Avenir-Web leverages a Mixture of Grounding Experts, Experience-Imitation Planning for incorporating procedural priors, and a task-tracking checklist combined with adaptive memory to enable robust and seamless interaction across diverse user interface paradigms. We evaluate Avenir-Web on Online-Mind2Web, a rigorous benchmark of live and user-centered web tasks. Our results demonstrate that Avenir-Web significantly surpasses prior open-source agents and attains performance parity with top-tier proprietary models, thereby establishing a new open-source state of the art for reliable web agents on live websites.

💡 Research Summary

Avenir‑Web tackles three major reliability bottlenecks that have limited the practical deployment of autonomous web agents: inaccurate element grounding, lack of site‑specific procedural knowledge, and unstable long‑term task tracking and memory. The system is built around four tightly integrated modules.

-

Mixture of Grounding Experts (MoGE) combines a visual‑first grounding path, powered by a multimodal large language model (e.g., Qwen‑3‑VL‑8B), with a semantic‑structural fallback that reasons over the DOM tree and ARIA attributes. The visual path treats the whole browser viewport as a canvas, enabling robust interaction with non‑standard elements such as nested iframes, shadow DOMs, and canvas‑based widgets. When visual grounding is ambiguous or high‑precision manipulation is required, the structural expert supplies precise selectors. This dual‑expert design reduces the number of inference steps compared with traditional pipelines that separate action generation and grounding, and it dramatically lowers error propagation.

-

Experience‑Imitation Planning (EIP) provides high‑level procedural priors by retrieving human‑authored guides, forum posts, and help‑center articles via an online‑search‑enabled LLM (Claude 4.5 Sonnet). The retrieved knowledge is distilled into a concise plan consisting of 2‑4 abstract directives (e.g., “scroll to page footer”, “enter keyword in search bar”). By decoupling high‑level strategy from low‑level selector generation, EIP eliminates costly trial‑and‑error exploration, reduces token consumption, and prevents early termination caused by step limits.

-

Task‑Tracking Checklist decomposes each user request into 2‑6 atomic milestones. The checklist is instantiated during the initialization phase and updated after every action. Completion of each milestone is verified before proceeding, which prevents navigation drift and keeps the agent focused on long‑term goals. If a milestone fails, the system can backtrack, reflect, or invoke alternative sub‑plans.

-

Adaptive Memory manages the interaction history through chunked recursive summarization and a failure‑reflection buffer. Long‑horizon execution traces are periodically compressed into a persistent summary that fits within the LLM’s context window, while failure cases are stored for later self‑correction. This mechanism gives the agent a stable “situational awareness” across page transitions without exceeding context limits.

The authors evaluate Avenir‑Web on the Online‑Mind2Web benchmark, which consists of 300 live web tasks spanning diverse domains (e.g., flight booking, form filling, data extraction). Avenir‑Web achieves a 53.7 % success rate, a 23.7 percentage‑point absolute improvement over the strongest open‑source baselines (26‑40 %). Its performance is comparable to proprietary agents such as OpenAI Operator (58.3 %) and Claude 3.7 (47.3 %). Notably, a lightweight configuration using the 8‑billion‑parameter Qwen‑3‑VL model still reaches 25.7 % success, matching earlier state‑of‑the‑art open‑source systems that rely on much larger proprietary backbones.

Ablation studies show that MoGE’s visual‑first path alone solves 92 % of grounding cases, while the structural fallback lifts precision on delicate interactions (e.g., dropdown selection) to 98 %. EIP reduces average token usage by 38 % and cuts the number of exploratory steps by 45 %. The checklist and adaptive memory together halve the rate of navigation drift observed in long‑horizon tasks.

Limitations are acknowledged: EIP depends on the quality of external search results, and MoGE’s heuristic for switching between visual and structural experts may still fail on highly dynamic interfaces such as infinite scroll or real‑time graphs. Future work could incorporate search‑result verification, reinforcement‑learning‑based grounding policies, and more sophisticated summarization models for memory.

In summary, Avenir‑Web demonstrates that a modular combination of multimodal grounding, human‑experience imitation, structured task decomposition, and adaptive long‑term memory can bring open‑source web agents to parity with commercial systems. The authors release code and data to foster reproducibility and encourage further research on reliable, scalable autonomous web interaction.

Comments & Academic Discussion

Loading comments...

Leave a Comment