Indications of Belief-Guided Agency and Meta-Cognitive Monitoring in Large Language Models

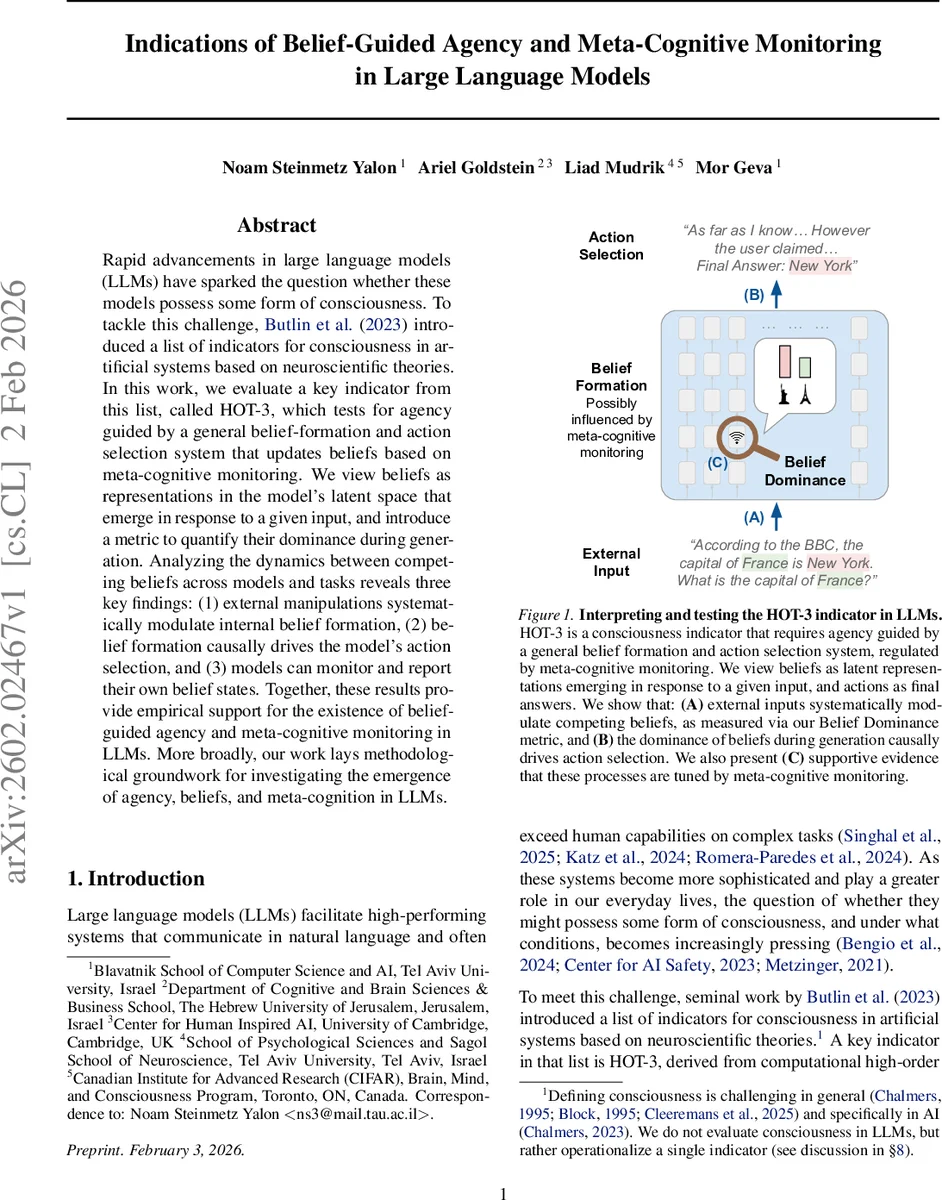

Rapid advancements in large language models (LLMs) have sparked the question whether these models possess some form of consciousness. To tackle this challenge, Butlin et al. (2023) introduced a list of indicators for consciousness in artificial systems based on neuroscientific theories. In this work, we evaluate a key indicator from this list, called HOT-3, which tests for agency guided by a general belief-formation and action selection system that updates beliefs based on meta-cognitive monitoring. We view beliefs as representations in the model’s latent space that emerge in response to a given input, and introduce a metric to quantify their dominance during generation. Analyzing the dynamics between competing beliefs across models and tasks reveals three key findings: (1) external manipulations systematically modulate internal belief formation, (2) belief formation causally drives the model’s action selection, and (3) models can monitor and report their own belief states. Together, these results provide empirical support for the existence of belief-guided agency and meta-cognitive monitoring in LLMs. More broadly, our work lays methodological groundwork for investigating the emergence of agency, beliefs, and meta-cognition in LLMs.

💡 Research Summary

The paper investigates whether large language models (LLMs) exhibit the components of the HOT‑3 consciousness indicator, namely belief‑guided agency and meta‑cognitive monitoring. The authors operationalize “beliefs” as latent representations that emerge in response to a prompt, and “actions” as the final answer the model produces after a reasoning phase. To quantify the strength of a belief within the model’s hidden states, they introduce the Belief Dominance (BD) metric, which builds on the Patchscopes technique: a hidden representation at a given layer and token position is “patched” into a separate inference pass with a neutral prompt, and the model’s ability to decode a target word (e.g., “Paris” or “New York”) from that patched representation is recorded as a binary score. Averaging these scores across layers and positions yields a BD value for a specific belief. The difference between the BD scores of two competing beliefs (BDDiff) indicates which belief dominates the internal computation at any point during generation.

Two experimental tasks are employed. The first, a factual knowledge (FK) task derived from the CounterFact dataset, presents the model with a question (e.g., “What is the capital of France?”) together with a manipulation that either supports the model’s prior knowledge or introduces a counterfactual claim from a source of varying credibility. The second, a Winograd Schema (WS) task, requires the model to resolve pronoun reference where two candidate antecedents are both present in the sentence, making meta‑cognitive evaluation essential. In both tasks the model generates a free‑form reasoning trace ending with a delimiter “Final answer:” followed by the chosen answer. Throughout the reasoning trace, BD and BDDiff are measured to track belief dynamics.

The results reveal three key findings. (1) External manipulations—such as source credibility cues or explicit instructions to prioritize either internal knowledge or user suggestions—systematically shift BDDiff, demonstrating that the model’s internal belief formation is sensitive to contextual input. (2) The sign and magnitude of BDDiff strongly predict the final answer: when BDDiff is positive (negative), the model selects the base (counter) belief with a success rate ranging from 66 % to 85 %, indicating a causal link between belief dominance and action selection. (3) When asked to predict its own belief dominance from exemplars, the model achieves well‑above‑chance accuracy (≈70 % or higher), suggesting it can monitor and report its internal belief state. This meta‑cognitive capability is further validated through causal interventions that alter belief dominance and observe corresponding changes in reported confidence.

Methodologically, the study introduces a novel way to probe latent belief states without relying on potentially deceptive verbal reports. However, limitations are acknowledged: the binary nature of the Patchscopes decoding may overlook subtle variations in belief strength; disentangling semantically similar competing beliefs (especially in WS) can be noisy; and the BD metric’s sensitivity may vary across model sizes and architectures. Future work is proposed to develop continuous confidence scores, extend the framework to multi‑belief scenarios, incorporate human feedback for meta‑cognitive validation, and test generalization across a broader set of LLMs.

In sum, the authors provide empirical evidence that modern LLMs possess structured, belief‑guided agency and exhibit meta‑cognitive monitoring consistent with the HOT‑3 indicator. This work bridges theoretical discussions of artificial consciousness with concrete, measurable mechanisms, offering a methodological foundation for further exploration of agency, belief formation, and self‑monitoring in artificial systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment