Implicit neural representation of textures

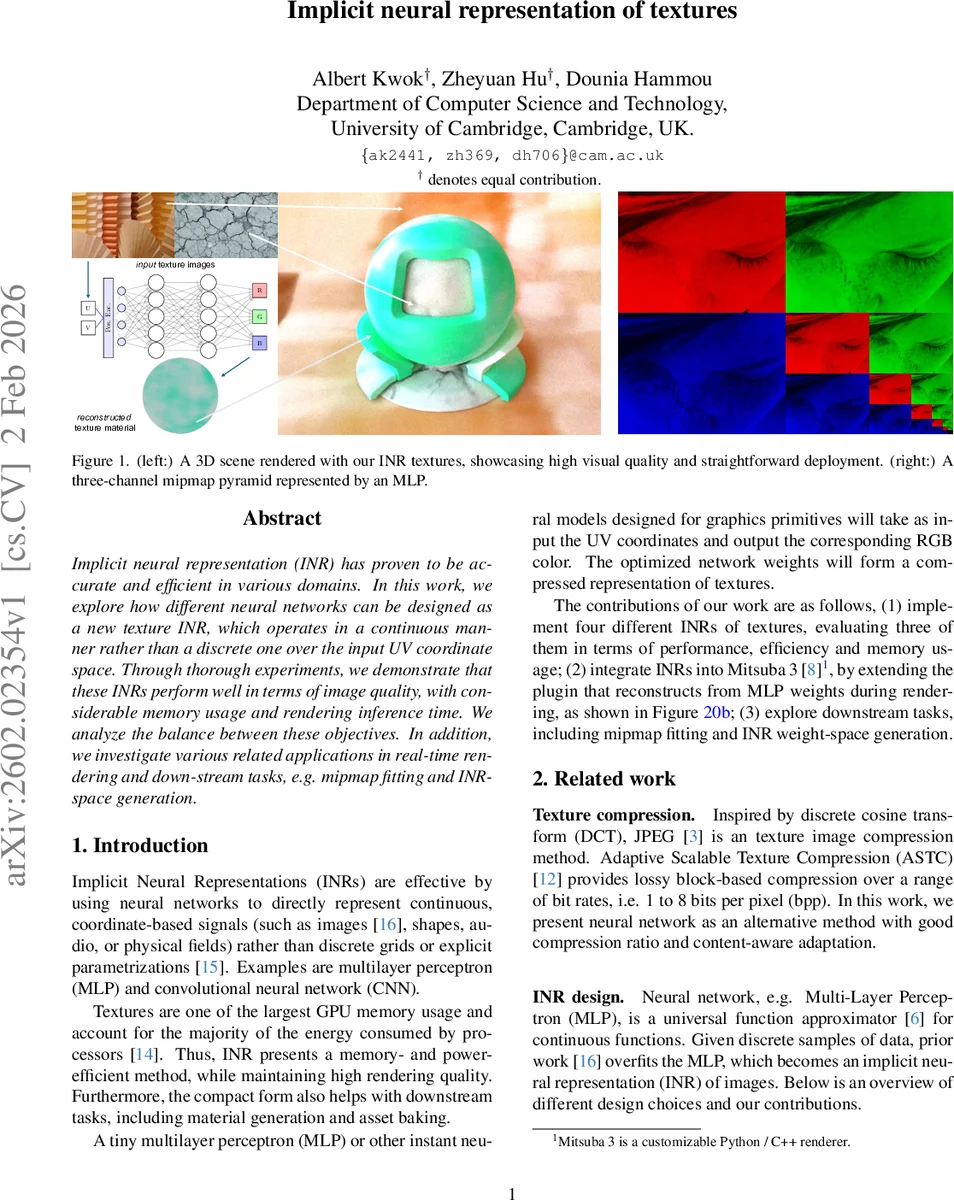

Implicit neural representations (INRs) have proven to be accurate and efficient in various domains. In this work, we explore how different neural networks can be designed as a new texture INR, which operates in a continuous manner rather than a discrete one over the input UV coordinate space. Through thorough experiments, we demonstrate that these INRs perform well in terms of image quality, with considerable memory usage and rendering inference time. We analyze the balance between these objectives. In addition, we investigate various related applications in real-time rendering and downstream tasks, e.g., mipmap fitting and INR-space generation.

💡 Research Summary

This paper investigates the use of implicit neural representations (INRs) for texture storage and rendering, proposing several multilayer perceptron (MLP) based models that map continuous UV coordinates to RGB colors. The authors first construct a benchmark set from the Describable Textures Dataset (DTD), selecting 25 diverse textures based on Laplacian variance to ensure a range of frequencies and complexities. Four network designs are implemented: (1) a plain MLP without any positional encoding, (2) a SIREN network that uses sinusoidal activations, (3) an MLP augmented with Fourier feature encoding, and (4) a variant with multiresolution hash encoding (this last design is described but not evaluated, due to limited texture resolution).
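To make design (3) concrete, here is a minimal sketch of a Fourier feature encoding for a single UV coordinate. It assumes power-of-two frequencies (2^k · π), a common choice in the positional-encoding literature; the summary does not state the paper's actual frequency schedule, and the function name `fourier_features` and the parameter `num_freqs` are hypothetical.

```python
import math

def fourier_features(u, v, num_freqs=4):
    """Encode a UV coordinate in [0, 1]^2 as sines and cosines.

    Assumption: frequencies are 2^k * pi for k = 0 .. num_freqs - 1.
    The paper's own frequency schedule is not given in this summary.
    """
    feats = []
    for k in range(num_freqs):
        freq = (2.0 ** k) * math.pi
        for x in (u, v):
            feats.append(math.sin(freq * x))
            feats.append(math.cos(freq * x))
    return feats

# 2 coordinates * 2 functions (sin, cos) * 4 frequencies = 16 features
enc = fourier_features(0.25, 0.5, num_freqs=4)
print(len(enc))
```

The encoded vector, rather than the raw (u, v) pair, would then be fed to the MLP. The higher-frequency terms give the network a direct handle on fine detail, which is consistent with the plain MLP's blurrier results reported in this summary.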

Model capacity is varied by changing depth (1–3 hidden layers) and width (128, 256, 512 units). Both Adam and Rprop optimizers are tested, with Adam consistently delivering higher PSNR, SSIM, and perceptual scores. Training runs for 50 epochs on an RTX 5080Ti GPU, achieving roughly 0.5–2 iterations per second, which translates to 50–200 seconds per texture, a cost acceptable for offline preprocessing but not for real‑time learning.

Performance is measured using pixel‑wise metrics (MAE, MSE, PSNR) and perceptual metrics (SSIM, LPIPS, VMAF). Results show that the Fourier‑encoded MLP achieves the best trade‑off between bitrate and quality: LPIPS values approach zero even at modest bits‑per‑pixel, indicating near‑perfect perceptual fidelity. SIREN follows closely, while the plain MLP lags significantly, producing blurry outputs and failing to capture high‑frequency details. The Fourier model sometimes exhibits faint grid‑like artifacts early in training, but these diminish after convergence; SIREN can generate “lumpy” artifacts that persist on highly geometric textures.
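For reference, the three pixel‑wise metrics follow directly from their standard definitions. The sketch below works on flat lists of values normalized to [0, 1]; the sample values are made up for illustration and are not results from the paper. The perceptual metrics (SSIM, LPIPS, VMAF) need dedicated implementations and are not reproduced here.

```python
import math

def mae(reference, predicted):
    """Mean absolute error between two equal-length sequences."""
    return sum(abs(r - p) for r, p in zip(reference, predicted)) / len(reference)

def mse(reference, predicted):
    """Mean squared error between two equal-length sequences."""
    return sum((r - p) ** 2 for r, p in zip(reference, predicted)) / len(reference)

def psnr(reference, predicted, peak=1.0):
    """Peak signal-to-noise ratio in dB; `peak` is the maximum possible value."""
    err = mse(reference, predicted)
    if err == 0:
        return float("inf")  # identical signals
    return 10.0 * math.log10(peak ** 2 / err)

# Illustrative values only, not data from the paper.
reference = [0.0, 0.5, 1.0, 0.25]
predicted = [0.1, 0.5, 0.9, 0.25]
print(mae(reference, predicted), mse(reference, predicted), psnr(reference, predicted))
```

Because PSNR is a logarithmic function of MSE, the two always rank models identically on a given image. LPIPS and SSIM can disagree with them, which is why the summary reports both families of metrics.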

When compared against Adaptive Scalable Texture Compression (ASTC), the INR approaches deliver higher perceptual quality (especially LPIPS) at comparable bitrates, while SSIM and PSNR gains are modest but consistent. The authors also integrate their INR into the Mitsuba 3 renderer, demonstrating that a single forward pass of the MLP can replace traditional texture lookup with acceptable rendering times (≈4.7 s for a full image on an Apple M1 CPU at one sample per pixel).
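A rough way to place an INR and ASTC on the same bitrate axis is to divide the stored bits by the number of texels. The sketch below rests on assumptions not confirmed by the summary: weights stored as 32‑bit floats, a plain MLP with 2 inputs and 3 outputs, and a hypothetical 512×512 texture. The ASTC side uses the format's fixed 128 bits per block. All function names are illustrative.

```python
def mlp_param_count(in_dim, hidden_width, hidden_layers, out_dim=3):
    """Weights plus biases of a fully connected MLP."""
    dims = [in_dim] + [hidden_width] * hidden_layers + [out_dim]
    return sum(a * b + b for a, b in zip(dims[:-1], dims[1:]))

def inr_bits_per_pixel(params, width, height, bits_per_param=32):
    """Assumption: every parameter is stored at `bits_per_param` bits."""
    return params * bits_per_param / (width * height)

def astc_bits_per_pixel(block_w, block_h):
    """ASTC encodes every block in 128 bits, whatever its footprint."""
    return 128 / (block_w * block_h)

# Hypothetical 512x512 texture; depth and width taken from the ranges above.
small = mlp_param_count(in_dim=2, hidden_width=128, hidden_layers=1)
large = mlp_param_count(in_dim=2, hidden_width=256, hidden_layers=2)
print(small, inr_bits_per_pixel(small, 512, 512))
print(large, inr_bits_per_pixel(large, 512, 512))
print(astc_bits_per_pixel(4, 4), astc_bits_per_pixel(12, 12))
```

Under these assumptions the network size, not the texture resolution, fixes the storage cost, so the same MLP becomes cheaper per pixel as the texture grows. An input encoding changes `in_dim` and therefore the parameter count.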

A novel contribution is the “mipmap fitting” experiment: an additional scalar input t∈
