DFKI-Speech System for WildSpoof Challenge: A robust framework for SASV In-the-Wild

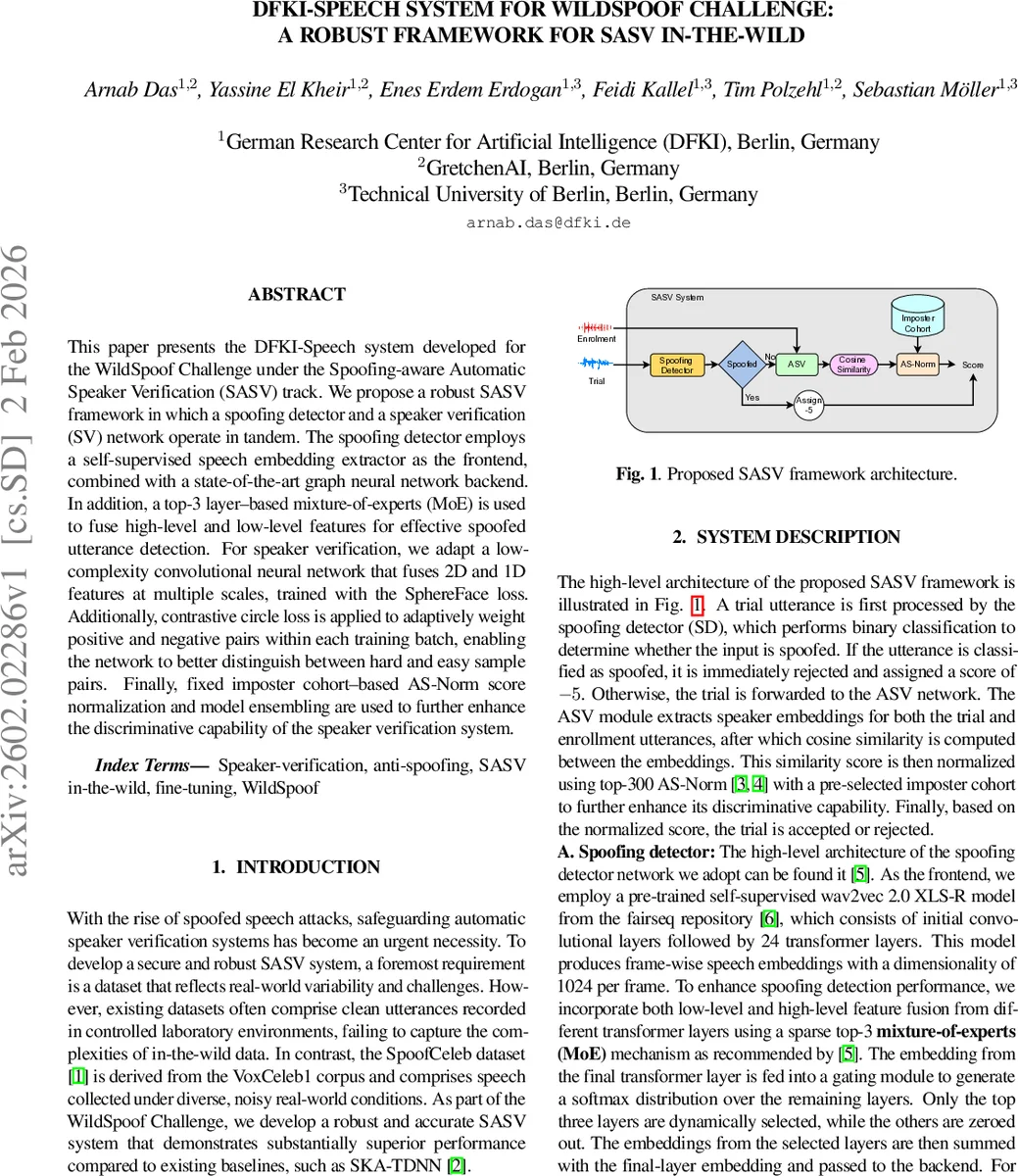

This paper presents the DFKI-Speech system developed for the WildSpoof Challenge under the Spoofing aware Automatic Speaker Verification (SASV) track. We propose a robust SASV framework in which a spoofing detector and a speaker verification (SV) network operate in tandem. The spoofing detector employs a self-supervised speech embedding extractor as the frontend, combined with a state-of-the-art graph neural network backend. In addition, a top-3 layer based mixture-of-experts (MoE) is used to fuse high-level and low-level features for effective spoofed utterance detection. For speaker verification, we adapt a low-complexity convolutional neural network that fuses 2D and 1D features at multiple scales, trained with the SphereFace loss. Additionally, contrastive circle loss is applied to adaptively weight positive and negative pairs within each training batch, enabling the network to better distinguish between hard and easy sample pairs. Finally, fixed imposter cohort based AS Norm score normalization and model ensembling are used to further enhance the discriminative capability of the speaker verification system.

💡 Research Summary

The paper introduces the DFKI‑Speech system, a Spoof‑aware Automatic Speaker Verification (SASV) solution designed for the WildSpoof Challenge, which targets realistic “in‑the‑wild” conditions with diverse noise and channel variations. The proposed architecture tightly couples a spoofing detector and a speaker verification (SV) module, allowing them to operate in tandem while sharing a common decision pipeline.

Spoofing detector – The front‑end uses a pre‑trained wav2vec 2.0‑XL model (10 24‑dimensional embeddings) to extract rich, self‑supervised speech representations. To avoid the computational burden of processing all transformer layers, a top‑3 layer Mixture‑of‑Experts (MoE) mechanism learns sparse weights and selects only the three most informative layers for each utterance. These selected embeddings are fed into a graph neural network (GNN) based AA‑SIST backend, which models inter‑frame relationships and captures subtle artefacts typical of spoofed audio. The detector is trained with a binary cross‑entropy loss to discriminate spoof versus bona‑fide speech.

Speaker verification – The SV front‑end is a low‑complexity convolutional network called ReDimNet. It processes mel‑spectrograms simultaneously as 1‑D sequences (via transformer‑style self‑attention blocks) and 2‑D images (via multi‑scale ConvNeXt blocks). The two streams are merged through scalar‑weighted residual connections, producing a fused embedding that encodes both spectral and temporal speaker characteristics. Training employs a combination of SphereFace loss (angular margin with scale = 30 and margin = 1.5) and Contrastive Circle loss, which dynamically re‑weights positive and negative pairs in each batch, emphasizing hard positives and hard negatives. This dual‑loss strategy pushes embeddings of the same speaker apart from impostors while maintaining compact intra‑speaker clusters.

Score normalization and ensembling – After obtaining a spoofing score and an SV similarity score, the system applies AS‑Norm using a fixed imposter cohort. This normalizes cosine similarities by cohort mean and standard deviation, reducing sensitivity to channel and noise mismatches. Finally, several independently trained instances of the same architecture are ensembled by averaging their normalized scores, which further stabilizes performance.

Experimental setup – The authors train and evaluate on the official WildSpoof evaluation set and the SpoofCeleb dataset (derived from VoxCeleb‑1 with real‑world noise and channel conditions). Audio is resampled to 16 kHz, trimmed/padded to 4 seconds, and augmented with MUSAN background sounds, RIR reverberation, and random gain. Training uses two NVIDIA A100 GPUs, with learning rates of 1e‑6 for the spoof detector and 1e‑4 for the SV network. The SV model is initialized with pretrained weights from a ResNet‑based model trained on VoxCeleb‑2.

Results – Compared with baseline systems (e.g., AS‑V‑DNN, SKA‑T‑DNN), the DFKI‑Speech framework achieves a SASV DCF reduction from 0.036 to 0.032 (≈11 % relative improvement) and lowers SV equal‑error‑rate (EER) from 2.45 % to 2.20 %. Spoof detection DCF drops dramatically from 0.290 to 0.040, demonstrating the effectiveness of the top‑3 MoE and GNN backend. Model ensembling yields a further DCF decrease to around 0.030. Ablation studies confirm that each component contributes meaningfully: replacing wav2vec 2.0‑XL with MFCC degrades DCF to 0.045; removing MoE increases computational load without substantial gain; omitting Circle loss raises SV‑EER to 2.35 %.

Discussion and future work – While the system delivers strong performance, the GNN backend and MoE selection introduce additional computational overhead that may hinder real‑time deployment. Moreover, the detector and verifier are trained separately and combined only at inference time; a joint end‑to‑end training could better exploit cross‑task synergies. Future research directions include lightweight GNN alternatives, reinforcement‑learning‑driven dynamic layer selection, and large‑scale multi‑task training to improve both robustness and efficiency.

In conclusion, the DFKI‑Speech system presents a comprehensive, high‑performing SASV solution for wild‑condition spoofing challenges. By integrating self‑supervised speech embeddings, selective transformer layer fusion, graph‑based spoof detection, multi‑scale speaker verification, angular‑margin and contrastive losses, AS‑Norm, and model ensembling, it surpasses existing baselines and offers a solid foundation for secure, real‑world speaker authentication.

Comments & Academic Discussion

Loading comments...

Leave a Comment