D-CORE: Incentivizing Task Decomposition in Large Reasoning Models for Complex Tool Use

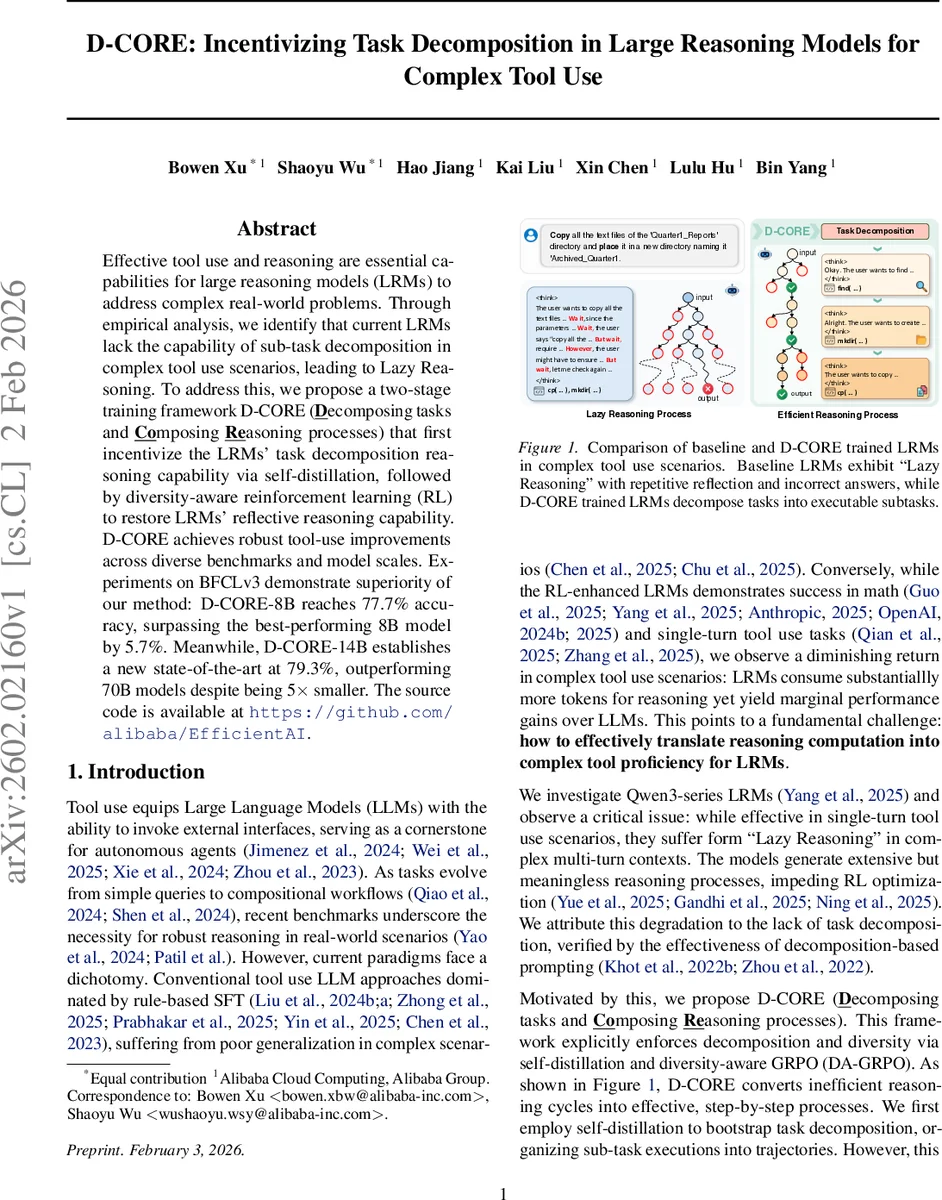

Effective tool use and reasoning are essential capabilities for large reasoning models~(LRMs) to address complex real-world problems. Through empirical analysis, we identify that current LRMs lack the capability of sub-task decomposition in complex tool use scenarios, leading to Lazy Reasoning. To address this, we propose a two-stage training framework D-CORE~(\underline{\textbf{D}}ecomposing tasks and \underline{\textbf{Co}}mposing \underline{\textbf{Re}}asoning processes) that first incentivize the LRMs’ task decomposition reasoning capability via self-distillation, followed by diversity-aware reinforcement learning~(RL) to restore LRMs’ reflective reasoning capability. D-CORE achieves robust tool-use improvements across diverse benchmarks and model scales. Experiments on BFCLv3 demonstrate superiority of our method: D-CORE-8B reaches 77.7% accuracy, surpassing the best-performing 8B model by 5.7%. Meanwhile, D-CORE-14B establishes a new state-of-the-art at 79.3%, outperforming 70B models despite being 5$\times$ smaller. The source code is available at https://github.com/alibaba/EfficientAI.

💡 Research Summary

The paper identifies a critical weakness in large reasoning models (LRMs) when faced with complex, multi‑turn tool‑use tasks: they tend to engage in “Lazy Reasoning,” a mode where the model generates long, repetitive reflection and verification steps without actually decomposing the problem into sub‑tasks. This behavior leads to excessive token consumption and marginal performance gains, especially in scenarios that require planning and sequential tool execution.

To address this, the authors propose D‑CORE, a two‑stage training framework.

Stage 1 – Self‑Distillation: Instead of relying on a stronger teacher model, the LRM itself is prompted to decompose a query into explicit subtasks and to produce a full reasoning trajectory wrapped in

Stage 2 – Diversity‑Aware GRPO (DA‑GRPO): The self‑distilled model exhibits near‑zero reward variance, causing the advantage term in standard GRPO (Generalized PPO) to collapse and gradients to vanish. To restore exploration and reflective reasoning, the authors augment the advantage with an entropy‑based term ψ(H), where H is the token‑level entropy of the model’s output distribution. When the raw advantage falls below a tiny threshold, ψ(H) replaces it (capped by a hyper‑parameter δ). This encourages the generation of high‑entropy tokens, which empirically correlate with more diverse, reflective reasoning. The final objective also includes a KL‑penalty to keep the policy close to a reference distribution.

Experiments are conducted on the BFCLv3 benchmark (Parallel, Irrelevance, Multi‑turn) and τ‑bench, comparing Qwen3‑8B/32B models with the specialized xLAM2 series. Results show:

- D‑CORE‑8B reaches 77.7 % accuracy, a 5.7 % absolute improvement over the best existing 8B model, with especially large gains on multi‑turn tasks.

- D‑CORE‑14B achieves 79.3 % accuracy, surpassing 70B‑scale models despite being five times smaller, establishing a new state‑of‑the‑art.

- Ablation studies reveal that self‑distillation alone improves task decomposition but harms reflective reasoning; adding DA‑GRPO restores diversity, as evidenced by increased token‑entropy and higher reward variance.

- Manual insertion of decomposition prompts further confirms that explicit planning dramatically reduces lazy reasoning and boosts performance.

The contributions are threefold: (1) defining and quantifying “Lazy Reasoning” as a failure mode in complex tool use; (2) introducing a self‑distillation pipeline that eliminates the need for external teachers while teaching the model to plan; (3) proposing an entropy‑aware reinforcement learning objective that prevents gradient collapse and encourages diverse reasoning.

Limitations include reliance on English‑centric benchmarks, sensitivity of entropy‑related hyper‑parameters to model size and data distribution, and a focus on a limited set of tool APIs. Future work should explore multilingual and domain‑specific extensions, automated hyper‑parameter tuning for the entropy term, and integration with human‑in‑the‑loop feedback.

Overall, D‑CORE demonstrates that coupling self‑generated decomposition with entropy‑driven RL can endow relatively small LRMs with the planning and reflective capabilities previously reserved for much larger models, paving the way for more efficient and robust autonomous agents.

Comments & Academic Discussion

Loading comments...

Leave a Comment