HPE: Hallucinated Positive Entanglement for Backdoor Attacks in Federated Self-Supervised Learning

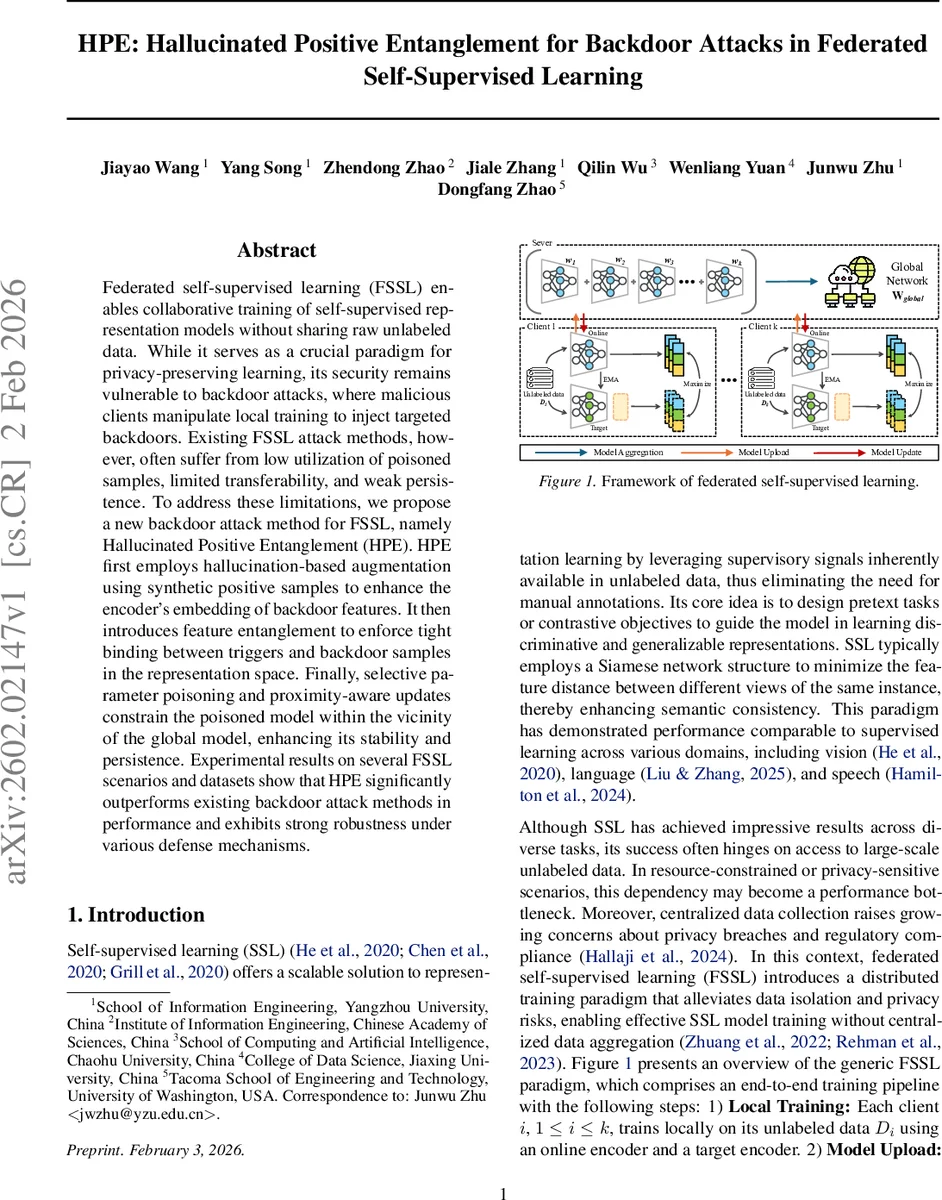

Federated self-supervised learning (FSSL) enables collaborative training of self-supervised representation models without sharing raw unlabeled data. While it serves as a crucial paradigm for privacy-preserving learning, its security remains vulnerable to backdoor attacks, where malicious clients manipulate local training to inject targeted backdoors. Existing FSSL attack methods, however, often suffer from low utilization of poisoned samples, limited transferability, and weak persistence. To address these limitations, we propose a new backdoor attack method for FSSL, namely Hallucinated Positive Entanglement (HPE). HPE first employs hallucination-based augmentation using synthetic positive samples to enhance the encoder’s embedding of backdoor features. It then introduces feature entanglement to enforce tight binding between triggers and backdoor samples in the representation space. Finally, selective parameter poisoning and proximity-aware updates constrain the poisoned model within the vicinity of the global model, enhancing its stability and persistence. Experimental results on several FSSL scenarios and datasets show that HPE significantly outperforms existing backdoor attack methods in performance and exhibits strong robustness under various defense mechanisms.

💡 Research Summary

The paper introduces Hallucinated Positive Entanglement (HPE), a novel backdoor attack framework specifically designed for federated self‑supervised learning (FSSL). Existing attacks on FSSL suffer from low poisoned‑sample utilization and rapid dilution of backdoor signals during the multi‑round model aggregation process. HPE addresses these challenges through three tightly integrated components.

First, the hallucination enhancement module generates synthetic hard‑positive samples. Using a MoCo‑style contrastive learner, the key encoder extracts a feature vector v_k for each poisoned anchor. The features are normalized onto the unit hypersphere, and k‑means clustering over the memory queue yields a set of semantic prototypes P = {P₁,…,P_L}. The algorithm selects the prototype P* closest to v_k and a random base prototype P_base, then traverses the geodesic curve between them to create a hallucinated positive v_H that remains within the same prototype region while being feature‑wise distant from the anchor. This expands the distribution of backdoor‑related representations, allowing a small number of poisoned samples to exert a large influence on the encoder.
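The geodesic step can be illustrated with spherical linear interpolation (slerp) between two unit vectors. The following is a minimal sketch, not the authors' implementation: the function names, the toy prototypes, and the interpolation fraction `t = 0.3` are all assumptions chosen for illustration, and the summary does not specify how far along the geodesic the hallucinated positive is placed.

```python
import math

def normalize(v):
    """Scale a vector to unit length (place it on the unit hypersphere)."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def cosine(a, b):
    """Dot product; equals cosine similarity when a and b are unit vectors."""
    return sum(x * y for x, y in zip(a, b))

def nearest_prototype(v, prototypes):
    """Index of the prototype with the highest cosine similarity to v."""
    return max(range(len(prototypes)), key=lambda i: cosine(v, prototypes[i]))

def slerp(p, q, t):
    """Point at fraction t along the great-circle arc from unit vector p to q."""
    dot = max(-1.0, min(1.0, cosine(p, q)))
    omega = math.acos(dot)
    s = math.sin(omega)
    if s < 1e-8:
        # p and q coincide or are antipodal: the arc is degenerate or not unique
        return list(p)
    a = math.sin((1.0 - t) * omega) / s
    b = math.sin(t * omega) / s
    return [a * x + b * y for x, y in zip(p, q)]

# Toy prototypes standing in for k-means centroids of the memory queue (assumed values).
prototypes = [normalize([1.0, 0.0, 0.0]),
              normalize([0.0, 1.0, 0.0]),
              normalize([0.0, 0.0, 1.0])]
v_k = normalize([0.9, 0.1, 0.2])           # feature of a poisoned anchor (assumed)
star = nearest_prototype(v_k, prototypes)  # index of P*
p_base = prototypes[1]                     # stands in for the randomly chosen P_base
v_h = slerp(prototypes[star], p_base, 0.3) # t < 0.5 keeps v_h closer to P* than to P_base
```

In this toy setup the interpolated point stays on the unit hypersphere and is still assigned to P*, which matches the stated goal of remaining in the same prototype region while moving away from the anchor.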

Second, the feature entanglement loss explicitly binds the trigger‑embedded poisoned samples (x⊕Δ) to the target class y_t in the representation space. By maximizing the cosine similarity between the encoder outputs of poisoned and clean target‑class samples, HPE forces the encoder to map trigger‑containing inputs to the same region as legitimate target‑class embeddings. An auxiliary clean‑sample consistency loss preserves the similarity between the backdoored and benign encoders on unpoisoned data, ensuring stealth.
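One way to read the two objectives is as a pair of cosine-based losses. The sketch below is an assumption-laden illustration rather than the paper's exact formulation: the `1 - cos` form, the pairing of poisoned and target embeddings, and the weight `lam` are hypothetical choices, since the summary only states that cosine similarity is maximized and that clean-sample consistency is preserved.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb)

def mean_cosine_distance(embs_a, embs_b):
    """Average of (1 - cosine similarity) over paired embeddings."""
    pairs = list(zip(embs_a, embs_b))
    return sum(1.0 - cosine_similarity(a, b) for a, b in pairs) / len(pairs)

def entanglement_loss(poisoned_embs, target_embs):
    """Pull embeddings of trigger-stamped inputs toward clean target embeddings."""
    return mean_cosine_distance(poisoned_embs, target_embs)

def consistency_loss(backdoored_clean_embs, benign_clean_embs):
    """Keep the backdoored encoder close to the benign encoder on clean inputs."""
    return mean_cosine_distance(backdoored_clean_embs, benign_clean_embs)

def attack_loss(poisoned_embs, target_embs,
                backdoored_clean_embs, benign_clean_embs, lam=1.0):
    """Weighted sum of both terms; lam is a hypothetical trade-off weight."""
    return (entanglement_loss(poisoned_embs, target_embs)
            + lam * consistency_loss(backdoored_clean_embs, benign_clean_embs))
```

Minimizing the first term is equivalent to maximizing the cosine similarity between poisoned and target embeddings, while the second term penalizes any drift of the backdoored encoder on unpoisoned data.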

Third, HPE employs a dual‑constrained poisoning strategy. The attacker limits overall parameter deviation from the global model using an L2 norm bound, while selectively perturbing low‑variance parameters (e.g., batch‑norm scale and shift, layer‑norm weights). This selective poisoning keeps the global update from drastically altering the backdoor signal, thereby reducing detection probability and enhancing persistence across communication rounds. A proximity‑aware update rule further enforces that the malicious client’s model stays close to the global model, preventing aggressive drift that could trigger aggregation‑level defenses.
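The dual constraint can be sketched as a masked update followed by an L2 projection. This is a minimal illustration under stated assumptions, not the authors' procedure: selecting parameters by the substrings `"bn"` and `"norm"` in their names, the dictionary-of-lists parameter layout, and the `max_norm` value are all hypothetical.

```python
import math

def is_selected(name, keywords=("bn", "norm")):
    """Hypothetical rule: only normalization-layer parameters may be poisoned."""
    return any(k in name for k in keywords)

def constrained_update(global_params, local_params, max_norm, keywords=("bn", "norm")):
    """Keep the malicious deviation only on selected parameters, then rescale
    it so its overall L2 norm relative to the global model is at most max_norm."""
    delta = {}
    for name, g_vals in global_params.items():
        if is_selected(name, keywords):
            delta[name] = [l - g for l, g in zip(local_params[name], g_vals)]
        else:
            delta[name] = [0.0] * len(g_vals)  # unselected parameters stay at global values
    total = math.sqrt(sum(d * d for vals in delta.values() for d in vals))
    scale = min(1.0, max_norm / total) if total > 0 else 1.0
    return {name: [g + scale * d for g, d in zip(g_vals, delta[name])]
            for name, g_vals in global_params.items()}

# Toy example with made-up parameter names and values.
global_params = {"conv1.weight": [0.0, 0.0], "bn1.weight": [1.0, 1.0]}
local_params = {"conv1.weight": [5.0, 5.0], "bn1.weight": [4.0, 5.0]}
submitted = constrained_update(global_params, local_params, max_norm=1.0)
```

Here the deviation on `bn1.weight` has L2 norm 5 and is scaled down to norm 1, while `conv1.weight` is reset to the global values, so the submitted model differs from the global model only in the selected parameters and only within the norm bound.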

The authors evaluate HPE on five vision benchmarks (CIFAR‑10, GTSRB, STL‑10, CIFAR‑100, and ImageNet‑100) under various federated settings (IID, non‑IID, multiple malicious clients). Baselines include BADFSS and attacks built on EmInspector, while defenses span model‑level (Neural Cleanse, DECREE), sample‑level (Beatrix, Grad‑CAM), and aggregation‑level (FLARE, FoolsGold, FLAME). Results show that HPE consistently achieves attack success rates (ASR) 10–20 percentage points higher than the baselines, with a drop of less than 1% in clean accuracy. Even when strong defenses such as EmInspector or FLARE are applied, HPE maintains a high ASR, demonstrating its resilience.

In summary, HPE advances the state of the art in FSSL backdoor attacks by (1) amplifying backdoor feature learning through hallucinated hard positives, (2) tightly coupling triggers with target‑class representations via feature entanglement, and (3) preserving stealth and durability through selective, proximity‑constrained parameter poisoning. The work highlights a new class of threats to privacy‑preserving federated learning systems and provides valuable insights for developing more effective defenses.
