Are Semantic Networks Associated with Idea Originality in Artificial Creativity? A Comparison with Human Agents

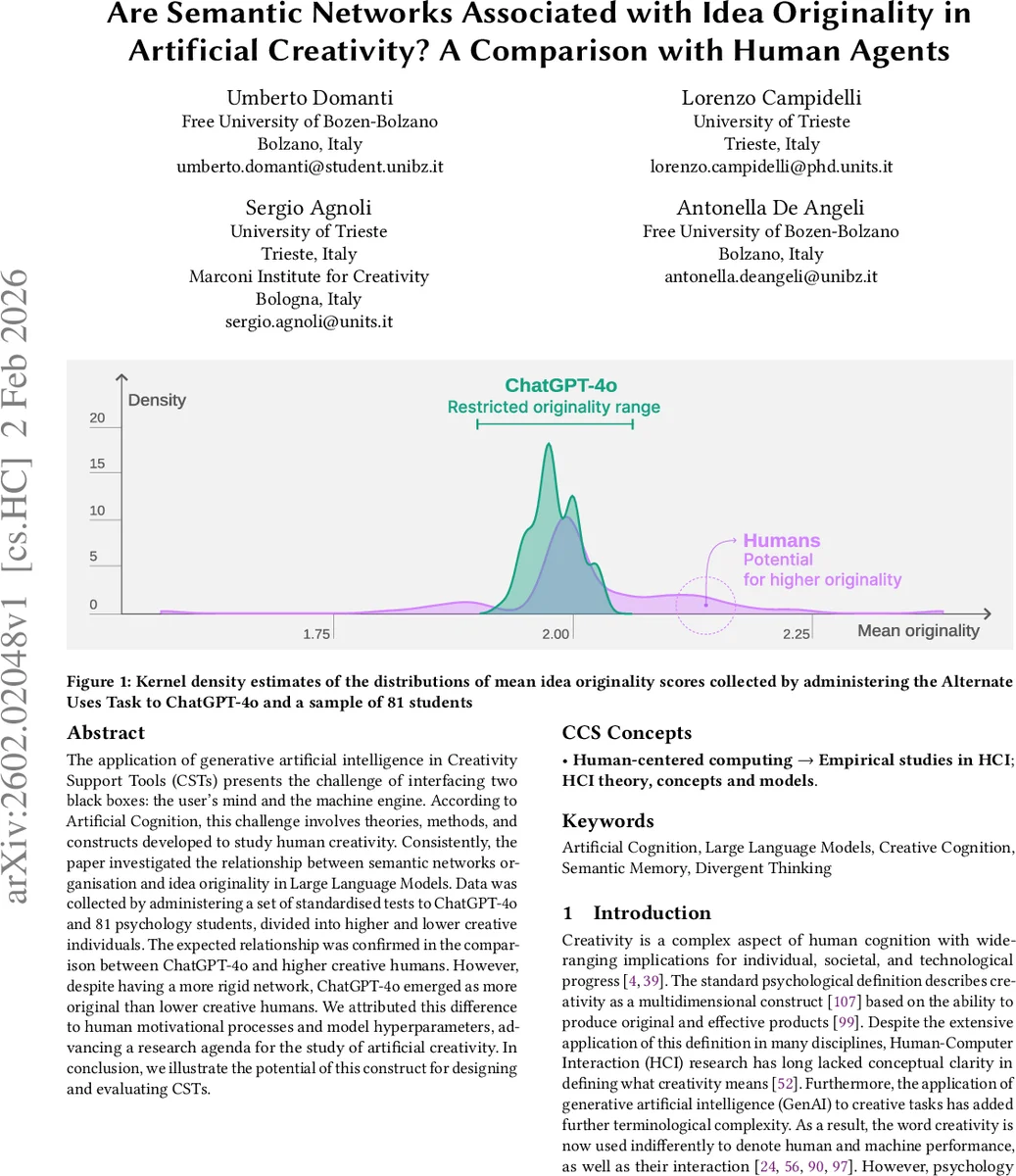

The application of generative artificial intelligence in Creativity Support Tools (CSTs) presents the challenge of interfacing two black boxes: the user’s mind and the machine engine. According to Artificial Cognition, this challenge can be addressed with the theories, methods, and constructs developed to study human creativity. Accordingly, the paper investigated the relationship between semantic network organisation and idea originality in Large Language Models. Data were collected by administering a set of standardised tests to ChatGPT-4o and 81 psychology students, divided into higher and lower creative individuals. The expected relationship was confirmed in the comparison between ChatGPT-4o and higher creative humans. However, despite having a more rigid network, ChatGPT-4o emerged as more original than lower creative humans. We attributed this difference to human motivational processes and model hyperparameters, advancing a research agenda for the study of artificial creativity. In conclusion, we illustrate the potential of this construct for designing and evaluating CSTs.

💡 Research Summary

The paper investigates the relationship between semantic network structure and idea originality in both a large language model (ChatGPT‑4o) and human participants, aiming to inform the design of Creativity Support Tools (CSTs). Drawing on the Artificial Cognition framework, the authors treat creativity as an interplay of basic neurocognitive mechanisms (memory, attention, control) that manifest in divergent and convergent thinking. They focus on semantic memory networks—graph representations of conceptual associations—and their flexibility as a predictor of originality in divergent tasks.

Human data were collected from 81 psychology students in Italy. Participants completed standardized creativity assessments, including the Alternate Uses Task (AUT), and were split into Higher Creative Humans (HCH) and Lower Creative Humans (LCH) based on their overall creativity scores. AUT responses were rated for originality, fluency, flexibility, and elaboration by trained judges. Semantic networks for each participant were built from verbal fluency tasks, yielding graph metrics such as clustering coefficient, average path length, and node degree distribution.
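To make the graph metrics named above concrete, the following is a minimal sketch of how a clustering coefficient and an average path length can be computed from verbal fluency lists. It is not the authors' pipeline: the rule for building edges (linking words that are named one after another) is an assumption chosen for simplicity, and the function names and the animal lists are hypothetical.

```python
from collections import deque
from itertools import combinations


def build_network(fluency_lists):
    """Build an undirected graph as {word: set(neighbours)}.

    ASSUMPTION: two words are linked when they are produced consecutively
    in a fluency list. This is an illustrative rule, not the paper's method.
    """
    graph = {}
    for items in fluency_lists:
        for a, b in zip(items, items[1:]):
            if a == b:
                continue  # ignore immediate repetitions
            graph.setdefault(a, set()).add(b)
            graph.setdefault(b, set()).add(a)
    return graph


def clustering_coefficient(graph):
    """Average local clustering: the share of each node's neighbour pairs
    that are themselves linked, averaged over all nodes."""
    if not graph:
        return 0.0
    scores = []
    for nbrs in graph.values():
        k = len(nbrs)
        if k < 2:
            scores.append(0.0)  # fewer than two neighbours: no pairs to check
            continue
        links = sum(1 for u, v in combinations(nbrs, 2) if v in graph[u])
        scores.append(2 * links / (k * (k - 1)))
    return sum(scores) / len(scores)


def average_path_length(graph):
    """Mean shortest-path length over all reachable ordered node pairs,
    found with a breadth-first search from every node."""
    total, pairs = 0, 0
    for source in graph:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            node = queue.popleft()
            for nbr in graph[node]:
                if nbr not in dist:
                    dist[nbr] = dist[node] + 1
                    queue.append(nbr)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs if pairs else 0.0


if __name__ == "__main__":
    # Hypothetical fluency responses from two participants.
    lists = [
        ["dog", "cat", "mouse", "rat", "hamster"],
        ["cat", "lion", "tiger", "dog", "wolf"],
    ]
    net = build_network(lists)
    print("clustering:", round(clustering_coefficient(net), 3))
    print("avg path length:", round(average_path_length(net), 3))
```

Published semantic network studies often estimate edges from association patterns across a whole group rather than from adjacency in single lists, so the numbers produced by this sketch are only meant to show what the two metrics measure.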

ChatGPT‑4o was queried with the same AUT prompts multiple times through its chat interface. The model’s responses were similarly scored for originality, and a semantic network was derived automatically from the model’s internal token‑prediction probabilities, allowing comparable graph metrics to be extracted.

Results reveal three key patterns. First, HCH participants exhibit more flexible semantic networks (lower clustering, longer average paths) and higher originality scores than LCH participants, replicating established findings in creativity research. Second, ChatGPT‑4o’s semantic network is more rigid than that of HCH, and its originality scores are comparable to, or slightly below, those of the high‑creativity human group. This suggests that LLMs can approach human‑level originality through parameter settings (e.g., sampling temperature) that promote output diversity despite a less flexible internal knowledge structure. Third, ChatGPT‑4o outperforms the LCH group in originality despite its more constrained network. The authors attribute this to differences in human motivational processes (self‑efficacy, intrinsic motivation) and to the model’s hyperparameter configuration, which can artificially inflate novelty.
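The role of sampling temperature mentioned above can be illustrated with a small sketch. It shows the standard temperature-scaled softmax: dividing the scores by a higher temperature flattens the resulting distribution, so less likely continuations are sampled more often. The logit values below are made up for illustration, and nothing here reflects the actual, undisclosed settings of ChatGPT‑4o.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw scores into probabilities after dividing by a temperature.

    Higher temperature -> flatter distribution (more diverse sampling);
    lower temperature -> sharper distribution (more predictable output).
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    norm = sum(exps)
    return [e / norm for e in exps]


def entropy(probs):
    """Shannon entropy in bits, used here as a rough measure of output diversity."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


if __name__ == "__main__":
    # HYPOTHETICAL scores for four candidate continuations.
    logits = [2.0, 1.0, 0.5, 0.1]
    for t in (0.5, 1.0, 2.0):
        probs = softmax_with_temperature(logits, t)
        print(f"T={t}: top prob={probs[0]:.3f}, entropy={entropy(probs):.3f} bits")
```

Running the loop shows the entropy rising with temperature, which is one way a model could produce more varied responses without any change to its underlying associations.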

Methodologically, the study’s strength lies in using identical divergent tasks and comparable scoring procedures for both humans and the AI, and in quantifying semantic networks with graph‑theoretic measures. However, limitations are acknowledged: the LLM remains a black box with undisclosed hyperparameters, limiting causal inference; the human sample is restricted to university students, reducing generalizability; and the construction of semantic networks may not fully capture the differences between human language production and model token generation.

The discussion draws out several implications for artificial creativity research and CST design. Semantic network flexibility is confirmed as a crucial predictor of human originality, but LLMs can simulate high originality via controllable parameters, indicating a different route to creative output. Designers of CSTs should therefore consider both the motivational and self‑regulatory aspects of human users and the tunable diversity mechanisms of LLMs. The authors propose a research agenda that includes systematic manipulation of LLM hyperparameters, experimental control of human motivational states during human‑AI interaction, and the development of meta‑learning approaches that adapt CST suggestions based on real‑time assessment of a user’s semantic network.

In conclusion, the paper provides the first empirical evidence linking semantic network organization (a process‑level construct) to idea originality (a product‑level measure) in both artificial and human agents. While the expected relationship holds only in the comparison with high‑creativity individuals, the unexpected superiority of the model over low‑creativity humans opens new avenues for exploring how parameter‑driven diversity in LLMs can be harnessed or moderated within collaborative creative environments. The findings underscore the need for interdisciplinary frameworks that blend cognitive psychology, HCI, and AI engineering to advance the theory and practice of artificial creativity.
