Do I Really Know? Learning Factual Self-Verification for Hallucination Reduction

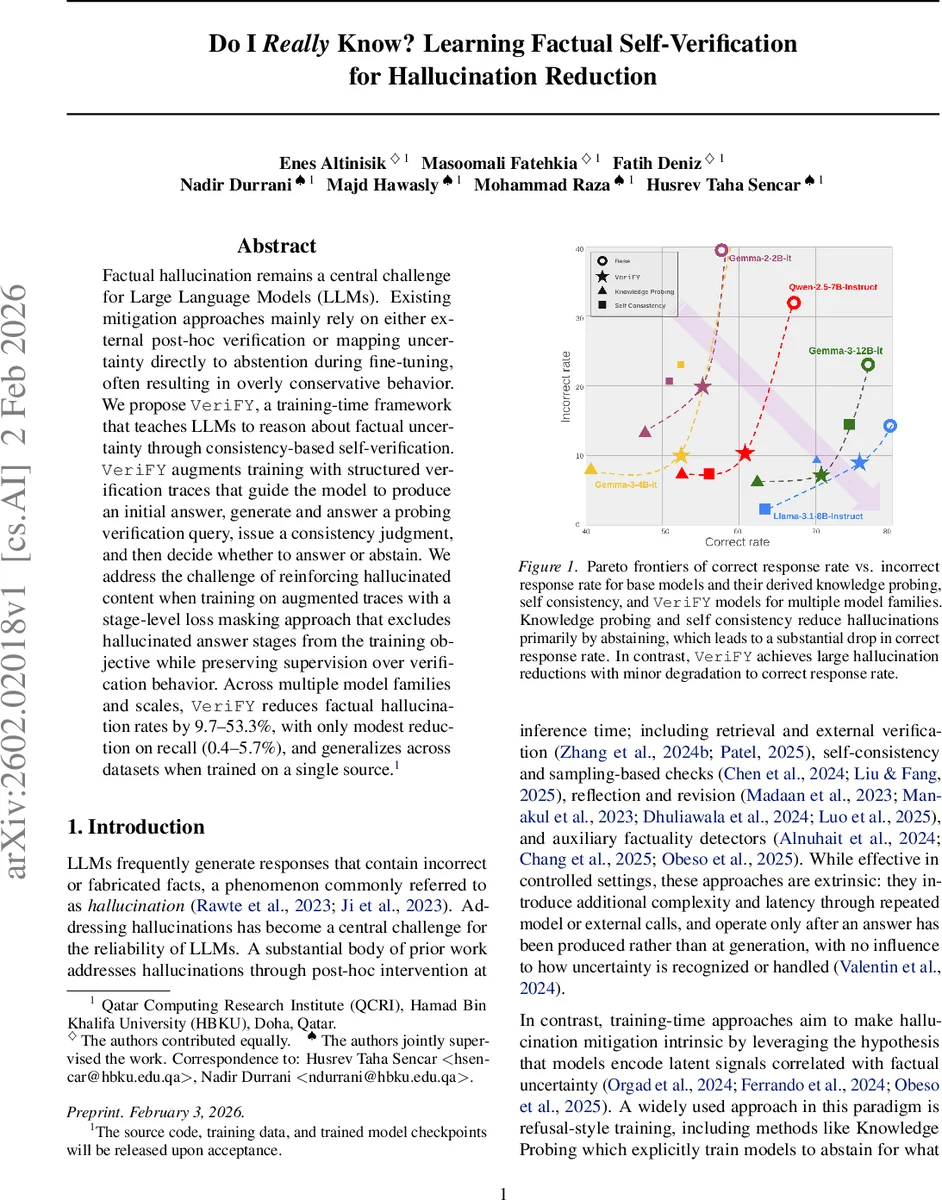

Factual hallucination remains a central challenge for large language models (LLMs). Existing mitigation approaches primarily rely on either external post-hoc verification or mapping uncertainty directly to abstention during fine-tuning, often resulting in overly conservative behavior. We propose VeriFY, a training-time framework that teaches LLMs to reason about factual uncertainty through consistency-based self-verification. VeriFY augments training with structured verification traces that guide the model to produce an initial answer, generate and answer a probing verification query, issue a consistency judgment, and then decide whether to answer or abstain. To address the risk of reinforcing hallucinated content when training on augmented traces, we introduce a stage-level loss masking approach that excludes hallucinated answer stages from the training objective while preserving supervision over verification behavior. Across multiple model families and scales, VeriFY reduces factual hallucination rates by 9.7 to 53.3 percent, with only modest reductions in recall (0.4 to 5.7 percent), and generalizes across datasets when trained on a single source. The source code, training data, and trained model checkpoints will be released upon acceptance.

💡 Research Summary

The paper tackles the persistent problem of factual hallucinations in large language models (LLMs) by introducing a training‑time framework called VeriFY (Verification for Factual Uncertainty). Existing mitigation strategies fall into two categories: post‑hoc methods that invoke external verification or additional inference steps, and training‑time approaches that map model uncertainty directly to abstention (e.g., Knowledge‑Probing). Post‑hoc methods improve factuality but add latency and complexity, while abstention‑focused training reduces hallucinations at the cost of overly conservative behavior, discarding many answerable queries.

VeriFY bridges these lines of work by internalizing a self‑verification process during fine‑tuning. For each training question q, a verification trace is constructed consisting of five ordered stages: (1) the model generates an unconstrained initial answer a₀; (2) a more capable model creates a verification question qᵥ by applying a controlled semantic transformation to q conditioned on a₀ (e.g., rephrasing, logical implication, temporal shift); (3) the model answers qᵥ independently, producing aᵥ; (4) conditioned on the pair (qᵥ, aᵥ), the model re‑answers the original question, yielding a revised answer a₁; (5) a consistency judgment r is emitted, indicating whether a₀ and a₁ are mutually supportive. If r signals consistency, a₁ is returned; otherwise the model abstains.

A central challenge is that some stages (especially a₀, a₁, or aᵥ) may contain hallucinated content, and naïvely training on the full trace could reinforce false facts. To prevent this, the authors introduce stage‑level loss masking. Each stage sⱼ receives a binary hallucination indicator Hⱼ (1 = hallucinated). Three masking regimes are explored: (i) No Masking (NM) – all stages contribute to loss; (ii) Minimal Masking (MM) – only stages flagged as hallucinated are excluded, while verification questions and consistency judgments remain supervised; (iii) Span Masking (SM) – the entire prefix up to the last hallucinated stage is masked. Empirically, MM yields the best trade‑off, allowing the model to learn how to reason about and react to hallucinated content without reproducing it.

For evaluation, the authors argue that traditional binary accuracy is unsuitable because it ignores abstentions. They formulate hallucination mitigation as a selective prediction problem and define a new metric, Hallucination F1, based on precision (correct answers among those attempted) and recall (fraction of truly known questions that are answered). This metric captures both factual reliability and answer coverage, and can be computed without an external oracle when comparing mitigation strategies built on the same base model.

Experiments use the TriviaQA dataset (≈87 k factoid questions) to generate verification traces, employing nine verification strategies. The traces are filtered to keep only those with aligned correctness and consistency outcomes. Models from several families (Llama‑2, Falcon, Mistral) and sizes (7 B–70 B) are fine‑tuned with VeriFY. Results show that VeriFY reduces hallucination rates by 9.7 %–53.3 % across model families, while decreasing recall (answer coverage) by only 0.4 %–5.7 %. Compared to Knowledge‑Probing and Self‑Consistency baselines, VeriFY achieves higher precision with far less loss in coverage, as visualized in Pareto frontiers. Cross‑dataset generalization is demonstrated on HotpotQA, NQ‑Open, and FreshQA, indicating that training on a single source suffices for broader applicability.

The paper acknowledges limitations: (1) verification question generation relies on a stronger model, which may not be available in all settings; (2) the current focus is on factoid questions, leaving open the extension to multi‑hop reasoning or open‑ended generation; (3) hallucination labeling for loss masking still depends on human annotation and two judge models, which may hinder scalability.

In summary, VeriFY proposes a novel paradigm where LLMs learn to self‑verify during training, effectively internalizing a reasoning loop that can flag and abstain from uncertain answers without external calls. By coupling structured verification traces with selective loss masking and a principled evaluation metric, the approach achieves substantial hallucination reduction while preserving answer coverage, offering a promising direction for building more trustworthy language models.

Comments & Academic Discussion

Loading comments...

Leave a Comment