SNAP: A Self-Consistent Agreement Principle with Application to Robust Computation

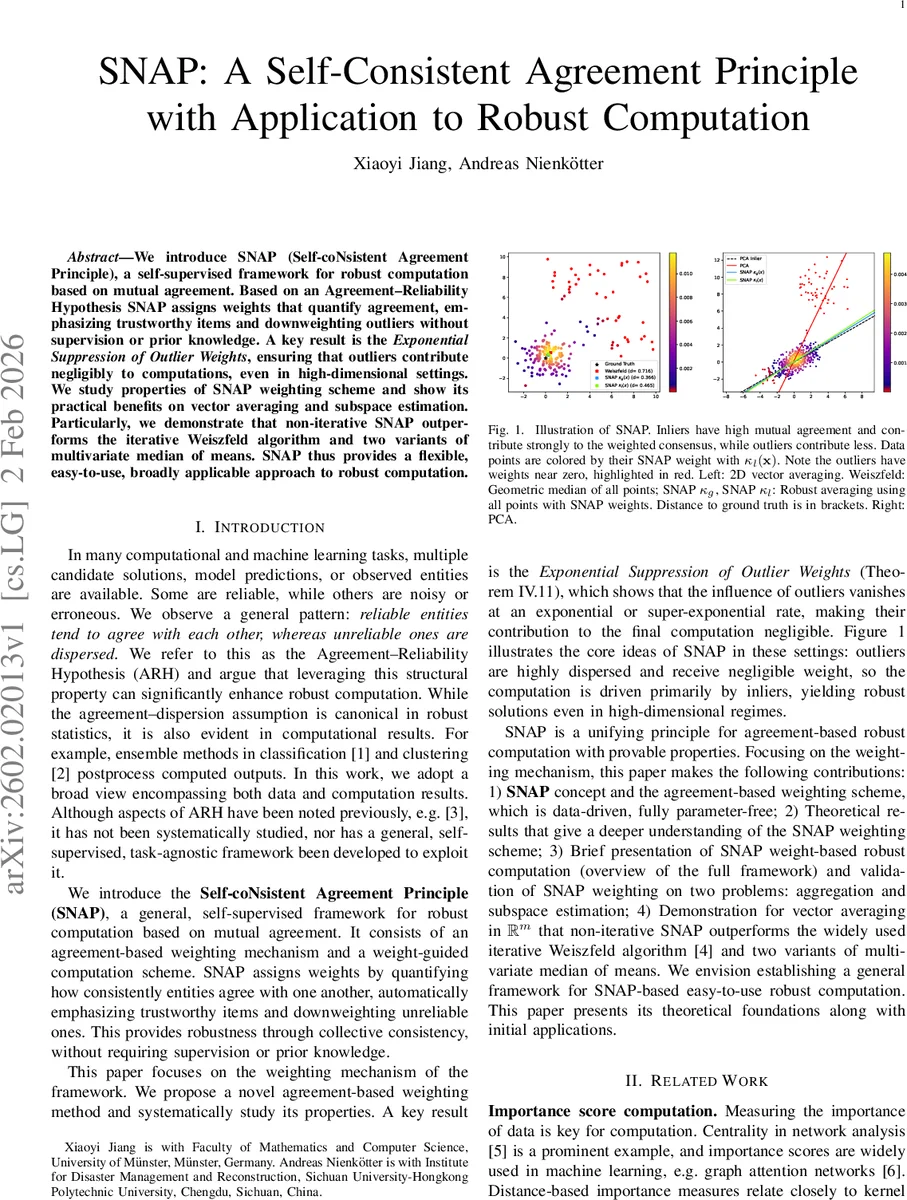

We introduce SNAP (Self-coNsistent Agreement Principle), a self-supervised framework for robust computation based on mutual agreement. Building on an Agreement-Reliability Hypothesis, SNAP assigns weights that quantify agreement, emphasizing trustworthy items and downweighting outliers without supervision or prior knowledge. A key result, the Exponential Suppression of Outlier Weights, ensures that outliers contribute negligibly to computations, even in high-dimensional settings. We study properties of the SNAP weighting scheme and demonstrate its practical benefits on vector averaging and subspace estimation. In particular, we show that non-iterative SNAP outperforms the iterative Weiszfeld algorithm and two variants of the multivariate median of means. SNAP thus provides a flexible, easy-to-use, and broadly applicable approach to robust computation.
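The iterative baseline named above, the Weiszfeld algorithm, computes the geometric median, which is the point minimizing the sum of Euclidean distances to the data. Each step takes a mean weighted by the inverse distance to the current estimate. The minimal NumPy sketch below is for comparison only. The function name `weiszfeld`, the tolerance, the iteration cap, and the simple distance clamp that avoids division by zero are illustrative choices and are not taken from the paper.

```python
import numpy as np


def weiszfeld(points, tol=1e-9, max_iter=1000):
    """Geometric median of the rows of `points` via Weiszfeld's iteration.

    Each step replaces the estimate by a weighted mean of the points, with
    weights inversely proportional to their distance from the current estimate.
    """
    points = np.asarray(points, dtype=float)
    y = points.mean(axis=0)  # start from the (non-robust) arithmetic mean
    for _ in range(max_iter):
        dist = np.linalg.norm(points - y, axis=1)
        # Simple clamp to avoid division by zero if the estimate hits a data point.
        dist = np.maximum(dist, 1e-12)
        w = 1.0 / dist
        y_new = (w[:, None] * points).sum(axis=0) / w.sum()
        if np.linalg.norm(y_new - y) < tol:
            return y_new
        y = y_new
    return y


# Usage: 19 inliers near the origin plus one far outlier.
rng = np.random.default_rng(0)
inliers = rng.normal(0.0, 0.1, size=(19, 2))
data = np.vstack([inliers, [[100.0, 100.0]]])
print(np.round(data.mean(axis=0), 2))  # arithmetic mean, pulled toward the outlier
print(np.round(weiszfeld(data), 2))    # geometric median, stays near the inliers
```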

💡 Research Summary

The paper introduces SNAP (Self‑coNsistent Agreement Principle), a self‑supervised framework for robust computation that leverages the intuitive “Agreement–Reliability Hypothesis” (ARH). ARH posits that reliable (inlier) entities tend to agree with each other, forming a tight cluster, while unreliable (outlier) entities are dispersed and disagree both with inliers and among themselves. SNAP operationalizes this hypothesis by assigning each entity a weight that reflects its level of agreement with the rest of the dataset, without any supervision, prior knowledge, or manually tuned parameters.

The weighting scheme works as follows. For a collection of objects $E=\{o_i\}_{i=1}^n$ in a space $(X,d)$ (the distance need not be a metric; only $d(x,x)=0$ is required), a normalized disagreement score is defined:

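The sketch below does not claim to reproduce SNAP's exact formula. It uses one simple hypothetical stand-in for each piece:

- **Score.** $s_i$ is the mean distance from $o_i$ to the other objects, divided by the average of that quantity over all objects, so the scores average to 1.
- **Weights.** Weights are taken proportional to $\exp(-s_i)$.

The helper names `disagreement_scores`, `agreement_weights`, and `sq_dist` are hypothetical. Any callable `d` with `d(x, x) = 0` can be passed. The usage example deliberately uses squared Euclidean distance, which violates the triangle inequality and is therefore not a metric.

```python
import numpy as np


def disagreement_scores(objects, d):
    """Hypothetical normalized disagreement score (assumed form, n >= 2).

    s_i = (mean_{j != i} d(o_i, o_j)) / (average of that quantity over all i),
    so the scores average to 1. `d` only needs to satisfy d(x, x) = 0.
    """
    n = len(objects)
    D = np.array([[d(a, b) for b in objects] for a in objects], dtype=float)
    mean_dist = D.sum(axis=1) / (n - 1)  # diagonal is zero because d(x, x) = 0
    return mean_dist / mean_dist.mean()


def agreement_weights(scores):
    """Assumed exponential weighting: w_i proportional to exp(-s_i), summing to 1."""
    w = np.exp(-np.asarray(scores, dtype=float))
    return w / w.sum()


def sq_dist(a, b):
    """Squared Euclidean distance: not a metric, but d(x, x) = 0 holds."""
    diff = np.asarray(a, dtype=float) - np.asarray(b, dtype=float)
    return float(np.sum(diff ** 2))


# Usage: 19 inliers near the origin plus one far outlier.
rng = np.random.default_rng(0)
data = np.vstack([rng.normal(0.0, 0.1, size=(19, 2)), [[100.0, 100.0]]])
w = agreement_weights(disagreement_scores(data, sq_dist))
print(f"outlier weight {w[-1]:.2e}, smallest inlier weight {w[:-1].min():.2e}")
print(np.round(w @ data, 2))  # agreement-weighted mean in a single pass
```

Under these assumptions, the far outlier disagrees with every other object and so receives a much larger score than the inliers. The exponential then shrinks its weight sharply, and the weighted mean stays near the inlier cluster without any iteration.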
