How Well Do Models Follow Visual Instructions? VIBE: A Systematic Benchmark for Visual Instruction-Driven Image Editing

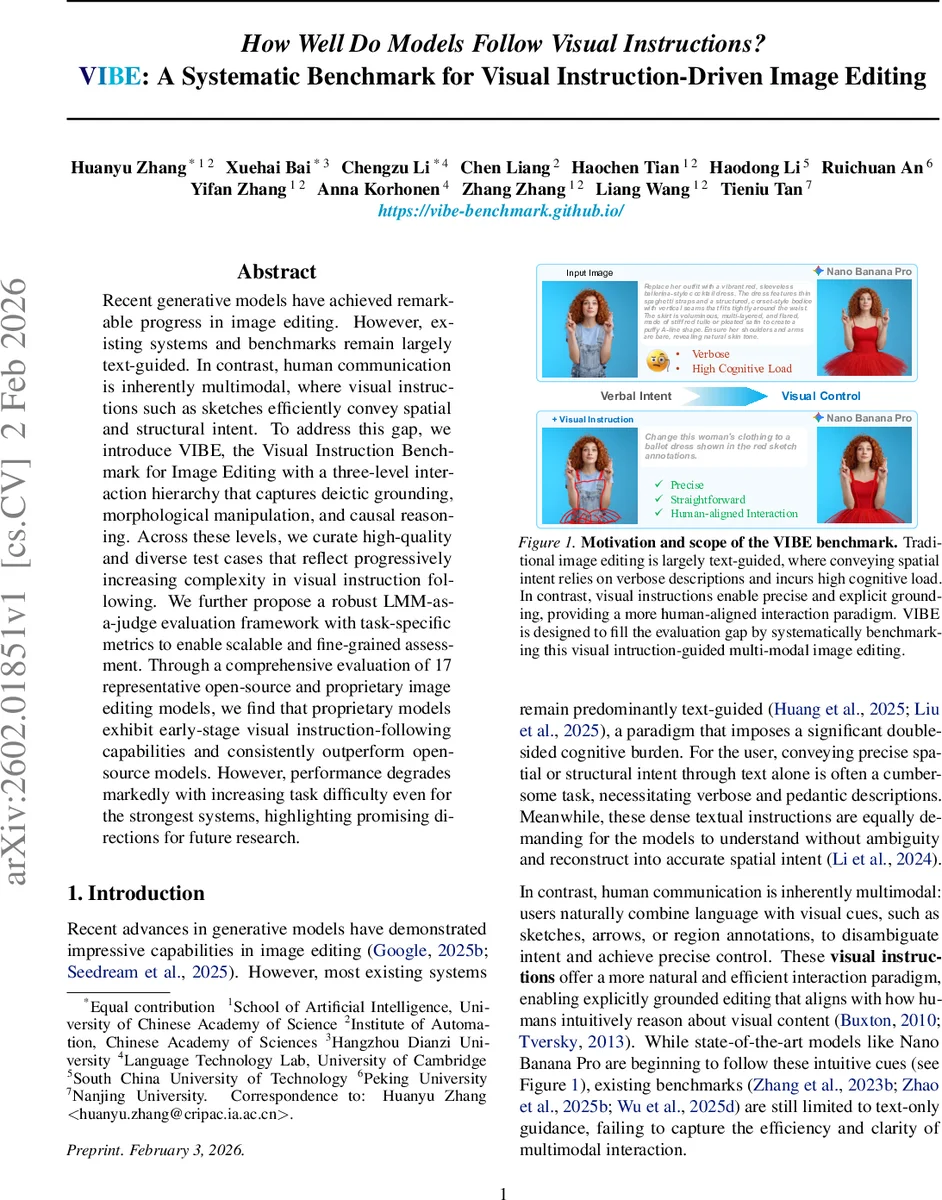

Recent generative models have achieved remarkable progress in image editing. However, existing systems and benchmarks remain largely text-guided. In contrast, human communication is inherently multimodal, where visual instructions such as sketches efficiently convey spatial and structural intent. To address this gap, we introduce VIBE, the Visual Instruction Benchmark for Image Editing with a three-level interaction hierarchy that captures deictic grounding, morphological manipulation, and causal reasoning. Across these levels, we curate high-quality and diverse test cases that reflect progressively increasing complexity in visual instruction following. We further propose a robust LMM-as-a-judge evaluation framework with task-specific metrics to enable scalable and fine-grained assessment. Through a comprehensive evaluation of 17 representative open-source and proprietary image editing models, we find that proprietary models exhibit early-stage visual instruction-following capabilities and consistently outperform open-source models. However, performance degrades markedly with increasing task difficulty even for the strongest systems, highlighting promising directions for future research.

💡 Research Summary

The paper introduces VIBE (Visual Instruction Benchmark for Image Editing), a systematic benchmark designed to evaluate how well image‑editing models follow multimodal visual instructions such as sketches, arrows, and bounding boxes. Recognizing that human communication often relies on visual cues to convey spatial and structural intent, the authors argue that existing text‑only benchmarks fail to capture this essential aspect of interaction.

VIBE is organized around a three‑level interaction hierarchy that reflects increasing cognitive and reasoning demands:

-

Deictic Level (Selector) – Visual cues act as selectors that pinpoint a region or object for basic operations. Four fundamental tasks are defined: Addition (inject new content within a marked region), Removal (erase the target and plausibly reconstruct the background), Replacement (swap the content while preserving spatial extent), and Translation (move an object without altering its appearance). Each task contains 100 samples, balanced across real‑world photos, animated frames, and sketch‑style illustrations, for a total of 400 Deictic samples.

-

Morphological Level (Blueprint) – Here visual instructions serve as abstract blueprints that specify shape, pose, or orientation. Three tasks are included: Pose Control (match a character’s pose to a reference image while preserving identity and style), Reorientation (align an object’s orientation to a given viewing frustum or directional cue), and Draft Instantiation (convert hand‑drawn sketches overlaid on the input into fully realized, style‑consistent objects). Again, each task has 100 manually curated samples.

-

Causal Level (Catalyst) – Visual cues encode underlying physical or logical dynamics that the model must simulate. The three tasks are Light Control (re‑light the scene according to an arrow indicating new light direction), Flow Simulation (apply a wind vector and make objects deform or move plausibly), and Billiards (predict the trajectory of a ball after a force arrow, handling collisions and rebounds). Flow Simulation and Light Control each have 100 samples; the Billiards task is built from 200 scripted candidates, filtered down to 134 high‑quality cases.

All 1,034 samples are manually annotated and verified through multiple rounds to ensure precise grounding, unambiguous intent, and physical plausibility.

Evaluation framework – Because image editing outputs are open‑ended and lack a single ground truth, the authors adopt an “LMM‑as‑a‑Judge” paradigm. They employ a state‑of‑the‑art large multimodal model (GPT‑5.1, accessed via Azure API) as an automated evaluator. For each test case, the judge receives the original image, textual instruction, visual instruction, and the model’s edited output, then answers a set of task‑specific binary questions. Three overarching metrics are defined:

- Instruction Adherence (IA) – Checks (i) correct localization of the visual cue, (ii) correct operator type (add, remove, etc.), and (iii) semantic consistency with the textual instruction. IA is the average of these three binary scores.

- Contextual Preservation (CP) – Verifies that non‑target regions remain unchanged, scored binary.

- Visual Coherence (VC) – Assesses (i) style consistency, (ii) seamless integration of edited region, and (iii) absence of artifacts; VC is the average of the three binary sub‑scores.

The final score for a sample is the mean of IA, CP, and VC. Human expert judgments were collected on a subset of samples, showing a Pearson correlation > 0.89 with the LMM judgments, confirming the reliability of the automated pipeline.

Experimental results – Seventeen models are benchmarked: ten open‑source (including Stable Diffusion‑based editors, ControlNet variants, and recent diffusion‑in‑painting models) and seven proprietary systems (e.g., Adobe Firefly, Google Gemini‑Image, OpenAI’s DALL‑E 3‑Edit, etc.). Key findings:

- Proprietary advantage – Commercial models consistently outperform open‑source counterparts across all levels, achieving roughly 38 percentage‑point higher overall scores.

- Performance drop with hierarchy – Even the strongest proprietary model scores above 70 % on Deictic tasks but falls below 50 % on Causal tasks. The average Causal‑level score across all models is under 48 %.

- Open‑source limitations – Many open‑source models struggle already at the Deictic level, often mis‑localizing the marked region or producing noticeable background artifacts, leading to IA and VC scores below 30 %.

- Error patterns – Errors on Morphological tasks often involve inaccurate pose transfer or failure to respect the sketch’s structural constraints, while Causal failures manifest as unrealistic lighting, missing wind‑induced deformations, or physically implausible billiard trajectories.

These results highlight that current image‑editing systems have only nascent capabilities for interpreting visual instructions, especially when those instructions require internal world modeling or physical simulation.

Contributions and future directions – The paper’s primary contributions are: (i) the introduction of VIBE, the first benchmark explicitly targeting visual‑instruction‑driven editing; (ii) a cognitively motivated three‑level hierarchy that isolates grounding, structural synthesis, and causal reasoning; (iii) a validated LMM‑as‑Judge evaluation pipeline with task‑specific metrics; and (iv) a comprehensive empirical study exposing clear capability gaps. The authors suggest several avenues for progress: integrating differentiable physics engines or neural simulators to handle Causal tasks; expanding pre‑training corpora with multimodal instruction pairs; improving LMM‑based evaluation to mitigate potential model bias; and encouraging the community to contribute additional visual‑instruction samples to broaden VIBE’s coverage.

In summary, VIBE fills a critical evaluation gap by providing a rigorous, scalable, and human‑aligned benchmark for multimodal image editing. It demonstrates that while commercial systems are beginning to understand simple visual cues, substantial research is needed to achieve reliable, high‑fidelity editing driven by complex visual instructions.

Comments & Academic Discussion

Loading comments...

Leave a Comment