SPIRIT: Adapting Vision Foundation Models for Unified Single- and Multi-Frame Infrared Small Target Detection

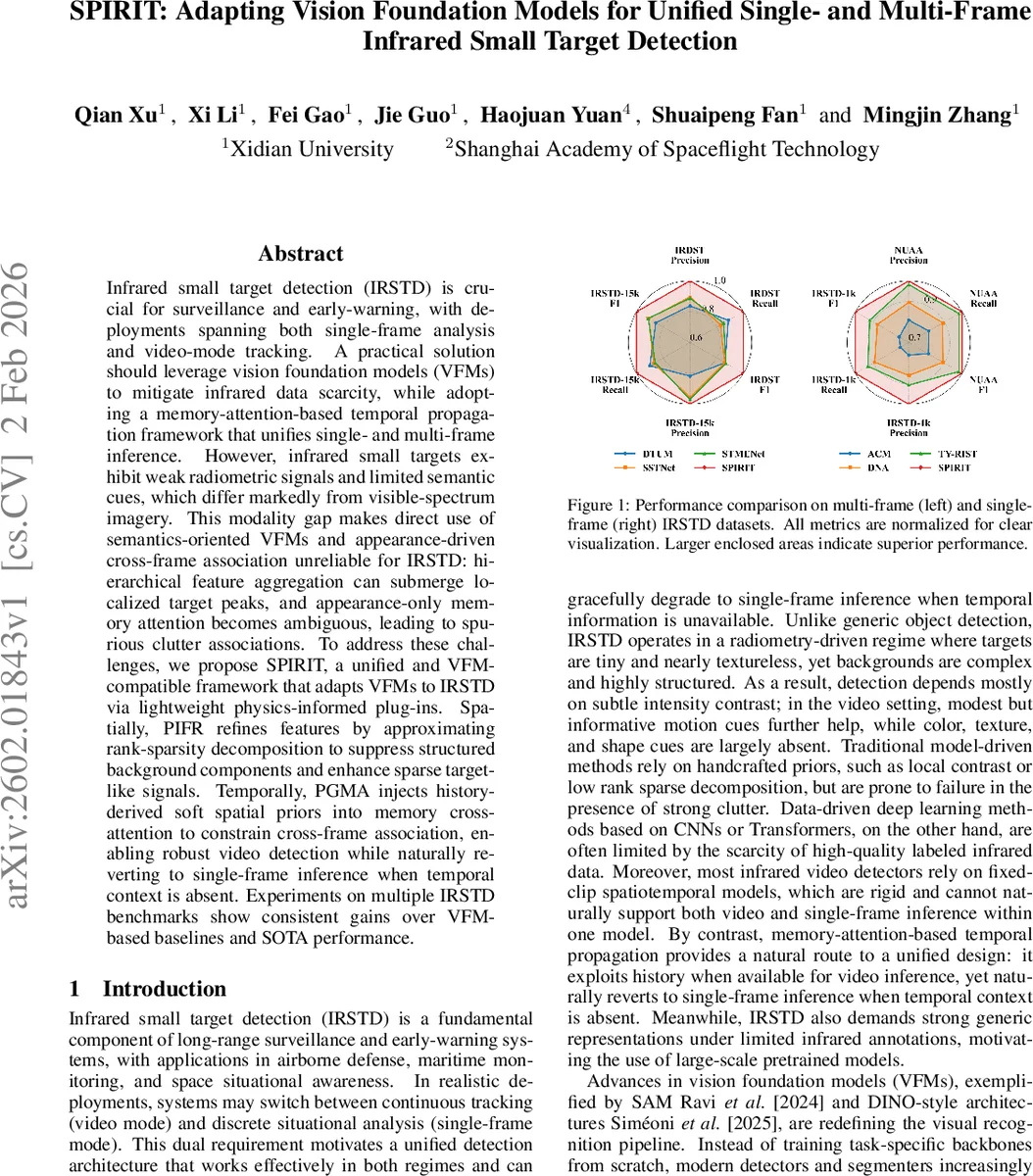

Infrared small target detection (IRSTD) is crucial for surveillance and early warning, with deployments spanning both single-frame analysis and video-mode tracking. A practical solution should leverage vision foundation models (VFMs) to mitigate infrared data scarcity while adopting a memory-attention-based temporal propagation framework that unifies single- and multi-frame inference. However, infrared small targets exhibit weak radiometric signals and limited semantic cues, so they differ markedly from objects in visible-spectrum imagery. This modality gap makes direct use of semantics-oriented VFMs and appearance-driven cross-frame association unreliable for IRSTD: hierarchical feature aggregation can submerge localized target peaks, and appearance-only memory attention becomes ambiguous, leading to spurious clutter associations. To address these challenges, we propose SPIRIT, a unified and VFM-compatible framework that adapts VFMs to IRSTD via lightweight physics-informed plug-ins. Spatially, Physics-Informed Feature Refinement (PIFR) refines features by approximating a rank-sparsity decomposition, suppressing structured background components and enhancing sparse target-like signals. Temporally, Prior-Guided Memory Attention (PGMA) injects history-derived soft spatial priors into memory cross-attention to constrain cross-frame association, enabling robust video detection while naturally reverting to single-frame inference when temporal context is absent. Experiments on multiple IRSTD benchmarks show consistent gains over VFM-based baselines and state-of-the-art performance.

💡 Research Summary

The paper tackles infrared small‑target detection (IRSTD), a task that demands both single‑frame analysis and video‑mode tracking, by leveraging the powerful representations of large‑scale vision foundation models (VFMs) while addressing the modality gap between visible‑spectrum pretraining data and infrared imagery. The authors identify two fundamental failure modes when directly applying semantics‑oriented VFMs to IRSTD: (1) spatial feature submergence, where hierarchical token mixing and large receptive fields dilute the weak, localized intensity spikes of small infrared targets; and (2) temporal appearance ambiguity, where appearance‑only memory attention mistakenly associates clutter with targets because infrared targets lack texture and distinctive semantic cues.

To overcome these issues, the authors propose SPIRIT, a unified framework that augments any VFM backbone with two lightweight, physics‑informed plug‑ins: Physics‑Informed Feature Refinement (PIFR) and Prior‑Guided Memory Attention (PGMA).

PIFR (spatial refinement) approximates a low‑rank plus sparse decomposition directly on the deep feature maps. The feature tensor is flattened into tokens, and a low‑rank subspace of fixed rank r = 4 is estimated by spatially pooling the features into coarse prototypes, passing them through a lightweight projector, and applying a ridge‑projection formula that avoids costly SVD. The residual, which contains the high‑frequency sparse components, is then processed with token‑wise group shrinkage based on each token's L₂ norm, producing a soft gate that highlights potential target locations. The gate is multiplied element‑wise with the original features, and the gated features are added back through a learnable scalar α initialized to zero, which preserves the pretrained VFM's behavior early in training and injects the physical prior gradually. The result is a refined feature map in which structured background responses are suppressed and sparse target signals are amplified, mitigating the submergence problem without sacrificing the VFM’s semantic richness.
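The PIFR steps described above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the function name `pifr_sketch`, the 2 × 2 average pooling used to obtain four prototypes, the ridge weight `lam`, the default threshold `tau` (the mean residual norm), and the omission of the learned projector are all assumptions made for illustration, and `alpha` is a plain float standing in for the learnable scalar α.

```python
import numpy as np

def pifr_sketch(feat, grid=2, lam=0.1, tau=None, alpha=0.0):
    """Illustrative low-rank + sparse refinement of a (C, H, W) feature map.

    Hypothetical sketch: pooling layout, lam, tau and the missing learned
    projector are assumptions, not details taken from the paper.
    """
    C, H, W = feat.shape
    X = feat.reshape(C, H * W).T                      # tokens, shape (N, C)

    # Coarse prototypes: average-pool the map over a grid x grid layout,
    # giving r = grid * grid basis vectors (r = 4 for grid = 2).
    row_bins = np.array_split(np.arange(H), grid)
    col_bins = np.array_split(np.arange(W), grid)
    B = np.stack([feat[:, rb][:, :, cb].mean(axis=(1, 2))
                  for rb in row_bins for cb in col_bins])   # (r, C)

    # Ridge projection onto the prototype subspace (no SVD needed):
    # L = X B^T (B B^T + lam I)^{-1} B
    r = B.shape[0]
    coeff = np.linalg.solve(B @ B.T + lam * np.eye(r), B @ X.T)  # (r, N)
    low_rank = coeff.T @ B                                        # (N, C)
    residual = X - low_rank

    # Token-wise group shrinkage on the L2 norm of each residual token.
    norms = np.linalg.norm(residual, axis=1)
    if tau is None:
        tau = norms.mean()                            # assumed threshold
    gate = np.maximum(0.0, 1.0 - tau / (norms + 1e-12))  # soft gate in [0, 1)

    # Gated features are added back, scaled by alpha (zero at initialization).
    refined = X + alpha * gate[:, None] * X
    return refined.T.reshape(C, H, W), gate.reshape(H, W)

# Usage: a flat background with one bright pixel in channel 2.
feat = np.tile(np.array([1.0, 1.0, 0.0, 0.0])[:, None, None], (1, 8, 8))
feat[2, 2, 3] += 5.0
refined, gate = pifr_sketch(feat, alpha=0.5)
```

With `alpha=0.0` the function returns the input features unchanged, mirroring the zero initialization that keeps the pretrained backbone intact at the start of training. Pooling dilutes an isolated spike inside its prototype, so the ridge term leaves most of the spike in the residual, which is what lets the gate respond to it.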

PGMA (temporal refinement) introduces a feasibility prior derived from detections in the previous frame. Each detected bounding box is projected onto the feature grid, and a Gaussian kernel centered at the box’s location (scaled by its size) yields a continuous feasibility field Gₜ₋₁(p). This field is down‑sampled, convolved with a 3 × 3 kernel, and passed through a sigmoid to obtain a gate map gₜ₋₁ ∈
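The construction of the feasibility field and gate map described above can be sketched as follows. This is a hedged illustration rather than the paper's code: the function names, the `(cx, cy, w, h)` box format, the size-to-sigma factor `kappa`, the use of a maximum to combine several boxes, and the fixed 3 × 3 averaging kernel (the paper's kernel may well be learned) are assumptions. The down-sampling step is skipped by evaluating the field directly on the feature grid via `stride`.

```python
import numpy as np

def feasibility_field(boxes, grid_hw, stride=1.0, kappa=0.5):
    """Gaussian feasibility field on an (H, W) feature grid.

    boxes: iterable of (cx, cy, w, h) in image pixels (assumed format).
    kappa: hypothetical factor turning box size into a Gaussian sigma.
    """
    H, W = grid_hw
    ys, xs = np.mgrid[0:H, 0:W].astype(float)
    field = np.zeros((H, W))
    for cx, cy, w, h in boxes:
        gx, gy = cx / stride, cy / stride            # box centre on the grid
        sx = max(kappa * w / stride, 1e-3)           # sigma scales with size
        sy = max(kappa * h / stride, 1e-3)
        bump = np.exp(-0.5 * (((xs - gx) / sx) ** 2 + ((ys - gy) / sy) ** 2))
        field = np.maximum(field, bump)              # assumed combination rule
    return field

def gate_from_field(field, kernel=None):
    """Smooth the field with a 3x3 kernel, then squash with a sigmoid."""
    if kernel is None:
        kernel = np.full((3, 3), 1.0 / 9.0)          # stand-in for a learned kernel
    H, W = field.shape
    padded = np.pad(field, 1, mode="edge")
    smoothed = np.zeros_like(field)
    for dy in range(3):
        for dx in range(3):
            smoothed += kernel[dy, dx] * padded[dy:dy + H, dx:dx + W]
    return 1.0 / (1.0 + np.exp(-smoothed))

# Usage: one previous-frame detection centred at grid cell (x=4, y=4).
field = feasibility_field([(4.0, 4.0, 2.0, 2.0)], (8, 8))
gate = gate_from_field(field)
```

In this sketch, an empty detection list yields a spatially uniform gate, so the prior expresses no location preference when no history is available.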
