Seeing Is Believing? A Benchmark for Multimodal Large Language Models on Visual Illusions and Anomalies

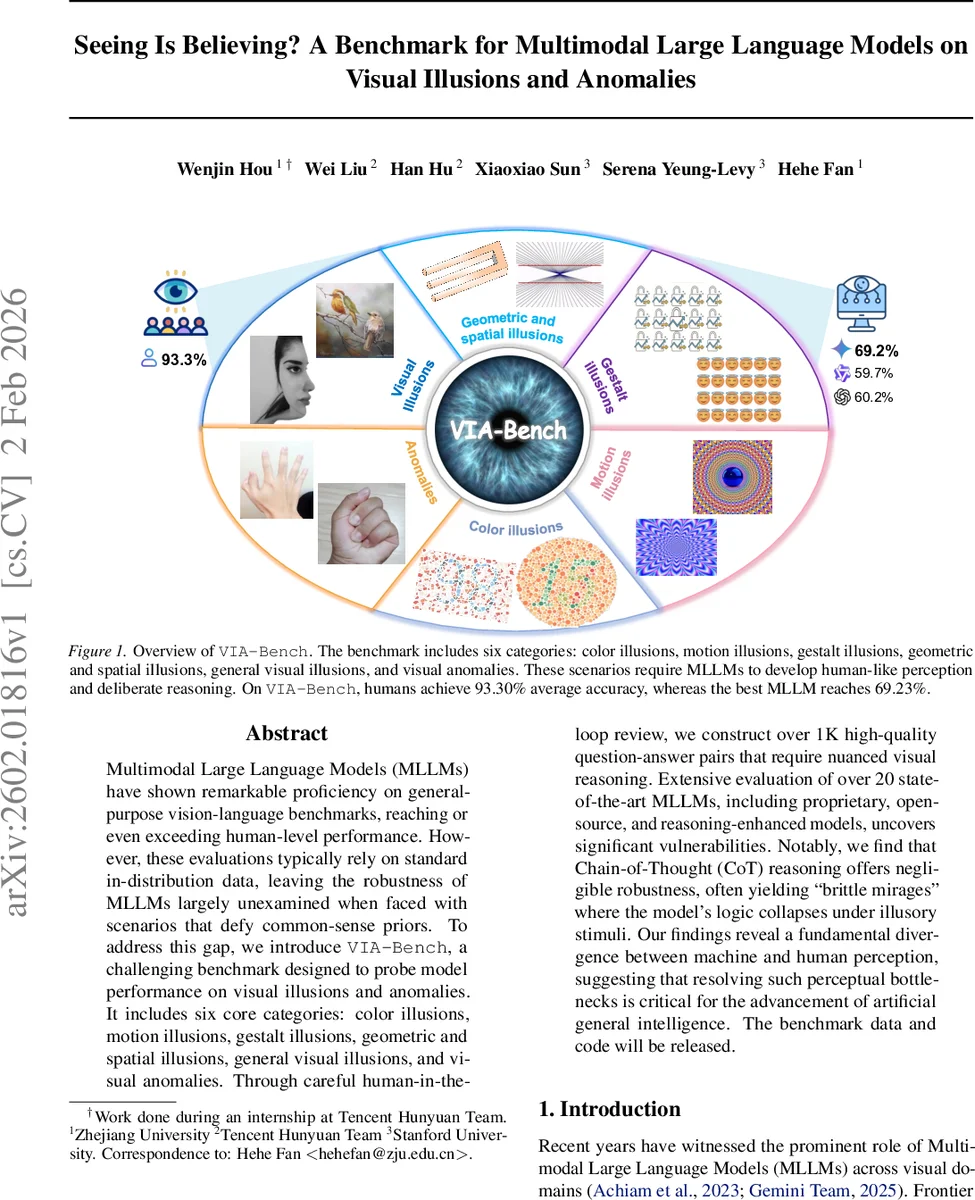

Multimodal Large Language Models (MLLMs) have shown remarkable proficiency on general-purpose vision-language benchmarks, reaching or even exceeding human-level performance. However, these evaluations typically rely on standard in-distribution data, leaving the robustness of MLLMs largely unexamined when faced with scenarios that defy common-sense priors. To address this gap, we introduce VIA-Bench, a challenging benchmark designed to probe model performance on visual illusions and anomalies. It includes six core categories: color illusions, motion illusions, gestalt illusions, geometric and spatial illusions, general visual illusions, and visual anomalies. Through careful human-in-the-loop review, we construct over 1K high-quality question-answer pairs that require nuanced visual reasoning. Extensive evaluation of over 20 state-of-the-art MLLMs, including proprietary, open-source, and reasoning-enhanced models, uncovers significant vulnerabilities. Notably, we find that Chain-of-Thought (CoT) reasoning offers negligible robustness, often yielding ``brittle mirages’’ where the model’s logic collapses under illusory stimuli. Our findings reveal a fundamental divergence between machine and human perception, suggesting that resolving such perceptual bottlenecks is critical for the advancement of artificial general intelligence. The benchmark data and code will be released.

💡 Research Summary

The paper introduces VIA‑Bench, a novel benchmark specifically designed to evaluate the robustness of multimodal large language models (MLLMs) when confronted with visual phenomena that contradict common‑sense priors, such as optical illusions and visual anomalies. The benchmark comprises six carefully curated categories: color illusions, motion illusions, Gestalt‑based illusions, geometric and spatial distortions, general visual tricks, and outright visual anomalies. Over 1,000 high‑quality multiple‑choice question‑answer pairs were generated through a multi‑stage, human‑in‑the‑loop pipeline that emphasizes both the “what” (the intrinsic visual content) and the “how” (the reasoning required). Distractor options are deliberately constructed to capture typical shortcut strategies that models might exploit, and a “Not Sure” choice is always included to probe model confidence.

To assess performance, the authors employ two evaluation protocols. The first, “Pattern Match,” simply checks whether the model selects the correct answer. The second, “LLM‑as‑Judge,” asks the model to produce a chain‑of‑thought explanation, which is then judged by a separate language model for correctness. This dual approach measures raw accuracy as well as the consistency of the model’s internal reasoning.

The study evaluates more than twenty state‑of‑the‑art MLLMs, spanning proprietary systems (e.g., Gemini‑3‑pro, GPT‑5‑chat), open‑source models (e.g., Qwen‑VL, InternVL series), and reasoning‑enhanced variants that generate explicit CoT outputs (e.g., OpenAI‑o3, Claude‑Sonnet). Model sizes range from 3 B to 235 B parameters. Each model is run five times and results are averaged to reduce stochastic variance.

Results reveal a substantial gap between human and machine performance. Human participants achieve an average accuracy of 93.3 %, whereas the best MLLM (Gemini‑3‑pro) reaches only 69.23 %. Performance varies widely across categories: models are relatively better on color and general visual tricks but struggle dramatically on geometric/spatial distortions and visual anomalies, sometimes falling below 30 % accuracy. Crucially, the addition of Chain‑of‑Thought prompting—intended to improve reasoning—often degrades performance, producing what the authors term “brittle mirages”: the model’s intermediate reasoning collapses under the deceptive visual stimulus, leading to incorrect final answers despite seemingly coherent explanations.

These findings suggest that current MLLMs rely heavily on statistical correlations learned from large text‑image corpora rather than genuine visual perception. The CoT framework, while useful for many tasks, may amplify internal priors and thus be counterproductive when visual evidence directly contradicts those priors. The paper argues that overcoming this “perceptual bottleneck” is essential for progress toward artificial general intelligence.

The authors conclude by outlining future research directions: (1) developing mechanisms that can explicitly reconcile conflicting visual evidence and prior knowledge, (2) designing more robust reasoning strategies that remain stable under visual ambiguity, and (3) incorporating insights from human cognitive science to enable models to recognize and handle visual illusions. VIA‑Bench is released publicly, providing a rigorous testbed for the community to diagnose and improve the perceptual capabilities of multimodal models.

Comments & Academic Discussion

Loading comments...

Leave a Comment