Backdoor Sentinel: Detecting and Detoxifying Backdoors in Diffusion Models via Temporal Noise Consistency

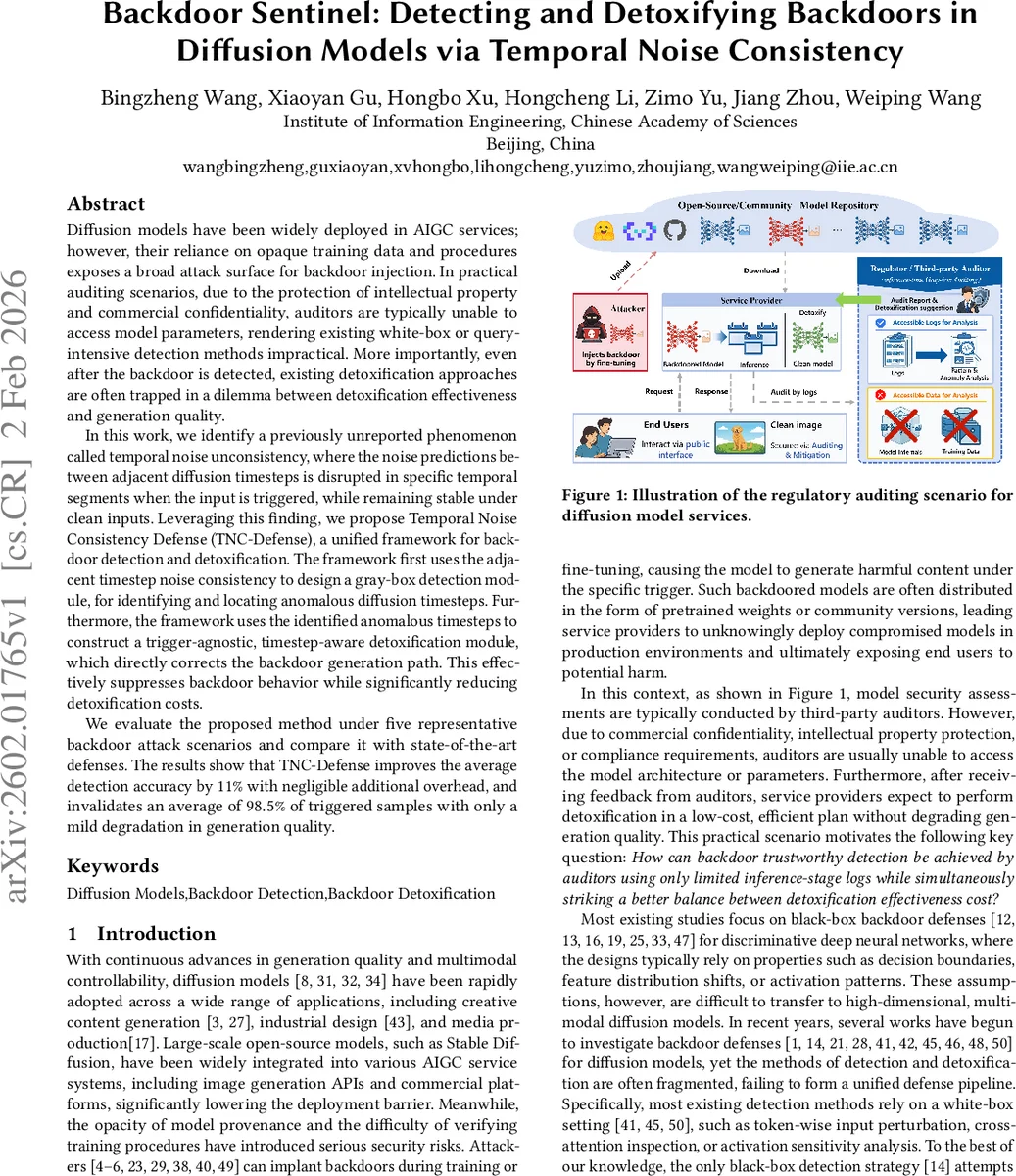

Diffusion models have been widely deployed in AIGC services; however, their reliance on opaque training data and procedures exposes a broad attack surface for backdoor injection. In practical auditing scenarios, intellectual property and commercial confidentiality typically prevent auditors from accessing model parameters, which makes existing white-box or query-intensive detection methods impractical. More importantly, even after a backdoor is detected, existing detoxification approaches are often caught in a trade-off between detoxification effectiveness and generation quality. In this work, we identify a previously unreported phenomenon called temporal noise unconsistency, where the noise predictions between adjacent diffusion timesteps are disrupted in specific temporal segments when the input is triggered, while remaining stable under clean inputs. Leveraging this finding, we propose Temporal Noise Consistency Defense (TNC-Defense), a unified framework for backdoor detection and detoxification. The framework first uses adjacent-timestep noise consistency to design a gray-box detection module that identifies and locates anomalous diffusion timesteps. The framework then uses the identified anomalous timesteps to construct a trigger-agnostic, timestep-aware detoxification module, which directly corrects the backdoor generation path. This effectively suppresses backdoor behavior while significantly reducing detoxification costs. We evaluate the proposed method under five representative backdoor attack scenarios and compare it with state-of-the-art defenses. The results show that TNC-Defense improves the average detection accuracy by $11\%$ with negligible additional overhead, and invalidates an average of $98.5\%$ of triggered samples with only a mild degradation in generation quality.

💡 Research Summary

The paper addresses the pressing security problem of backdoors hidden in diffusion models, which are increasingly deployed in AI‑generated content (AIGC) services. In realistic auditing scenarios, model weights, architecture, and training data are often proprietary, preventing auditors from using white‑box or query‑intensive detection methods. Existing detoxification techniques also suffer from a trade‑off: they either fail to remove the backdoor effectively or severely degrade generation quality because they rely on reconstructing or removing the trigger.

Key Observation – Temporal Noise Unconsistency

During the reverse diffusion (denoising) process, a diffusion model predicts the added noise ε̂θ(x_t, t, c) at each timestep t given the current latent x_t and the conditioning prompt c. The authors discover that, when a backdoor trigger is present, the mean‑squared error (MSE) between noise predictions of adjacent timesteps spikes dramatically in a specific temporal segment, whereas for clean inputs the MSE curve remains smooth and low. This phenomenon, termed Temporal Noise Unconsistency, reflects that the backdoor injects an abnormal generation path that distorts the natural temporal dynamics of the diffusion process.
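The adjacent-step signal described above can be illustrated with a minimal NumPy sketch. It assumes the noise predictions of one generation pass have already been recorded as an array ordered along the sampling trajectory; the function name `adjacent_step_mse` and the synthetic data are illustrative assumptions, not the authors' implementation.

```python
import numpy as np

def adjacent_step_mse(noise_preds):
    """MSE between noise predictions of consecutive sampling steps.

    noise_preds: array of shape (T, ...) holding one recorded prediction
    per step, in sampling order. Returns an array of length T - 1.
    """
    preds = np.asarray(noise_preds, dtype=np.float64)
    flat = preds.reshape(preds.shape[0], -1)   # one row per step
    diffs = flat[1:] - flat[:-1]               # step i+1 minus step i
    return np.mean(diffs ** 2, axis=1)

# Synthetic stand-in for recorded predictions (NOT real model output):
# a slowly drifting sequence plays the role of a clean trajectory.
rng = np.random.default_rng(0)
T, D = 50, 16
clean_preds = np.cumsum(0.01 * rng.standard_normal((T, D)), axis=0)
clean_curve = adjacent_step_mse(clean_preds)

# Hypothetical "triggered" trajectory: the same sequence with a
# disruption injected into steps 20..24.
triggered_preds = clean_preds.copy()
triggered_preds[20:25] += rng.standard_normal((5, D))
triggered_curve = adjacent_step_mse(triggered_preds)
```

In this toy setup the two curves coincide outside the disturbed segment, and the triggered curve rises sharply around it, which mirrors the qualitative behavior the summary attributes to triggered inputs.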

Framework Overview – TNC‑Defense

The proposed defense consists of two tightly coupled modules that operate under gray‑box constraints (only inference‑time logs are available).

TNC‑Detect (Detection)

- Build a statistical baseline: Using a small set of clean prompts, compute the per‑timestep mean and variance of the adjacent‑step noise MSE.

- For any new input, record the same MSE sequence during a single generation pass.

- Apply a variance‑adaptive consistency bound to flag timesteps where the observed MSE exceeds the baseline by a statistically significant margin.

- The output is a set of anomalous timesteps that likely contain the backdoor’s influence.

This method requires no access to model parameters, no repeated sampling, and incurs negligible overhead.
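The detection steps above can be sketched as follows. The summary does not give the exact form of the variance-adaptive bound, so this sketch assumes a simple per-timestep rule, mean plus `k` standard deviations; the function names, the value of `k`, and the synthetic curves are all assumptions made for illustration.

```python
import numpy as np

def fit_baseline(clean_curves):
    """Per-timestep mean and standard deviation of adjacent-step MSE.

    clean_curves: array of shape (N, T - 1), one MSE curve per clean prompt.
    """
    curves = np.asarray(clean_curves, dtype=np.float64)
    return curves.mean(axis=0), curves.std(axis=0)

def flag_anomalous_timesteps(curve, mean, std, k=3.0, eps=1e-8):
    """Indices where the observed MSE exceeds mean + k * std.

    The bound widens where the clean curves vary more, which is one
    plausible reading of "variance-adaptive" (an assumption).
    """
    curve = np.asarray(curve, dtype=np.float64)
    bound = mean + k * (std + eps)
    return np.flatnonzero(curve > bound)

# Synthetic MSE curves standing in for logged values (NOT real data).
rng = np.random.default_rng(0)
clean_curves = 1.0 + 0.05 * rng.standard_normal((32, 49))
mean, std = fit_baseline(clean_curves)

# Hypothetical suspect input: elevated MSE in the segment 20..24.
suspect = 1.0 + 0.05 * rng.standard_normal(49)
suspect[20:25] += 1.0
flagged = flag_anomalous_timesteps(suspect, mean, std, k=4.0)
```

A larger `k` trades missed detections for fewer false alarms. With few clean prompts the estimated standard deviation is itself noisy, so occasional false positives outside the disturbed segment are possible.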

TNC‑Detox (Detoxification)

- Trigger‑agnostic data synthesis: Using the identified anomalous timesteps, generate “poisoned” training pairs by augmenting the original prompt with content‑preserving variations (e.g., synonym substitution, paraphrasing) that still contain the hidden trigger effect.

- Timestep‑aware fine‑tuning: Restrict parameter updates to the layers responsible for the anomalous timesteps. Introduce a noise‑direction decoupling loss that forces the model’s predicted noise at those steps to align with the clean baseline, effectively breaking the malicious trajectory while leaving the rest of the diffusion process untouched.

- Because only a narrow temporal window and a small subset of parameters are altered, the approach is computationally cheap and preserves overall image quality.
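The idea of restricting the correction to anomalous timesteps can be illustrated with a small NumPy sketch of a masked loss. The summary does not define the noise-direction decoupling loss, so the cosine-based alignment below is a stand-in chosen for illustration only; the function name, arguments, and toy values are hypothetical, and a real fine-tuning loop would need an autodiff framework.

```python
import numpy as np

def timestep_masked_direction_loss(pred_noise, ref_noise, timesteps,
                                   anomalous, eps=1e-8):
    """Mean (1 - cosine similarity) over samples at anomalous timesteps.

    pred_noise: (B, ...) noise predicted by the model being repaired.
    ref_noise:  (B, ...) clean reference noise for the same samples.
    timesteps:  length-B sequence with the timestep of each sample.
    anomalous:  iterable of timesteps flagged by detection.
    Samples at other timesteps contribute nothing to the loss.
    """
    n = len(timesteps)
    pred = np.asarray(pred_noise, dtype=np.float64).reshape(n, -1)
    ref = np.asarray(ref_noise, dtype=np.float64).reshape(n, -1)
    mask = np.isin(np.asarray(timesteps), list(anomalous))
    if not mask.any():
        return 0.0
    norms = np.linalg.norm(pred, axis=1) * np.linalg.norm(ref, axis=1)
    cos = np.sum(pred * ref, axis=1) / (norms + eps)
    return float(np.mean(1.0 - cos[mask]))

# Toy batch: the first sample lies outside the flagged window and is
# ignored; the other two point opposite to the reference direction.
ref = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
pred = np.array([[1.0, 0.0], [0.0, -1.0], [-1.0, -1.0]])
loss = timestep_masked_direction_loss(
    pred, ref, timesteps=[10, 21, 22], anomalous={20, 21, 22})
```

The mask is what makes the objective timestep-aware: predictions outside the flagged window are left unconstrained, consistent with the stated goal of leaving the rest of the diffusion process untouched.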

Experimental Validation

The authors evaluate TNC‑Defense on five representative backdoor attacks: BadDiffusion, TrojDiff, BadT2I, VillanDiffusion, and EvilEdit. They compare against state‑of‑the‑art detection methods (e.g., DisDet, UFID) and detoxification techniques (e.g., Di‑Cleanse, T2IShield). Results show:

- Detection: Average accuracy improves by 11 % over the best prior gray‑box detector.

- Detoxification: 98.5 % of triggered samples are neutralized, while standard quality metrics (FID, IS) degrade only marginally (often <2 % change).

- Efficiency: The additional runtime is negligible (<0.5 % overhead) because only one forward pass is needed for detection and fine‑tuning touches a tiny fraction of the network.

Significance and Future Directions

By turning a previously unnoticed temporal artifact into a security signal, the paper provides a practical, unified solution for both detecting and repairing backdoors without needing model internals or extensive probing. The trigger‑agnostic nature sidesteps the difficulty of reconstructing diverse and covert triggers, and the timestep‑aware fine‑tuning minimizes collateral damage to generation quality.

Potential extensions include: applying the method to other modalities such as video diffusion pipelines, exploring robustness against multiple simultaneous backdoors, and adapting the statistical baseline to dynamic sampling schedules or alternative noise schedulers. Overall, TNC‑Defense represents a substantial step toward trustworthy deployment of diffusion‑based generative AI in real‑world services.
