Mutual-Guided Expert Collaboration for Cross-Subject EEG Classification

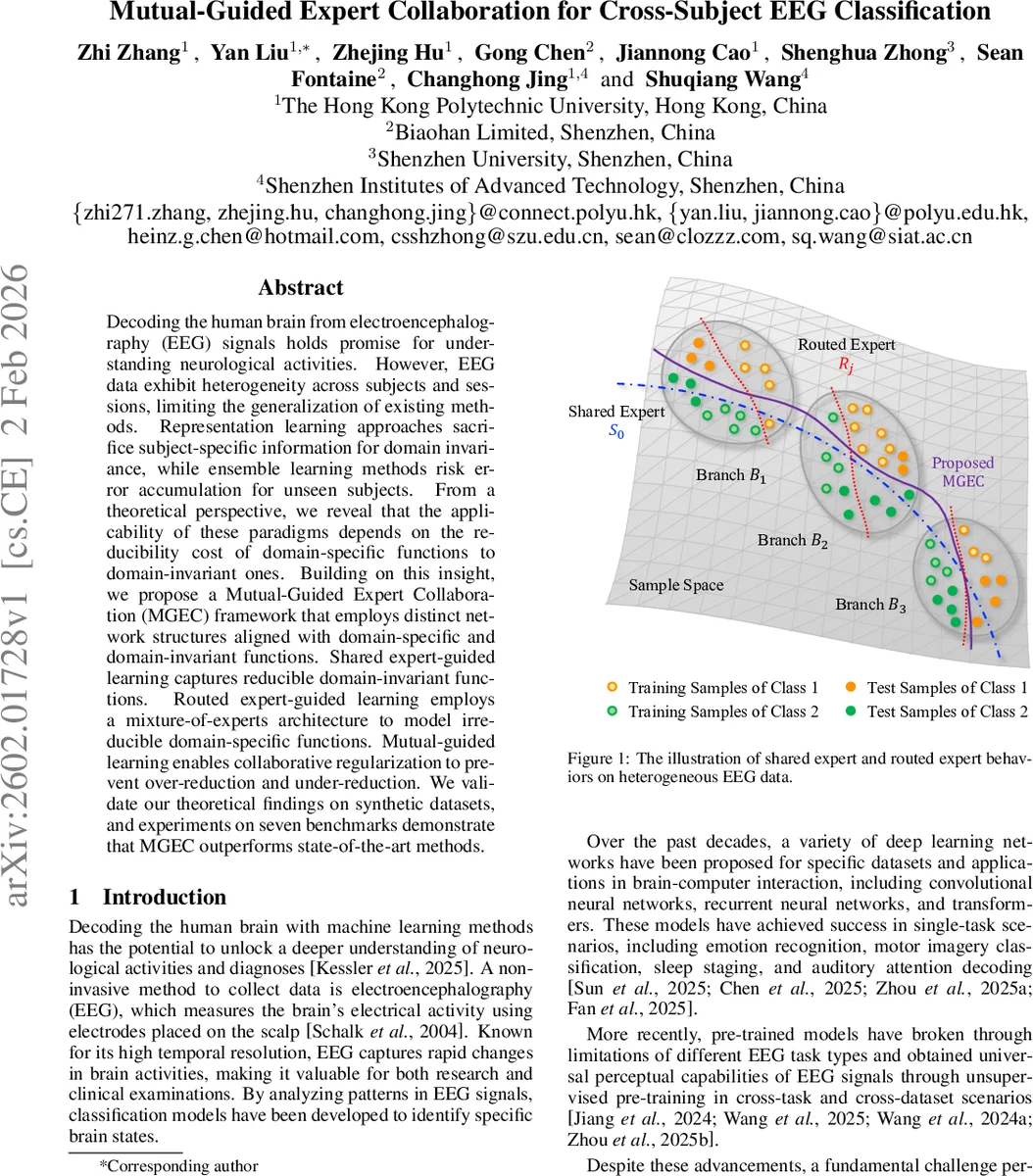

Decoding the human brain from electroencephalography (EEG) signals holds promise for understanding neurological activities. However, EEG data exhibit heterogeneity across subjects and sessions, limiting the generalization of existing methods. Representation learning approaches sacrifice subject-specific information for domain invariance, while ensemble learning methods risk error accumulation for unseen subjects. From a theoretical perspective, we reveal that the applicability of these paradigms depends on the reducibility cost of domain-specific functions to domain-invariant ones. Building on this insight, we propose a Mutual-Guided Expert Collaboration (MGEC) framework that employs distinct network structures aligned with domain-specific and domain-invariant functions. Shared expert-guided learning captures reducible domain-invariant functions. Routed expert-guided learning employs a mixture-of-experts architecture to model irreducible domain-specific functions. Mutual-guided learning enables collaborative regularization to prevent over-reduction and under-reduction. We validate our theoretical findings on synthetic datasets, and experiments on seven benchmarks demonstrate that MGEC outperforms state-of-the-art methods.

💡 Research Summary

The paper tackles the long‑standing problem of cross‑subject generalization in electroencephalography (EEG) classification. Because EEG recordings vary dramatically across individuals due to anatomical and physiological differences, models trained on a set of subjects often fail when deployed on unseen subjects. Existing solutions fall into two camps. The first seeks a domain‑invariant representation and trains a single classifier on pooled data, sacrificing subject‑specific cues. The second employs multiple subject‑specific experts (ensemble or mixture‑of‑experts), but suffers from coordination difficulties and error accumulation.

The authors provide a theoretical analysis based on algorithmic alignment. They decompose the target function into a domain‑invariant component (g_c) (shared across subjects) and a domain‑specific component (g_s) (present only in training subjects). Under three mild assumptions about distribution shift, they prove (Theorem 1) that a network aligned with (g_c) generalizes well, while alignment with (g_s) leads to large test error. They further model the target as a branching function (g(x)=\sum_{j=1}^M I_j(h_0(x)),h_j(x)), where (h_0) decides the branch (subject group) and each (h_j) handles branch‑specific processing.

The key insight is that the “reducibility cost” of turning branch‑specific functions into a single invariant function determines which paradigm is preferable. They introduce two ratios, (\alpha) and (\beta), that bound the sample‑complexity of a shared expert (S) versus a set of routed experts (R). If (\alpha) is large (high reducibility cost), routed experts are theoretically superior; if (\beta) is small (low cost), a shared expert suffices (Theorem 2).

Building on this analysis, the Mutual‑Guided Expert Collaboration (MGEC) framework integrates both paradigms and lets them cooperate. MGEC consists of three learning stages:

-

Shared Expert‑Guided Learning – All subjects are pooled and a single backbone (S_0) is trained with standard cross‑entropy loss. To encourage invariance, the authors generate temporally adjacent segments, apply stochastic spatial masking, and minimize a Joint Embedding Loss that maximizes cosine similarity between the current segment and its masked neighbor.

-

Routed Expert‑Guided Learning – A Mixture‑of‑Experts (MoE) architecture is employed. A router (R_0) projects the backbone features into a gate space and computes routing weights via cosine similarity with learned prototype embeddings (a codebook of subject‑specific neural patterns). Only the top‑(K) experts receive non‑zero weights. Additional losses enforce low entropy of routing probabilities per subject (promoting consistent expert assignment) and a balance loss that prevents a few experts from dominating.

-

Mutual‑Guided Learning – For each training sample, the cross‑entropy losses from the shared expert ((\ell_S)) and the routed experts ((\ell_R)) are compared. Samples that are easy for one model but hard for the other receive higher weights, causing the weaker model to learn from the stronger one. Theoretical Theorem 3 guarantees that this collaborative re‑weighting reduces the total alignment error over training epochs.

During inference, predictions from the shared expert and the routed experts are fused (e.g., weighted averaging) to produce the final label.

The authors evaluate MGEC on seven publicly available EEG benchmarks covering auditory attention decoding (AAD), motor imagery (MI), and sleep stage detection (SSD). Datasets vary in subject count (9–79), electrode count (2–64), and task complexity. MGEC consistently outperforms state‑of‑the‑art domain‑generalization methods (e.g., DANN, CORAL, MMD) and recent MoE‑based ensembles, achieving average accuracy gains of 3–5 percentage points. Ablation studies confirm that each component—joint embedding, routing entropy, balance loss, and mutual guidance—contributes positively, and that the optimal routing‑top‑(K) aligns with the theoretical reducibility analysis.

In summary, the paper proposes a principled, theoretically grounded framework that explicitly distinguishes between invariant and subject‑specific components of EEG signals, assigns them to appropriately structured networks, and lets the two networks guide each other during training. This dual‑expert collaboration mitigates both over‑reduction (loss of subject‑specific information) and under‑reduction (failure to capture shared patterns), offering a robust solution for cross‑subject EEG classification and a blueprint for other heterogeneous biomedical signal domains.

Comments & Academic Discussion

Loading comments...

Leave a Comment