Joint Optimization of ASV and CM tasks: BTUEF Team's Submission for WildSpoof Challenge

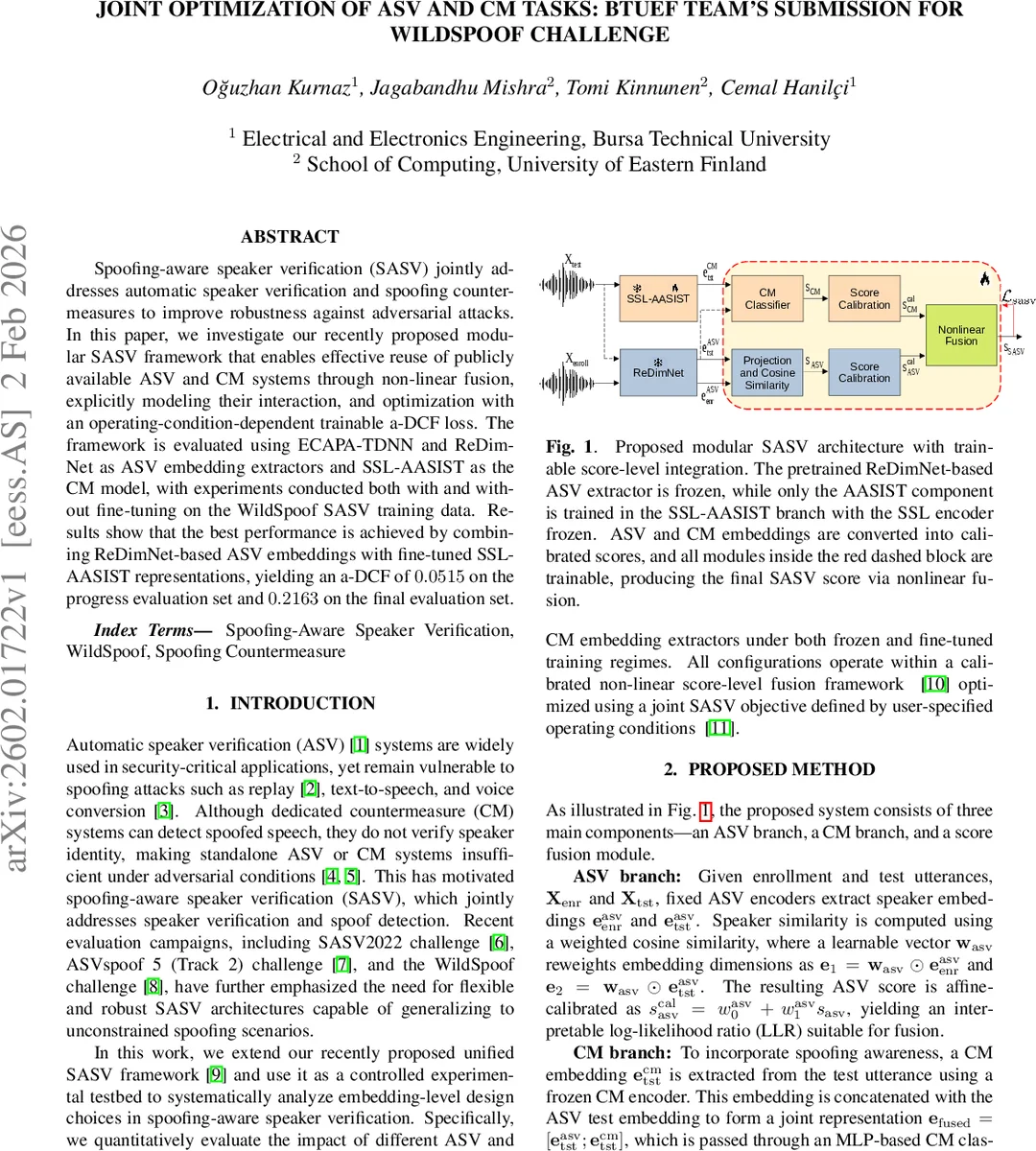

Spoofing-aware speaker verification (SASV) jointly addresses automatic speaker verification and spoofing countermeasures to improve robustness against adversarial attacks. In this paper, we investigate our recently proposed modular SASV framework that enables effective reuse of publicly available ASV and CM systems through non-linear fusion, explicitly modeling their interaction, and optimization with an operating-condition-dependent trainable a-DCF loss. The framework is evaluated using ECAPA-TDNN and ReDimNet as ASV embedding extractors and SSL-AASIST as the CM model, with experiments conducted both with and without fine-tuning on the WildSpoof SASV training data. Results show that the best performance is achieved by combining ReDimNet-based ASV embeddings with fine-tuned SSL-AASIST representations, yielding an a-DCF of 0.0515 on the progress evaluation set and 0.2163 on the final evaluation set.

💡 Research Summary

This paper presents the BTUEF team’s submission to the WildSpoof challenge, focusing on a modular framework for Spoofing-Aware Speaker Verification (SASV). The core objective is to enhance the robustness of Automatic Speaker Verification (ASV) systems against spoofing attacks (e.g., replay, synthetic speech) by jointly optimizing speaker verification and spoofing countermeasure (CM) tasks within a unified, trainable architecture.

The proposed method is built upon a recently introduced modular SASV framework. It ingeniously allows for the reuse of publicly available, pre-trained ASV and CM systems by integrating them through a non-linear, score-level fusion mechanism. The system comprises three main branches: an ASV branch, a CM branch, and a fusion module. In the ASV branch, speaker embeddings are extracted from enrollment and test utterances using a fixed encoder (e.g., ECAPA-TDNN or ReDimNet). A learnable weight vector reweights the embedding dimensions before computing a weighted cosine similarity, which is then affine-calibrated to produce a speaker log-likelihood ratio (LLR) score. The CM branch extracts a spoofing embedding from the test utterance using a fixed CM encoder (SSL-AASIST). This embedding is concatenated with the ASV test embedding and processed by an MLP classifier, whose output is similarly affine-calibrated into a CM LLR score.

The key innovation lies in the fusion stage. Instead of simple summation, the two calibrated LLRs are combined using a non-linear formula: s_sasv = -log

Comments & Academic Discussion

Loading comments...

Leave a Comment