Beyond Dense States: Elevating Sparse Transcoders to Active Operators for Latent Reasoning

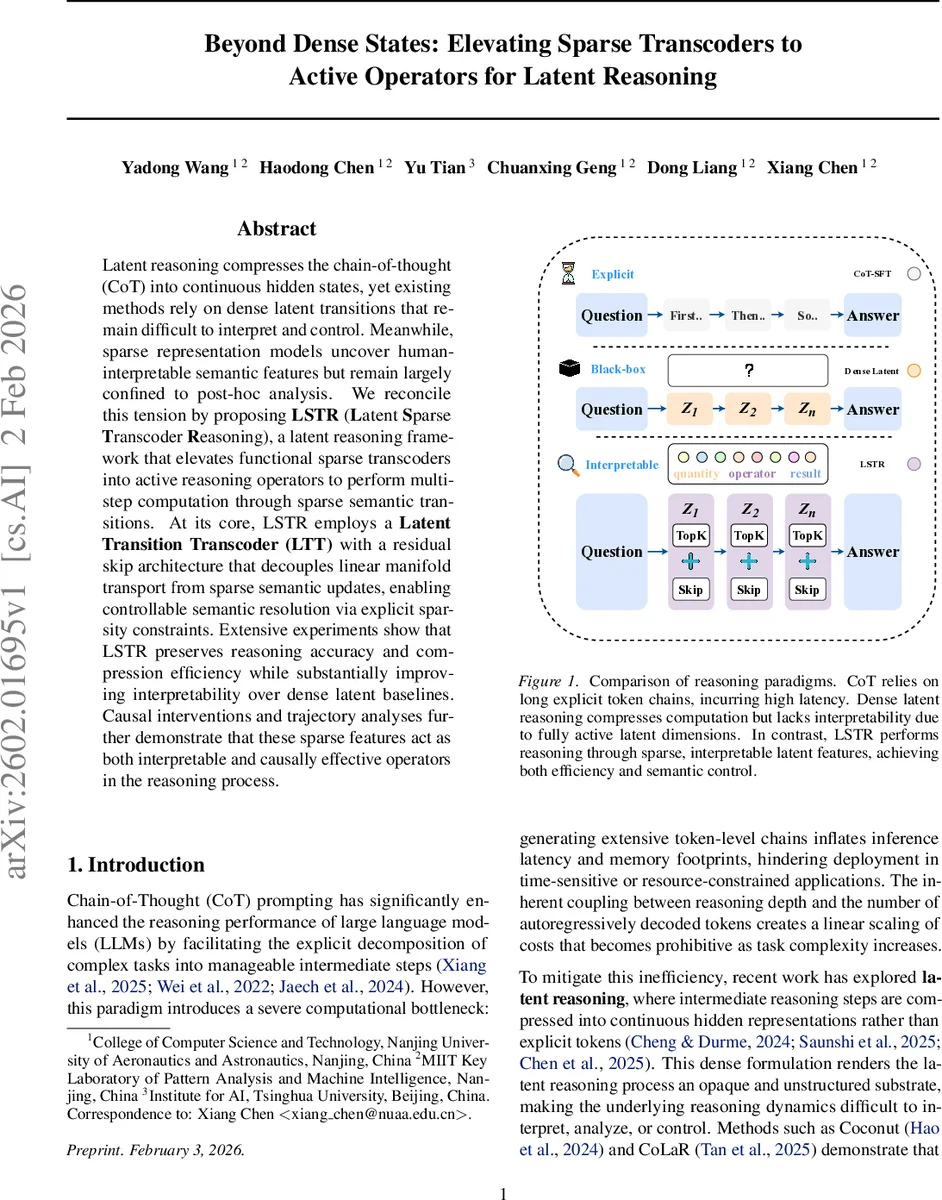

Latent reasoning compresses the chain-of-thought (CoT) into continuous hidden states, yet existing methods rely on dense latent transitions that remain difficult to interpret and control. Meanwhile, sparse representation models uncover human-interpretable semantic features but remain largely confined to post-hoc analysis. We reconcile this tension by proposing LSTR (Latent Sparse Transcoder Reasoning), a latent reasoning framework that elevates functional sparse transcoders into active reasoning operators to perform multi-step computation through sparse semantic transitions. At its core, LSTR employs a Latent Transition Transcoder (LTT) with a residual skip architecture that decouples linear manifold transport from sparse semantic updates, enabling controllable semantic resolution via explicit sparsity constraints. Extensive experiments show that LSTR preserves reasoning accuracy and compression efficiency while substantially improving interpretability over dense latent baselines. Causal interventions and trajectory analyses further demonstrate that these sparse features act as both interpretable and causally effective operators in the reasoning process.

💡 Research Summary

The paper addresses a fundamental tension in modern large language model (LLM) reasoning: chain‑of‑thought (CoT) prompting yields strong performance but incurs high latency because it generates long token sequences, while recent latent‑reasoning approaches compress these sequences into a few dense hidden states, dramatically reducing inference cost but making the reasoning process opaque and hard to control. At the same time, mechanistic interpretability research has shown that sparse autoencoders and transcoder models can uncover human‑interpretable semantic features in frozen LLMs, yet these features have only been used for post‑hoc analysis and never participate in the model’s actual computation.

To bridge this gap, the authors propose LSTR (Latent Sparse Transcoder Reasoning), a framework that elevates sparse transcoders from diagnostic tools to active operators that drive multi‑step latent reasoning. The core component is the Latent Transition Transcoder (LTT), which receives the backbone hidden state hₜ at each latent step and produces the next latent state ẑₜ₊₁ through a bilinear decomposition: a linear “skip” pathway (W_skip hₜ) that preserves smooth manifold drift, and a sparse “innovation” pathway that projects hₜ into a high‑dimensional dictionary, applies a ReLU, then a hard Top‑k selection (keeping only the k largest activations). The selected sparse vector sₜ is decoded by W_dec and added to the skip output, yielding ẑₜ₊₁ = W_skip hₜ + W_dec sₜ + b_dec.

Sparsity is not a regularizer but an architectural principle. By fixing a sparsity budget k, LSTR offers “semantic resolution control”: the number of active semantic features per step can be tuned at inference time, trading off granularity for efficiency without retraining. The linear skip path is zero‑initialized and bias‑free, ensuring it only captures predictable background drift, while the sparse path handles the reasoning‑specific residual. This separation improves training stability and makes each latent transition interpretable.

Training uses a composite loss: (1) Fraction of Variance Unexplained (FVU) normalizes the squared error between predicted latent states and compressed targets z* by the target variance, providing scale‑invariant supervision across different compression ratios; (2) a skip‑alignment loss forces the linear path to approximate the dominant drift, preventing semantic information from leaking into it; (3) a “ghost‑gradient” term encourages utilization of the entire sparse dictionary by propagating reconstruction residuals back to inactive encoder units, mitigating dead‑unit collapse. Gradients through the non‑differentiable Top‑k are handled with a Straight‑Through Estimator.

The authors evaluate LSTR on several mathematical reasoning benchmarks: GSM8K‑Aug (≈385 k training samples), GSM‑Hard, SVAMP, MultiArith, and the more challenging MA‑TH dataset. They compare two LSTR configurations—LSTR‑5 (compression ratio r = 5, k = 5) and LSTR‑2 (r = 2, k = 2)—against dense latent baselines such as CoLaR‑5, CoLaR‑2, Coconut, and standard CoT. Results show that LSTR matches or exceeds dense baselines in accuracy while reducing the average number of latent steps (#L). Notably, LSTR‑2 achieves comparable performance to CoLaR‑2 with fewer active features, demonstrating that the sparsity budget can be lowered without sacrificing correctness.

Interpretability analyses reveal that the Top‑k activations correspond to recognizable reasoning primitives (e.g., addition, subtraction, logical comparison). Causal intervention experiments—forcing specific sparse units on or off—lead to predictable changes in model output, confirming that these sparse features act as causal operators rather than passive descriptors. Trajectory visualizations further illustrate that the skip pathway provides a smooth backbone, whereas the sparse pathway injects sharp semantic jumps, confirming the intended functional separation.

In summary, LSTR introduces a novel paradigm where sparse, human‑readable features are the actual computational units of latent reasoning. It preserves the efficiency gains of dense latent compression while restoring interpretability and controllability through explicit sparsity constraints. This work paves the way for deploying large language models in latency‑sensitive or safety‑critical settings where both performance and transparency are essential.

Comments & Academic Discussion

Loading comments...

Leave a Comment